Bonny Mahajan

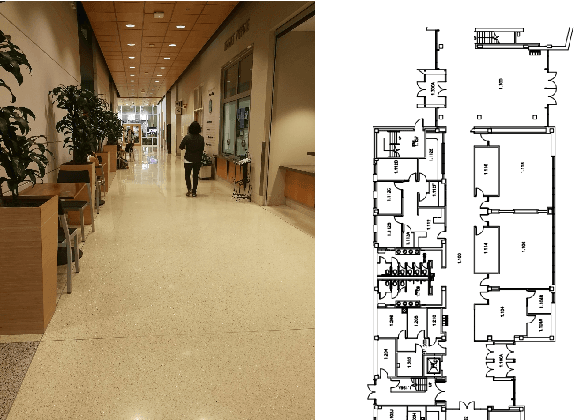

Unclogging Our Arteries: Using Human-Inspired Signals to Disambiguate Navigational Intentions

Sep 14, 2019

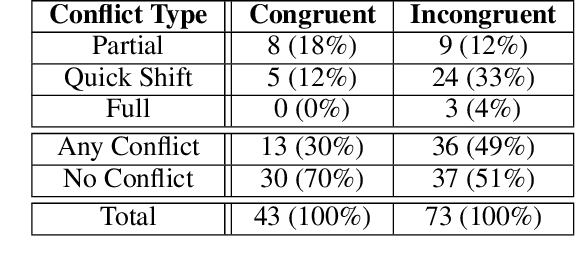

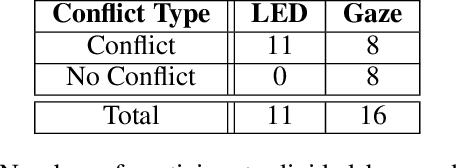

Abstract: People are proficient at communicating their intentions in order to avoid conflicts when navigating in narrow, crowded environments. In many situations mobile robots lack both the ability to interpret human intentions and the ability to clearly communicate their own intentions to people sharing their space. This work addresses the second of these points, leveraging insights about how people implicitly communicate with each other through observations of behaviors such as gaze to provide mobile robots with better social navigation skills. In a preliminary human study, the importance of gaze as a signal used by people to interpret each other's intentions during navigation of a shared space is observed. This study is followed by the development of a virtual agent head which is mounted to the top of the chassis of the BWIBot mobile robot platform. Contrasting the performance of the virtual agent head against an LED turn signal demonstrates that the naturalistic, implicit gaze cue is more easily interpreted than the LED turn signal.
