Bharanidhar Duraisamy

Multi-Modal Sensor Fusion using Hybrid Attention for Autonomous Driving

Apr 06, 2026

Abstract:Accurate 3D object detection for autonomous driving requires complementary sensors. Cameras provide dense semantics but unreliable depth, while millimeter-wave radar offers precise range and velocity measurements with sparse geometry. We propose MMF-BEV, a radar-camera BEV fusion framework that leverages deformable attention for cross-modal feature alignment on the View-of-Delft (VoD) 4D radar dataset [1]. MMF-BEV builds a BEVDepth [2] camera branch and a RadarBEVNet [3] radar branch, each enhanced with Deformable Self-Attention, and fuses them via a Deformable Cross-Attention module. We evaluate three configurations: camera-only, radar-only, and hybrid fusion. A sensor contribution analysis quantifies per-distance modality weighting, providing interpretable evidence of sensor complementarity. A two-stage training strategy, which pre-trains the camera branch with depth supervision and then jointly trains the radar and fusion modules, stabilizes learning. Experiments on VoD show that MMF-BEV consistently outperforms unimodal baselines and achieves competitive results against prior fusion methods across all object classes in both the full annotated area and the near-range Region of Interest.

LEO: Graph Attention Network based Hybrid Multi Sensor Extended Object Fusion and Tracking for Autonomous Driving Applications

Apr 02, 2026

Abstract:Accurate shape and trajectory estimation of dynamic objects is essential for reliable automated driving. Classical Bayesian extended-object models offer theoretical robustness and efficiency but depend on the completeness of a priori and update-likelihood functions, while deep learning methods bring adaptability at the cost of dense annotations and high compute. We bridge these strengths with LEO (Learned Extension of Objects), a spatio-temporal Graph Attention Network that fuses multi-modal production-grade sensor tracks to learn adaptive fusion weights, ensure temporal consistency, and represent multi-scale shapes. Using a task-specific parallelogram ground-truth formulation, LEO models complex geometries (e.g., articulated trucks and trailers) and generalizes across sensor types, configurations, object classes, and regions, remaining robust for challenging and long-range targets. Evaluations on the Mercedes-Benz DRIVE PILOT SAE L3 dataset demonstrate real-time computational efficiency suitable for production systems; additional validation on public datasets such as View-of-Delft (VoD) further confirms cross-dataset generalization.

Adaptive Learned State Estimation based on KalmanNet

Apr 02, 2026

Abstract:Hybrid state estimators that combine model-based Kalman filtering with learned components have shown promise on simulated data, yet their performance on real-world automotive data remains insufficient. In this work we present Adaptive Multi-modal KalmanNet (AM-KNet), an advancement of KalmanNet tailored to the multi-sensor autonomous driving setting. AM-KNet introduces sensor-specific measurement modules that enable the network to learn the distinct noise characteristics of radar, lidar, and camera independently. A hypernetwork with context modulation conditions the filter on target type, motion state, and relative pose, allowing adaptation to diverse traffic scenarios. We further incorporate a covariance estimation branch based on the Joseph form and supervise it through negative log-likelihood losses on both the estimation error and the innovation. A comprehensive, component-wise loss function encodes physical priors on sensor reliability, target class, motion state, and measurement flow consistency. AM-KNet is trained and evaluated on the nuScenes and View-of-Delft datasets. The results demonstrate improved estimation accuracy and tracking stability compared to the base KalmanNet, narrowing the performance gap with classical Bayesian filters on real-world automotive data.
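
For readers unfamiliar with the Joseph form named in this abstract: it is the covariance update P+ = (I - K H) P (I - K H)^T + K R K^T, which keeps the covariance symmetric and positive semidefinite for any gain K, not only the optimal Kalman gain. That property is plausibly why it suits a learned gain. The following is a minimal pure-Python sketch under that textbook definition; the helper names and the numeric values are illustrative assumptions and are not taken from AM-KNet.

```python
# Joseph-form covariance update (textbook form, not AM-KNet's implementation).
# Matrices are plain lists of rows; all helper names are hypothetical.

def matmul(a, b):
    """Matrix product of a (n x m) and b (m x p)."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def transpose(a):
    return [list(row) for row in zip(*a)]

def add(a, b):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def sub(a, b):
    return [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def identity(n):
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]

def joseph_update(P, K, H, R):
    """Return (I - K H) P (I - K H)^T + K R K^T."""
    A = sub(identity(len(P)), matmul(K, H))
    left = matmul(matmul(A, P), transpose(A))
    right = matmul(matmul(K, R), transpose(K))
    return add(left, right)

# Illustrative values: state = [position, velocity], position is measured.
P = [[4.0, 0.0], [0.0, 1.0]]   # prior covariance
H = [[1.0, 0.0]]               # measurement matrix
R = [[1.0]]                    # measurement noise covariance
K_suboptimal = [[0.5], [0.0]]  # e.g. a learned gain (optimal would be 0.8)

P_post = joseph_update(P, K_suboptimal, H, R)
```

With the suboptimal gain above, the posterior position variance is larger than the optimal-gain value of 0.8, but the result is still a valid (symmetric, non-negative) covariance.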

Performance Evaluation of Deep Learning-Based State Estimation: A Comparative Study of KalmanNet

Nov 25, 2024

Abstract:Kalman Filters (KF) are fundamental to real-time state estimation applications, including radar-based tracking systems used in modern driver assistance and safety technologies. In a linear dynamical system with Gaussian noise distributions, the KF is the optimal estimator. However, real-world systems often deviate from these assumptions. This deviation, combined with the success of deep learning across many disciplines, has prompted the exploration of data-driven approaches that leverage deep learning for filtering applications. These learned state estimators are often reported to outperform traditional model-based systems. In this work, one prevalent model, KalmanNet, was selected and evaluated on automotive radar data to assess its performance under real-world conditions and compare it to an interacting multiple model (IMM) filter. The evaluation is based on raw and normalized errors as well as the state uncertainty. The results demonstrate that KalmanNet is outperformed by the IMM filter and indicate that while data-driven methods such as KalmanNet show promise, their current lack of reliability and robustness makes them unsuited for safety-critical applications.
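
The abstract does not say which normalized error it uses. One common choice for judging a filter's uncertainty is the normalized estimation error squared (NEES), e^T P^{-1} e, whose average should be close to the state dimension for a consistent filter. The sketch below assumes that metric and a 2D state; the function names are hypothetical and nothing here is taken from the paper's evaluation code.

```python
# NEES for a 2D state, assuming NEES is the normalized error of interest.

def nees_2d(error, cov):
    """Return e^T P^{-1} e for a 2-vector e and a 2x2 covariance P."""
    (a, b), (c, d) = cov
    det = a * d - b * c
    if det <= 0.0:
        raise ValueError("covariance must be positive definite")
    ex, ey = error
    # P^{-1} = (1 / det) * [[d, -b], [-c, a]]
    return (ex * (d * ex - b * ey) + ey * (-c * ex + a * ey)) / det

def mean_nees(errors, covs):
    """Average NEES over a sequence of (error, covariance) pairs."""
    values = [nees_2d(e, p) for e, p in zip(errors, covs)]
    return sum(values) / len(values)

# Illustrative values only.
errors = [(1.0, 2.0), (0.0, 2.0)]
covs = [[[1.0, 0.0], [0.0, 4.0]], [[2.0, 0.0], [0.0, 2.0]]]
avg = mean_nees(errors, covs)
```

An average well above the state dimension suggests the filter is overconfident (reported covariance too small), and an average well below it suggests the filter is underconfident.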

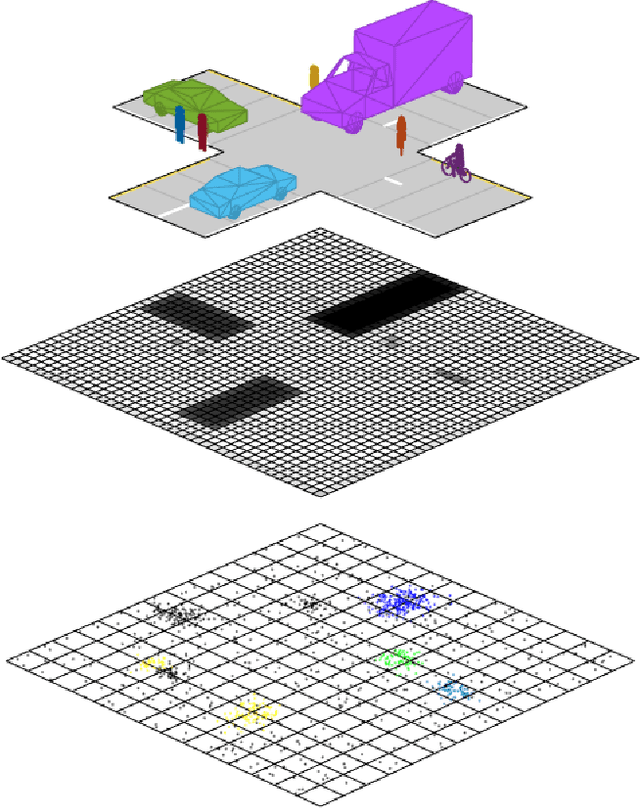

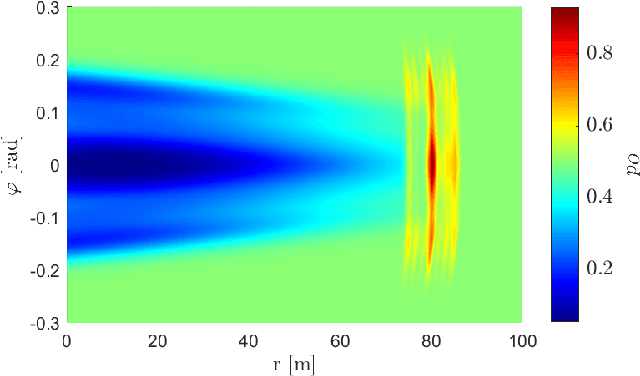

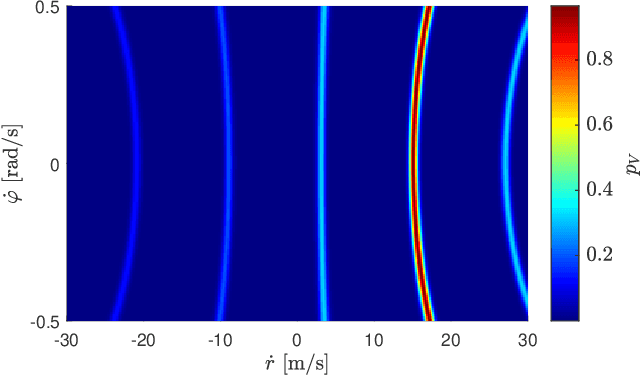

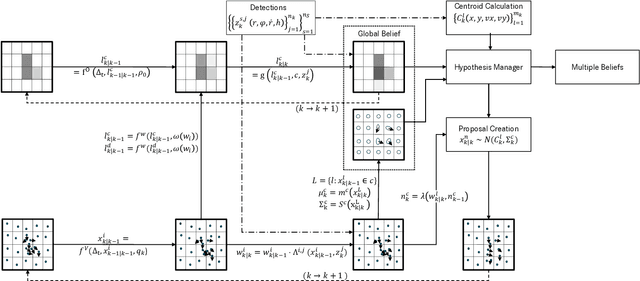

UNIFY: Multi-Belief Bayesian Grid Framework based on Automotive Radar

Apr 24, 2021

Abstract:Grid maps are widely established for the representation of static objects in robotics and automotive applications. However, incorporating velocity information is still an open research topic because of the increased complexity of dynamic grids, concerning both velocity measurement models for radar sensors and the representation of velocity in a grid framework. In this paper, both issues are addressed: sensor models and an efficient grid framework, which are required to ensure efficient and robust environment perception with radar. To this end, we introduce new inverse radar sensor models that cover radar sensor artifacts such as measurement ambiguities in order to integrate automotive radar sensors for improved velocity estimation. Furthermore, we introduce UNIFY, a multiple belief Bayesian grid map framework for static occupancy and velocity estimation with independent layers. The proposed UNIFY framework utilizes a grid-cell-based layer to provide occupancy information and a particle-based velocity layer for motion state estimation in an autonomous vehicle's environment. The UNIFY layers can be executed individually or simultaneously for optimal adaptation to varying environments in autonomous driving applications. UNIFY was tested and evaluated in terms of plausibility and efficiency on a large real-world radar dataset in challenging traffic scenarios covering different densities in urban and rural scenery.
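
As background for the occupancy layer described above, a generic Bayesian occupancy grid updates each cell in log-odds form: the cell's log-odds are incremented by the log-odds of the inverse sensor model output, minus the log-odds of the prior. The sketch below shows only this standard single-cell update. It does not reproduce UNIFY's inverse radar sensor models or its particle-based velocity layer, and the function names and probabilities are illustrative assumptions.

```python
import math

# Standard log-odds occupancy update for one grid cell (generic technique,
# not UNIFY's implementation).

def logit(p):
    """Log-odds of a probability strictly between 0 and 1."""
    return math.log(p / (1.0 - p))

def update_cell(log_odds, p_occ_given_z, prior=0.5):
    """Fuse one inverse-sensor-model output p(occupied | z) into the cell."""
    return log_odds + logit(p_occ_given_z) - logit(prior)

def occupancy_probability(log_odds):
    """Convert log-odds back to a probability."""
    return 1.0 / (1.0 + math.exp(-log_odds))

# A cell starting at the prior receives two detections that each suggest
# 70% occupancy (hypothetical inverse sensor model output).
cell = logit(0.5)
for p in (0.7, 0.7):
    cell = update_cell(cell, p)
p_after_two_hits = occupancy_probability(cell)
```

Working in log-odds turns the Bayesian update into an addition, which is why independent measurements can be accumulated cell by cell at low cost.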

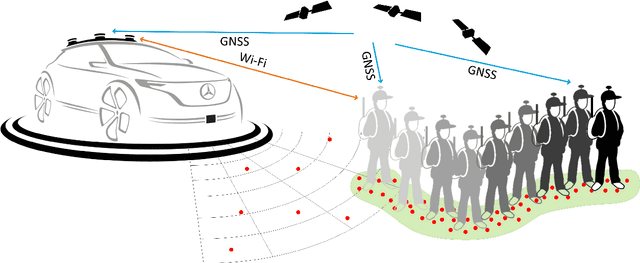

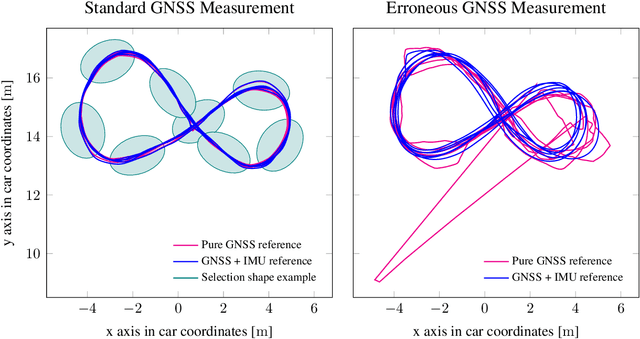

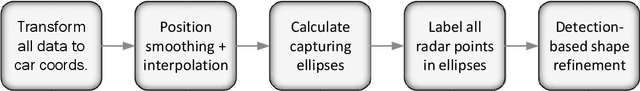

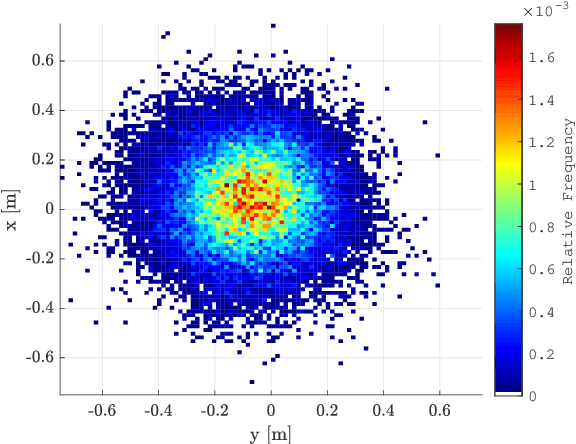

Automated Ground Truth Estimation For Automotive Radar Tracking Applications With Portable GNSS And IMU Devices

Jun 03, 2019

Abstract:Baseline generation for tracking applications is a difficult task when working with real-world radar data. Data sparsity usually allows only an indirect way of estimating the original tracks, as most objects' centers are not represented in the data. This article proposes an automated way of acquiring reference trajectories by using a highly accurate hand-held global navigation satellite system (GNSS). An embedded inertial measurement unit (IMU) is used for estimating orientation and motion behavior. This article contains two major contributions. First, a method for associating radar data to vulnerable road user (VRU) tracks is described. We evaluate how accurately the system performs under different GNSS reception conditions and how carrying a reference system alters radar measurements. Second, the system is used to track pedestrians and cyclists over many measurement cycles in order to generate object-centered occupancy grid maps. The reference system allows real-world radar data distributions of VRUs to be generated much more precisely than with conventional methods. This is an important step towards radar-based VRU tracking.
