Asad Khan

Robotic Hierarchical Graph Neurons. A novel implementation of HGN for swarm robotic behaviour control

Oct 28, 2019

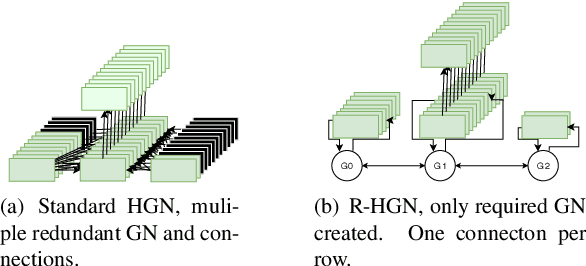

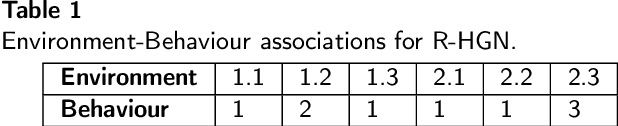

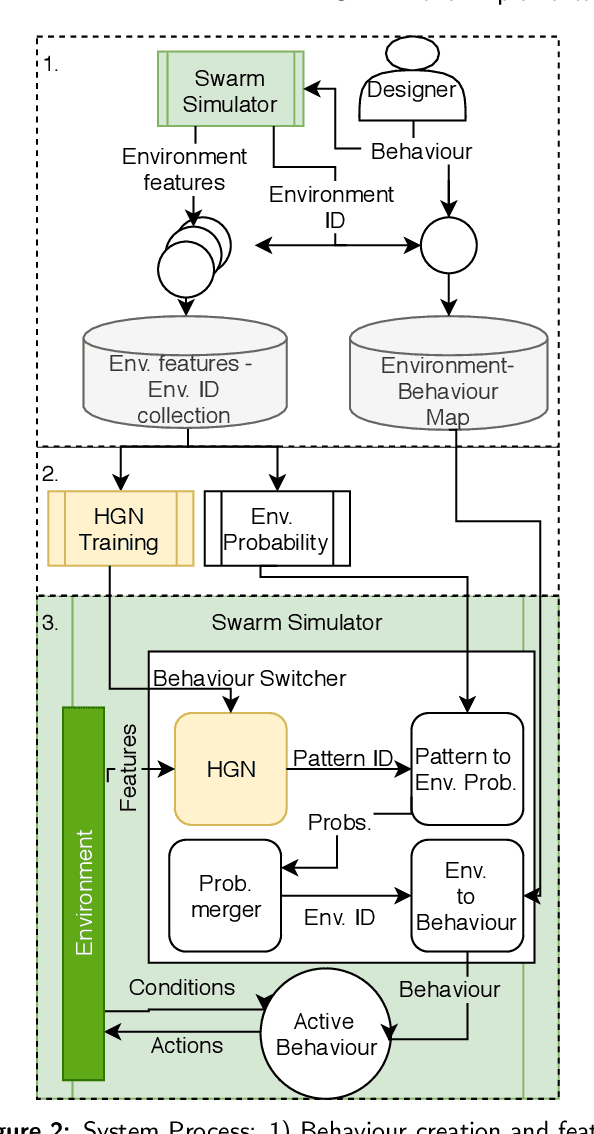

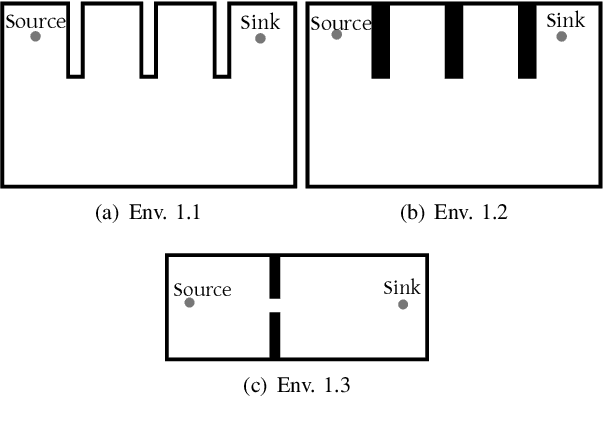

Abstract: This paper explores the use of a novel form of Hierarchical Graph Neurons (HGN) for in-operation behaviour selection in a swarm of robotic agents. This new HGN is called Robotic-HGN (R-HGN), as it matches robot environment observations to environment labels via fusion of match probabilities from both temporal and intra-swarm collections. This approach is novel for HGN as it addresses robotic observations being pseudo-continuous numbers, rather than categorical values. Additionally, the proposed approach is conservative in memory and computation power and is thus suitable for use on mobile devices such as single-board computers, which are often used in mobile robotic agents. The R-HGN approach is validated against individual behaviour implementation and random behaviour selection. This comparison is made in two sets of simulated environments: environments designed to challenge the held behaviours of the R-HGN, and randomly generated environments which are more challenging for the robotic swarm than the R-HGN training conditions. R-HGN has been found to enable appropriate behaviour selection in both these sets, allowing significant swarm performance in pre-trained and unexpected environment conditions.

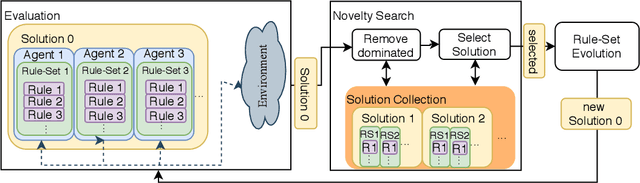

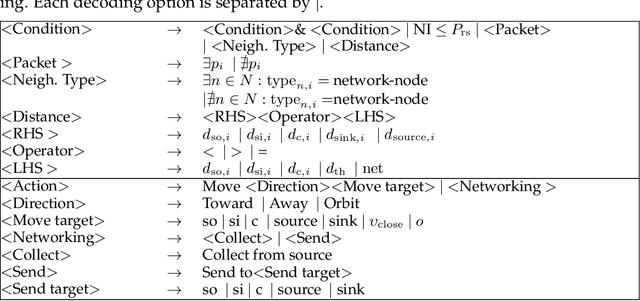

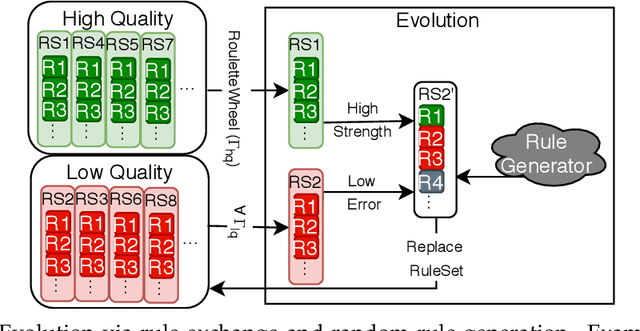

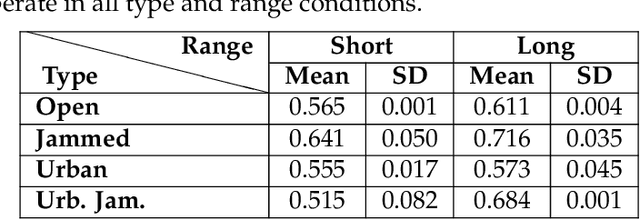

Swarm Behaviour Evolution via Rule Sharing and Novelty Search

Oct 28, 2019

Abstract: We present in this paper an extension of our previous work, increasing the robustness and coverage of the evolution search via hybridisation with a state-of-the-art novelty search and accelerating the individual agent behaviour searches via a novel behaviour-component sharing technique. Via these improvements, we present Swarm Learning Classifier System 2.0 (SLCS2), a behaviour-evolving algorithm which is robust to complex environments and is seen to outperform a human behaviour designer in challenging cases of the data-transfer task in a range of environmental conditions. Additionally, we examine the impact of tailoring the SLCS2 rule generator for specific environmental conditions. We find this leads to over-fitting, as might be expected, and thus conclude that for greatest environment flexibility a general rule generator should be utilised.

Deep Learning for Multi-Messenger Astrophysics: A Gateway for Discovery in the Big Data Era

Feb 01, 2019

Abstract: This report provides an overview of recent work that harnesses the Big Data Revolution and Large Scale Computing to address grand computational challenges in Multi-Messenger Astrophysics, with a particular emphasis on real-time discovery campaigns. Acknowledging the transdisciplinary nature of Multi-Messenger Astrophysics, this document has been prepared by members of the physics, astronomy, computer science, data science, software and cyberinfrastructure communities who attended the NSF-, DOE- and NVIDIA-funded "Deep Learning for Multi-Messenger Astrophysics: Real-time Discovery at Scale" workshop, hosted at the National Center for Supercomputing Applications, October 17-19, 2018. Highlights of this report include unanimous agreement that it is critical to accelerate the development and deployment of novel, signal-processing algorithms that use the synergy between artificial intelligence (AI) and high performance computing to maximize the potential for scientific discovery with Multi-Messenger Astrophysics. We discuss key aspects to realize this endeavor, namely (i) the design and exploitation of scalable and computationally efficient AI algorithms for Multi-Messenger Astrophysics; (ii) cyberinfrastructure requirements to numerically simulate astrophysical sources, and to process and interpret Multi-Messenger Astrophysics data; (iii) management of gravitational wave detections and triggers to enable electromagnetic and astro-particle follow-ups; (iv) a vision to harness future developments of machine and deep learning and cyberinfrastructure resources to cope with the scale of discovery in the Big Data Era; and (v) the need to build a community that brings domain experts together with data scientists on equal footing to maximize and accelerate discovery in the nascent field of Multi-Messenger Astrophysics.

Unsupervised learning and data clustering for the construction of Galaxy Catalogs in the Dark Energy Survey

Dec 05, 2018

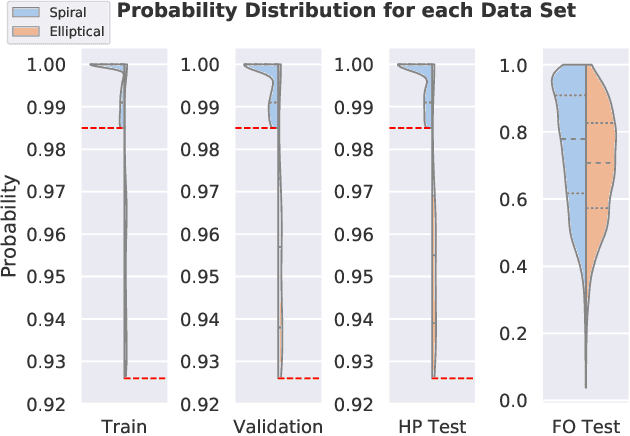

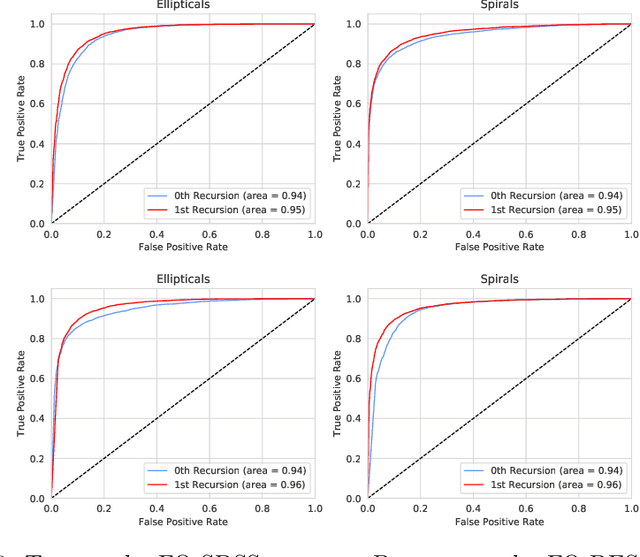

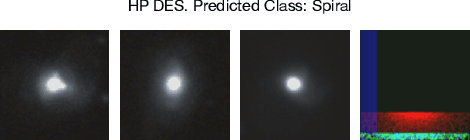

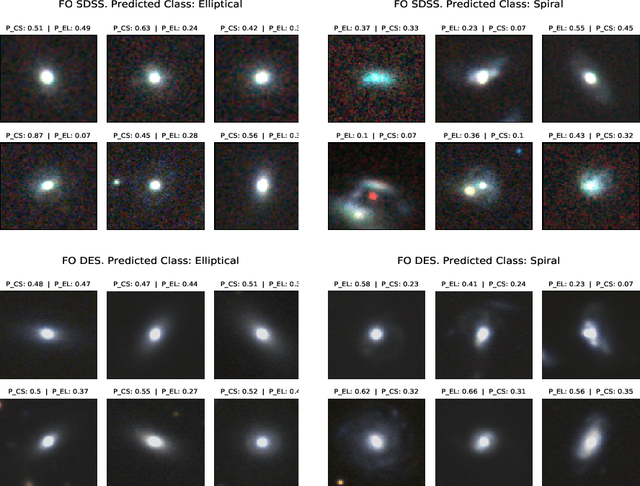

Abstract: Large scale astronomical surveys continue to increase their depth and scale, providing new opportunities to observe large numbers of celestial objects with ever increasing precision. At the same time, the sheer scale of ongoing and future surveys poses formidable challenges to classify astronomical objects. Pioneering efforts on this front include the citizen science approach adopted by the Sloan Digital Sky Survey (SDSS). These SDSS datasets have been used recently to train neural network models to classify galaxies in the Dark Energy Survey (DES) that overlap the footprint of both surveys. While this represents a significant step to classify unlabeled images of astrophysical objects in DES, the key issue at heart still remains, i.e., the classification of unlabeled DES galaxies that have not been observed in previous surveys. To start addressing this timely and pressing matter, we demonstrate that knowledge from deep learning algorithms trained with real-object images can be transferred to classify elliptical and spiral galaxies that overlap both SDSS and DES surveys, achieving state-of-the-art accuracy of 99.6%. More importantly, to initiate the characterization of unlabeled DES galaxies that have not been observed in previous surveys, we demonstrate that our neural network model can also be used for unsupervised clustering, grouping together unlabeled DES galaxies into spiral and elliptical types. We showcase the application of this novel approach by classifying over ten thousand unlabeled DES galaxies into spiral and elliptical classes. We conclude by showing that unsupervised clustering can be combined with recursive training to start creating large-scale DES galaxy catalogs in preparation for the Large Synoptic Survey Telescope era.

Segmented and Non-Segmented Stacked Denoising Autoencoder for Hyperspectral Band Reduction

Apr 22, 2018

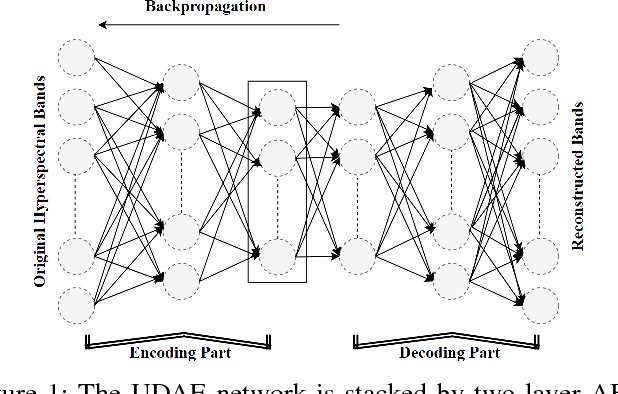

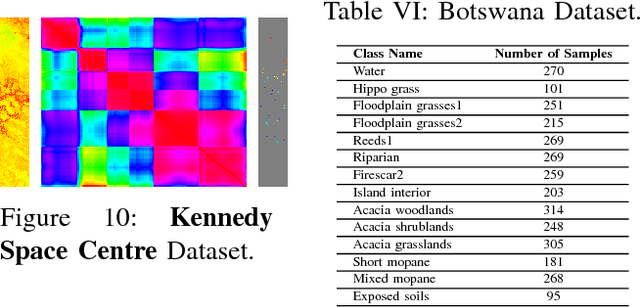

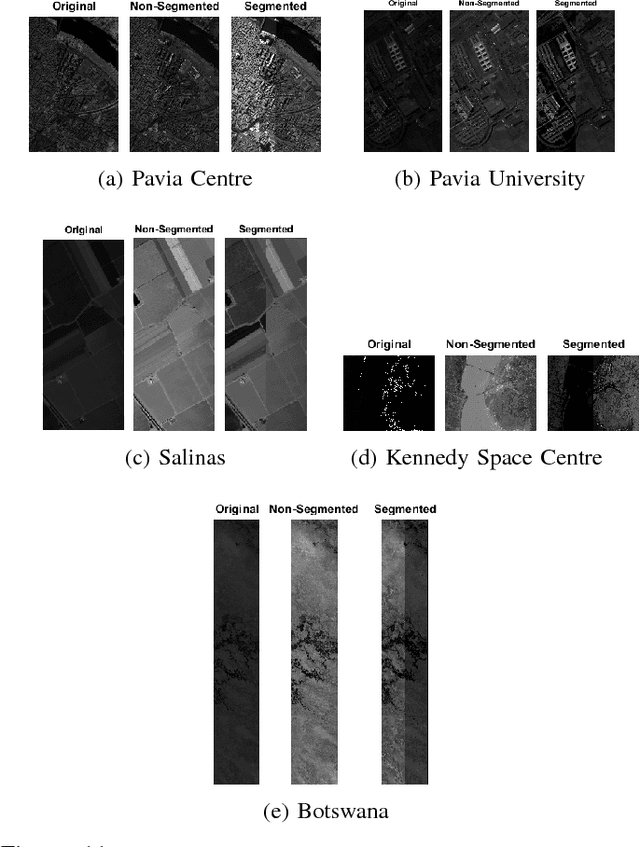

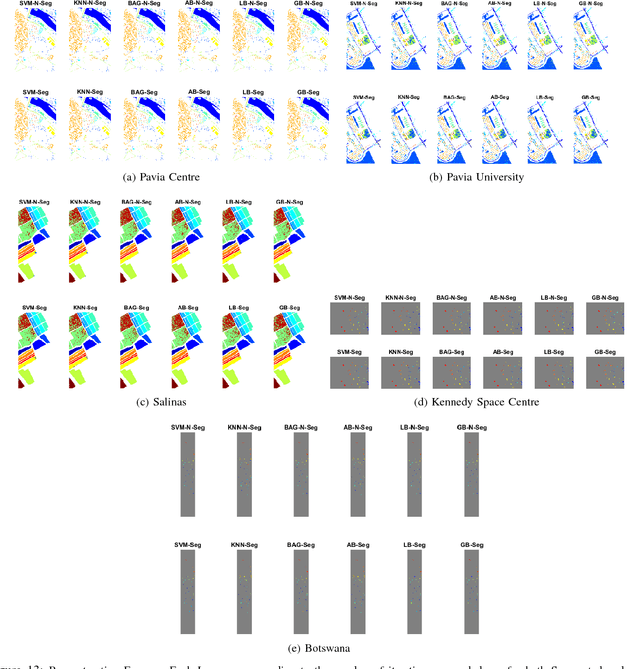

Abstract: Hyperspectral image analysis often requires selecting the most informative bands, rather than processing the whole data, without losing the key information. Existing band reduction (BR) methods have the capability to reveal the nonlinear properties exhibited in the data, but at the expense of losing its original representation. To cope with this issue, an unsupervised non-linear segmented and non-segmented stacked denoising autoencoder (UDAE) based BR method is proposed. Our aim is to find an optimal mapping and construct a lower-dimensional space that has a similar structure to the original data with the least reconstruction error. The proposed method first segments the original hyperspectral data into smaller regions in the spatial domain, and then each region is processed by UDAE individually. This results in reduced complexity and improved efficiency of BR for both semi-supervised and unsupervised tasks, i.e. classification and clustering. Our experiments on publicly available hyperspectral datasets with various types of classifiers demonstrate the effectiveness of the UDAE method, which compares favorably with other state-of-the-art dimensionality reduction and BR methods.

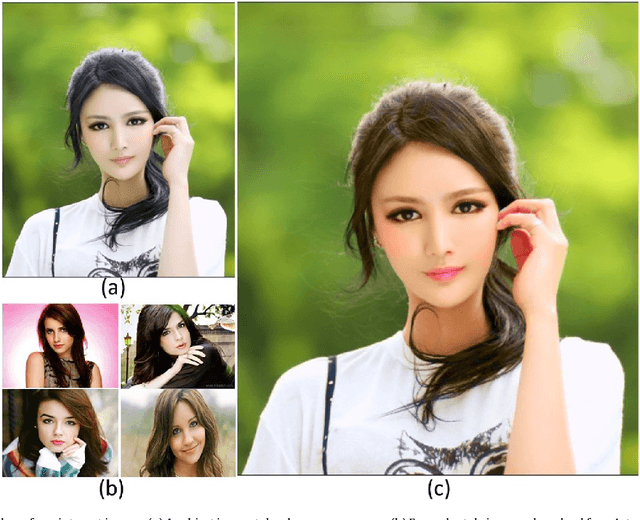

Digital Makeup from Internet Images

Dec 29, 2016

Abstract: We present a novel approach to color transfer between images by exploring their high-level semantic information. First, we set up a database consisting of images downloaded from the internet, which are segmented automatically using matting techniques. We then extract image foregrounds from both the source and multiple target images. Next, using image matting algorithms, the system extracts semantic information such as faces, lips, teeth, eyes, eyebrows, etc., from the extracted foregrounds of the source image. The color is then transferred between corresponding parts with the same semantic information. We obtain the color-transferred result by seamlessly compositing the different parts together using alpha blending. In the final step, we present an efficient color consistency method to optimize the color of a collection of images showing a common scene. The main advantage of our method over existing techniques is that it does not need face matching, as more than one target image can be used. It is not restricted to head-shot images, as we can also change the color style in the wild. Moreover, our algorithm does not require the source and target images to share the same color style, pose, or image size. Our algorithm is not restricted to one-to-one image color transfer and can make use of more than one target image to transfer color to different parts of the source image. Compared with other approaches, our algorithm is much better at color blending in the input data.
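
As a rough illustration of the alpha blending step mentioned in the abstract, the sketch below composites one RGB pixel over another with the standard formula `alpha * foreground + (1 - alpha) * background`. The function name `alpha_blend` and the tuple-based pixel representation are assumptions made for this example and are not taken from the paper, which may compute and apply its mattes differently.

```python
def alpha_blend(foreground, background, alpha):
    """Composite one RGB pixel over another using a matte value in [0, 1].

    Hypothetical helper for illustration only; not the paper's implementation.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    # Standard compositing: weight the foreground by alpha, the background by the rest.
    return tuple(alpha * f + (1.0 - alpha) * b
                 for f, b in zip(foreground, background))


# Example: blend a pure red pixel over a pure blue pixel at 50% opacity.
blended = alpha_blend((1.0, 0.0, 0.0), (0.0, 0.0, 1.0), 0.5)
```

In a full pipeline the same formula would be applied per pixel, with the alpha value at each location read from the matte of the corresponding semantic part.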

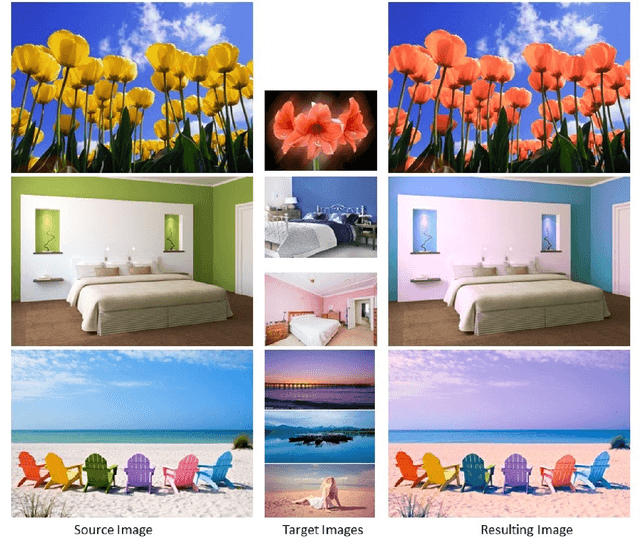

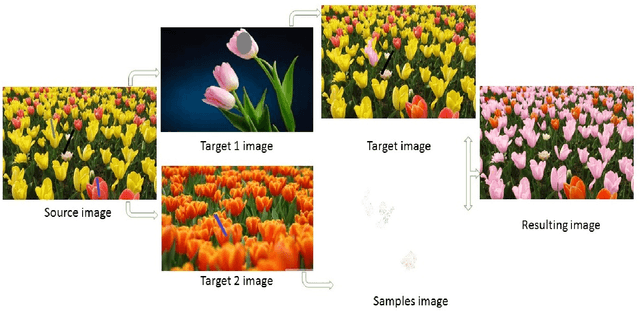

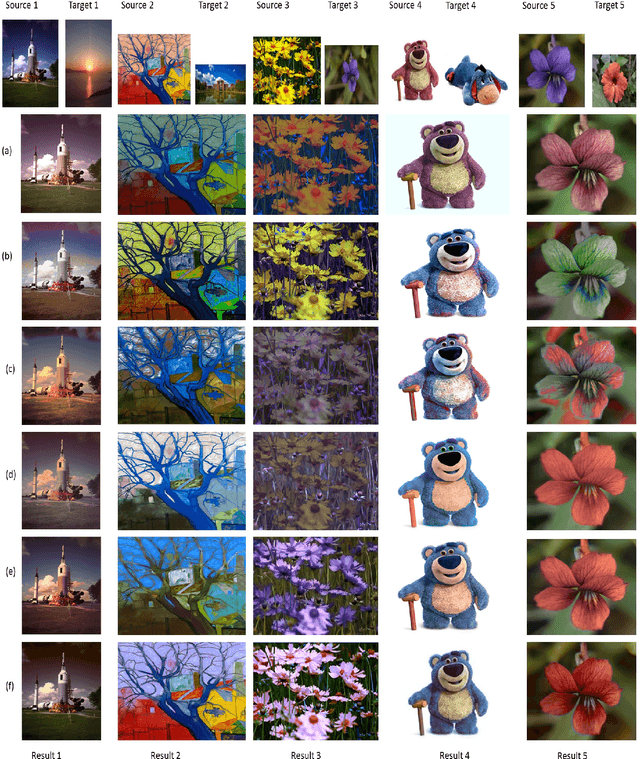

Fast color transfer from multiple images

Dec 28, 2016

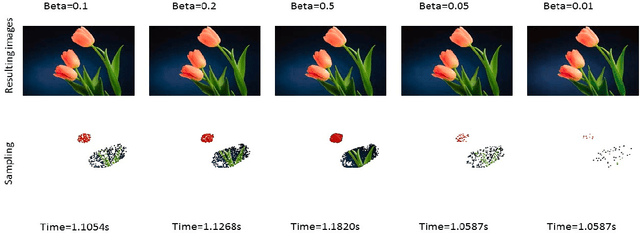

Abstract: Color transfer between images makes effective use of image statistics. We present a novel approach to local color transfer between images based on simple statistics and locally linear embedding. A sketching interface is proposed for quickly and easily specifying the color correspondences between the target and source images. The user can specify the correspondences of local regions using scribbles, which more accurately transfers the target color to the source image while smoothly preserving the boundaries, and yields more natural output. Our algorithm is not restricted to one-to-one image color transfer and can make use of more than one target image to transfer color to different regions of the source image. Moreover, our algorithm does not require the source and target images to share the same color style or image size. We propose sub-sampling to reduce the computational load. Compared with other approaches, our algorithm is much better at color blending in the input data. Our approach preserves the other color details in the source image. Various experimental results show that our approach specifies the correspondences of local color regions in source and target images, expresses the intention of users, and generates more realistic and natural visual results.
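
To give a sense of what color transfer "based on simple statistics" can look like, here is a minimal sketch that shifts and scales one channel of the source so that its mean and standard deviation match those of the target. This is only the classic global statistics-matching idea, applied here to a flat list of channel values; it does not include the local, scribble-driven correspondences or the locally linear embedding described in the abstract. The function name `transfer_channel` is hypothetical.

```python
from statistics import mean, pstdev


def transfer_channel(source, target):
    """Match the mean and standard deviation of `source` to those of `target`.

    Hypothetical helper for illustration only; both inputs are flat lists of
    values from a single color channel.
    """
    src_mean, src_std = mean(source), pstdev(source)
    tgt_mean, tgt_std = mean(target), pstdev(target)
    # A constant source channel has no spread to rescale, so only shift it.
    scale = tgt_std / src_std if src_std > 0 else 1.0
    return [(value - src_mean) * scale + tgt_mean for value in source]


# Example: the source channel takes on the target's brightness and contrast.
recolored = transfer_channel([0.0, 1.0, 2.0], [10.0, 20.0, 30.0])
```

In practice this kind of matching is usually run independently on each channel of a decorrelated color space, and a local method would restrict the statistics to the regions the user has marked as corresponding.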
