Amin Ghafouri

LaMDA: Language Models for Dialog Applications

Feb 10, 2022

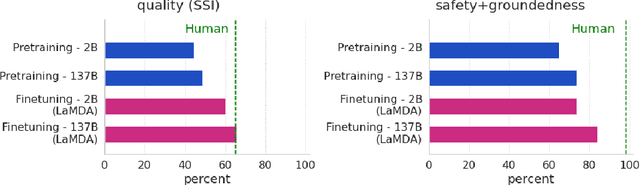

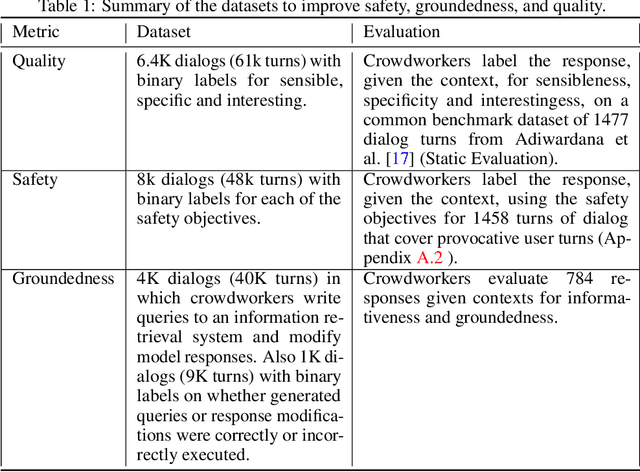

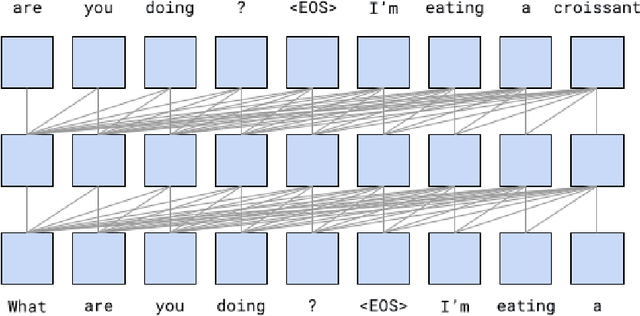

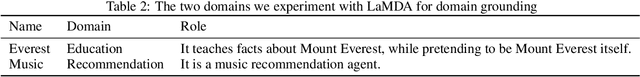

Abstract: We present LaMDA: Language Models for Dialog Applications. LaMDA is a family of Transformer-based neural language models specialized for dialog, which have up to 137B parameters and are pre-trained on 1.56T words of public dialog data and web text. While model scaling alone can improve quality, it yields smaller improvements in safety and factual grounding. We demonstrate that fine-tuning with annotated data and enabling the model to consult external knowledge sources can lead to significant improvements on the two key challenges of safety and factual grounding. The first challenge, safety, involves ensuring that the model's responses are consistent with a set of human values, such as preventing harmful suggestions and unfair bias. We quantify safety using a metric based on an illustrative set of human values, and we find that filtering candidate responses using a LaMDA classifier fine-tuned with a small amount of crowdworker-annotated data offers a promising approach to improving model safety. The second challenge, factual grounding, involves enabling the model to consult external knowledge sources, such as an information retrieval system, a language translator, and a calculator. We quantify factuality using a groundedness metric, and we find that our approach enables the model to generate responses grounded in known sources, rather than responses that merely sound plausible. Finally, we explore the use of LaMDA in the domains of education and content recommendations, and analyze its helpfulness and role consistency in those domains.
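The candidate-filtering idea in this abstract can be illustrated with a minimal sketch. Everything named here is hypothetical: `pick_response`, the toy score tables, and the `min_safety` cutoff are stand-ins for illustration, not LaMDA's classifier or API. Choosing the highest-quality survivor is also an assumption, since the abstract only states that candidates are filtered.

```python
from typing import Callable, List, Optional


def pick_response(
    candidates: List[str],
    safety_score: Callable[[str], float],
    quality_score: Callable[[str], float],
    min_safety: float,
) -> Optional[str]:
    """Drop candidates scored below min_safety, then return the best remaining one."""
    safe = [c for c in candidates if safety_score(c) >= min_safety]
    if not safe:
        return None  # nothing passed the filter
    # Assumption: rank the surviving candidates by a separate quality score.
    return max(safe, key=quality_score)


# Hypothetical scores standing in for a fine-tuned classifier's outputs.
toy_safety = {"reply A": 0.95, "reply B": 0.40, "reply C": 0.90}
toy_quality = {"reply A": 0.60, "reply B": 0.99, "reply C": 0.80}

chosen = pick_response(
    list(toy_safety),
    safety_score=toy_safety.__getitem__,
    quality_score=toy_quality.__getitem__,
    min_safety=0.8,
)
```

In this toy setup, "reply B" has the highest quality score but is removed by the safety filter, so the selection is made among the remaining two candidates.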

Adversarial Regression for Detecting Attacks in Cyber-Physical Systems

Apr 30, 2018

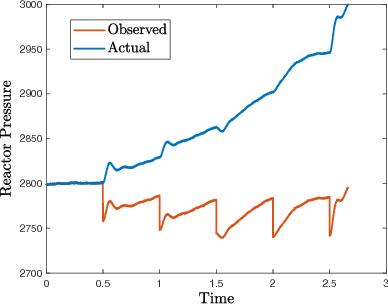

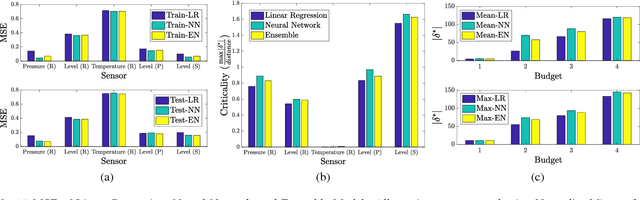

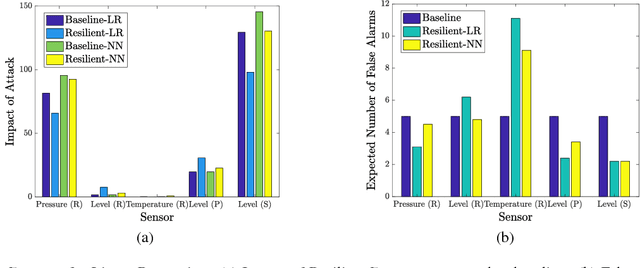

Abstract: Attacks in cyber-physical systems (CPS) which manipulate sensor readings can cause enormous physical damage if undetected. Detection of attacks on sensors is crucial to mitigate this issue. We study supervised regression as a means to detect anomalous sensor readings, where each sensor's measurement is predicted as a function of other sensors. We show that several common learning approaches in this context are still vulnerable to *stealthy attacks*, which carefully modify readings of compromised sensors to cause desired damage while remaining undetected. Next, we model the interaction between the CPS defender and attacker as a Stackelberg game in which the defender chooses detection thresholds, while the attacker deploys a stealthy attack in response. We present a heuristic algorithm for finding an approximately optimal threshold for the defender in this game, and show that it increases system resilience to attacks without significantly increasing the false alarm rate.
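A minimal sketch of the regression-based detection described above, assuming a single predictor sensor and a plain least-squares line (the paper's actual models and thresholds are not given here). The function names, training data, and threshold value are all hypothetical. It also shows why a stealthy attack is a concern: a small bias that stays under the threshold is not flagged.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least-squares fit of y ~ a*x + b for one predictor sensor."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    a = sxy / sxx
    return a, my - a * mx


def is_anomalous(a, b, x, y, threshold):
    """Flag reading y if it deviates from the prediction a*x + b by more than threshold."""
    return abs(y - (a * x + b)) > threshold


# Hypothetical training data: sensor B reads roughly twice sensor A.
train_a = [1.0, 2.0, 3.0, 4.0, 5.0]
train_b = [2.1, 3.9, 6.0, 8.1, 9.9]
a, b = fit_line(train_a, train_b)

threshold = 0.5  # hypothetical detection threshold
blatant = is_anomalous(a, b, 3.0, 9.0, threshold)   # large deviation from the prediction
stealthy = is_anomalous(a, b, 3.0, 6.4, threshold)  # small bias kept under the threshold
```

Here the fitted line predicts about 6.0 for sensor B when sensor A reads 3.0, so the reading 9.0 is flagged while the reading 6.4 passes unnoticed.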

Optimal Detection of Faulty Traffic Sensors Used in Route Planning

Mar 16, 2017

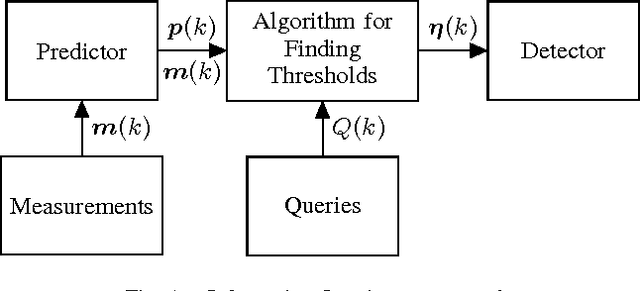

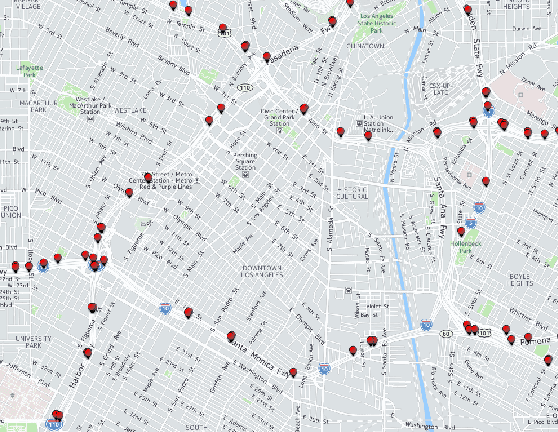

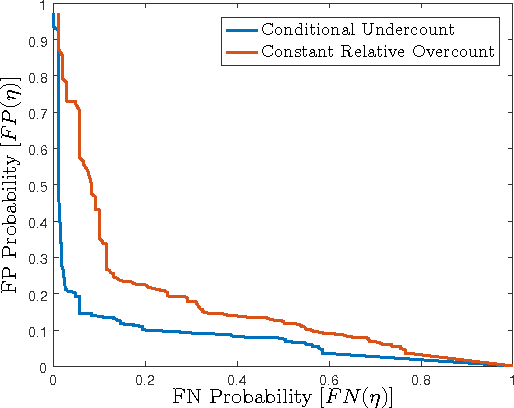

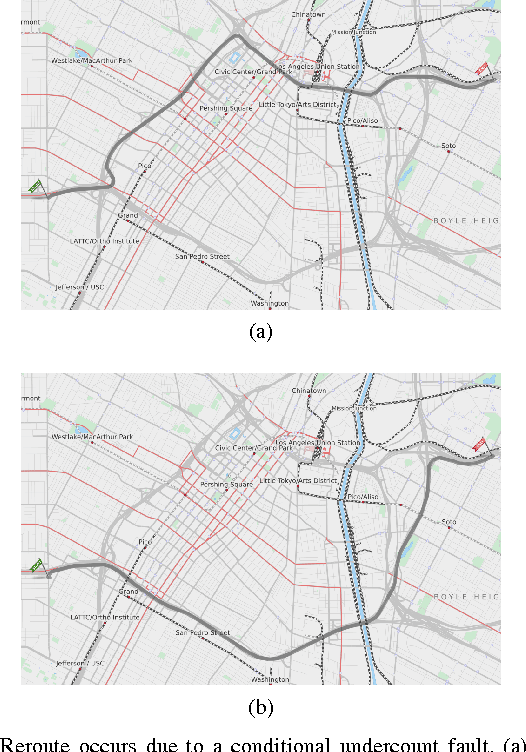

Abstract: In a smart city, real-time traffic sensors may be deployed for various applications, such as route planning. Unfortunately, sensors are prone to failures, which result in erroneous traffic data. Erroneous data can adversely affect applications such as route planning, and can cause increased travel time. To minimize the impact of sensor failures, we must detect them promptly and accurately. However, typical detection algorithms may lead to a large number of false positives (i.e., false alarms) and false negatives (i.e., missed detections), which can result in suboptimal route planning. In this paper, we devise an effective detector for identifying faulty traffic sensors using a prediction model based on Gaussian Processes. Further, we present an approach for computing the optimal parameters of the detector which minimize losses due to false-positive and false-negative errors. We also characterize critical sensors, whose failure can have a high impact on the route planning application. Finally, we implement our method and evaluate it numerically using a real-world dataset and the route planning platform OpenTripPlanner.
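The trade-off between false positives and false negatives can be illustrated with a small sketch. It swaps the paper's Gaussian Process model for precomputed prediction errors and a simple search over candidate thresholds, so it is only a stand-in for the idea of choosing detector parameters that minimize loss. The function names, error values, and costs are hypothetical.

```python
def expected_loss(threshold, healthy, faulty, cost_fp, cost_fn):
    """Weighted sum of the false-positive rate and the false-negative rate."""
    fp_rate = sum(r > threshold for r in healthy) / len(healthy)
    fn_rate = sum(r <= threshold for r in faulty) / len(faulty)
    return cost_fp * fp_rate + cost_fn * fn_rate


def best_threshold(candidates, healthy, faulty, cost_fp, cost_fn):
    """Return the candidate threshold with the lowest expected loss."""
    return min(
        candidates,
        key=lambda t: expected_loss(t, healthy, faulty, cost_fp, cost_fn),
    )


# Hypothetical absolute prediction errors for healthy and faulty sensors.
healthy = [0.1, 0.2, 0.3, 0.4, 0.9]
faulty = [0.6, 1.2, 1.5, 2.0]
candidates = [0.25, 0.5, 1.0, 1.75]

# Assumption: a missed detection costs twice as much as a false alarm.
chosen = best_threshold(candidates, healthy, faulty, cost_fp=1.0, cost_fn=2.0)
```

With these numbers, a low threshold raises too many false alarms and a high one misses most faults, so the search settles on an intermediate value.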
