Ali Emre Kavur

Why is the winner the best?

Mar 30, 2023

Abstract: International benchmarking competitions have become fundamental for the comparative performance assessment of image analysis methods. However, little attention has been given to investigating what can be learnt from these competitions. Do they really generate scientific progress? What are common and successful participation strategies? What makes a solution superior to a competing method? To address this gap in the literature, we performed a multi-center study covering all 80 competitions conducted within the scope of IEEE ISBI 2021 and MICCAI 2021. Statistical analyses based on comprehensive descriptions of the submitted algorithms, linked to their ranks and to the underlying participation strategies, revealed common characteristics of winning solutions. These typically include the use of multi-task learning (63%) and/or multi-stage pipelines (61%), and a focus on augmentation (100%), image preprocessing (97%), data curation (79%), and postprocessing (66%). The "typical" lead of a winning team is a computer scientist with a doctoral degree, five years of experience in biomedical image analysis, and four years of experience in deep learning. Two core general development strategies stood out for highly ranked teams: reflecting the evaluation metrics in the method design, and focusing on analyzing and handling failure cases. According to the organizers, 43% of the winning algorithms exceeded the state of the art, but only 11% completely solved the respective domain problem. The insights of our study could help researchers (1) improve algorithm development strategies when approaching new problems, and (2) focus on open research questions revealed by this work.

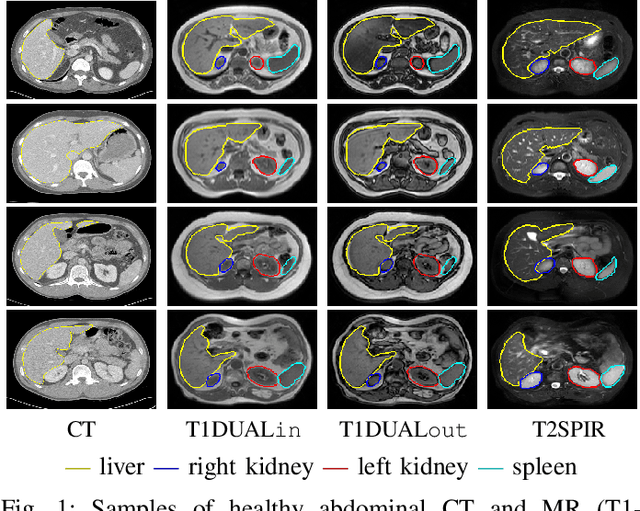

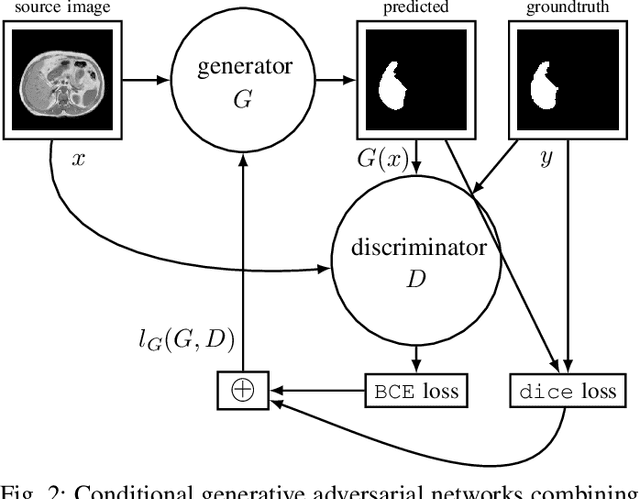

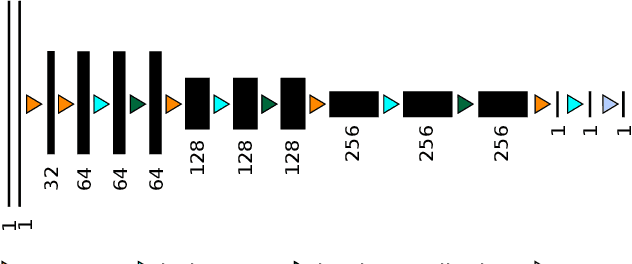

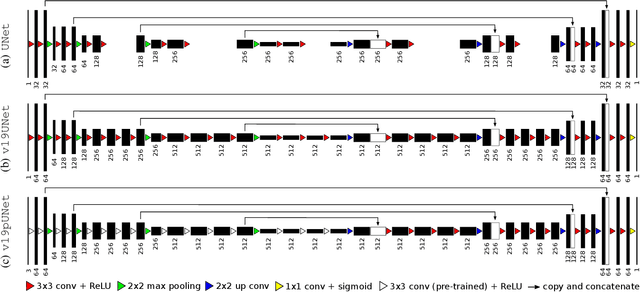

Abdominal multi-organ segmentation with cascaded convolutional and adversarial deep networks

Jan 26, 2020

Abstract: Objective: Abdominal anatomy segmentation is crucial for numerous applications, from computer-assisted diagnosis to image-guided surgery. In this context, we address fully automated multi-organ segmentation from abdominal CT and MR images using deep learning. Methods: The proposed model extends standard conditional generative adversarial networks. In addition to the discriminator, which forces the model to create realistic organ delineations, it embeds cascaded, partially pre-trained convolutional encoder-decoders as the generator. Fine-tuning an encoder pre-trained on a large amount of non-medical images alleviates data scarcity limitations. The network is trained end-to-end to benefit from simultaneous multi-level segmentation refinements using auto-context. Results: Applied to healthy liver, kidney, and spleen segmentation, our pipeline provides promising results, outperforming state-of-the-art encoder-decoder schemes. Submitted to the Combined Healthy Abdominal Organ Segmentation (CHAOS) challenge, organized in conjunction with the IEEE International Symposium on Biomedical Imaging 2019, it took first place in three competition categories: liver CT, liver MR, and multi-organ MR segmentation. Conclusion: Combining cascaded convolutional and adversarial networks strengthens the ability of deep learning pipelines to automatically delineate multiple abdominal organs, with good generalization capability. Significance: The comprehensive evaluation suggests that better guidance could be achieved to help clinicians with abdominal image interpretation and clinical decision-making.
