Akira Imakura

A new type of federated clustering: A non-model-sharing approach

Jun 11, 2025

Abstract:In recent years, the growing need to leverage sensitive data across institutions has led to increased attention on federated learning (FL), a decentralized machine learning paradigm that enables model training without sharing raw data. However, existing FL-based clustering methods, known as federated clustering, typically assume simple data partitioning scenarios such as horizontal or vertical splits, and cannot handle more complex distributed structures. This study proposes data collaboration clustering (DC-Clustering), a novel federated clustering method that supports clustering over complex data partitioning scenarios where horizontal and vertical splits coexist. In DC-Clustering, each institution shares only intermediate representations instead of raw data, ensuring privacy preservation while enabling collaborative clustering. The method allows flexible selection between k-means and spectral clustering, and achieves final results with a single round of communication with the central server. We conducted extensive experiments using synthetic and open benchmark datasets. The results show that our method achieves clustering performance comparable to centralized clustering where all data are pooled. DC-Clustering addresses an important gap in current FL research by enabling effective knowledge discovery from distributed heterogeneous data. Its practical properties -- privacy preservation, communication efficiency, and flexibility -- make it a promising tool for privacy-sensitive domains such as healthcare and finance.

FedDCL: a federated data collaboration learning as a hybrid-type privacy-preserving framework based on federated learning and data collaboration

Sep 27, 2024

Abstract:Recently, federated learning has attracted much attention as a privacy-preserving approach that enables integrated analysis of data held by multiple institutions without sharing raw data. However, federated learning requires iterative communication across institutions and is therefore hard to implement in situations where continuous communication with the outside world is extremely difficult. In this study, we propose federated data collaboration learning (FedDCL), which solves such communication issues by combining federated learning with a recently proposed non-model-sharing type of federated learning called data collaboration analysis. In the proposed FedDCL framework, each user institution independently constructs dimensionality-reduced intermediate representations and shares them with neighboring institutions on intra-group DC servers. On each intra-group DC server, intermediate representations are transformed to incorporable forms called collaboration representations. Federated learning is then conducted between intra-group DC servers. The proposed FedDCL framework does not require iterative communication by user institutions and can be implemented in situations where continuous communication with the outside world is extremely difficult. The experimental results show that the performance of the proposed FedDCL is comparable to that of existing federated learning.

New Solutions Based on the Generalized Eigenvalue Problem for the Data Collaboration Analysis

Apr 22, 2024

Abstract:In recent years, the accumulation of data across various institutions has garnered attention for the technology of confidential data analysis, which improves analytical accuracy by sharing data between multiple institutions while protecting sensitive information. Among these methods, Data Collaboration Analysis (DCA) is noted for its efficiency in terms of computational cost and communication load, facilitating data sharing and analysis across different institutions while safeguarding confidential information. However, existing optimization problems for determining the necessary collaborative functions have faced challenges, such as the optimal solution for the collaborative representation often being a zero matrix and the difficulty in understanding the process of deriving solutions. This research addresses these issues by formulating the optimization problem through the segmentation of matrices into column vectors and proposing a solution method based on the generalized eigenvalue problem. Additionally, we demonstrate methods for constructing collaborative functions more effectively through weighting and the selection of efficient algorithms suited to specific situations. Experiments using real-world datasets have shown that our proposed formulation and solution for the collaborative function optimization problem achieve superior predictive accuracy compared to existing methods.

Estimation of conditional average treatment effects on distributed data: A privacy-preserving approach

Feb 05, 2024

Abstract:Estimation of conditional average treatment effects (CATEs) is an important topic in various fields such as medical and social sciences. CATEs can be estimated with high accuracy if distributed data across multiple parties can be centralized. However, it is difficult to aggregate such data if they contain private information. To address this issue, we proposed data collaboration double machine learning (DC-DML), a method that can estimate CATE models with privacy preservation of distributed data, and evaluated the method through numerical experiments. Our contributions are summarized in the following three points. First, our method enables estimation and testing of semi-parametric CATE models without iterative communication on distributed data. Semi-parametric or non-parametric CATE models enable estimation and testing that is more robust to model mis-specification than parametric models. However, to our knowledge, no communication-efficient method has been proposed for estimating and testing semi-parametric or non-parametric CATE models on distributed data. Second, our method enables collaborative estimation between different parties as well as multiple time points because the dimensionality-reduced intermediate representations can be accumulated. Third, our method performed as well as or better than other methods in evaluation experiments using synthetic, semi-synthetic and real-world datasets.

Data Collaboration Analysis applied to Compound Datasets and the Introduction of Projection data to Non-IID settings

Aug 01, 2023

Abstract:Given the time and expense associated with bringing a drug to market, numerous studies have been conducted to predict the properties of compounds based on their structure using machine learning. Federated learning has been applied to compound datasets to increase their prediction accuracy while safeguarding potentially proprietary information. However, federated learning is encumbered by low accuracy in settings that are not independent and identically distributed (non-IID), i.e., where the data partitioning has a large label bias, and is considered unsuitable for compound datasets, which tend to have large label bias. To address this limitation, we applied an alternative method of distributed machine learning, called data collaboration analysis (DC), to chemical compound data from open sources. We also proposed data collaboration analysis using projection data (DCPd), which is an improved method that utilizes auxiliary PubChem data. This improves the quality of individual user-side data transformations for the projection data for the creation of intermediate representations. The classification accuracy, i.e., the area under the receiver operating characteristic curve (ROC-AUC) and the area under the precision-recall curve (PR-AUC), of federated averaging (FedAvg), DC, and DCPd was compared for five compound datasets. We determined that the machine learning performance for non-IID settings was in the order of DCPd, DC, and FedAvg, although they were almost the same in independent and identically distributed (IID) settings. Moreover, the results showed that compared to other methods, DCPd exhibited a negligible decline in classification accuracy in experiments with different degrees of label bias. Thus, DCPd can address the low performance in non-IID settings, which is one of the challenges of federated learning.

Achieving Transparency in Distributed Machine Learning with Explainable Data Collaboration

Dec 06, 2022

Abstract:Transparency of Machine Learning models used for decision support in various industries becomes essential for ensuring their ethical use. To that end, feature attribution methods such as SHAP (SHapley Additive exPlanations) are widely used to explain the predictions of black-box machine learning models to customers and developers. However, a parallel trend has been to train machine learning models in collaboration with other data holders without accessing their data. Such models, trained over horizontally or vertically partitioned data, present a challenge for explainable AI because the explaining party may have a biased view of background data or a partial view of the feature space. As a result, explanations obtained from different participants of distributed machine learning might not be consistent with one another, undermining trust in the product. This paper presents an Explainable Data Collaboration Framework based on a model-agnostic additive feature attribution algorithm (KernelSHAP) and Data Collaboration method of privacy-preserving distributed machine learning. In particular, we present three algorithms for different scenarios of explainability in Data Collaboration and verify their consistency with experiments on open-access datasets. Our results demonstrated a significant (by at least a factor of 1.75) decrease in feature attribution discrepancies among the users of distributed machine learning.

Non-readily identifiable data collaboration analysis for multiple datasets including personal information

Aug 31, 2022

Abstract:Multi-source data fusion, in which multiple data sources are jointly analyzed to obtain improved information, has attracted considerable research attention. For the datasets of multiple medical institutions, data confidentiality and cross-institutional communication are critical. In such cases, data collaboration (DC) analysis by sharing dimensionality-reduced intermediate representations without iterative cross-institutional communications may be appropriate. Identifiability of the shared data is essential when analyzing data including personal information. In this study, the identifiability of the DC analysis is investigated. The results reveal that the shared intermediate representations are readily identifiable to the original data for supervised learning. This study then proposes a non-readily identifiable DC analysis only sharing non-readily identifiable data for multiple medical datasets including personal information. The proposed method solves identifiability concerns based on a random sample permutation, the concept of interpretable DC analysis, and usage of functions that cannot be reconstructed. In numerical experiments on medical datasets, the proposed method exhibits non-readily identifiability while maintaining the high recognition performance of the conventional DC analysis. For a hospital dataset, the proposed method exhibits a nine percentage point improvement regarding the recognition performance over the local analysis that uses only the local dataset.

Another Use of SMOTE for Interpretable Data Collaboration Analysis

Aug 26, 2022

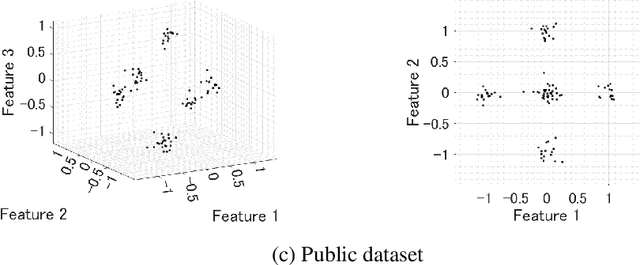

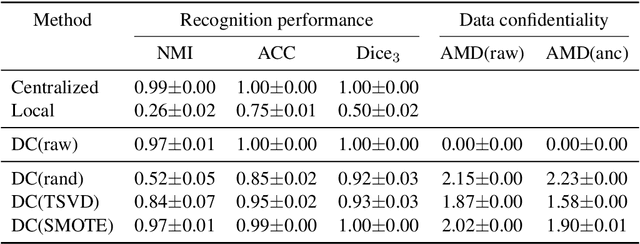

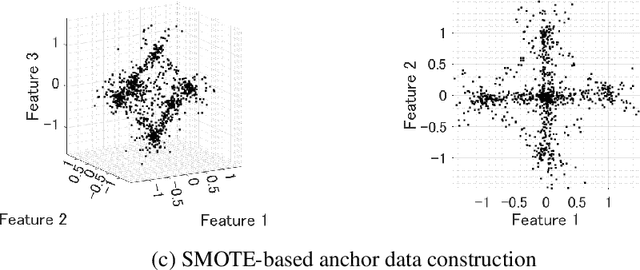

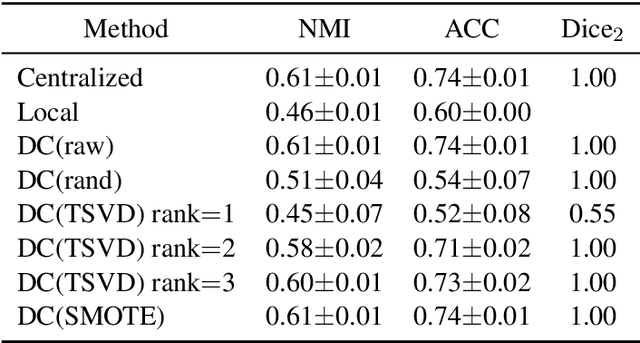

Abstract:Recently, data collaboration (DC) analysis has been developed for privacy-preserving integrated analysis across multiple institutions. DC analysis centralizes individually constructed dimensionality-reduced intermediate representations and realizes integrated analysis via collaboration representations without sharing the original data. To construct the collaboration representations, each institution generates and shares a shareable anchor dataset and centralizes its intermediate representation. Although a random anchor dataset generally functions well for DC analysis, using an anchor dataset whose distribution is close to that of the raw dataset is expected to improve the recognition performance, particularly for the interpretable DC analysis. Based on an extension of the synthetic minority over-sampling technique (SMOTE), this study proposes an anchor data construction technique to improve the recognition performance without increasing the risk of data leakage. Numerical results demonstrate the efficiency of the proposed SMOTE-based method over the existing anchor data constructions for artificial and real-world datasets. Specifically, the proposed method achieves 9 percentage point and 38 percentage point performance improvements regarding accuracy and essential feature selection, respectively, over existing methods for an income dataset. The proposed method provides another use of SMOTE, not for imbalanced data classification but as a key technology for privacy-preserving integrated analysis.
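The data flow described in this abstract (private intermediate representations, a shared anchor dataset, and collaboration representations) can be illustrated with a rough sketch. It is not taken from the paper: the random linear maps, the SVD-based common target, the least-squares alignment, and the names `random_linear_map` and `collaboration_maps` are all assumptions chosen for illustration, and a uniform random anchor stands in for the SMOTE-based construction the paper proposes.

```python
import numpy as np

def random_linear_map(n_features, dim, rng):
    """Hypothetical stand-in for one party's private dimensionality reduction."""
    return rng.standard_normal((n_features, dim))

def collaboration_maps(anchor_reps, dim):
    """Fit one alignment matrix per party from the shared anchor representations.

    Assumption: the common target is spanned by the leading left singular
    vectors of the stacked anchor representations, and each party's matrix
    is the least-squares solution of (anchor_rep @ G) ~= target.
    """
    stacked = np.hstack(anchor_reps)
    u, _, _ = np.linalg.svd(stacked, full_matrices=False)
    target = u[:, :dim]
    return [np.linalg.lstsq(a, target, rcond=None)[0] for a in anchor_reps]

rng = np.random.default_rng(0)
n_features, dim = 6, 3

# Shareable anchor data (random here) and two private datasets that never leave their owners.
anchor = rng.uniform(size=(50, n_features))
X1 = rng.standard_normal((20, n_features))
X2 = rng.standard_normal((30, n_features))

# Each party keeps its map private and shares only the mapped data.
F1 = random_linear_map(n_features, dim, rng)
F2 = random_linear_map(n_features, dim, rng)

# The central side sees only intermediate representations of the anchor and the data.
G1, G2 = collaboration_maps([anchor @ F1, anchor @ F2], dim)

# Collaboration representations are stacked into one matrix for downstream analysis.
pooled = np.vstack([X1 @ F1 @ G1, X2 @ F2 @ G2])
print(pooled.shape)
```

In this sketch the anchor data is the only thing that ties the parties' private maps together, which is why the abstract expects the anchor distribution to affect downstream performance.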

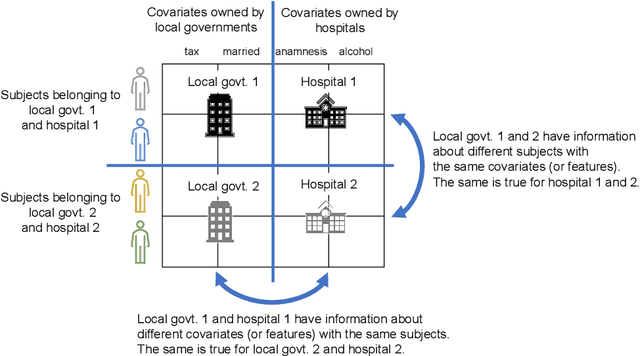

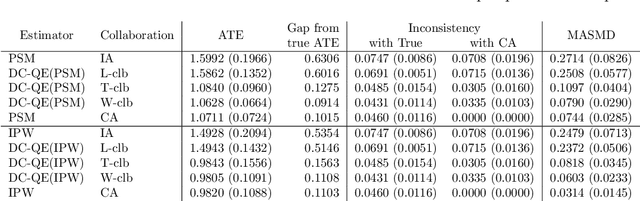

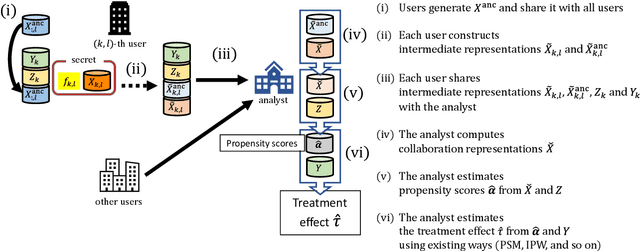

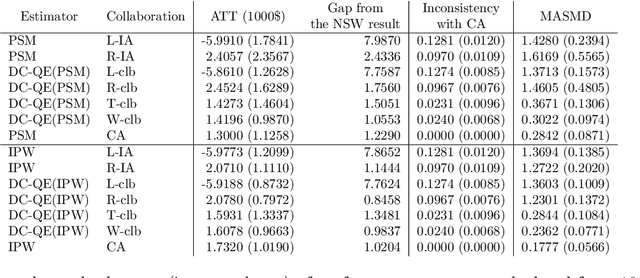

Collaborative causal inference on distributed data

Aug 16, 2022

Abstract:The development of technologies for causal inference with the privacy preservation of distributed data has attracted considerable attention in recent years. To address this issue, we propose a quasi-experiment based on data collaboration (DC-QE) that enables causal inference from distributed data with privacy preservation. Our method preserves the privacy of private data by sharing only dimensionality-reduced intermediate representations, which are individually constructed by each party. Moreover, our method can reduce both random errors and biases, whereas existing methods can only reduce random errors in the estimation of treatment effects. Through numerical experiments on both artificial and real-world data, we confirmed that our method can lead to better estimation results than individual analyses. With the spread of our method, intermediate representations can be published as open data to help researchers find causalities and accumulated as a knowledge base.

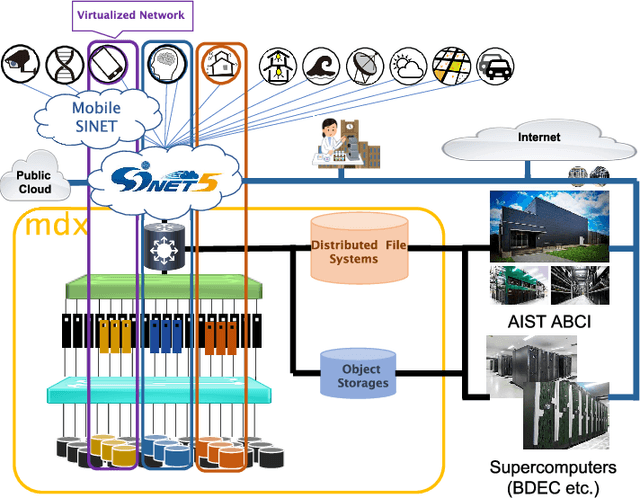

mdx: A Cloud Platform for Supporting Data Science and Cross-Disciplinary Research Collaborations

Mar 27, 2022

Abstract:The growing amount of data and advances in data science have created a need for a new kind of cloud platform that provides users with flexibility, strong security, and the ability to couple with supercomputers and edge devices through high-performance networks. We have built such a nation-wide cloud platform, called "mdx", to meet this need. The mdx platform's virtualization service, jointly operated by 9 national universities and 2 national research institutes in Japan, launched in 2021, and more features are in development. Currently, mdx is used by researchers in a wide variety of domains, including materials informatics, geo-spatial information science, life science, astronomical science, economics, social science, and computer science. This paper provides an overview of the mdx platform, details the motivation for its development, reports its current status, and outlines its future plans.
