Airong Chen

Physics-informed neural operator for predictive parametric phase-field modelling

Mar 10, 2026

Abstract: Predicting the microstructural and morphological evolution of materials through phase-field modelling is computationally intensive, particularly for high-throughput parametric studies. While neural operators such as the Fourier neural operator (FNO) show promise in accelerating the solution of parametric partial differential equations (PDEs), the lack of explicit physical constraints may limit generalisation and long-term accuracy for complex phase-field dynamics. Here, we develop a physics-informed neural operator framework, PF-PINO, to learn parametric phase-field PDEs. By embedding the residuals of the phase-field governing equations into the data-fidelity loss function, our framework effectively enforces physical constraints during training. We validate PF-PINO against benchmark phase-field problems, including electrochemical corrosion, dendritic crystal solidification, and spinodal decomposition. Our results demonstrate that PF-PINO significantly outperforms conventional FNO in accuracy, generalisation capability, and long-term stability. This work provides a robust and efficient computational tool for phase-field modelling and highlights the potential of physics-informed neural operators to advance scientific machine learning for complex interfacial evolution problems.
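As a rough illustration of what "embedding PDE residuals into the data-fidelity loss" can look like, the sketch below adds a finite-difference residual term to a mean-squared data error. It is not the PF-PINO implementation: the 1D Allen-Cahn form `phi_t = eps^2 * phi_xx - (phi^3 - phi)`, the function names, and the `weight` and `eps` parameters are all assumptions made for this example.

```python
def allen_cahn_residual(phi, dt, dx, eps=0.1):
    """Residual of phi_t - eps^2 * phi_xx + (phi^3 - phi) at interior grid points.

    phi is a list of time snapshots, each a list of values on a uniform 1D grid.
    The equation form is an assumed example, not taken from the paper.
    """
    residuals = []
    for n in range(len(phi) - 1):
        for i in range(1, len(phi[n]) - 1):
            # Forward difference in time, central difference in space.
            phi_t = (phi[n + 1][i] - phi[n][i]) / dt
            phi_xx = (phi[n][i + 1] - 2.0 * phi[n][i] + phi[n][i - 1]) / dx ** 2
            reaction = phi[n][i] ** 3 - phi[n][i]
            residuals.append(phi_t - eps ** 2 * phi_xx + reaction)
    return residuals


def mean_square(values):
    """Mean of squared entries."""
    return sum(v * v for v in values) / len(values)


def physics_informed_loss(pred, target, dt, dx, weight=1.0):
    """Data-fidelity error plus a weighted PDE-residual penalty (hypothetical weighting)."""
    data_err = [p - t for row_p, row_t in zip(pred, target) for p, t in zip(row_p, row_t)]
    return mean_square(data_err) + weight * mean_square(allen_cahn_residual(pred, dt, dx))
```

A constant field `phi = 1` satisfies the assumed equation exactly, so for such a prediction only the data term contributes to the loss. In a neural operator the residual would typically be evaluated on the network output with spectral or automatic differentiation rather than on nested lists.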

Enforcing hidden physics in physics-informed neural networks

Nov 18, 2025

Abstract: Physics-informed neural networks (PINNs) represent a new paradigm for solving partial differential equations (PDEs) by integrating physical laws into the learning process of neural networks. However, despite their foundational role, the hidden irreversibility implied by the Second Law of Thermodynamics is often neglected during training, leading to unphysical solutions or even training failures in conventional PINNs. In this paper, we identify this critical gap and introduce a simple, generalized, yet robust irreversibility-regularized strategy that enforces hidden physical laws as soft constraints during training. This approach ensures that the learned solutions consistently respect the intrinsic one-way nature of irreversible physical processes. Across a wide range of benchmarks spanning traveling wave propagation, steady combustion, ice melting, corrosion evolution, and crack propagation, we demonstrate that our regularization scheme reduces predictive errors by more than an order of magnitude, while requiring only minimal modification to existing PINN frameworks. We believe that the proposed framework is broadly applicable to a wide class of PDE-governed physical systems and will have significant impact within the scientific machine learning community.
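One plausible way to express an irreversibility soft constraint is to penalise only the part of the time derivative that points in the forbidden direction. The sketch below assumes that form; the function name, the `direction` convention, and the finite-difference time derivative (a stand-in for automatic differentiation in a PINN) are illustrative choices, not the paper's formulation.

```python
def irreversibility_penalty(phi, dt, direction=-1):
    """Mean squared violation of a one-way-in-time constraint.

    phi is a list of time snapshots, each a list of field values.
    direction=-1 means phi may only decrease over time (for example a solid
    phase that dissolves); direction=+1 means phi may only increase.
    """
    violations = []
    for n in range(len(phi) - 1):
        for before, after in zip(phi[n], phi[n + 1]):
            rate = (after - before) / dt
            # Keep only the component of the rate that goes the wrong way.
            wrong_way = max(0.0, -direction * rate)
            violations.append(wrong_way ** 2)
    return sum(violations) / len(violations)
```

The penalty is zero whenever the field evolves monotonically in the allowed direction, so adding it to an existing loss leaves physically admissible solutions unaffected.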

Sharp-PINNs: staggered hard-constrained physics-informed neural networks for phase field modelling of corrosion

Feb 17, 2025

Abstract: Physics-informed neural networks have shown significant potential in solving partial differential equations (PDEs) across diverse scientific fields. However, their performance often deteriorates when addressing PDEs with intricate and strongly coupled solutions. In this work, we present a novel Sharp-PINN framework to tackle complex phase field corrosion problems. Instead of minimizing all governing PDE residuals simultaneously, Sharp-PINNs introduce a staggered training scheme that alternately minimizes the residuals of the Allen-Cahn and Cahn-Hilliard equations, which govern the corrosion system. To further enhance efficiency and accuracy, we design an advanced neural network architecture that integrates random Fourier features as coordinate embeddings, employs a modified multi-layer perceptron as the primary backbone, and enforces hard constraints in the output layer. This framework is benchmarked through simulations of corrosion problems with multiple pits, where the staggered training scheme and network architecture significantly improve both the efficiency and accuracy of PINNs. Moreover, in three-dimensional cases, our approach is 5-10 times faster than traditional finite element methods while maintaining competitive accuracy, demonstrating its potential for real-world engineering applications in corrosion prediction.
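Two of the architectural ingredients named above can be sketched in a few lines. The example assumes the common random Fourier feature mapping `[cos(Bx), sin(Bx)]` with Gaussian-sampled `B`, and uses a sigmoid bound to `(0, 1)` as one hypothetical kind of hard output constraint; the abstract does not specify either detail, and all names and parameters here are illustrative.

```python
import math
import random


def make_frequencies(in_dim, num_features, scale=1.0, seed=0):
    """Sample a fixed (non-trainable) frequency matrix B with N(0, scale^2) entries."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, scale) for _ in range(in_dim)] for _ in range(num_features)]


def fourier_features(x, freqs):
    """Embed a coordinate vector x as [cos(Bx), sin(Bx)]."""
    proj = [sum(b * xi for b, xi in zip(row, x)) for row in freqs]
    return [math.cos(p) for p in proj] + [math.sin(p) for p in proj]


def bounded_output(raw):
    """Hypothetical hard constraint: squash a raw network output into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-raw))
```

For instance, `fourier_features([0.3, 0.7], make_frequencies(2, 8))` returns a 16-entry embedding. The `scale` parameter sets how high the sampled frequencies are, which matters for resolving sharp interfaces.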

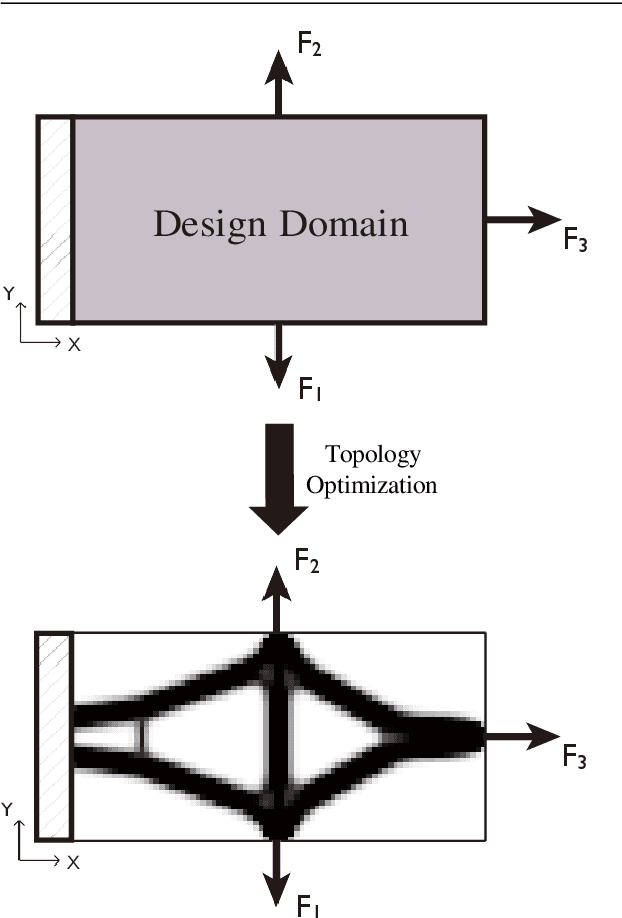

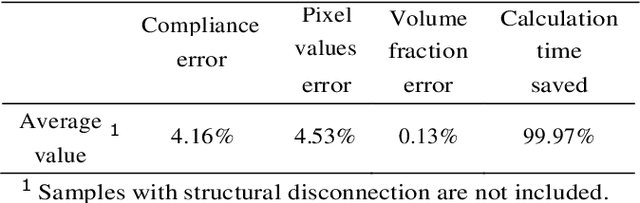

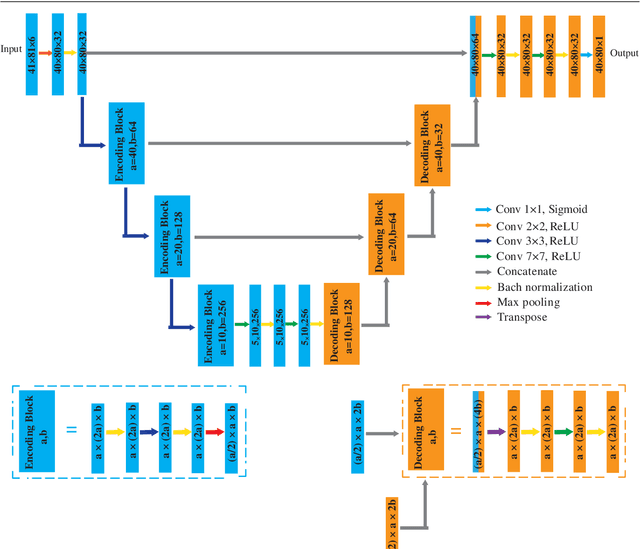

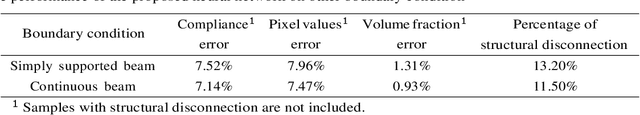

A deep Convolutional Neural Network for topology optimization with strong generalization ability

Jan 23, 2019

Abstract: This paper proposes a deep Convolutional Neural Network (CNN) with strong generalization ability for structural topology optimization. The architecture of the neural network is made up of encoding and decoding parts, which provide down- and up-sampling operations. In addition, a popular technique, namely U-Net, was adopted to improve the performance of the proposed neural network. The input of the neural network is a well-designed tensor in which each channel carries different information about the problem, and the output is the layout of the optimal structure. To train the neural network, a large dataset is generated by a conventional topology optimization approach, i.e. SIMP. The performance of the proposed method was evaluated by comparing its efficiency and accuracy with SIMP on a series of typical optimization problems. Results show that a significant reduction in computation cost was achieved with little sacrifice in the optimality of design solutions. Furthermore, the proposed method can intelligently solve problems under boundary conditions not included in the training dataset.
