Abdullah Al Redwan Newaz

Energy-Efficient Multi-Robot Coverage Path Planning of Non-Convex Regions of Interests

Apr 24, 2026

Abstract: This letter presents an energy-efficient multi-robot coverage path planning (MRCPP) framework for large, nonconvex Regions of Interest (ROI) containing obstacles and no-fly zones (NFZ). Existing minimum-energy coverage planning algorithms rely on meta-heuristic boustrophedon workspace decomposition, so even with minimum-energy objectives and energy consumption constraints they cannot achieve optimal energy efficiency. Moreover, most existing frameworks support only a single type of robotic platform. MRCPP overcomes these limitations by: performing globally informed swath generation, creating parallel sweeping paths with minimal turns, calculating safety buffers to ensure safe turning clearance, using an efficient mTSP solver to balance workloads and minimize mission time, and connecting disjoint segments via a modified visibility graph that tracks heading angles while keeping transitions within safe regions. The efficacy of the proposed MRCPP framework is demonstrated through real-world experiments involving autonomous aerial vehicles (AAVs) and autonomous surface vehicles (ASVs). Evaluations show that MRCPP consistently outperforms state-of-the-art planners, reducing average total energy consumption by 3% to 40% for a team of 3 robots and computation time by an order of magnitude, while maintaining balanced workload distribution and strong scalability across increasing fleet sizes. The MRCPP framework is released as an open-source package, and videos of real-world and simulated experiments are available at https://mrc-pp.github.io.
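To illustrate what "parallel sweeping paths with minimal turns" means in the simplest setting, the sketch below generates back-and-forth waypoints over an obstacle-free axis-aligned rectangle, sweeping parallel to the longer side so that fewer swaths (and therefore fewer turns) are needed. It is not the MRCPP algorithm: the function name `sweep_waypoints`, the rectangular region, and the `swath` parameter are assumptions made for illustration, and obstacles, no-fly zones, safety buffers, and multi-robot allocation are all ignored.

```python
import math


def sweep_waypoints(width, height, swath):
    """Back-and-forth waypoints covering the rectangle [0, width] x [0, height].

    `swath` is the maximum spacing between adjacent sweep lines
    (hypothetical parameter, e.g. the sensor footprint width).
    """
    if width <= 0 or height <= 0 or swath <= 0:
        raise ValueError("width, height and swath must be positive")

    # Sweep parallel to the longer side: fewer sweep lines means fewer turns.
    along_x = width >= height
    long_side, short_side = (width, height) if along_x else (height, width)

    # Number of sweep lines needed so that spacing never exceeds `swath`.
    n_lines = math.ceil(short_side / swath)
    spacing = short_side / n_lines

    waypoints = []
    for i in range(n_lines):
        offset = (i + 0.5) * spacing  # centre line of the i-th swath
        # Alternate the travel direction on every other line.
        start, end = (0.0, long_side) if i % 2 == 0 else (long_side, 0.0)
        for t in (start, end):
            waypoints.append((t, offset) if along_x else (offset, t))
    return waypoints
```

For example, `sweep_waypoints(10, 4, 2)` yields two sweep lines along the x-axis, joined by a single turn at the far end.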

YoloTag: Vision-based Robust UAV Navigation with Fiducial Markers

Sep 03, 2024

Abstract: By harnessing fiducial markers as visual landmarks in the environment, Unmanned Aerial Vehicles (UAVs) can rapidly build precise maps and navigate spaces safely and efficiently, unlocking their potential for fluent collaboration and coexistence with humans. Existing fiducial marker methods rely on handcrafted feature extraction, which sacrifices accuracy. On the other hand, deep learning pipelines for marker detection fail to meet the real-time runtime constraints crucial for navigation applications. In this work, we propose YoloTag, a real-time fiducial marker-based localization system. YoloTag uses a lightweight YOLO v8 object detector to accurately detect fiducial markers in images while meeting the runtime constraints needed for navigation. The detected markers are then used by an efficient perspective-n-point algorithm to estimate UAV states. However, this localization system introduces noise, causing instability in trajectory tracking. To suppress this noise, we design a higher-order Butterworth filter that effectively removes it through frequency-domain analysis. We evaluate our algorithm through real-robot experiments in an indoor environment, comparing the trajectory tracking performance of our method against other approaches in terms of several distance metrics.
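As a rough illustration of the noise-suppression step, the sketch below builds an even-order digital Butterworth low-pass filter as a cascade of second-order sections (bilinear transform with frequency pre-warping) and applies it to a sequence of samples. The abstract does not state the filter order, cutoff frequency, or sampling rate, so the function names and all numeric values here are assumptions, not the authors' settings.

```python
import math


def butterworth_sections(order, cutoff_hz, sample_hz):
    """Coefficients for an even-order Butterworth low-pass, one biquad per pole pair."""
    if order < 2 or order % 2:
        raise ValueError("order must be an even integer >= 2")
    if not 0 < cutoff_hz < sample_hz / 2:
        raise ValueError("cutoff must lie between 0 and the Nyquist frequency")

    w0 = 2 * math.pi * cutoff_hz / sample_hz
    cos_w0, sin_w0 = math.cos(w0), math.sin(w0)

    sections = []
    for k in range(order // 2):
        # Quality factor of the k-th Butterworth pole pair.
        q = 1 / (2 * math.sin((2 * k + 1) * math.pi / (2 * order)))
        alpha = sin_w0 / (2 * q)
        a0 = 1 + alpha
        b = ((1 - cos_w0) / 2 / a0, (1 - cos_w0) / a0, (1 - cos_w0) / 2 / a0)
        a = (-2 * cos_w0 / a0, (1 - alpha) / a0)  # a1, a2 (a0 normalised to 1)
        sections.append((b, a))
    return sections


def lowpass(samples, sections):
    """Run the samples through each second-order section in turn (direct form I)."""
    out = list(samples)
    for b, a in sections:
        x1 = x2 = y1 = y2 = 0.0
        filtered = []
        for x in out:
            y = b[0] * x + b[1] * x1 + b[2] * x2 - a[0] * y1 - a[1] * y2
            x2, x1 = x1, x
            y2, y1 = y1, y
            filtered.append(y)
        out = filtered
    return out
```

With, say, `butterworth_sections(4, 5.0, 100.0)`, a slowly varying position estimate passes through essentially unchanged while sample-to-sample jitter is strongly attenuated. A causal filter like this one adds phase lag, which matters when the filtered state feeds a trajectory-tracking controller.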

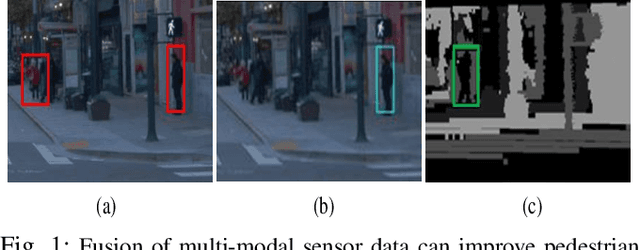

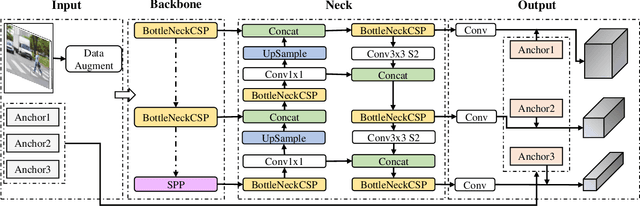

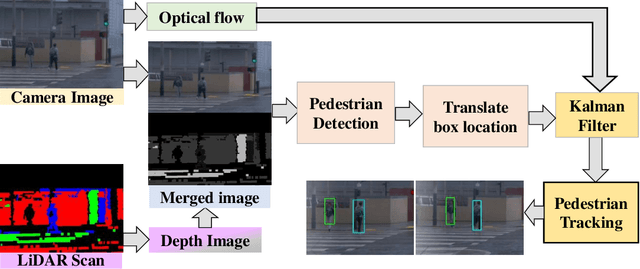

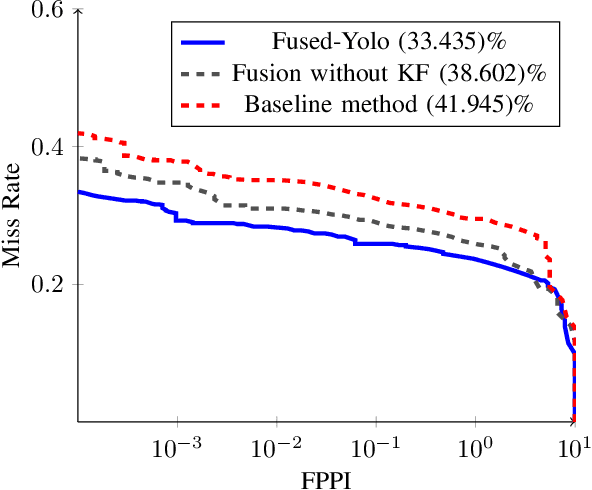

A Pedestrian Detection and Tracking Framework for Autonomous Cars: Efficient Fusion of Camera and LiDAR Data

Aug 27, 2021

Abstract: This paper presents a novel method for pedestrian detection and tracking by fusing camera and LiDAR sensor data. To deal with the challenges of autonomous driving scenarios, an integrated tracking and detection framework is proposed. The detection phase converts LiDAR streams to computationally tractable depth images, and a deep neural network is then developed to identify pedestrian candidates in both RGB and depth images. To provide accurate information, the detection phase is further enhanced by fusing multi-modal sensor information using the Kalman filter. The tracking phase combines Kalman filter prediction with an optical flow algorithm to track multiple pedestrians in a scene. We evaluate our framework on a real public driving dataset. Experimental results demonstrate that the proposed method achieves a significant performance improvement over a baseline method that uses image-based pedestrian detection alone.
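The idea of fusing two sensors with a Kalman filter can be sketched in one dimension: each measurement update weights the new reading by its noise variance, so applying the update once per sensor combines the camera and LiDAR estimates. This is a minimal scalar sketch, not the paper's framework. The function names, the scalar state (for example, a pedestrian's range), and the noise variances are all assumptions for illustration.

```python
def kalman_update(mean, var, measurement, meas_var):
    """Scalar Kalman measurement update: returns the posterior mean and variance."""
    gain = var / (var + meas_var)          # Kalman gain
    new_mean = mean + gain * (measurement - mean)
    new_var = (1 - gain) * var
    return new_mean, new_var


def fuse_camera_lidar(prior_mean, prior_var, camera_z, camera_var, lidar_z, lidar_var):
    """Fuse one camera and one LiDAR measurement of the same quantity.

    The two updates are applied sequentially, which is equivalent to a joint
    update when the sensor noises are independent.
    """
    mean, var = kalman_update(prior_mean, prior_var, camera_z, camera_var)
    return kalman_update(mean, var, lidar_z, lidar_var)
```

With a nearly uninformative prior, the fused estimate approaches the inverse-variance weighted average of the two readings, and its variance is smaller than that of either sensor alone.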
