Abbas Khosravi

Detection of Epileptic Seizures on EEG Signals Using ANFIS Classifier, Autoencoders and Fuzzy Entropies

Sep 06, 2021

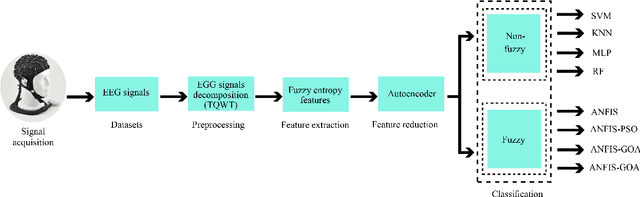

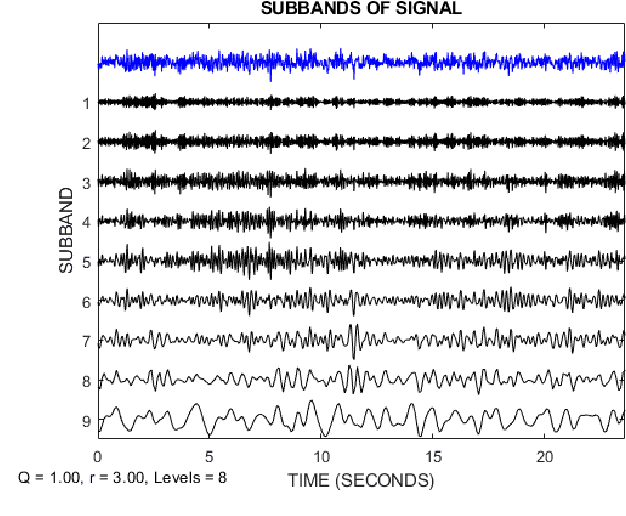

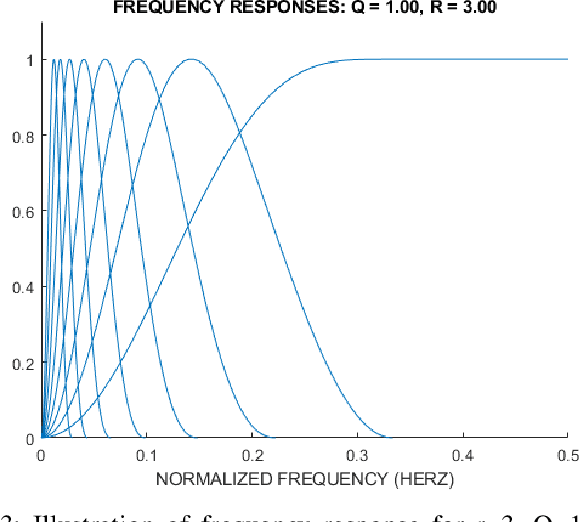

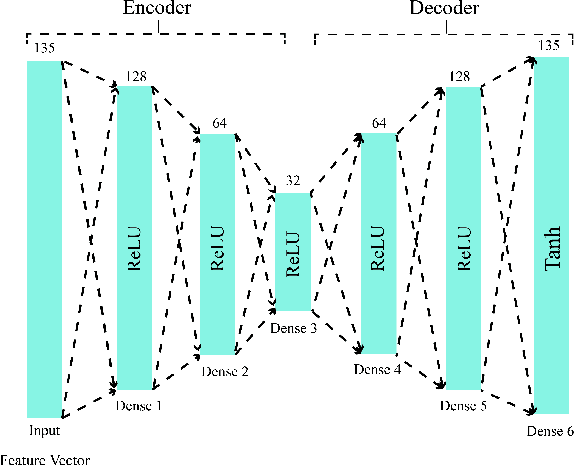

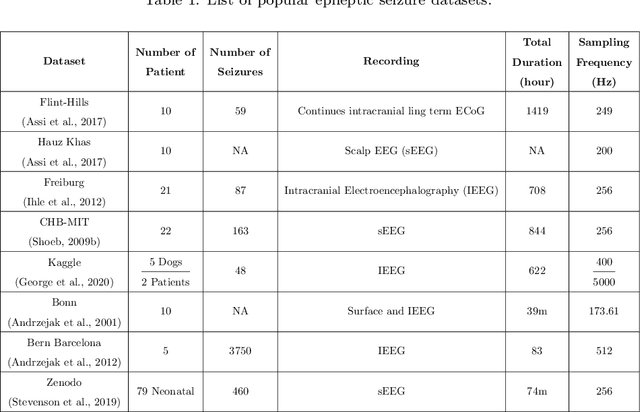

Abstract:Epilepsy is one of the most crucial neurological disorders, and its early diagnosis will help the clinicians to provide accurate treatment for the patients. The electroencephalogram (EEG) signals are widely used for epileptic seizures detection, which provides specialists with substantial information about the functioning of the brain. In this paper, a novel diagnostic procedure using fuzzy theory and deep learning techniques are introduced. The proposed method is evaluated on the Bonn University dataset with six classification combinations and also on the Freiburg dataset. The tunable-Q wavelet transform (TQWT) is employed to decompose the EEG signals into different sub-bands. In the feature extraction step, 13 different fuzzy entropies are calculated from different sub-bands of TQWT, and their computational complexities are calculated to help researchers choose the best feature sets. In the following, an autoencoder (AE) with six layers is employed for dimensionality reduction. Finally, the standard adaptive neuro-fuzzy inference system (ANFIS), and also its variants with grasshopper optimization algorithm (ANFIS-GOA), particle swarm optimization (ANFIS-PSO), and breeding swarm optimization (ANFIS-BS) methods are used for classification. Using our proposed method, ANFIS-BS method has obtained an accuracy of 99.74% in classifying into two classes and an accuracy of 99.46% in ternary classification on the Bonn dataset and 99.28% on the Freiburg dataset, reaching state-of-the-art performances on both of them.

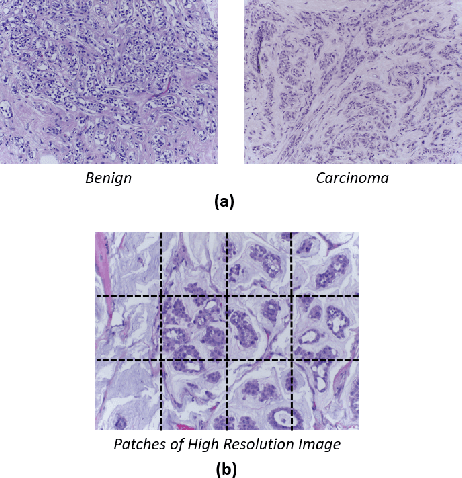

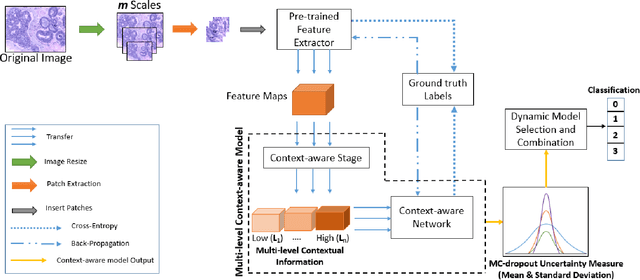

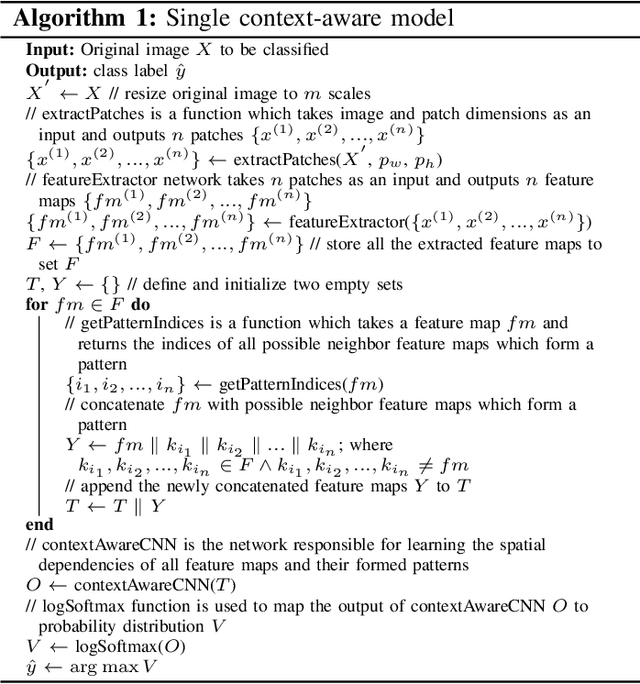

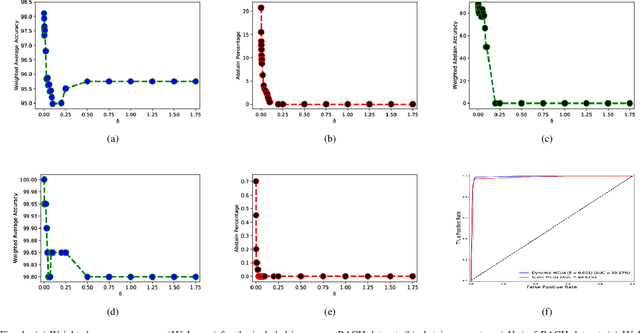

MCUa: Multi-level Context and Uncertainty aware Dynamic Deep Ensemble for Breast Cancer Histology Image Classification

Aug 24, 2021

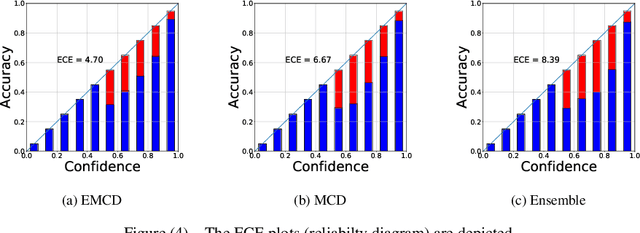

Abstract:Breast histology image classification is a crucial step in the early diagnosis of breast cancer. In breast pathological diagnosis, Convolutional Neural Networks (CNNs) have demonstrated great success using digitized histology slides. However, tissue classification is still challenging due to the high visual variability of the large-sized digitized samples and the lack of contextual information. In this paper, we propose a novel CNN, called Multi-level Context and Uncertainty aware (MCUa) dynamic deep learning ensemble model.MCUamodel consists of several multi-level context-aware models to learn the spatial dependency between image patches in a layer-wise fashion. It exploits the high sensitivity to the multi-level contextual information using an uncertainty quantification component to accomplish a novel dynamic ensemble model.MCUamodelhas achieved a high accuracy of 98.11% on a breast cancer histology image dataset. Experimental results show the superior effectiveness of the proposed solution compared to the state-of-the-art histology classification models.

* accepted by IEEE Transactions on Biomedical Engineering

Uncertainty-Aware Credit Card Fraud Detection Using Deep Learning

Jul 28, 2021

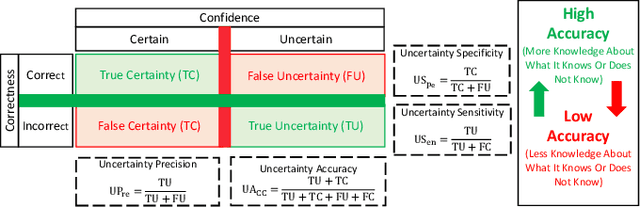

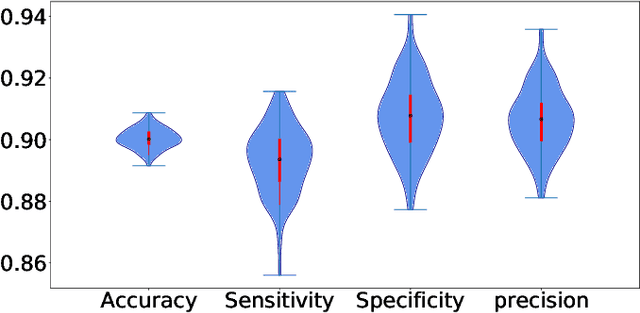

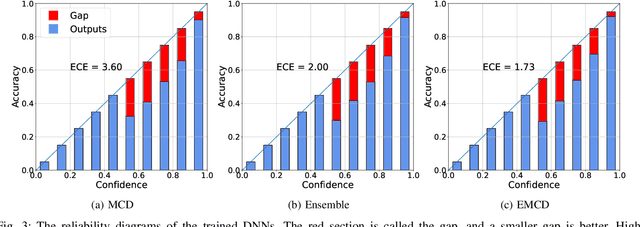

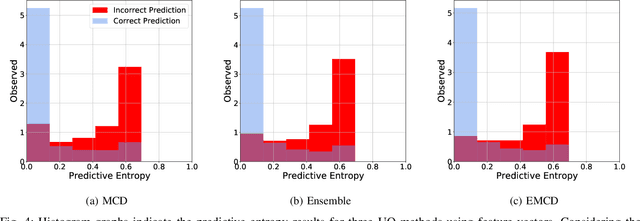

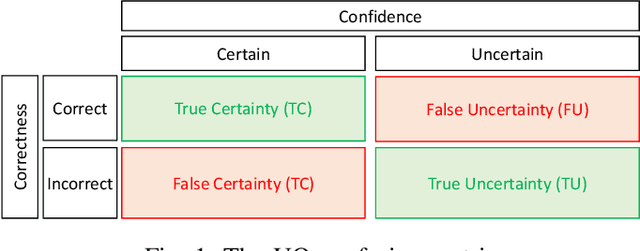

Abstract:Countless research works of deep neural networks (DNNs) in the task of credit card fraud detection have focused on improving the accuracy of point predictions and mitigating unwanted biases by building different network architectures or learning models. Quantifying uncertainty accompanied by point estimation is essential because it mitigates model unfairness and permits practitioners to develop trustworthy systems which abstain from suboptimal decisions due to low confidence. Explicitly, assessing uncertainties associated with DNNs predictions is critical in real-world card fraud detection settings for characteristic reasons, including (a) fraudsters constantly change their strategies, and accordingly, DNNs encounter observations that are not generated by the same process as the training distribution, (b) owing to the time-consuming process, very few transactions are timely checked by professional experts to update DNNs. Therefore, this study proposes three uncertainty quantification (UQ) techniques named Monte Carlo dropout, ensemble, and ensemble Monte Carlo dropout for card fraud detection applied on transaction data. Moreover, to evaluate the predictive uncertainty estimates, UQ confusion matrix and several performance metrics are utilized. Through experimental results, we show that the ensemble is more effective in capturing uncertainty corresponding to generated predictions. Additionally, we demonstrate that the proposed UQ methods provide extra insight to the point predictions, leading to elevate the fraud prevention process.

An Uncertainty-Aware Deep Learning Framework for Defect Detection in Casting Products

Jul 24, 2021

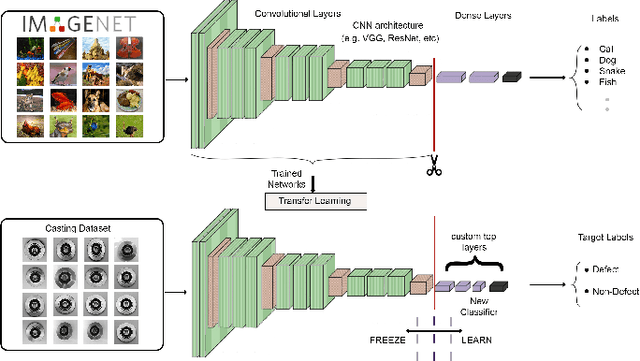

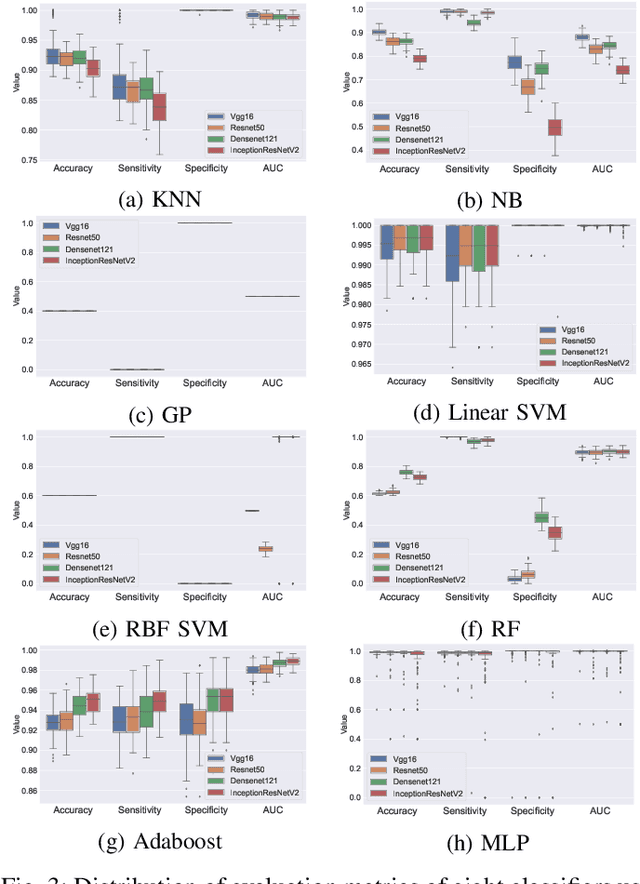

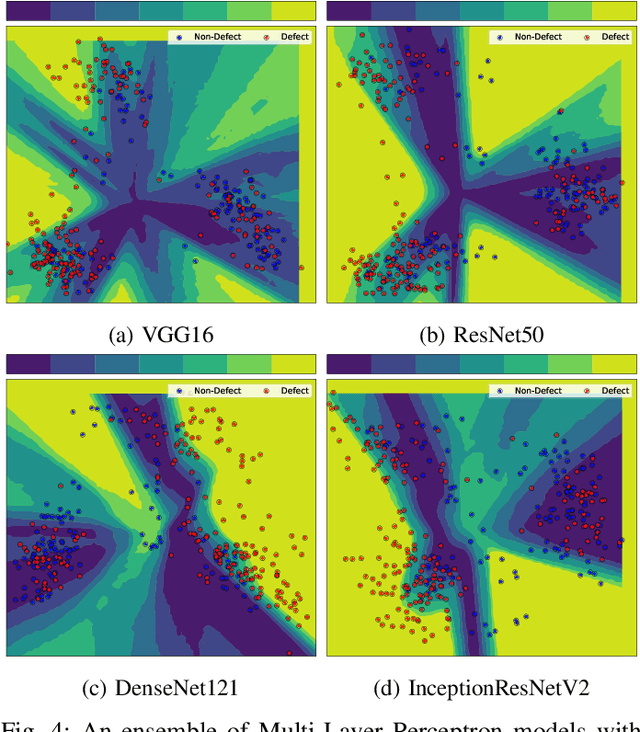

Abstract:Defects are unavoidable in casting production owing to the complexity of the casting process. While conventional human-visual inspection of casting products is slow and unproductive in mass productions, an automatic and reliable defect detection not just enhances the quality control process but positively improves productivity. However, casting defect detection is a challenging task due to diversity and variation in defects' appearance. Convolutional neural networks (CNNs) have been widely applied in both image classification and defect detection tasks. Howbeit, CNNs with frequentist inference require a massive amount of data to train on and still fall short in reporting beneficial estimates of their predictive uncertainty. Accordingly, leveraging the transfer learning paradigm, we first apply four powerful CNN-based models (VGG16, ResNet50, DenseNet121, and InceptionResNetV2) on a small dataset to extract meaningful features. Extracted features are then processed by various machine learning algorithms to perform the classification task. Simulation results demonstrate that linear support vector machine (SVM) and multi-layer perceptron (MLP) show the finest performance in defect detection of casting images. Secondly, to achieve a reliable classification and to measure epistemic uncertainty, we employ an uncertainty quantification (UQ) technique (ensemble of MLP models) using features extracted from four pre-trained CNNs. UQ confusion matrix and uncertainty accuracy metric are also utilized to evaluate the predictive uncertainty estimates. Comprehensive comparisons reveal that UQ method based on VGG16 outperforms others to fetch uncertainty. We believe an uncertainty-aware automatic defect detection solution will reinforce casting productions quality assurance.

Confidence Aware Neural Networks for Skin Cancer Detection

Jul 24, 2021

Abstract:Deep learning (DL) models have received particular attention in medical imaging due to their promising pattern recognition capabilities. However, Deep Neural Networks (DNNs) require a huge amount of data, and because of the lack of sufficient data in this field, transfer learning can be a great solution. DNNs used for disease diagnosis meticulously concentrate on improving the accuracy of predictions without providing a figure about their confidence of predictions. Knowing how much a DNN model is confident in a computer-aided diagnosis model is necessary for gaining clinicians' confidence and trust in DL-based solutions. To address this issue, this work presents three different methods for quantifying uncertainties for skin cancer detection from images. It also comprehensively evaluates and compares performance of these DNNs using novel uncertainty-related metrics. The obtained results reveal that the predictive uncertainty estimation methods are capable of flagging risky and erroneous predictions with a high uncertainty estimate. We also demonstrate that ensemble approaches are more reliable in capturing uncertainties through inference.

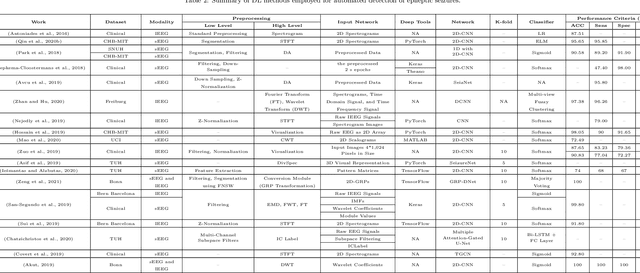

Applications of Epileptic Seizures Detection in Neuroimaging Modalities Using Deep Learning Techniques: Methods, Challenges, and Future Works

May 29, 2021

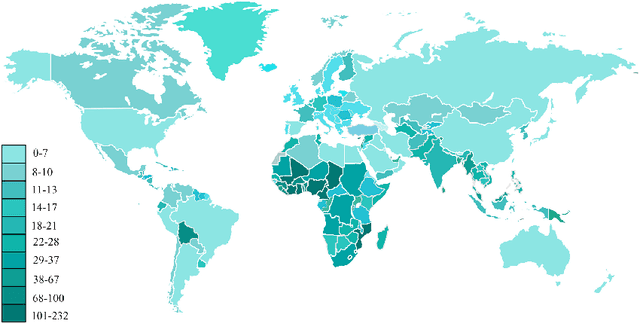

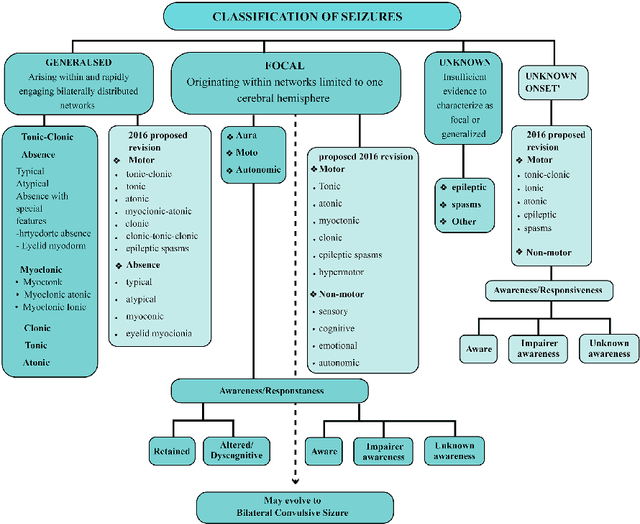

Abstract:Epileptic seizures are a type of neurological disorder that affect many people worldwide. Specialist physicians and neurologists take advantage of structural and functional neuroimaging modalities to diagnose various types of epileptic seizures. Neuroimaging modalities assist specialist physicians considerably in analyzing brain tissue and the changes made in it. One method to accelerate the accurate and fast diagnosis of epileptic seizures is to employ computer aided diagnosis systems (CADS) based on artificial intelligence (AI) and functional and structural neuroimaging modalities. AI encompasses a variety of areas, and one of its branches is deep learning (DL). Not long ago, and before the rise of DL algorithms, feature extraction was an essential part of every conventional machine learning method, yet handcrafting features limit these models' performances to the knowledge of system designers. DL methods resolved this issue entirely by automating the feature extraction and classification process; applications of these methods in many fields of medicine, such as the diagnosis of epileptic seizures, have made notable improvements. In this paper, a comprehensive overview of the types of DL methods exploited to diagnose epileptic seizures from various neuroimaging modalities has been studied. Additionally, rehabilitation systems and cloud computing in epileptic seizures diagnosis applications have been exactly investigated using various modalities.

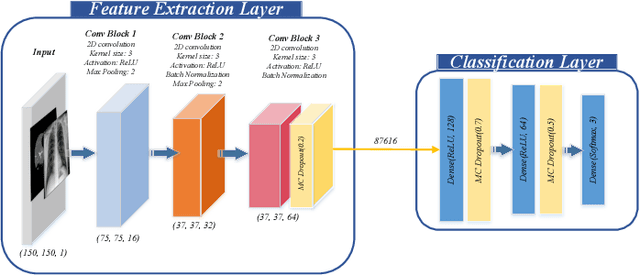

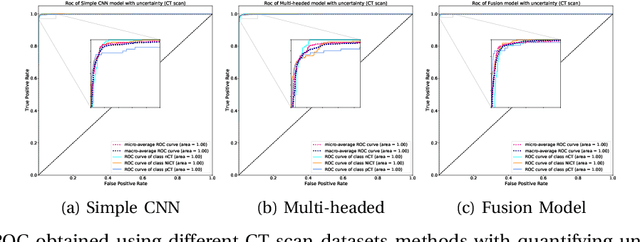

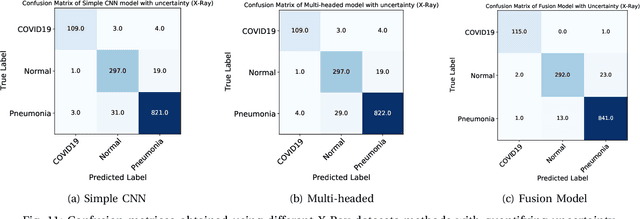

UncertaintyFuseNet: Robust Uncertainty-aware Hierarchical Feature Fusion with Ensemble Monte Carlo Dropout for COVID-19 Detection

May 22, 2021

Abstract:The COVID-19 (Coronavirus disease 2019) has infected more than 151 million people and caused approximately 3.17 million deaths around the world up to the present. The rapid spread of COVID-19 is continuing to threaten human's life and health. Therefore, the development of computer-aided detection (CAD) systems based on machine and deep learning methods which are able to accurately differentiate COVID-19 from other diseases using chest computed tomography (CT) and X-Ray datasets is essential and of immediate priority. Different from most of the previous studies which used either one of CT or X-ray images, we employed both data types with sufficient samples in implementation. On the other hand, due to the extreme sensitivity of this pervasive virus, model uncertainty should be considered, while most previous studies have overlooked it. Therefore, we propose a novel powerful fusion model named $UncertaintyFuseNet$ that consists of an uncertainty module: Ensemble Monte Carlo (EMC) dropout. The obtained results prove the effectiveness of our proposed fusion for COVID-19 detection using CT scan and X-Ray datasets. Also, our proposed $UncertaintyFuseNet$ model is significantly robust to noise and performs well with the previously unseen data. The source codes and models of this study are available at: https://github.com/moloud1987/UncertaintyFuseNet-for-COVID-19-Classification.

Time Series Forecasting of New Cases and New Deaths Rate for COVID-19 using Deep Learning Methods

Apr 28, 2021

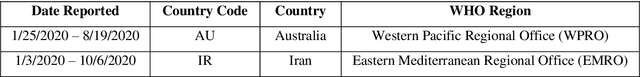

Abstract:Covid-19 has been started in the year 2019 and imposed restrictions in many countries and costs organisations and governments. Predicting the number of new cases and deaths during this period can be a useful step in predicting the costs and facilities required in the future. The purpose of this study is to predict new cases and death rate for seven days ahead. Deep learning methods and statistical analysis model these predictions for 100 days. Six different deep learning methods are examined for the data adopted from the WHO website. Three methods are known as LSTM, Convolutional LSTM, and GRU. The bi-directional mode is then considered for each method to forecast the rate of new cases and new deaths for Australia and Iran countries. This study is novel as it attempts to implement the mentioned three deep learning methods, along with their Bi-directional models, to predict COVID-19 new cases and new death rate time series. All methods are compared, and results are presented. The results are examined in the form of graphs and statistical analyses. The results show that the Bi-directional models have lower error than other models. Several error evaluation metrics are presented to compare all models, and finally, the superiority of Bi-directional methods are determined. The experimental results and statistical test show on datasets to compare the proposed method with other baseline methods. This research could be useful for organisations working against COVID-19 and determining their long-term plans.

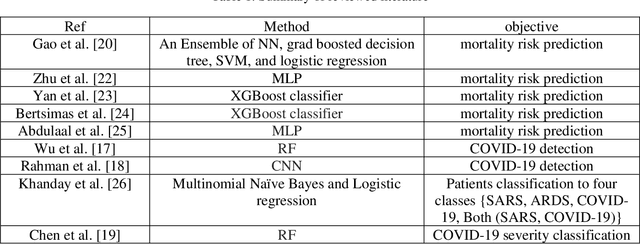

CNN AE: Convolution Neural Network combined with Autoencoder approach to detect survival chance of COVID 19 patients

Apr 18, 2021

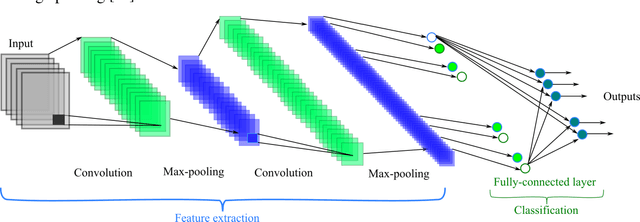

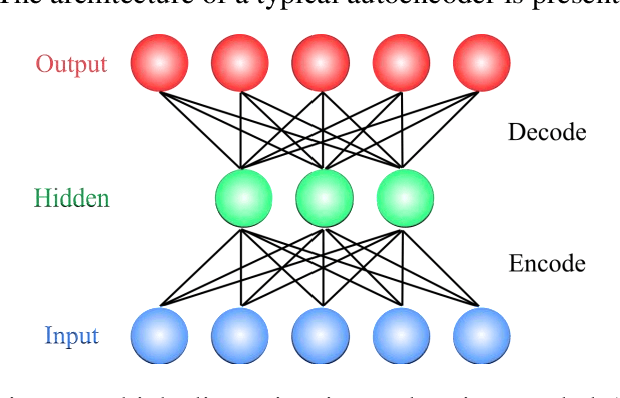

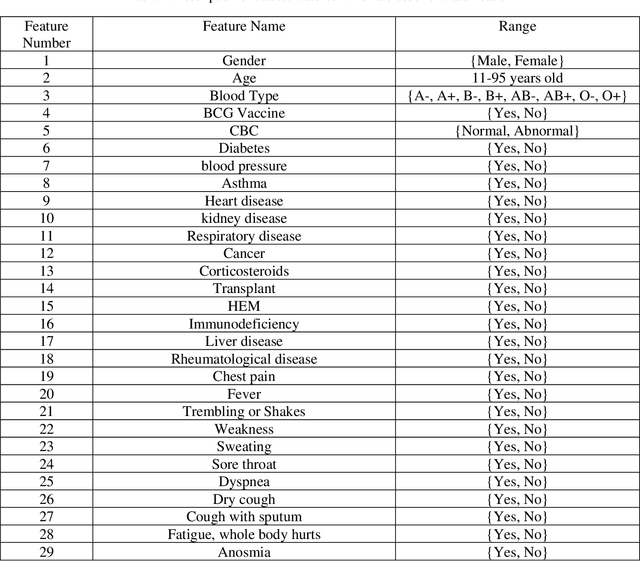

Abstract:In this paper, we propose a novel method named CNN-AE to predict survival chance of COVID-19 patients using a CNN trained on clinical information. To further increase the prediction accuracy, we use the CNN in combination with an autoencoder. Our method is one of the first that aims to predict survival chance of already infected patients. We rely on clinical data to carry out the prediction. The motivation is that the required resources to prepare CT images are expensive and limited compared to the resources required to collect clinical data such as blood pressure, liver disease, etc. We evaluate our method on a publicly available clinical dataset of deceased and recovered patients which we have collected. Careful analysis of the dataset properties is also presented which consists of important features extraction and correlation computation between features. Since most of COVID-19 patients are usually recovered, the number of deceased samples of our dataset is low leading to data imbalance. To remedy this issue, a data augmentation procedure based on autoencoders is proposed. To demonstrate the generality of our augmentation method, we train random forest and Na\"ive Bayes on our dataset with and without augmentation and compare their performance. We also evaluate our method on another dataset for further generality verification. Experimental results reveal the superiority of CNN-AE method compared to the standard CNN as well as other methods such as random forest and Na\"ive Bayes. COVID-19 detection average accuracy of CNN-AE is 96.05% which is higher than CNN average accuracy of 92.49%. To show that clinical data can be used as a reliable dataset for COVID-19 survival chance prediction, CNN-AE is compared with a standard CNN which is trained on CT images.

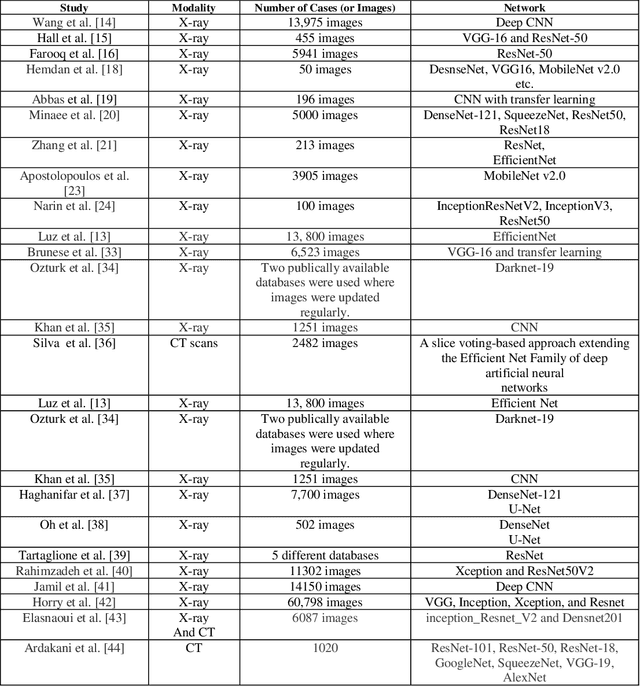

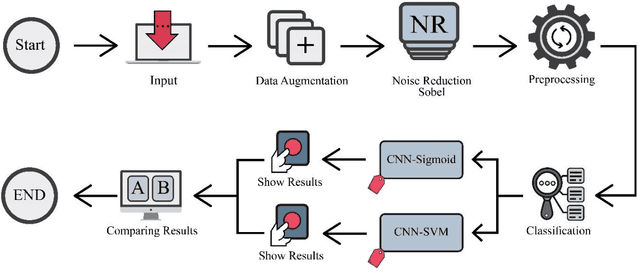

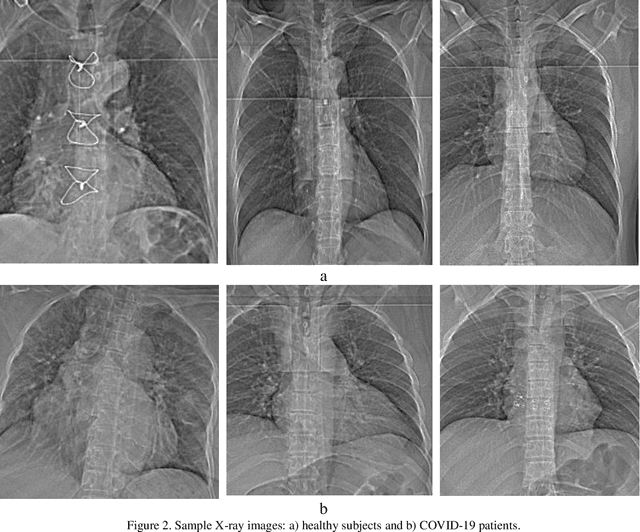

Fusion of convolution neural network, support vector machine and Sobel filter for accurate detection of COVID-19 patients using X-ray images

Feb 13, 2021

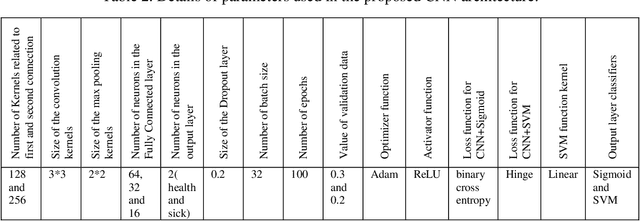

Abstract:The coronavirus (COVID-19) is currently the most common contagious disease which is prevalent all over the world. The main challenge of this disease is the primary diagnosis to prevent secondary infections and its spread from one person to another. Therefore, it is essential to use an automatic diagnosis system along with clinical procedures for the rapid diagnosis of COVID-19 to prevent its spread. Artificial intelligence techniques using computed tomography (CT) images of the lungs and chest radiography have the potential to obtain high diagnostic performance for Covid-19 diagnosis. In this study, a fusion of convolutional neural network (CNN), support vector machine (SVM), and Sobel filter is proposed to detect COVID-19 using X-ray images. A new X-ray image dataset was collected and subjected to high pass filter using a Sobel filter to obtain the edges of the images. Then these images are fed to CNN deep learning model followed by SVM classifier with ten-fold cross validation strategy. This method is designed so that it can learn with not many data. Our results show that the proposed CNN-SVM with Sobel filtering (CNN-SVM+Sobel) achieved the highest classification accuracy of 99.02% in accurate detection of COVID-19. It showed that using Sobel filter can improve the performance of CNN. Unlike most of the other researches, this method does not use a pre-trained network. We have also validated our developed model using six public databases and obtained the highest performance. Hence, our developed model is ready for clinical application

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge