Zuwei Li

End-to-End Visual Speech Recognition for Small-Scale Datasets

Apr 02, 2019

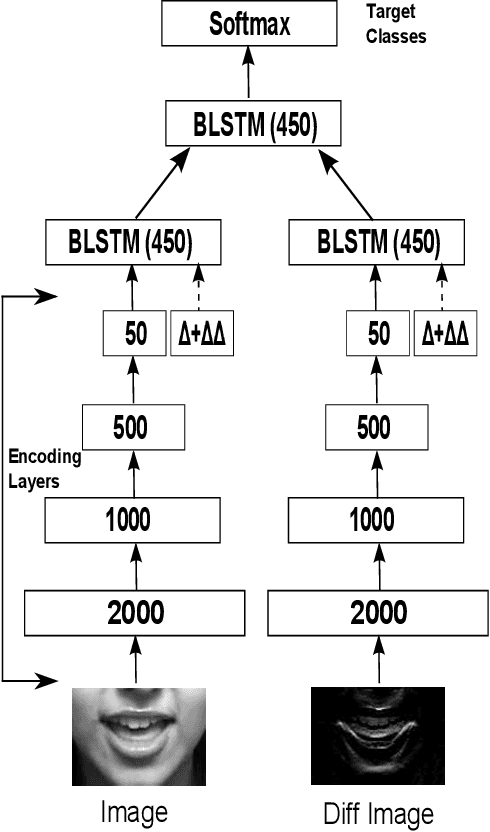

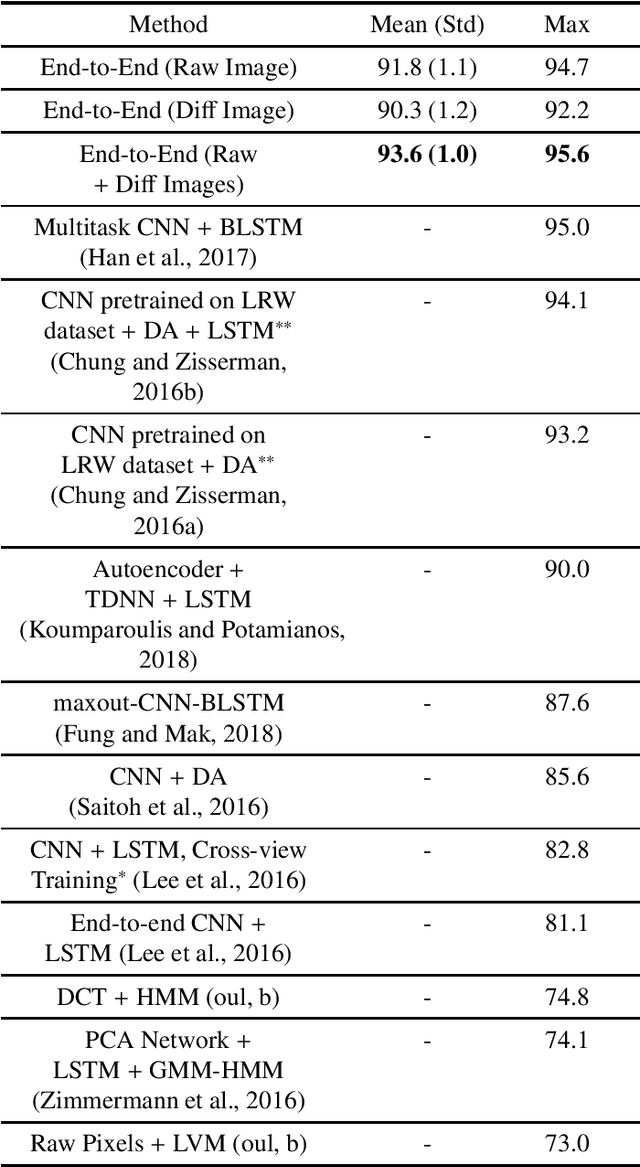

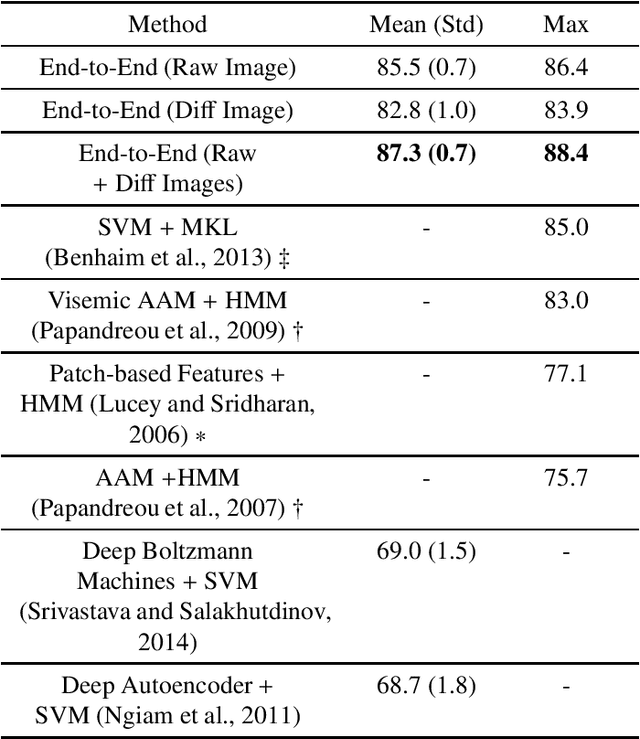

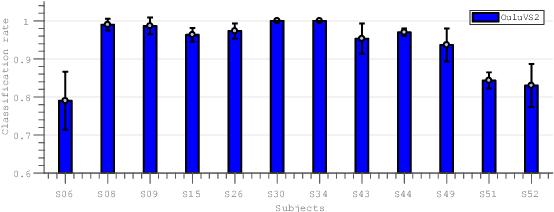

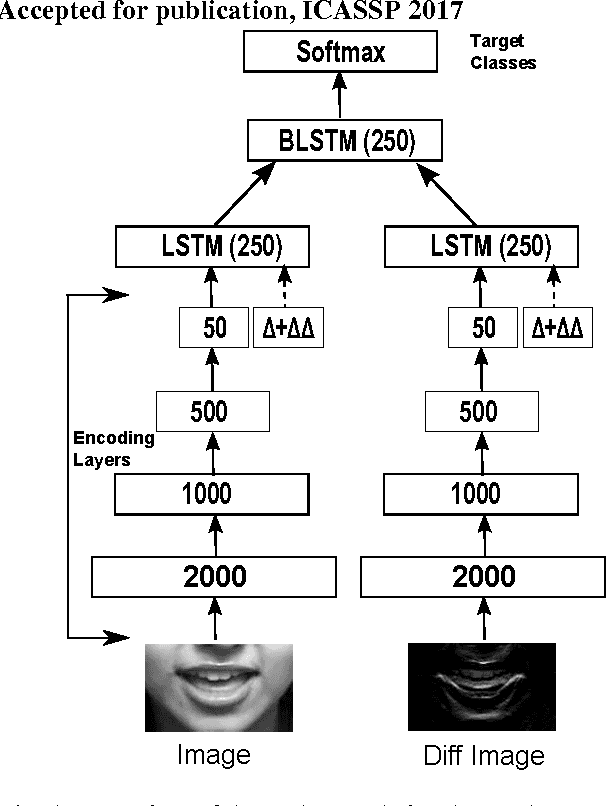

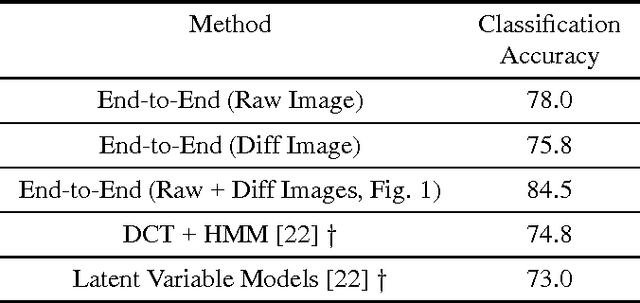

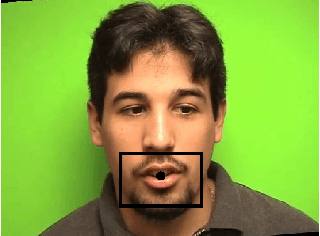

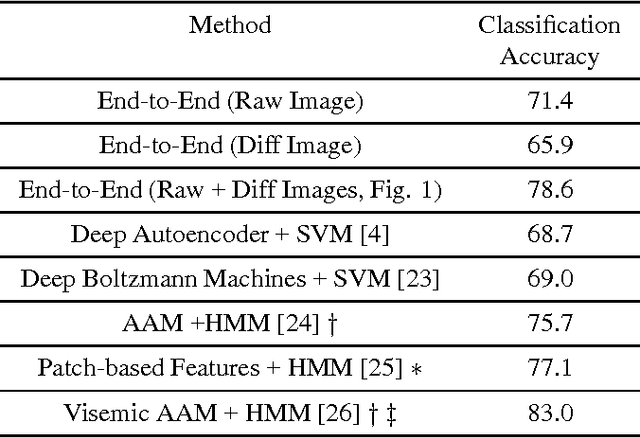

Abstract: Traditional visual speech recognition systems consist of two stages, feature extraction and classification. Recently, several deep learning approaches have been presented which automatically extract features from the mouth images and aim to replace the feature extraction stage. However, research on joint learning of features and classification remains limited. In addition, most existing methods require large amounts of data to achieve state-of-the-art performance and underperform otherwise. In this work, we present an end-to-end visual speech recognition system based on fully-connected layers and Long Short-Term Memory (LSTM) networks which is suitable for small-scale datasets. The model consists of two streams which extract features directly from the mouth and difference images, respectively. The temporal dynamics in each stream are modelled by a Bidirectional LSTM (BLSTM) and the fusion of the two streams takes place via another BLSTM. Absolute improvements of 0.6%, 3.4%, 3.9%, and 11.4% over the state-of-the-art are reported on the OuluVS2, CUAVE, AVLetters and AVLetters2 databases, respectively.
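The two input streams described in the abstract can be illustrated with a small sketch. It assumes that a "difference image" is the pixel-wise difference between consecutive mouth frames, which the abstract does not spell out; the function name `difference_images`, the toy clip, and the flattening step are hypothetical and only show how the two inputs might be aligned before being fed to separate encoders.

```python
import numpy as np

def difference_images(frames: np.ndarray) -> np.ndarray:
    """Return frame-to-frame differences for a clip of shape (T, H, W).

    Assumption: a difference image is frame[t] - frame[t-1].
    The result has shape (T-1, H, W).
    """
    # Cast first so that uint8 pixel values do not wrap around on subtraction.
    frames = frames.astype(np.float32)
    return frames[1:] - frames[:-1]

# Toy clip: 4 frames of 2x3 "mouth" pixels (hypothetical data).
clip = np.arange(4 * 2 * 3, dtype=np.uint8).reshape(4, 2, 3)
diffs = difference_images(clip)

# Align both streams to the same number of time steps and flatten each
# frame into a vector, as a fully-connected encoder would expect.
mouth_stream = clip[1:].reshape(len(diffs), -1).astype(np.float32)
diff_stream = diffs.reshape(len(diffs), -1)
```

In this sketch the first mouth frame is dropped so that both streams have the same length; other alignment choices (for example, padding the difference stream) are equally plausible.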

End-to-End Audiovisual Fusion with LSTMs

Sep 12, 2017

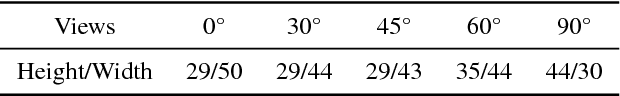

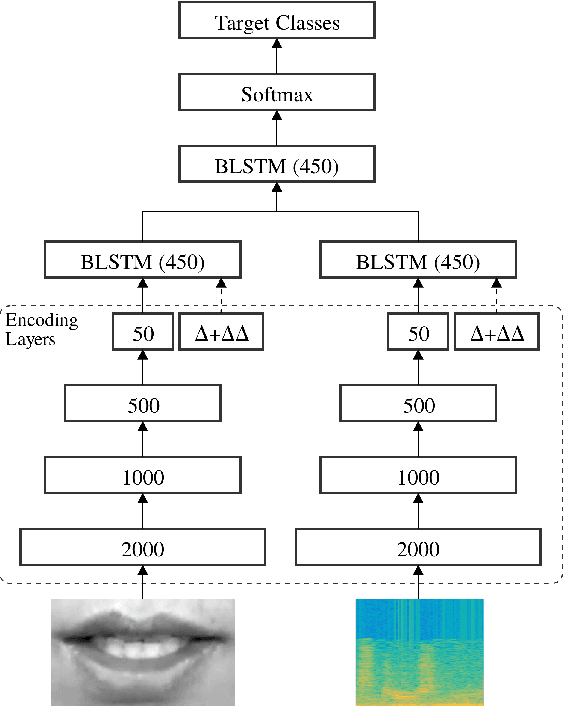

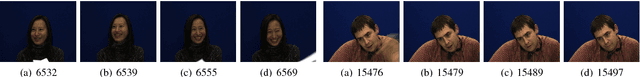

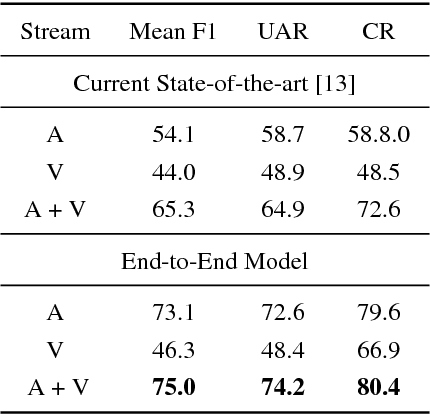

Abstract: Several end-to-end deep learning approaches have been recently presented which simultaneously extract visual features from the input images and perform visual speech classification. However, research on jointly extracting audio and visual features and performing classification is very limited. In this work, we present an end-to-end audiovisual model based on Bidirectional Long Short-Term Memory (BLSTM) networks. To the best of our knowledge, this is the first audiovisual fusion model which simultaneously learns to extract features directly from the pixels and spectrograms and perform classification of speech and nonlinguistic vocalisations. The model consists of multiple identical streams, one for each modality, which extract features directly from mouth regions and spectrograms. The temporal dynamics in each stream/modality are modelled by a BLSTM and the fusion of multiple streams/modalities takes place via another BLSTM. An absolute improvement of 1.9% in the mean F1 of 4 nonlinguistic vocalisations over audio-only classification is reported on the AVIC database. At the same time, the proposed end-to-end audiovisual fusion system improves the state-of-the-art performance on the AVIC database, leading to a 9.7% absolute increase in the mean F1 measure. We also perform audiovisual speech recognition experiments on the OuluVS2 database using different views of the mouth, from frontal to profile. The proposed audiovisual system significantly outperforms the audio-only model for all views when the acoustic noise is high.
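For readers unfamiliar with the metric, the mean F1 reported above can be read as the unweighted average of per-class F1 scores. The sketch below assumes that reading; the abstract does not state the exact averaging protocol, and the labels `"a"` and `"b"` are hypothetical stand-ins, not the AVIC classes.

```python
def f1_for_class(y_true, y_pred, label):
    """F1 score for one class, computed from true/false positives and negatives."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == label and p == label)
    fp = sum(1 for t, p in pairs if t != label and p == label)
    fn = sum(1 for t, p in pairs if t == label and p != label)
    denom = 2 * tp + fp + fn
    # Define F1 as 0 when the class never appears in labels or predictions.
    return 2 * tp / denom if denom else 0.0

def mean_f1(y_true, y_pred, labels):
    """Unweighted mean of per-class F1 scores (assumed averaging scheme)."""
    return sum(f1_for_class(y_true, y_pred, c) for c in labels) / len(labels)

# Hypothetical two-class example.
y_true = ["a", "a", "b", "b"]
y_pred = ["a", "b", "b", "b"]
score = mean_f1(y_true, y_pred, ["a", "b"])
```

An "absolute" improvement in this measure is a difference in percentage points between two such scores, not a relative change.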

End-To-End Visual Speech Recognition With LSTMs

Jan 20, 2017

Abstract: Traditional visual speech recognition systems consist of two stages, feature extraction and classification. Recently, several deep learning approaches have been presented which automatically extract features from the mouth images and aim to replace the feature extraction stage. However, research on joint learning of features and classification is very limited. In this work, we present an end-to-end visual speech recognition system based on Long Short-Term Memory (LSTM) networks. To the best of our knowledge, this is the first model which simultaneously learns to extract features directly from the pixels and perform classification, and which also achieves state-of-the-art performance in visual speech classification. The model consists of two streams which extract features directly from the mouth and difference images, respectively. The temporal dynamics in each stream are modelled by an LSTM and the fusion of the two streams takes place via a Bidirectional LSTM (BLSTM). An absolute improvement of 9.7% over the baseline is reported on the OuluVS2 database, and 1.5% on the CUAVE database when compared with other methods which use a similar visual front-end.
