Zhuangzi Li

R4-CGQA: Retrieval-based Vision Language Models for Computer Graphics Image Quality Assessment

Mar 11, 2026

Abstract:Immersive computer graphics (CG) rendering has become ubiquitous in modern daily life. However, comprehensively evaluating CG quality remains challenging for two reasons: first, existing CG datasets lack systematic descriptions of rendering quality; second, existing CG quality assessment methods cannot provide reasonable text-based explanations. To address these issues, we first identify six key perceptual dimensions of CG quality from the user perspective and construct a dataset of 3500 CG images with corresponding quality descriptions. Each description covers CG style, content, and perceived quality along the selected dimensions. Furthermore, we use a subset of the dataset to build several question-answer benchmarks based on the descriptions in order to evaluate the responses of existing Vision Language Models (VLMs). We find that current VLMs are not sufficiently accurate in judging fine-grained CG quality, but that descriptions of visually similar images can significantly improve a VLM's understanding of a given CG image. Motivated by this observation, we adopt retrieval-augmented generation and propose a two-stream retrieval framework that effectively enhances the CG quality assessment capabilities of VLMs. Experiments on several representative VLMs demonstrate that our method substantially improves their performance on CG quality assessment.

MUGSQA: Novel Multi-Uncertainty-Based Gaussian Splatting Quality Assessment Method, Dataset, and Benchmarks

Nov 10, 2025

Abstract:Gaussian Splatting (GS) has recently emerged as a promising technique for 3D object reconstruction, delivering high-quality rendering results with significantly improved reconstruction speed. As variants continue to appear, assessing the perceptual quality of 3D objects reconstructed with different GS-based methods remains an open challenge. To address this issue, we first propose a unified multi-distance subjective quality assessment method that closely mimics how people view objects reconstructed with GS-based methods in actual applications, and thereby captures perceptual experience more faithfully. Based on this method, we construct a novel GS quality assessment dataset named MUGSQA, which accounts for multiple uncertainties in the input data. These uncertainties include the quantity and resolution of input views, the view distance, and the accuracy of the initial point cloud. Moreover, we construct two benchmarks: one to evaluate the robustness of various GS-based reconstruction methods under multiple uncertainties, and the other to evaluate the performance of existing quality assessment metrics. Our dataset and benchmark code will be released soon.

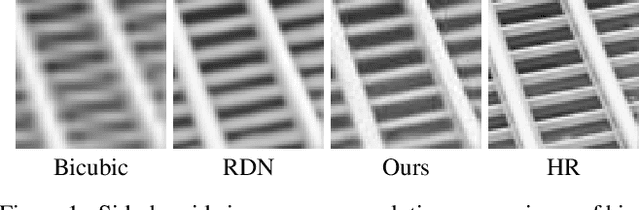

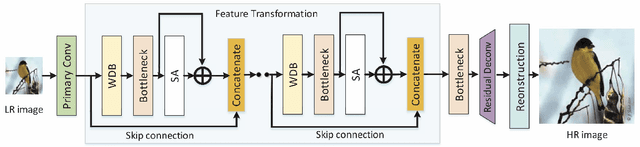

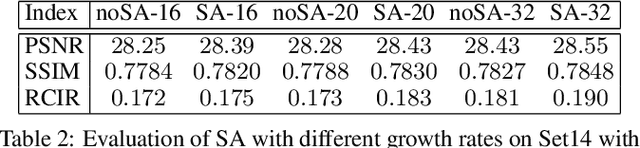

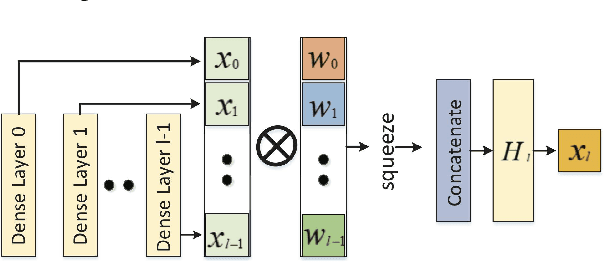

Image Super-Resolution Using Attention Based DenseNet with Residual Deconvolution

Jul 03, 2019

Abstract:Image super-resolution is a challenging task and has attracted increasing attention in research and industrial communities. In this paper, we propose a novel end-to-end Attention-based DenseNet with Residual Deconvolution, named ADRD. In ADRD, we propose a weighted dense block, in which the current layer receives weighted features from all previous levels, to adaptively capture valuable features in the dense layers. We also present a novel spatial attention module that generates a group of attentive maps to emphasize informative regions. In addition, we design an innovative strategy to upsample residual information via the deconvolution layer, so that high-frequency details can be accurately upsampled. Extensive experiments conducted on publicly available datasets demonstrate the promising performance of the proposed ADRD against state-of-the-art methods, both quantitatively and qualitatively.
