Zhe Xiong

Bi-modality Images Transfer with a Discrete Process Matching Method

Sep 06, 2024

Abstract: Medical image synthesis has recently grown in popularity alongside the rapid development of generative models. It aims to generate an unacquired image modality, often from other observed data modalities. Synthesized images can be used for clinical diagnostic assistance, data augmentation for model training and validation, or image quality improvement. Meanwhile, flow-based models are among the successful generative models because of their ability to generate realistic, high-quality synthetic images. However, most flow-based models must compute the evolution steps of a flow ordinary differential equation (ODE) during the transfer process, so their performance is significantly limited by heavy computation time due to the large number of time iterations. In this paper, we propose a novel flow-based model, Discrete Process Matching (DPM), to accomplish bi-modality image transfer tasks. Unlike other flow-matching-based models, we propose to utilize both the forward and backward ODE flows and to enhance consistency on the intermediate images at a few discrete time steps, resulting in a transfer process with far fewer iteration steps while maintaining high-quality generation for both modalities. Our experiments on three datasets of MRI T1/T2 and CT/MRI demonstrate that DPM outperforms other state-of-the-art flow-based methods for bi-modality image synthesis, achieving higher image quality at lower computational cost.
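
The cost issue raised in the abstract above (many time iterations when evolving a flow ODE) can be illustrated with a minimal sketch of fixed-step Euler integration of dx/dt = v(x, t). This is not the DPM model: `euler_flow`, `toy_velocity`, and the step counts are hypothetical names and values chosen for illustration, and the toy velocity field stands in for a learned network. In a learned flow, each step would cost one network evaluation.

```python
import numpy as np

def euler_flow(x0, velocity, n_steps):
    """Integrate dx/dt = velocity(x, t) from t=0 to t=1 with fixed-step Euler.

    Returns the final state and the number of velocity evaluations used.
    """
    x = np.asarray(x0, dtype=float).copy()
    dt = 1.0 / n_steps
    n_evals = 0
    for k in range(n_steps):
        t = k * dt
        x = x + dt * velocity(x, t)  # one Euler step
        n_evals += 1
    return x, n_evals

def toy_velocity(x, t):
    # Hypothetical stand-in for a learned velocity field.
    # For this field the exact solution is x(1) = x0 * exp(-1).
    return -x

x0 = np.array([1.0, -2.0, 0.5])
exact = x0 * np.exp(-1.0)
for n in (4, 20, 100):
    x1, n_evals = euler_flow(x0, toy_velocity, n)
    err = np.max(np.abs(x1 - exact))
    print(f"steps={n:3d}  velocity evaluations={n_evals:3d}  max error={err:.5f}")
```

In this toy setting the error shrinks as the step count grows, while the number of velocity evaluations grows linearly with it, which is the trade-off that motivates methods using only a few discrete time steps.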

SyMOT-Flow: Learning optimal transport flow for two arbitrary distributions with maximum mean discrepancy

Aug 26, 2023

Abstract: Finding a transformation between two unknown probability distributions from samples is crucial for modeling complex data distributions and performing tasks such as density estimation, sample generation, and statistical inference. One powerful framework for such transformations is the normalizing flow, which transforms an unknown distribution into a standard normal distribution using an invertible network. In this paper, we introduce a novel model called SyMOT-Flow that trains an invertible transformation by minimizing the symmetric maximum mean discrepancy between samples from two unknown distributions, and we incorporate an optimal transport cost as regularization to obtain a short-distance and interpretable transformation. The resulting transformation leads to more stable and accurate sample generation. We establish several theoretical results for the proposed model and demonstrate its effectiveness with low-dimensional illustrative examples as well as high-dimensional generative samples obtained through the forward and reverse flows.
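
As a rough illustration of the kind of objective the abstract above describes, the sketch below computes a biased estimate of the squared maximum mean discrepancy (MMD) with a Gaussian kernel, applies it in both directions, and adds a squared-distance transport penalty. The exact loss, kernel, and weighting used by SyMOT-Flow are not given in the abstract, so `symmetric_mmd_ot_loss`, the Gaussian kernel, the bandwidth, and the weight `lam` are all assumptions, and the invertible network is replaced by a plain pair of functions.

```python
import numpy as np

def gaussian_kernel(a, b, bandwidth=1.0):
    """Pairwise Gaussian kernel matrix between rows of a and rows of b."""
    sq = (np.sum(a**2, axis=1)[:, None]
          + np.sum(b**2, axis=1)[None, :]
          - 2.0 * a @ b.T)
    sq = np.maximum(sq, 0.0)  # guard against tiny negative values from rounding
    return np.exp(-sq / (2.0 * bandwidth**2))

def mmd2(x, y, bandwidth=1.0):
    """Biased estimate of squared MMD between sample sets x and y."""
    return (gaussian_kernel(x, x, bandwidth).mean()
            + gaussian_kernel(y, y, bandwidth).mean()
            - 2.0 * gaussian_kernel(x, y, bandwidth).mean())

def symmetric_mmd_ot_loss(x, y, forward, inverse, lam=0.1, bandwidth=1.0):
    """Assumed form: MMD in both directions plus a squared-distance transport cost."""
    tx = forward(x)  # samples of x pushed toward the y distribution
    ty = inverse(y)  # samples of y pulled back toward the x distribution
    mmd_term = mmd2(tx, y, bandwidth) + mmd2(ty, x, bandwidth)
    ot_term = (np.mean(np.sum((tx - x)**2, axis=1))
               + np.mean(np.sum((ty - y)**2, axis=1)))
    return mmd_term + lam * ot_term

rng = np.random.default_rng(0)
x = rng.normal(size=(200, 2))
y = rng.normal(size=(200, 2)) + 2.0

# Hypothetical invertible map: a pure shift and its inverse.
shift = lambda a: a + 2.0
unshift = lambda b: b - 2.0
identity = lambda a: a

print("loss with identity map:", symmetric_mmd_ot_loss(x, y, identity, identity))
print("loss with shift map:   ", symmetric_mmd_ot_loss(x, y, shift, unshift))
```

The transport term penalizes maps that move samples farther than needed, which is one way to read the abstract's "short-distance" transformation.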

Topic-aware chatbot using Recurrent Neural Networks and Nonnegative Matrix Factorization

Dec 04, 2019

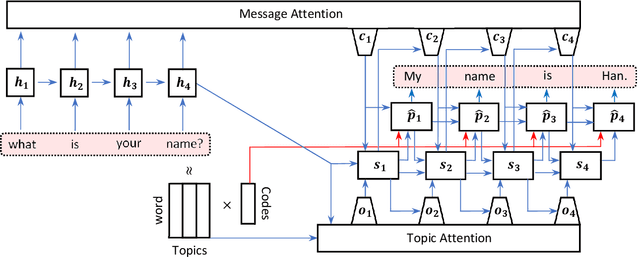

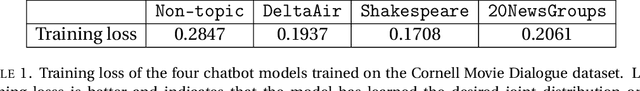

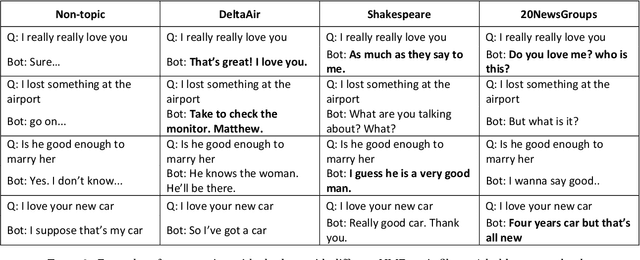

Abstract: We propose a novel model for a topic-aware chatbot by combining the traditional Recurrent Neural Network (RNN) encoder-decoder model with a topic attention layer based on Nonnegative Matrix Factorization (NMF). After learning topic vectors from an auxiliary text corpus via NMF, the decoder is trained so that it is more likely to sample response words from the most correlated topic vectors. One of the main advantages of our architecture is that the user can easily switch the NMF-learned topic vectors so that the chatbot obtains the desired topic-awareness. We demonstrate our model by training on a single conversational data set, which is then augmented with topic matrices learned from different auxiliary data sets. We show not only that our topic-aware chatbot outperforms its non-topic counterpart, but also that each topic-aware model gives the most qualitatively and contextually relevant answer depending on the topic of the question.
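
The topic-learning step mentioned in the abstract above can be sketched with a small NMF example. The abstract does not say which NMF algorithm is used, so the standard multiplicative-update rule for the Frobenius objective is assumed here; `nmf_multiplicative`, the toy vocabulary, and the count matrix are hypothetical. The RNN encoder-decoder and the topic attention layer are not reproduced.

```python
import numpy as np

def nmf_multiplicative(X, n_topics, n_iter=200, seed=0, eps=1e-10):
    """Factorize a nonnegative matrix X (words x documents) as X ~ W @ H.

    Columns of W act as topic vectors over the vocabulary; H holds the
    topic weights of each document. Uses multiplicative updates.
    """
    rng = np.random.default_rng(seed)
    n_words, n_docs = X.shape
    W = rng.random((n_words, n_topics)) + eps
    H = rng.random((n_topics, n_docs)) + eps
    for _ in range(n_iter):
        H *= (W.T @ X) / (W.T @ W @ H + eps)  # update topic weights
        W *= (X @ H.T) / (W @ H @ H.T + eps)  # update topic vectors
    return W, H

# Toy word-by-document count matrix (rows: words, columns: documents).
vocab = ["goal", "match", "team", "stock", "market", "price"]
X = np.array([
    [3, 2, 0, 0],
    [2, 3, 0, 0],
    [1, 2, 0, 1],
    [0, 0, 3, 2],
    [0, 0, 2, 3],
    [0, 1, 2, 2],
], dtype=float)

W, H = nmf_multiplicative(X, n_topics=2)
for k in range(W.shape[1]):
    top = np.argsort(W[:, k])[::-1][:3]  # indices of the three heaviest words
    print(f"topic {k}:", [vocab[i] for i in top])
```

Swapping in a different auxiliary corpus would only change `X`, and therefore the learned `W`, which matches the abstract's point that the topic vectors can be switched independently of the conversational model.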
