Yunfei Ye

Unified Structural-Hydrodynamic Modeling of Underwater Underactuated Mechanisms and Soft Robots

Mar 09, 2026

Abstract:Underwater robots are widely deployed for ocean exploration and manipulation. Underactuated mechanisms are particularly advantageous in aquatic environments, as reducing actuator count lowers the risk of motor leakage while introducing inherent mechanical compliance. However, accurate modeling of underwater underactuated and soft robotic systems remains challenging because it requires identifying a high-dimensional set of internal structural and external hydrodynamic parameters. In this work, we propose a trajectory-driven global optimization framework for unified structural-hydrodynamic modeling of underwater multibody systems. Inspired by the Covariance Matrix Adaptation Evolution Strategy (CMA-ES), the proposed approach simultaneously identifies coupled internal elastic, damping, and distributed hydrodynamic parameters through trajectory-level matching between simulation and experimental motion. This enables high-fidelity reproduction of both underactuated mechanisms and compliant soft robotic systems in underwater environments. We first validate the framework on a link-by-link underactuated multibody mechanism, demonstrating accurate identification of distributed hydrodynamic coefficients, with a normalized end-effector position error below 5% across multiple trajectories, varying initial conditions, and both active-passive and fully passive configurations. The identified modeling strategy is then transferred to a single octopus-inspired soft arm, showing strong real-to-sim consistency without manual retuning. Finally, eight identified arms are assembled into a swimming octopus robot, where the unified parameter set enables realistic whole-body behavior without additional parameter calibration. These results demonstrate the scalability and transferability of the proposed structural-hydrodynamic modeling framework across underwater underactuated and soft robotic systems.
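
The abstract gives no implementation details, so the following is only a minimal sketch of the general idea of trajectory-level parameter identification. It assumes a toy unit-mass spring-damper in place of the underwater multibody model, and a simplified elite-averaging evolution strategy in place of the CMA-ES-inspired optimizer (there is no covariance adaptation here). The names `simulate`, `trajectory_loss`, and `identify`, and all numeric values, are hypothetical.

```python
import random


def simulate(k, c, x0=1.0, v0=0.0, dt=0.01, steps=300):
    """Integrate x'' = -k*x - c*x' (unit mass) with semi-implicit Euler.

    Toy stand-in for a simulator: k plays the role of an elastic
    parameter and c of a damping parameter.
    """
    x, v = x0, v0
    trajectory = []
    for _ in range(steps):
        v += (-k * x - c * v) * dt
        x += v * dt
        trajectory.append(x)
    return trajectory


def trajectory_loss(params, reference):
    """Mean squared error between a simulated and a reference trajectory."""
    k, c = params
    simulated = simulate(k, c)
    return sum((s - r) ** 2 for s, r in zip(simulated, reference)) / len(reference)


def identify(reference, mean=(1.0, 0.1), sigma=1.0, population=20,
             elite=5, generations=60, seed=0):
    """Simplified evolution strategy (assumption: not full CMA-ES).

    Each generation samples candidates around the current mean, keeps the
    best `elite` candidates, moves the mean to their average, and shrinks
    the isotropic step size.
    """
    rng = random.Random(seed)
    mean = list(mean)
    best_loss, best_params = trajectory_loss(tuple(mean), reference), tuple(mean)
    for _ in range(generations):
        scored = []
        for _ in range(population):
            # Clamp at zero so stiffness and damping stay non-negative.
            candidate = tuple(max(0.0, m + sigma * rng.gauss(0.0, 1.0)) for m in mean)
            scored.append((trajectory_loss(candidate, reference), candidate))
        scored.sort(key=lambda item: item[0])
        if scored[0][0] < best_loss:
            best_loss, best_params = scored[0]
        elites = [params for _, params in scored[:elite]]
        mean = [sum(e[i] for e in elites) / elite for i in range(len(mean))]
        sigma *= 0.9  # fixed geometric decay instead of adaptive step-size control
    return best_params, best_loss


if __name__ == "__main__":
    # Synthetic "experimental" trajectory generated with known parameters.
    reference = simulate(4.0, 0.5)
    params, loss = identify(reference)
    print("estimated (k, c):", params, "loss:", loss)
```

In this sketch the "experimental" trajectory is synthetic, so the recovered parameters can be compared against the values used to generate it. A real identification would replace `simulate` with the full structural-hydrodynamic simulator and `reference` with measured motion.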

Multi-distance Support Matrix Machines

Jul 02, 2018

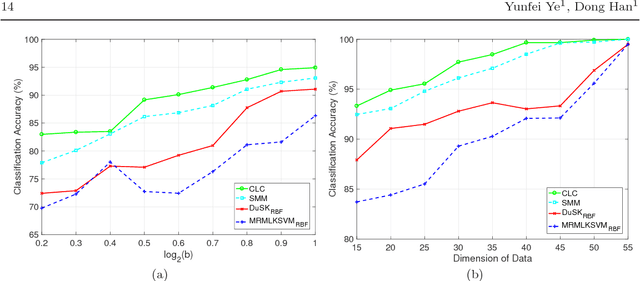

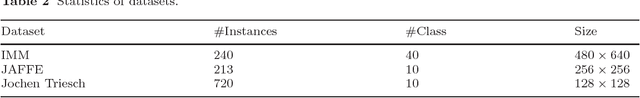

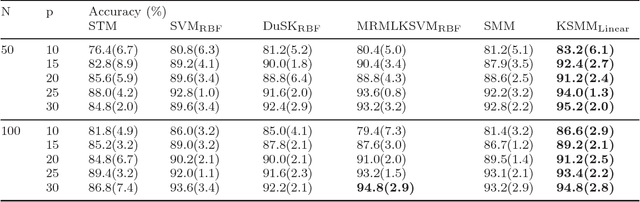

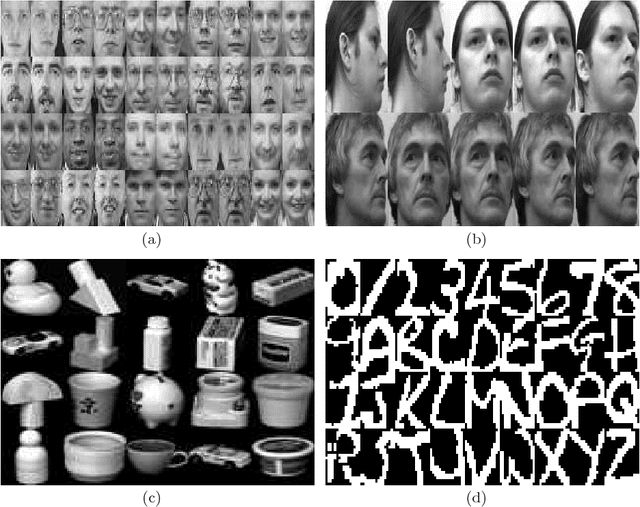

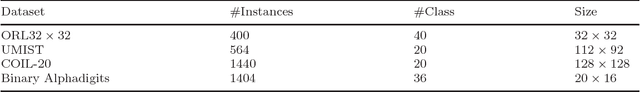

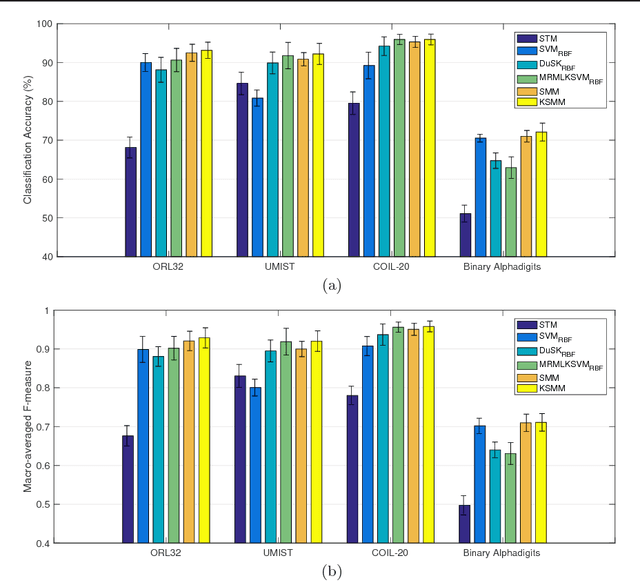

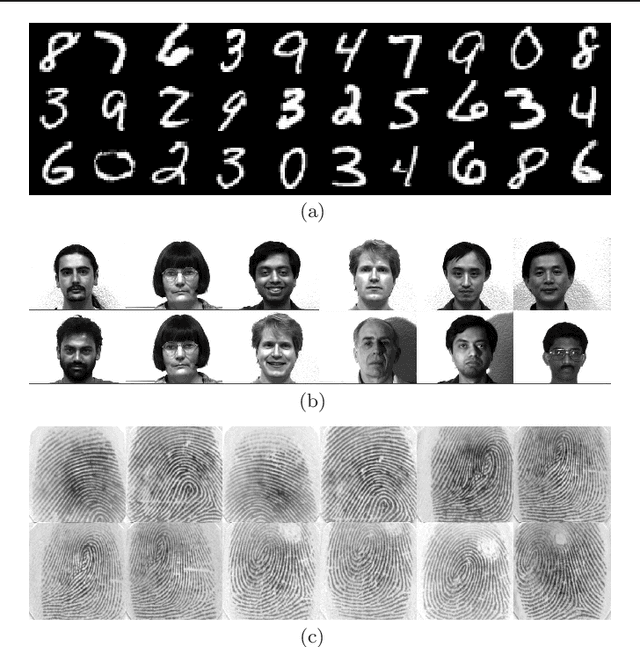

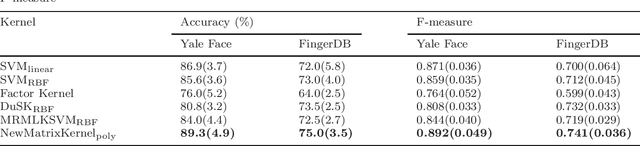

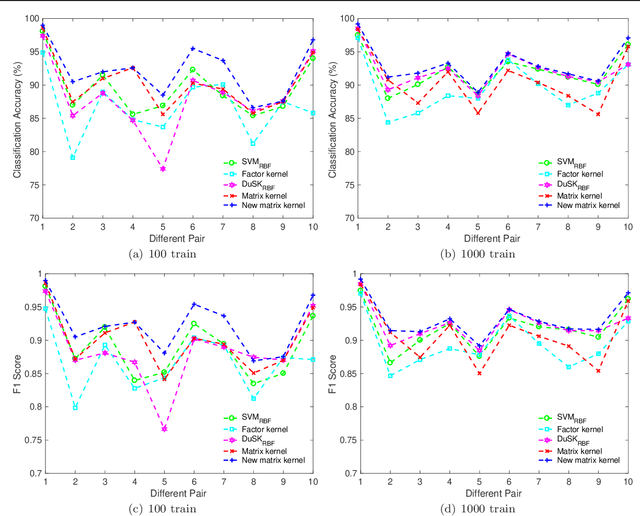

Abstract:Real-world data such as digital images, MRI scans and electroencephalography signals are naturally represented as matrices with structural information. Most existing classifiers aim to capture these structures by regularizing the regression matrix to be low-rank or sparse. Other methods introduce factorization techniques to explore nonlinear relationships of matrix data in kernel space. In this paper, we propose a multi-distance support matrix machine (MDSMM), which provides a principled way of solving matrix classification problems. The multi-distance is introduced to capture the correlation within matrix data by means of intrinsic information in the rows and columns of the input data. A complex hyperplane is established upon these values to separate distinct classes. We further study the generalization bounds for i.i.d. and non-i.i.d. processes based on both SVM and SMM classifiers. For typical hypothesis classes where matrix norms are constrained, MDSMM achieves a faster learning rate than traditional classifiers. We also provide a more general approach for samples without prior knowledge. We demonstrate the merits of the proposed method by conducting extensive experiments on both a simulation study and a number of real-world datasets.

A Nonlinear Kernel Support Matrix Machine for Matrix Learning

Jan 04, 2018

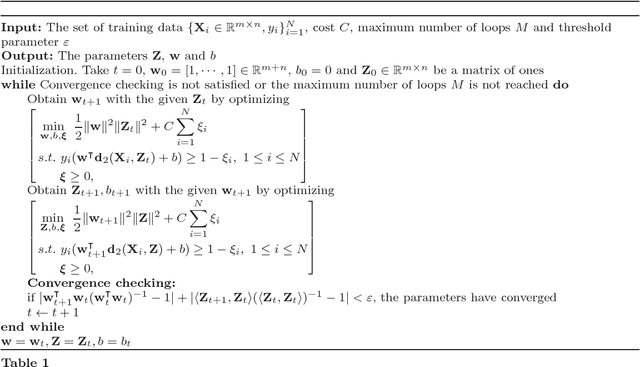

Abstract:In many problems of supervised tensor learning (STL), real-world data such as face images or MRI scans are naturally represented as matrices, which are also called second-order tensors. Most existing classifiers based on tensor representation, such as the support tensor machine (STM), must be solved iteratively, which is time-consuming and may suffer from local minima. In this paper, we present a kernel support matrix machine (KSMM) to perform supervised learning when data are represented as matrices. KSMM is a general framework for the construction of a matrix-based hyperplane to exploit structural information. We analyze a unifying optimization problem for which we propose an asymptotically convergent algorithm. Theoretical analysis for the generalization bounds is derived based on Rademacher complexity with respect to a probability distribution. We demonstrate the merits of the proposed method by extensive experiments on both a simulation study and a number of real-world datasets from a variety of application domains.

The Matrix Hilbert Space and Its Application to Matrix Learning

Nov 14, 2017

Abstract:Theoretical studies have proven that the Hilbert space has remarkable performance in many fields of application. Frames in tensor products of Hilbert spaces were introduced to generalize the inner product to high-order tensors. However, these techniques require tensor decomposition, which can lead to loss of information, and determining the rank of a tensor is an NP-hard problem. Here, we present a new framework, namely the matrix Hilbert space, which defines a matrix inner product space when data observations are represented as matrices. We preserve the structure of the initial data, and the multi-way correlation among them is captured in the process. In addition, we extend the reproducing kernel Hilbert space (RKHS) to the reproducing kernel matrix Hilbert space (RKMHS) and propose an equivalent condition for the space in terms of a certain kernel function. A new family of kernels is introduced in our framework to apply the Support Tensor Machine (STM) classifier, and comparative experiments are performed on a number of real-world datasets to support our contributions.
