Yuan An

Human-in-the-Loop and AI: Crowdsourcing Metadata Vocabulary for Materials Science

Dec 10, 2025

Abstract:Metadata vocabularies are essential for advancing FAIR and FARR data principles, but their development is constrained by limited human resources and inconsistent standardization practices. This paper introduces MatSci-YAMZ, a platform that integrates artificial intelligence (AI) and human-in-the-loop (HILT), including crowdsourcing, to support metadata vocabulary development. The paper reports on a proof-of-concept use case evaluating the AI-HILT model in materials science, a highly interdisciplinary domain. Six (6) participants affiliated with the NSF Institute for Data-Driven Dynamical Design (ID4) engaged with the MatSci-YAMZ platform over several weeks, contributing term definitions and providing examples to prompt the refinement of AI-generated definitions. Nineteen (19) AI-generated definitions were successfully created, with iterative feedback loops demonstrating the feasibility of AI-HILT refinement. Findings confirm the feasibility of the AI-HILT model, highlighting 1) a successful proof of concept, 2) alignment with FAIR and open-science principles, 3) a research protocol to guide future studies, and 4) the potential for scalability across domains. Overall, MatSci-YAMZ's underlying model has the capacity to enhance semantic transparency and reduce the time required for consensus building and metadata vocabulary development.

Rate-Distortion Guided Knowledge Graph Construction from Lecture Notes Using Gromov-Wasserstein Optimal Transport

Nov 18, 2025

Abstract:Task-oriented knowledge graphs (KGs) enable AI-powered learning assistant systems to automatically generate high-quality multiple-choice questions (MCQs). Yet converting unstructured educational materials, such as lecture notes and slides, into KGs that capture key pedagogical content remains difficult. We propose a framework for knowledge graph construction and refinement grounded in rate-distortion (RD) theory and optimal transport geometry. In the framework, lecture content is modeled as a metric-measure space, capturing semantic and relational structure, while candidate KGs are aligned using Fused Gromov-Wasserstein (FGW) couplings to quantify semantic distortion. The rate term, expressed via the size of the KG, reflects complexity and compactness. Refinement operators (add, merge, split, remove, rewire) minimize the rate-distortion Lagrangian, yielding compact, information-preserving KGs. Our prototype applied to data science lectures yields interpretable RD curves and shows that MCQs generated from refined KGs consistently surpass those from raw notes on fifteen quality criteria. This study establishes a principled foundation for information-theoretic KG optimization in personalized and AI-assisted education.
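As a reading aid, the objective described in this abstract can be written schematically as follows. The symbols are assumptions introduced here, not notation from the paper: $X$ stands for the lecture content as a metric-measure space, $G$ for a candidate KG, $R(G)$ for a rate term based on the size of the KG, $D_{\mathrm{FGW}}(X, G)$ for the distortion measured through an FGW coupling, and $\beta > 0$ for a trade-off weight. The paper's exact formulation may differ.

```latex
\[
  \mathcal{L}_{\beta}(G) \;=\; R(G) \;+\; \beta \, D_{\mathrm{FGW}}(X, G),
  \qquad
  G' \;=\; \operatorname*{arg\,min}_{G'' \in \{\mathrm{add},\, \mathrm{merge},\, \mathrm{split},\, \mathrm{remove},\, \mathrm{rewire}\}(G)} \mathcal{L}_{\beta}(G'')
\]
```

Under this reading, each refinement step applies whichever operator most reduces the Lagrangian, and sweeping $\beta$ traces out a rate-distortion curve.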

Enhancing Semantic Interoperability Across Materials Science With HIVE4MAT

Nov 01, 2024

Abstract:HIVE4MAT is a linked data interactive application for navigating ontologies of value to materials science. HIVE enables automatic indexing of textual resources with standardized terminology. This article presents the motivation underlying HIVE4MAT, explains the system architecture, reports on two evaluations, and discusses future plans.

Is the Lecture Engaging for Learning? Lecture Voice Sentiment Analysis for Knowledge Graph-Supported Intelligent Lecturing Assistant (ILA) System

Aug 20, 2024

Abstract:This paper introduces an intelligent lecturing assistant (ILA) system that utilizes a knowledge graph to represent course content and optimal pedagogical strategies. The system is designed to support instructors in enhancing student learning through real-time analysis of voice, content, and teaching methods. As an initial investigation, we present a case study on lecture voice sentiment analysis, in which we developed a training set comprising over 3,000 one-minute lecture voice clips. Each clip was manually labeled as either engaging or non-engaging. Utilizing this dataset, we constructed and evaluated several classification models based on a variety of features extracted from the voice clips. The results demonstrate promising performance, achieving an F1-score of 90% for boring lectures on an independent set of over 800 test voice clips. This case study lays the groundwork for the development of a more sophisticated model that will integrate content analysis and pedagogical practices. Our ultimate goal is to aid instructors in teaching more engagingly and effectively by leveraging modern artificial intelligence techniques.

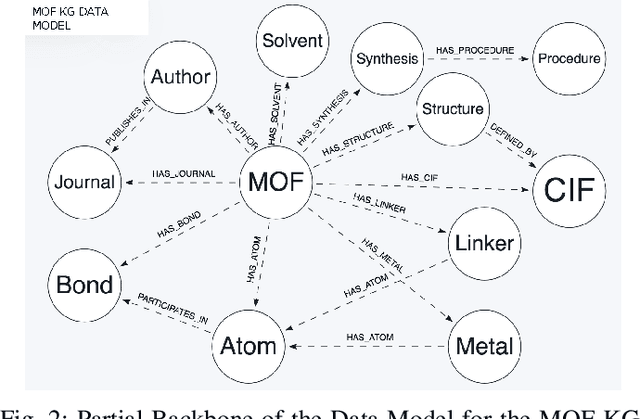

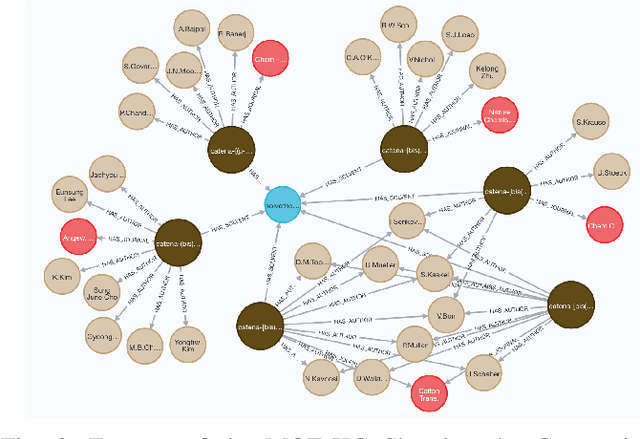

Knowledge Graph Question Answering for Materials Science (KGQA4MAT): Developing Natural Language Interface for Metal-Organic Frameworks Knowledge Graph (MOF-KG)

Sep 20, 2023

Abstract:We present a comprehensive benchmark dataset for Knowledge Graph Question Answering in Materials Science (KGQA4MAT), with a focus on metal-organic frameworks (MOFs). A knowledge graph for metal-organic frameworks (MOF-KG) has been constructed by integrating structured databases and knowledge extracted from the literature. To enhance MOF-KG accessibility for domain experts, we aim to develop a natural language interface for querying the knowledge graph. We have developed a benchmark comprised of 161 complex questions involving comparison, aggregation, and complicated graph structures. Each question is rephrased in three additional variations, resulting in 644 questions and 161 KG queries. To evaluate the benchmark, we have developed a systematic approach for utilizing ChatGPT to translate natural language questions into formal KG queries. We also apply the approach to the well-known QALD-9 dataset, demonstrating ChatGPT's potential in addressing KGQA issues for different platforms and query languages. The benchmark and the proposed approach aim to stimulate further research and development of user-friendly and efficient interfaces for querying domain-specific materials science knowledge graphs, thereby accelerating the discovery of novel materials.

Repurposing Knowledge Graph Embeddings for Triple Representation via Weak Supervision

Aug 22, 2022

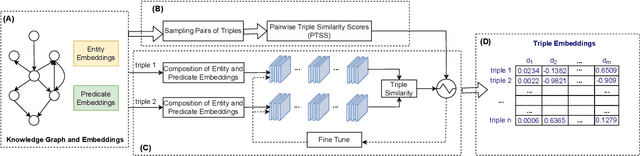

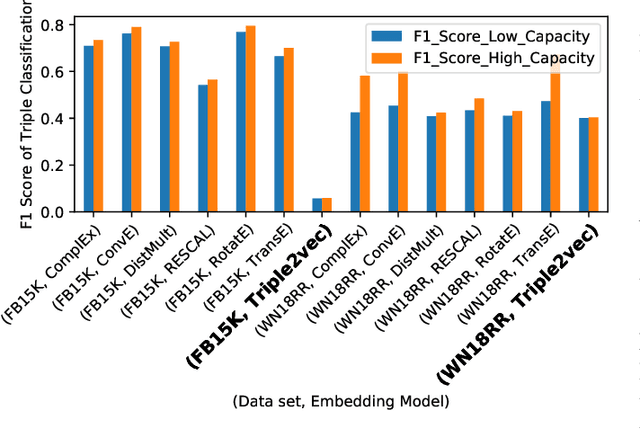

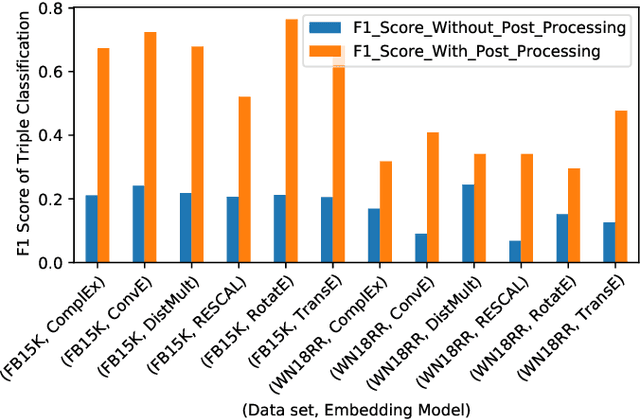

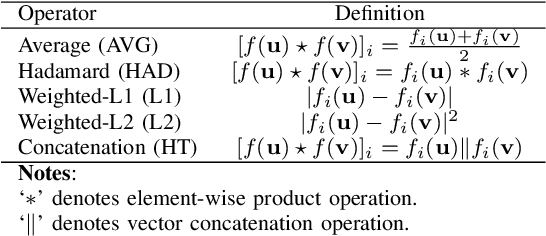

Abstract:The majority of knowledge graph embedding techniques treat entities and predicates as separate embedding matrices, using aggregation functions to build a representation of the input triple. However, these aggregations are lossy, i.e. they do not capture the semantics of the original triples, such as information contained in the predicates. To combat these shortcomings, current methods learn triple embeddings from scratch without utilizing entity and predicate embeddings from pre-trained models. In this paper, we design a novel fine-tuning approach for learning triple embeddings by creating weak supervision signals from pre-trained knowledge graph embeddings. We develop a method for automatically sampling triples from a knowledge graph and estimating their pairwise similarities from pre-trained embedding models. These pairwise similarity scores are then fed to a Siamese-like neural architecture to fine-tune triple representations. We evaluate the proposed method on two widely studied knowledge graphs and show consistent improvement over other state-of-the-art triple embedding methods on triple classification and triple clustering tasks.

Exploring Wasserstein Distance across Concept Embeddings for Ontology Matching

Jul 22, 2022

Abstract:Measuring the distance between ontological elements is a fundamental component of any matching solution. String-based distance metrics relying on discrete symbol operations are notorious for shallow syntactic matching. In this study, we explore the Wasserstein distance metric across ontology concept embeddings. The Wasserstein distance metric targets a continuous space that can incorporate linguistic, structural, and logical information. In our exploratory study, we use a pre-trained word embedding system, fastText, to embed ontology element labels. We examine the effectiveness of Wasserstein distance for measuring similarity between (blocks of) ontologies, discovering matchings between individual elements, and refining matchings by incorporating contextual information. Our experiments with the OAEI conference track and MSE benchmarks achieve competitive results compared to leading systems such as AML and LogMap. Results indicate a promising trajectory for the application of optimal transport and Wasserstein distance to improve embedding-based unsupervised ontology matching.
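To make the idea of a Wasserstein distance between two sets of concept embeddings concrete, here is a minimal sketch and not the method used in the paper. It assumes two equal-size sets of points with uniform weights, in which case an optimal transport plan can be taken to be a one-to-one matching, and it finds that matching by brute force, so it is only practical for a handful of points. The function name `wasserstein_uniform` and the toy 2-D vectors are hypothetical; real label embeddings (for example from fastText) would be high-dimensional and would call for a proper optimal transport solver.

```python
from itertools import permutations
from math import dist


def wasserstein_uniform(xs, ys):
    """Exact 1-Wasserstein distance between two equal-size point sets
    with uniform weights, plus the matching that attains it.

    Brute force over all permutations: illustration only, O(n!) time.
    """
    if len(xs) != len(ys) or not xs:
        raise ValueError("point sets must be non-empty and of equal size")
    n = len(xs)
    # Pairwise Euclidean ground costs between the two sets.
    cost = [[dist(x, y) for y in ys] for x in xs]
    # With uniform weights and equal sizes, some permutation is optimal.
    best = min(
        permutations(range(n)),
        key=lambda p: sum(cost[i][p[i]] for i in range(n)),
    )
    total = sum(cost[i][best[i]] for i in range(n))
    return total / n, list(enumerate(best))


# Toy 2-D "embeddings" with made-up coordinates (not fastText output).
onto_a = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
onto_b = [(0.0, 0.1), (1.0, 0.1), (0.0, 1.1)]

distance, matching = wasserstein_uniform(onto_a, onto_b)
print(distance, matching)
```

The returned matching pairs each element of the first set with its transport partner in the second, which is one simple way to read candidate element-level correspondences off a transport plan.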

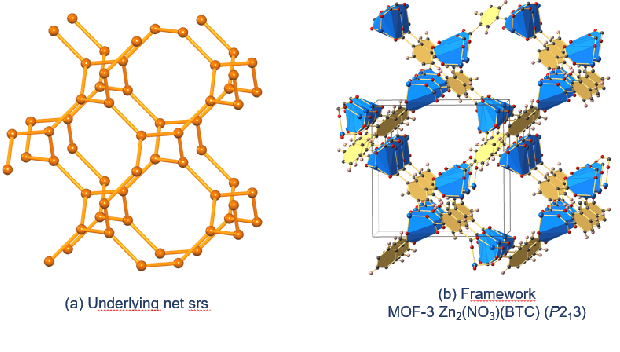

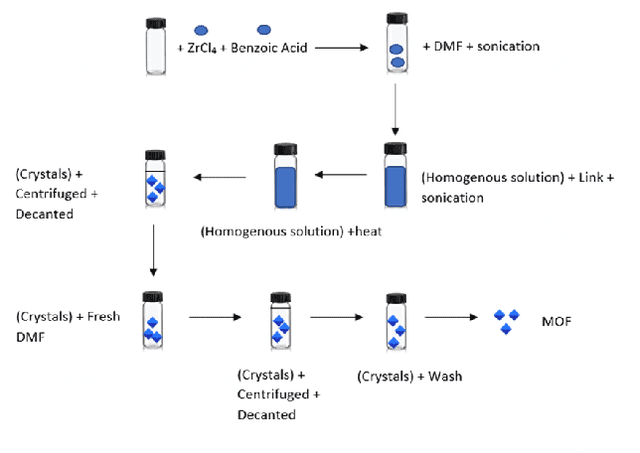

Building Open Knowledge Graph for Metal-Organic Frameworks (MOF-KG): Challenges and Case Studies

Jul 10, 2022

Abstract:Metal-Organic Frameworks (MOFs) are a class of modular, porous crystalline materials that have great potential to revolutionize applications such as gas storage, molecular separations, chemical sensing, catalysis, and drug delivery. The Cambridge Structural Database (CSD) reports 10,636 synthesized MOF crystals and, in addition, contains ca. 114,373 MOF-like structures. The sheer number of synthesized (plus potentially synthesizable) MOF structures requires researchers to pursue computational techniques to screen and isolate MOF candidates. In this demo paper, we describe our effort on leveraging knowledge graph methods to facilitate MOF prediction, discovery, and synthesis. We present challenges and case studies about (1) construction of a MOF knowledge graph (MOF-KG) from structured and unstructured sources and (2) leveraging the MOF-KG for discovery of new or missing knowledge.

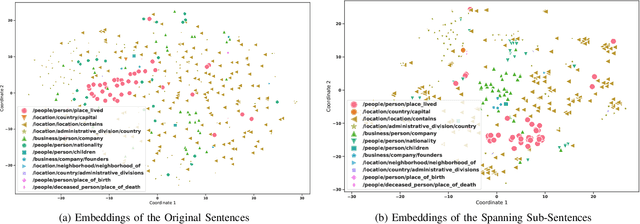

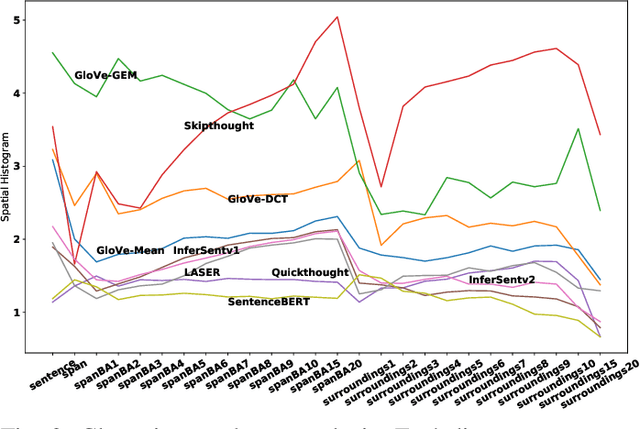

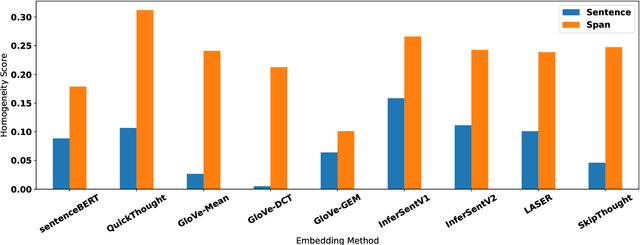

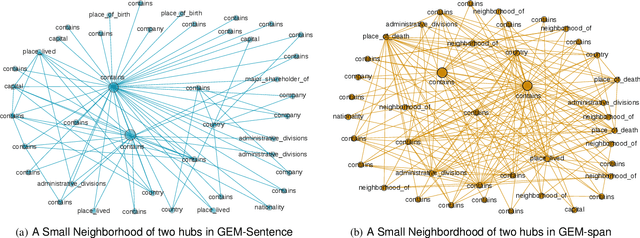

Clustering and Network Analysis for the Embedding Spaces of Sentences and Sub-Sentences

Oct 02, 2021

Abstract:Sentence embedding methods offer a powerful approach for working with short textual constructs or sequences of words. By representing sentences as dense numerical vectors, many natural language processing (NLP) applications have improved their performance. However, relatively little is understood about the latent structure of sentence embeddings. Specifically, research has not addressed whether the length and structure of sentences impact the sentence embedding space and topology. This paper reports research on a set of comprehensive clustering and network analyses targeting sentence and sub-sentence embedding spaces. Results show that one method generates the most clusterable embeddings. In general, the embeddings of span sub-sentences have better clustering properties than the original sentences. The results have implications for future sentence embedding models and applications.

A Survey of Embedding Space Alignment Methods for Language and Knowledge Graphs

Oct 26, 2020

Abstract:Neural embedding approaches have become a staple in the fields of computer vision, natural language processing, and more recently, graph analytics. Given the pervasive nature of these algorithms, the natural question becomes how to exploit the embedding spaces to map, or align, embeddings of different data sources. To this end, we survey the current research landscape on word, sentence and knowledge graph embedding algorithms. We provide a classification of the relevant alignment techniques and discuss benchmark datasets used in this field of research. By gathering these diverse approaches into a singular survey, we hope to further motivate research into alignment of embedding spaces of varied data types and sources.
