Yasuhiro Wada

Mitigating the Impact of Electrode Shift on Classification Performance in Electromyography-Based Motion Prediction Using Sliding-Window Normalization

Apr 04, 2025

Abstract:Electromyography (EMG) signals are used in many applications, including prosthetic hands, assistive suits, and rehabilitation. Recent advances in motion estimation have improved performance, yet challenges remain in cross-subject generalization, electrode shift, and day-to-day variation. When electrode shift occurs, both transfer learning and adversarial domain adaptation improve classification performance, reducing the performance gap to -1% (eight-class scenario). However, transfer learning requires additional data for re-training, and adversarial domain adaptation requires additional data for training. To address this issue, we investigated a sliding-window normalization (SWN) technique in a real-time prediction scenario. This method combines z-score normalization with a sliding-window approach to reduce the decline in classification performance caused by electrode shift. We validated the effectiveness of SWN using experimental data from a target trajectory tracking task involving the right arm. For three-class motion classification (rest, elbow flexion, and elbow extension) from EMG signals, our offline analysis showed that SWN reduced the differential classification accuracy to -1.0%, a 6.6% improvement over the case without normalization (-7.6%). Furthermore, when SWN was combined with a strategy that uses a mixture of multiple electrode positions, classification accuracy improved by an additional 2.4% over the baseline. These results suggest that SWN can effectively reduce the performance degradation caused by electrode shift, thereby enhancing the practicality of EMG-based motion estimation systems.
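
The abstract describes SWN only as z-score normalization combined with a sliding window, so the following is a minimal sketch of that idea rather than the authors' implementation. The class name `SlidingWindowNormalizer`, the helper `normalize_stream`, the window length, the `eps` term, and the choice to normalize each channel independently are all assumptions made for illustration.

```python
from collections import deque
import math


class SlidingWindowNormalizer:
    """Causal z-score over the most recent `window` samples of one channel.

    Hypothetical helper: the window length and per-channel treatment are
    assumptions, not details taken from the abstract.
    """

    def __init__(self, window: int, eps: float = 1e-8):
        if window < 2:
            raise ValueError("window must be at least 2 samples")
        self.buf = deque(maxlen=window)  # oldest sample drops out automatically
        self.eps = eps                   # avoids division by zero on flat input

    def update(self, x: float) -> float:
        """Add one new sample and return it normalized by the window statistics."""
        self.buf.append(x)
        n = len(self.buf)
        mean = sum(self.buf) / n
        var = sum((v - mean) ** 2 for v in self.buf) / n
        return (x - mean) / (math.sqrt(var) + self.eps)


def normalize_stream(samples, window):
    """Normalize a whole recording sample by sample, as a real-time loop would."""
    norm = SlidingWindowNormalizer(window)
    return [norm.update(s) for s in samples]


if __name__ == "__main__":
    emg = [0.10, 0.40, 0.35, 0.90, 0.20, 0.05]
    print(normalize_stream(emg, window=3))
```

Because the mean and standard deviation come only from samples already received, no separate calibration recording is needed. A constant gain or offset applied to the whole signal also largely cancels out within the window, which is one plausible reason such a scheme could be less sensitive to amplitude changes.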

Temporal convolutional neural networks to generate a head-related impulse response from one direction to another

Oct 21, 2023

Abstract:Virtual sound synthesis is a technology that allows users to perceive spatial sound through headphones or earphones. However, accurate virtual sound requires an individual head-related transfer function (HRTF), which is difficult to measure because it requires a specialized environment. In this study, we proposed a method to generate HRTFs from one direction to another. To this end, we used temporal convolutional neural networks (TCNs) to generate head-related impulse responses (HRIRs). The TCNs were trained on publicly available datasets in the horizontal plane. Using the trained networks, we successfully generated HRIRs for directions other than the front direction in these datasets. To test the generalization of the method, we measured the HRIRs of a new dataset and tested whether the trained networks could be applied to it. Although the similarity evaluated by spectral distortion was slightly degraded, behavioral experiments with human participants showed that the generated HRIRs were equivalent to the measured ones. These results suggest that the proposed TCNs can be used to generate personalized HRIRs from one direction to another, which could contribute to the personalization of virtual sound.
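
The abstract does not define its spectral distortion measure. The sketch below assumes one common form, the root-mean-square difference in dB between the magnitude spectra of a measured and a generated HRIR. The function names, the naive DFT, and the `floor` guard are illustrative choices, not taken from the paper.

```python
import cmath
import math


def magnitude_spectrum(hrir):
    """Naive DFT magnitudes for bins 0..N/2 (adequate for short responses)."""
    n = len(hrir)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * cmath.pi * k * t / n)
                  for t, x in enumerate(hrir))
        mags.append(abs(acc))
    return mags


def spectral_distortion_db(measured, generated, floor=1e-12):
    """RMS of the per-bin log-magnitude difference, in dB (assumed definition)."""
    if len(measured) != len(generated):
        raise ValueError("HRIRs must have the same length")
    h = magnitude_spectrum(measured)
    g = magnitude_spectrum(generated)
    # `floor` keeps the logarithm finite when a bin is numerically zero.
    sq = [(20 * math.log10(max(a, floor) / max(b, floor))) ** 2
          for a, b in zip(h, g)]
    return math.sqrt(sum(sq) / len(sq))


if __name__ == "__main__":
    measured = [1.0, 0.5, 0.25, 0.125]
    generated = [0.5 * x for x in measured]  # same shape, half the amplitude
    print(spectral_distortion_db(measured, generated))
```

Under this definition, identical responses give 0 dB, and a generated response at half the amplitude of the measured one gives about 6 dB in every bin.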

Sliding-Window Normalization to Improve the Performance of Machine-Learning Models for Real-Time Motion Prediction Using Electromyography

May 19, 2022

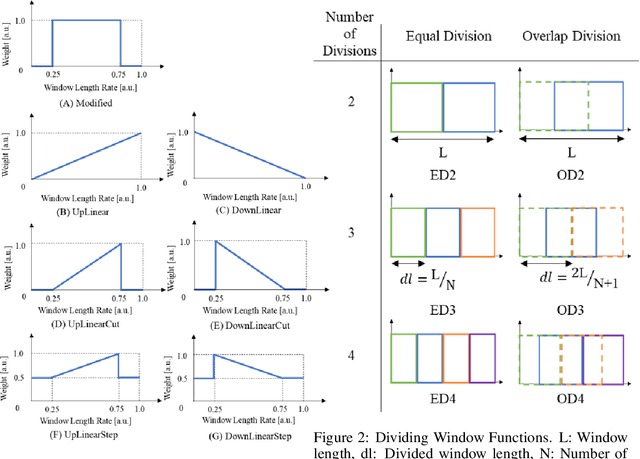

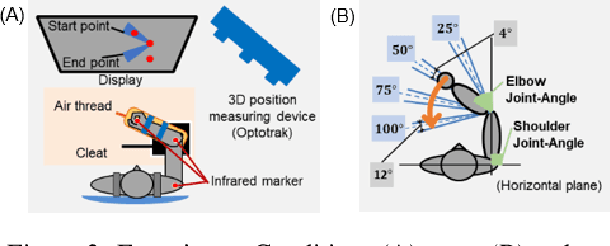

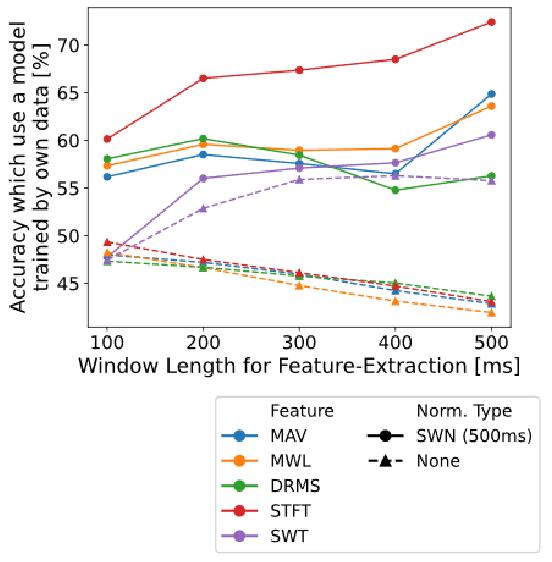

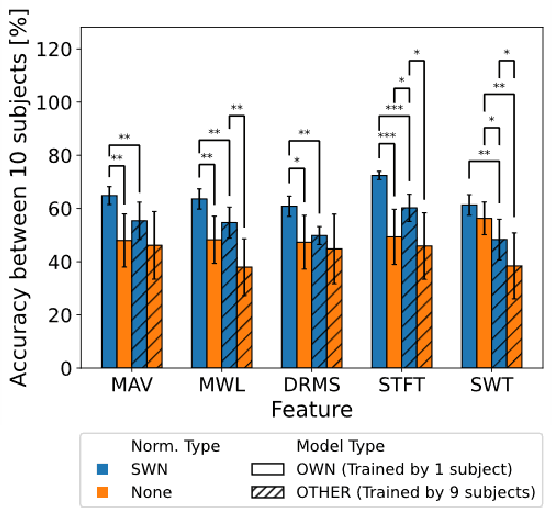

Abstract:Many researchers have used machine learning models to control artificial hands, walking aids, assistance suits, and similar devices using electromyography (EMG), a biological signal. Using such devices requires machine learning models with high classification accuracy. One way to improve classification performance is normalization, such as z-score normalization. However, most EMG-based motion prediction studies do not use normalization, because it requires calibration and because the calibration reference values fluctuate and cannot be reused. Therefore, in this study, we proposed a normalization method that combines sliding-window analysis with z-score normalization and can be implemented in real-time processing without calibration. We confirmed the effectiveness of this method in a single-joint elbow movement experiment, predicting rest, flexion, and extension from the EMG signal. The proposed normalization method achieved a mean accuracy of 64.6%, an improvement of 15.0% compared to the non-normalization case (mean of 49.8%). Furthermore, to improve practicality, recent research has focused on reducing the user data required for model training and on improving classification performance in models trained on other people's data. We therefore investigated the classification performance of a model trained on other people's data. The results showed a mean accuracy of 56.5% when the proposed method was applied, an improvement of 11.1% compared to the non-normalization case (mean of 44.1%). These two results demonstrate that this simple, easy-to-implement method can improve the classification performance of machine learning models.
