Xinke Ma

Deformable Medical Image Registration with Effective Anatomical Structure Representation and Divide-and-Conquer Network

Jun 24, 2025

Abstract: Effective representation of Regions of Interest (ROI) and independent alignment of these ROIs can significantly enhance the performance of deformable medical image registration (DMIR). However, current learning-based DMIR methods have limitations. Unsupervised techniques disregard ROI representation and proceed directly with aligning pairs of images, while weakly-supervised methods depend heavily on label constraints to facilitate registration. To address these issues, we introduce a novel ROI-based registration approach named EASR-DCN. Our method represents medical images through effective ROIs and achieves independent alignment of these ROIs without requiring labels. Specifically, we first use a Gaussian mixture model for intensity analysis to represent images using multiple effective ROIs with distinct intensities. Furthermore, we propose a novel Divide-and-Conquer Network (DCN) that processes these ROIs through separate channels to learn feature alignments for each ROI. The resultant correspondences are integrated to generate a comprehensive displacement vector field. Extensive experiments were performed on three MRI datasets and one CT dataset to showcase the superior accuracy and deformation reduction efficacy of our EASR-DCN. Compared to VoxelMorph, our EASR-DCN achieved improvements of 10.31% in the Dice score for brain MRI, 13.01% for cardiac MRI, and 5.75% for hippocampus MRI, highlighting its promising potential for clinical applications. The code for this work will be released upon acceptance of the paper.
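The intensity-analysis step described in this abstract can be illustrated with a small sketch. This is not the authors' implementation: it assumes a plain 1-D Gaussian mixture fitted by EM over voxel intensities, with each voxel assigned to its most likely component to form one binary ROI mask per component. The function names `fit_gmm_1d` and `roi_masks`, the quantile initialisation, and the iteration count are all hypothetical choices made for illustration.

```python
import numpy as np


def _log_joint(x, means, variances, weights):
    """Log of weight * Gaussian density for each component (last axis)."""
    return (-0.5 * (x - means) ** 2 / variances
            - 0.5 * np.log(2 * np.pi * variances)
            + np.log(weights))


def fit_gmm_1d(intensities, k, n_iter=50):
    """Fit a k-component 1-D Gaussian mixture to intensities with plain EM."""
    x = np.asarray(intensities, dtype=np.float64).ravel()[:, None]
    # Assumed initialisation: means at evenly spaced quantiles,
    # a shared variance, and uniform mixing weights.
    means = np.quantile(x, (np.arange(k) + 0.5) / k)
    variances = np.full(k, x.var() + 1e-6)
    weights = np.full(k, 1.0 / k)
    for _ in range(n_iter):
        # E-step: posterior responsibility of each component for each voxel.
        log_p = _log_joint(x, means, variances, weights)
        log_p -= log_p.max(axis=1, keepdims=True)
        resp = np.exp(log_p)
        resp /= resp.sum(axis=1, keepdims=True)
        # M-step: re-estimate parameters from the responsibilities.
        nk = resp.sum(axis=0) + 1e-12
        means = (resp * x).sum(axis=0) / nk
        variances = (resp * (x - means) ** 2).sum(axis=0) / nk + 1e-6
        weights = nk / x.shape[0]
    return means, variances, weights


def roi_masks(image, k):
    """Return a (k, *image.shape) boolean array, one intensity ROI per component."""
    means, variances, weights = fit_gmm_1d(image, k)
    x = np.asarray(image, dtype=np.float64)[..., None]
    labels = _log_joint(x, means, variances, weights).argmax(axis=-1)
    return np.stack([labels == i for i in range(k)])
```

Under these assumptions, each mask could then be multiplied with the image and fed to a separate network channel, which is one plausible reading of "separate channels" in the abstract; the actual DCN architecture is not specified here.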

Dual-Flow Transformation Network for Deformable Image Registration with Region Consistency Constraint

Dec 04, 2021

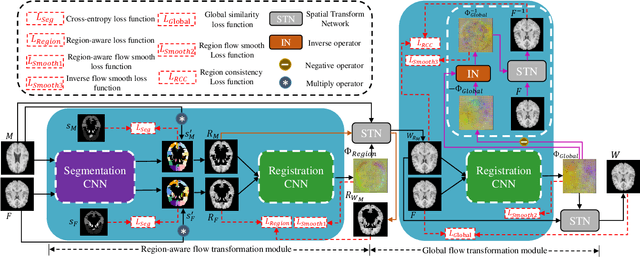

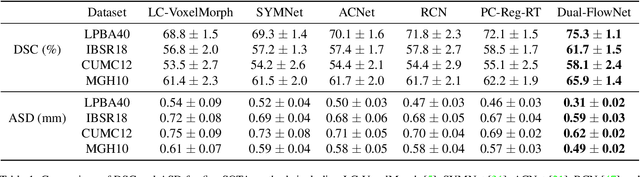

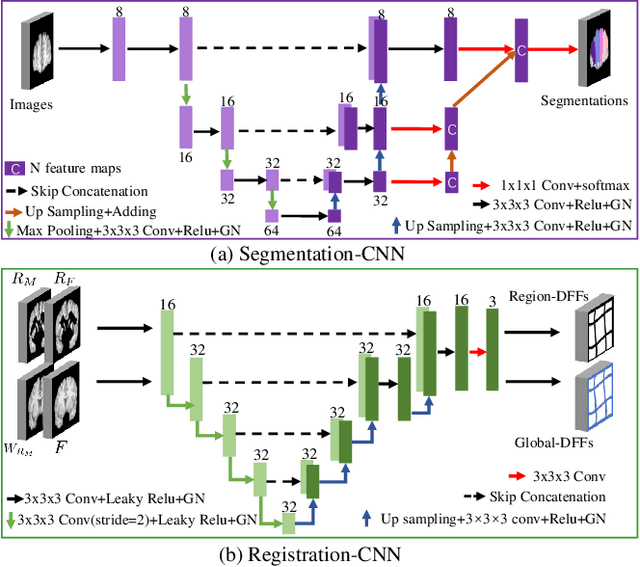

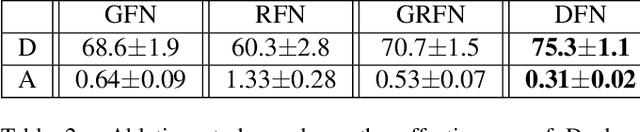

Abstract: Deformable image registration can achieve fast and accurate alignment between a pair of images and thus plays an important role in many medical image studies. Current deep learning (DL)-based image registration approaches directly learn the spatial transformation from one image to another using a convolutional neural network, requiring either ground truth or a similarity metric. However, these methods use only a global similarity energy function to evaluate the similarity of a pair of images, which ignores the similarity of regions of interest (ROIs) within the images. Moreover, DL-based methods often estimate the global spatial transformation of an image directly, without attending to the region-level spatial transformations of ROIs within the image. In this paper, we present a novel dual-flow transformation network with a region consistency constraint, which maximizes the similarity of ROIs within a pair of images and estimates both global and region spatial transformations simultaneously. Experiments on four public 3D MRI datasets show that the proposed method achieves the best registration performance in accuracy and generalization compared with other state-of-the-art methods.
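The contrast between a global similarity term and a per-ROI term can be sketched as follows. This is only an illustration of the idea, not the paper's loss: it assumes mean squared error as the similarity measure, assumes ROI masks are already available as boolean arrays, and uses a hypothetical `region_weight` parameter to balance the two terms.

```python
import numpy as np


def masked_mse(fixed, warped, mask):
    """Mean squared error restricted to the voxels inside one ROI mask."""
    mask = np.asarray(mask, dtype=bool)
    if not mask.any():
        return 0.0  # an empty ROI contributes nothing
    return float(np.mean((fixed[mask] - warped[mask]) ** 2))


def region_consistency_loss(fixed, warped, roi_masks, region_weight=1.0):
    """Global MSE plus the average per-ROI MSE (assumed form, for illustration)."""
    global_term = float(np.mean((fixed - warped) ** 2))
    if len(roi_masks) == 0:
        return global_term
    region_term = float(np.mean([masked_mse(fixed, warped, m) for m in roi_masks]))
    return global_term + region_weight * region_term
```

A mismatch confined to a small ROI barely moves the global term but dominates that ROI's own term, which is the motivation the abstract gives for adding a region-level constraint.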
