Wanqi Zhang

Why That Robot? A Qualitative Analysis of Justification Strategies for Robot Color Selection Across Occupational Contexts

Mar 30, 2026

Abstract: As robots increasingly enter the workforce, human-robot interaction (HRI) must address how implicit social biases influence user preferences. This paper investigates how users rationalize their selections of robots varying in skin tone and anthropomorphic features across different occupations. By qualitatively analyzing 4,146 open-ended justifications from 1,038 participants, we map the reasoning frameworks driving robot color selection across four professional contexts. We developed and validated a comprehensive, multidimensional coding scheme via human--AI consensus ($κ = 0.73$). Our results demonstrate that while utilitarian \textit{Functionalism} is the dominant justification strategy (52\%), participants systematically adapted these practical rationales to align with established racial and occupational stereotypes. Furthermore, we reveal that bias frequently operates beneath conscious rationalization: exposure to racial stereotype primes significantly shifted participants' color choices, yet their spoken justifications remained masked by standard affective or task-related reasoning. We also found that demographic backgrounds significantly shape justification strategies, and that robot shape strongly modulates color interpretation. Specifically, as robots become highly anthropomorphic, users increasingly retreat from functional reasoning toward \textit{Machine-Centric} de-racialization. Through these empirical results, we provide actionable design implications to help reduce the perpetuation of societal biases in future workforce robots.

CoVerRL: Breaking the Consensus Trap in Label-Free Reasoning via Generator-Verifier Co-Evolution

Mar 18, 2026

Abstract: Label-free reinforcement learning enables large language models to improve reasoning capabilities without ground-truth supervision, typically by treating majority-voted answers as pseudo-labels. However, we identify a critical failure mode: as training maximizes self-consistency, output diversity collapses, causing the model to confidently reinforce systematic errors that evade detection. We term this the consensus trap. To escape it, we propose CoVerRL, a framework where a single model alternates between generator and verifier roles, with each capability bootstrapping the other. Majority voting provides noisy but informative supervision for training the verifier, while the improving verifier progressively filters self-consistent errors from pseudo-labels. This co-evolution creates a virtuous cycle that maintains high reward accuracy throughout training. Experiments across Qwen and Llama model families demonstrate that CoVerRL outperforms label-free baselines by 4.7-5.9\% on mathematical reasoning benchmarks. Moreover, self-verification accuracy improves from around 55\% to over 85\%, confirming that both capabilities genuinely co-evolve.

From Human Bias to Robot Choice: How Occupational Contexts and Racial Priming Shape Robot Selection

Dec 24, 2025

Abstract: As artificial agents increasingly integrate into professional environments, fundamental questions have emerged about how societal biases influence human-robot selection decisions. We conducted two comprehensive experiments (N = 1,038) examining how occupational contexts and stereotype activation shape robotic agent choices across construction, healthcare, educational, and athletic domains. Participants made selections from artificial agents that varied systematically in skin tone and anthropomorphic characteristics. Our study revealed distinct context-dependent patterns. Healthcare and educational scenarios demonstrated strong favoritism toward lighter-skinned artificial agents, while construction and athletic contexts showed greater acceptance of darker-toned alternatives. Participant race was associated with systematic differences in selection patterns across professional domains. The second experiment demonstrated that exposure to human professionals from specific racial backgrounds systematically shifted later robotic agent preferences in stereotype-consistent directions. These findings show that occupational biases and color-based discrimination transfer directly from human-human to human-robot evaluation contexts. The results highlight mechanisms through which robotic deployment may unintentionally perpetuate existing social inequalities.

Towards in-store multi-person tracking using head detection and track heatmaps

May 16, 2020

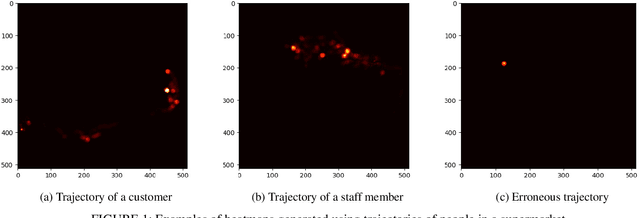

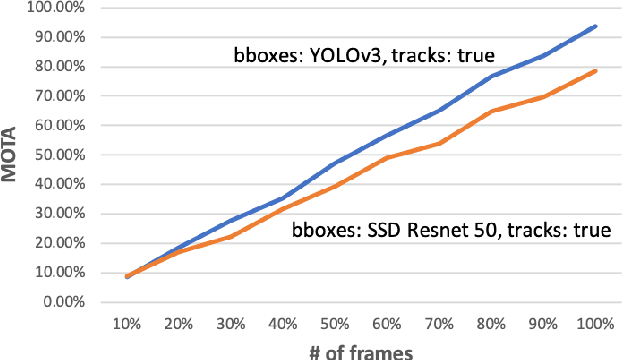

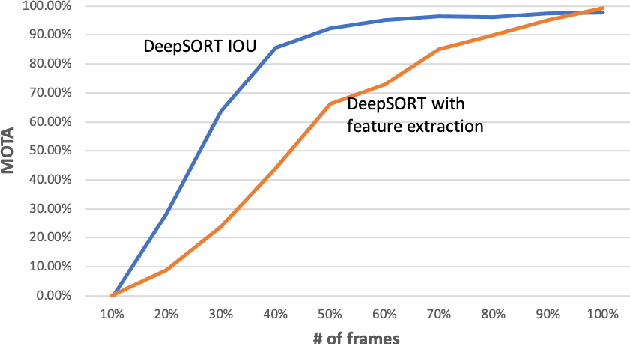

Abstract: Computer vision algorithms are being implemented across a breadth of industries to enable technological innovations. In this paper, we study the problem of computer-vision-based customer tracking in the retail industry. To this end, we introduce a dataset collected from a camera in an office environment where participants mimic various behaviors of customers in a supermarket. In addition, we describe an illustrative example of the use of this dataset for tracking participants based on a head tracking model in an effort to minimize errors due to occlusion. Furthermore, we propose a model for recognizing customers and staff based on their movement patterns. The model is evaluated on a real-world dataset collected in a supermarket over a 24-hour period, achieving 98\% accuracy during training and 93\% accuracy during evaluation.
