Victor Garcia

Performance Assessment Strategies for Generative AI Applications in Healthcare

Sep 09, 2025

Abstract:Generative artificial intelligence (GenAI) represents an emerging paradigm within artificial intelligence, with applications throughout the medical enterprise. Assessing GenAI applications necessitates a comprehensive understanding of the clinical task and awareness of the variability in performance when implemented in actual clinical environments. Presently, a prevalent method for evaluating the performance of generative models relies on quantitative benchmarks. Such benchmarks have limitations and may suffer from train-to-the-test overfitting, optimizing performance for a specified test set at the cost of generalizability across other tasks and data distributions. Evaluation strategies leveraging human expertise and utilizing cost-effective computational models as evaluators are gaining interest. We discuss current state-of-the-art methodologies for assessing the performance of GenAI applications in healthcare and medical devices.

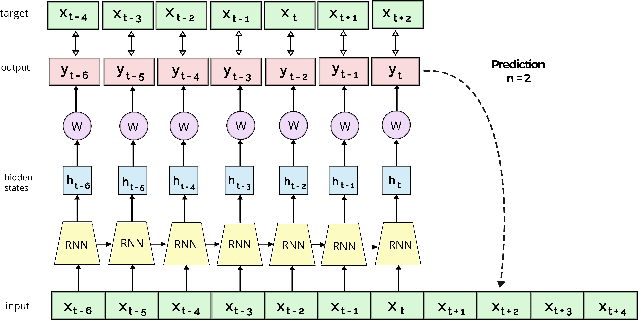

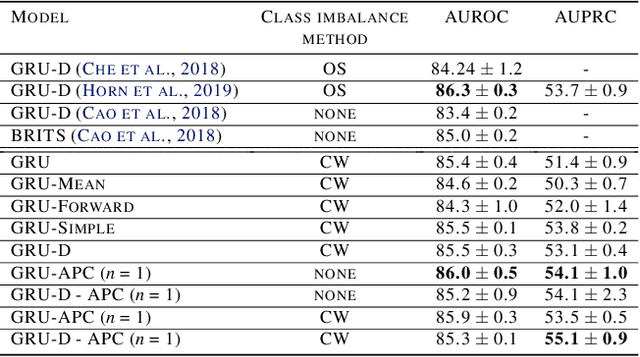

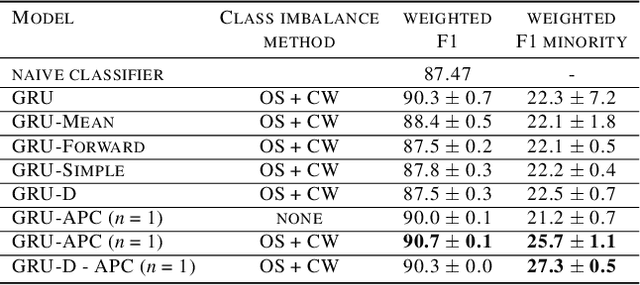

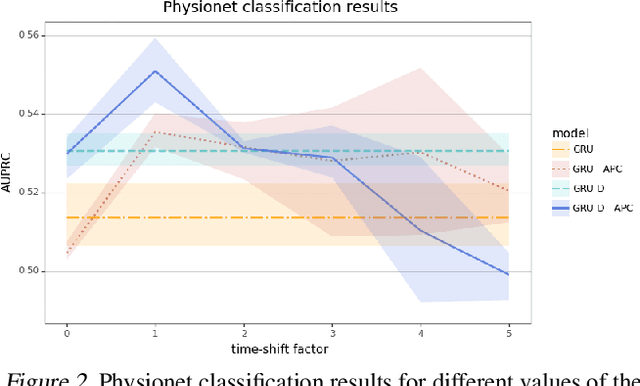

As easy as APC: Leveraging self-supervised learning in the context of time series classification with varying levels of sparsity and severe class imbalance

Jun 29, 2021

Abstract:High levels of sparsity and strong class imbalance are ubiquitous challenges that are often presented simultaneously in real-world time series data. While most methods tackle each problem separately, our proposed approach handles both in conjunction, while imposing fewer assumptions on the data. In this work, we propose leveraging a self-supervised learning method, specifically Autoregressive Predictive Coding (APC), to learn relevant hidden representations of time series data in the context of both missing data and class imbalance. We apply APC using either a GRU or GRU-D encoder on two real-world datasets, and show that applying one-step-ahead prediction with APC improves the classification results in all settings. In fact, by applying GRU-D - APC, we achieve state-of-the-art AUPRC results on the Physionet benchmark.

Few-Shot Learning with Graph Neural Networks

Feb 20, 2018

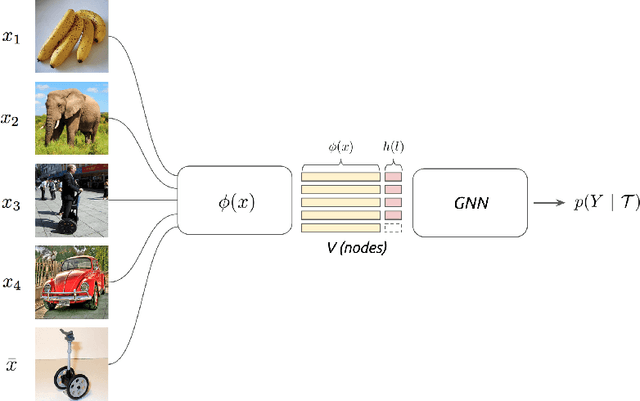

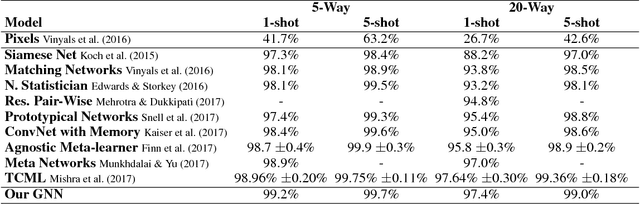

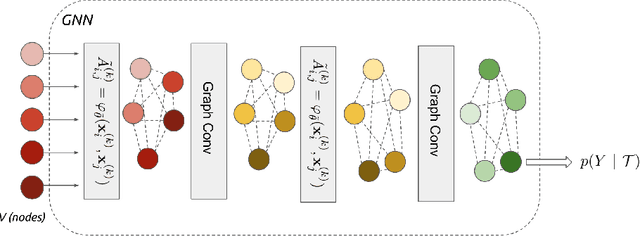

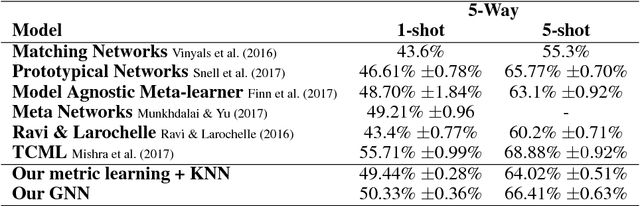

Abstract:We propose to study the problem of few-shot learning with the prism of inference on a partially observed graphical model, constructed from a collection of input images whose label can be either observed or not. By assimilating generic message-passing inference algorithms with their neural-network counterparts, we define a graph neural network architecture that generalizes several of the recently proposed few-shot learning models. Besides providing improved numerical performance, our framework is easily extended to variants of few-shot learning, such as semi-supervised or active learning, demonstrating the ability of graph-based models to operate well on 'relational' tasks.
