Turgay Ayer

Transfer Learning for Meta-analysis Under Covariate Shift

Apr 03, 2026

Abstract:Randomized controlled trials often do not represent the populations where decisions are made, and covariate shift across studies can invalidate standard IPD meta-analysis and transport estimators. We propose a placebo-anchored transport framework that treats source-trial outcomes as abundant proxy signals and target-trial placebo outcomes as scarce, high-fidelity gold labels to calibrate baseline risk. A low-complexity (sparse) correction anchors proxy outcome models to the target population, and the anchored models are embedded in a cross-fitted doubly robust learner, yielding a Neyman-orthogonal, target-site doubly robust estimator for patient-level heterogeneous treatment effects when target treated outcomes are available. We distinguish two regimes: in connected targets (with a treated arm), the method yields target-identified effect estimates; in disconnected targets (placebo-only), it reduces to a principled screen-then-transport procedure under explicit working-model transport assumptions. Experiments on synthetic data and a semi-synthetic IHDP benchmark evaluate pointwise CATE accuracy, ATE error, ranking quality for targeting, decision-theoretic policy regret, and calibration. Across connected settings, the proposed method is best or near-best and improves substantially over proxy-only, target-only, and transport baselines at small target sample sizes; in disconnected settings, it retains strong ranking performance for targeting while pointwise accuracy depends on the strength of the working transport condition.
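
For readers unfamiliar with doubly robust learners, the standard pseudo-outcome used by such estimators is sketched below. This is the generic textbook form, not necessarily the exact estimator of the paper; the symbols $A$ (treatment indicator), $Y$ (outcome), $X$ (covariates), $\hat\mu_a$ (outcome models), and $\hat e$ (propensity model) are notation assumed here rather than taken from the abstract.

```latex
\hat\psi(X, A, Y) \;=\; \hat\mu_1(X) - \hat\mu_0(X)
  \;+\; \frac{A}{\hat e(X)}\,\bigl(Y - \hat\mu_1(X)\bigr)
  \;-\; \frac{1 - A}{1 - \hat e(X)}\,\bigl(Y - \hat\mu_0(X)\bigr)
```

Regressing $\hat\psi$ on $X$ gives a CATE estimate that remains consistent if either the outcome models or the propensity model is correctly specified. In the abstract's setting, the anchored proxy models would presumably play the role of $\hat\mu_0$ and $\hat\mu_1$.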

Generative AI in Health Economics and Outcomes Research: A Taxonomy of Key Definitions and Emerging Applications, an ISPOR Working Group Report

Oct 26, 2024

Abstract:Objective: This article offers a taxonomy of generative artificial intelligence (AI) for health economics and outcomes research (HEOR), explores its emerging applications, and outlines methods to enhance the accuracy and reliability of AI-generated outputs. Methods: The review defines foundational generative AI concepts and highlights current HEOR applications, including systematic literature reviews, health economic modeling, real-world evidence generation, and dossier development. Approaches such as prompt engineering (zero-shot, few-shot, chain-of-thought, persona pattern prompting), retrieval-augmented generation, model fine-tuning, and the use of domain-specific models are introduced to improve AI accuracy and reliability. Results: Generative AI shows significant potential in HEOR, enhancing efficiency and productivity and offering novel solutions to complex challenges. Foundation models are promising in automating complex tasks, though challenges remain in scientific reliability, bias, interpretability, and workflow integration. The article discusses strategies to improve the accuracy of these AI tools. Conclusion: Generative AI could transform HEOR by increasing efficiency and accuracy across various applications. However, its full potential can only be realized by building HEOR expertise and addressing the limitations of current AI technologies. As AI evolves, ongoing research and innovation will shape its future role in the field.

Small Area Estimation of Case Growths for Timely COVID-19 Outbreak Detection

Dec 07, 2023

Abstract:The COVID-19 pandemic has exerted a profound impact on the global economy and continues to exact a significant toll on human lives. The COVID-19 case growth rate stands as a key epidemiological parameter to estimate and monitor for effective detection and containment of the resurgence of outbreaks. A fundamental challenge in growth rate estimation and hence outbreak detection is balancing the accuracy-speed tradeoff, where accuracy typically degrades with shorter fitting windows. In this paper, we develop a machine learning (ML) algorithm, which we call Transfer Learning Generalized Random Forest (TLGRF), that balances this accuracy-speed tradeoff. Specifically, we estimate the instantaneous COVID-19 exponential growth rate for each U.S. county by using TLGRF that chooses an adaptive fitting window size based on relevant day-level and county-level features affecting the disease spread. Through transfer learning, TLGRF can accurately estimate case growth rates for counties with small sample sizes. Out-of-sample prediction analysis shows that TLGRF outperforms established growth rate estimation methods. Furthermore, we conducted a case study based on outbreak case data from the state of Colorado and showed that the timely detection of outbreaks could have been improved by up to 224% using TLGRF when compared to the decisions made by Colorado's Department of Health and Environment (CDPHE). To facilitate implementation, we have developed a publicly available outbreak detection tool for timely detection of COVID-19 outbreaks in each U.S. county, which received substantial attention from policymakers.

Estimating County-Level COVID-19 Exponential Growth Rates Using Generalized Random Forests

Nov 14, 2020

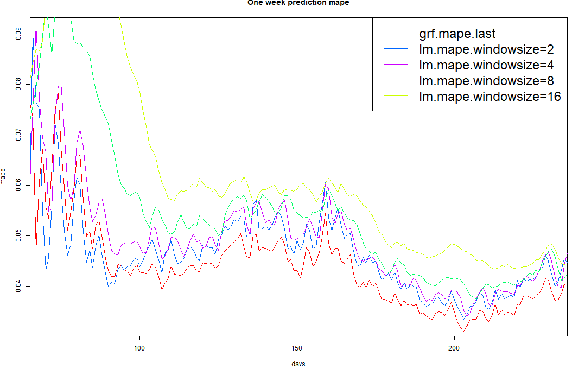

Abstract:Rapid and accurate detection of community outbreaks is critical to address the threat of resurgent waves of COVID-19. A practical challenge in outbreak detection is balancing accuracy vs. speed. In particular, while estimation accuracy improves with longer fitting windows, speed degrades. This paper presents a machine learning framework to balance this tradeoff using generalized random forests (GRF), and applies it to detect county-level COVID-19 outbreaks. This algorithm chooses an adaptive fitting window size for each county based on relevant features affecting the disease spread, such as changes in social distancing policies. Experimental results show that our method outperforms any non-adaptive window size choice in 7-day-ahead COVID-19 outbreak case number predictions.
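
The accuracy-speed tradeoff described above can be illustrated with a fixed-window baseline. The sketch below is not the GRF method from the paper; it only fits a log-linear regression over the last `window` days of synthetic case counts, and the function name `growth_rate`, the noise level, and the true rate of 0.05 are all assumptions made for illustration.

```python
import numpy as np

def growth_rate(cases, window):
    """Least-squares slope of log(cases) over the last `window` days.

    Under exponential growth, cases(t) ~ C * exp(r * t), so the slope
    of log(cases) against time estimates the daily growth rate r.
    """
    y = np.log(np.asarray(cases, dtype=float)[-window:])
    t = np.arange(window, dtype=float)
    slope, _intercept = np.polyfit(t, y, 1)
    return slope

# Synthetic data (hypothetical): 60 days of exponential growth.
rng = np.random.default_rng(0)
true_rate = 0.05
days = np.arange(60)
clean = 100.0 * np.exp(true_rate * days)
# Multiplicative log-normal noise stands in for reporting noise.
noisy = clean * np.exp(rng.normal(0.0, 0.1, size=days.size))

for w in (7, 14, 28):
    print(f"window={w:2d}  estimated rate={growth_rate(noisy, w):.4f}")
```

Shorter windows react faster to a change in the growth rate but average over fewer noisy observations, which is the tradeoff an adaptive window size is meant to balance.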
