Tanzim Ahad

The Art of the Jailbreak: Formulating Jailbreak Attacks for LLM Security Beyond Binary Scoring

May 09, 2026

Abstract: Jailbreak attacks -- adversarial prompts that bypass LLM alignment through purely linguistic manipulation -- pose a growing operational security threat, yet the field lacks large-scale, reproducible infrastructure for generating, categorizing, and evaluating them systematically. This paper addresses that gap with three contributions. (1) Large-scale compositional jailbreak dataset. We construct 114,000 adversarial prompts by applying 912 composing strategies to 125 harmful seed prompts from JailBreakV-28K. Every prompt is assigned to one of 14 cybersecurity attack categories (e.g., malware, phishing, privilege escalation) via a six-model majority-vote pipeline, and each strategy is ranked by effectiveness per category, enabling principled strategy selection grounded in concrete adversarial objectives. (2) Automated jailbreak generation. We instruction-fine-tune category-aware LLMs on Moderate and Optimal subsets, producing models that synthesize fluent jailbreak prompts from a harmful seed at inference time -- no templates, no gradient search. Our generators achieve perplexity 24-39 versus 40-140 for AutoDAN and AmpleGCG, with safety-filter evasion rates of 0.29-0.51 Mal (LlamaPromptGuard-2-86M), enabling controllable, scalable red-teaming under realistic adversarial conditions. (3) OPTIMUS: a training-free jailbreak evaluator. OPTIMUS is a continuous metric J(S,H) that jointly captures semantic similarity between the harmful seed and the jailbreak (S) and harmfulness probability (H) via calibrated penalty functions. Unlike binary attack success rate (ASR), OPTIMUS requires no task-specific training, generalizes across evolving strategies, and exposes a stealth-optimal regime (S*=0.57, H*=0.43) that ASR misses. Experiments across 114,000 prompts confirm that OPTIMUS separates Weak, Moderate, and Optimal jailbreaks with category-level evidence binary evaluation cannot supply.
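The category-assignment step described above (six models voting on one of 14 categories) can be illustrated with a minimal sketch. The abstract does not say how ties or weak majorities are handled, so the tie rule and the optional `min_votes` threshold below are assumptions, and `majority_vote` is a hypothetical helper name rather than anything from the paper.

```python
from collections import Counter


def majority_vote(labels, min_votes=None):
    """Return the most common label, or None when there is no clear winner.

    Assumption (not from the paper): a tie for first place, or a winner with
    fewer than `min_votes` votes, is treated as "no decision".
    """
    if not labels:
        return None
    counts = Counter(labels).most_common()
    top_label, top_count = counts[0]
    # A second label with the same count means the vote is tied.
    if len(counts) > 1 and counts[1][1] == top_count:
        return None
    if min_votes is not None and top_count < min_votes:
        return None
    return top_label


# Hypothetical votes from six classifier models for one prompt.
votes = ["malware", "malware", "phishing", "malware",
         "privilege escalation", "malware"]
print(majority_vote(votes))
```

As a consistency check on the reported dataset size, 912 strategies applied to 125 seeds gives 912 × 125 = 114,000 prompts, which matches the figure in the abstract.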

Semantic Intent Fragmentation: A Single-Shot Compositional Attack on Multi-Agent AI Pipelines

Apr 08, 2026

Abstract: We introduce Semantic Intent Fragmentation (SIF), an attack class against LLM orchestration systems where a single, legitimately phrased request causes an orchestrator to decompose a task into subtasks that are individually benign but jointly violate security policy. Current safety mechanisms operate at the subtask level, so each step clears existing classifiers -- the violation only emerges at the composed plan. SIF exploits OWASP LLM06:2025 through four mechanisms: bulk scope escalation, silent data exfiltration, embedded trigger deployment, and quasi-identifier aggregation, requiring no injected content, no system modification, and no attacker interaction after the initial request. We construct a three-stage red-teaming pipeline grounded in OWASP, MITRE ATLAS, and NIST frameworks to generate realistic enterprise scenarios. Across 14 scenarios spanning financial reporting, information security, and HR analytics, a GPT-20B orchestrator produces policy-violating plans in 71% of cases (10/14) while every subtask appears benign. Three independent signals validate this: deterministic taint analysis, chain-of-thought evaluation, and a cross-model compliance judge with 0% false positives. Stronger orchestrators increase SIF success rates. Plan-level information-flow tracking combined with compliance evaluation detects all attacks before execution, showing the compositional safety gap is closable.
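To make the idea of plan-level information-flow tracking concrete, here is a minimal defensive sketch of deterministic taint propagation over an orchestrator plan. It is not the authors' implementation: the `Subtask` structure, the `SENSITIVE_SOURCES` set, the `"internal"`/`"external"` sink labels, and the example plan are all hypothetical, and the plan is assumed to be listed in execution order.

```python
from dataclasses import dataclass


@dataclass
class Subtask:
    name: str
    reads: list              # names of data items or upstream artifacts consumed
    writes: str              # name of the artifact this step produces
    sink: str = "internal"   # "internal" or "external" (hypothetical labels)


# Hypothetical set of data sources considered sensitive by policy.
SENSITIVE_SOURCES = {"hr_records", "payroll_db"}


def find_violations(plan):
    """Flag steps that send sensitive-derived data to an external sink.

    Taint starts at the sensitive sources and propagates to every artifact
    produced by a step that reads tainted input.
    """
    tainted = set(SENSITIVE_SOURCES)
    violations = []
    for step in plan:
        if any(item in tainted for item in step.reads):
            tainted.add(step.writes)
            if step.sink == "external":
                violations.append(step.name)
    return violations


# Each step looks benign in isolation; the violation appears only in the flow.
plan = [
    Subtask("load", ["hr_records"], "raw_table"),
    Subtask("summarize", ["raw_table"], "summary"),
    Subtask("format", ["template"], "layout"),
    Subtask("email_vendor", ["summary", "layout"], "message", sink="external"),
]
print(find_violations(plan))  # ['email_vendor']
```

The point of the sketch is that the check runs on the composed plan before execution, which is where the abstract locates the violation, rather than on each subtask separately.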

Agent-Fence: Mapping Security Vulnerabilities Across Deep Research Agents

Feb 07, 2026

Abstract: Large language models are increasingly deployed as *deep agents* that plan, maintain persistent state, and invoke external tools, shifting safety failures from unsafe text to unsafe *trajectories*. We introduce **AgentFence**, an architecture-centric security evaluation that defines 14 trust-boundary attack classes spanning planning, memory, retrieval, tool use, and delegation, and detects failures via *trace-auditable conversation breaks* (unauthorized or unsafe tool use, wrong-principal actions, state/objective integrity violations, and attack-linked deviations). Holding the base model fixed, we evaluate eight agent archetypes under persistent multi-turn interaction and observe substantial architectural variation in mean security break rate (MSBR), ranging from $0.29 \pm 0.04$ (LangGraph) to $0.51 \pm 0.07$ (AutoGPT). The highest-risk classes are operational: Denial-of-Wallet ($0.62 \pm 0.08$), Authorization Confusion ($0.54 \pm 0.10$), Retrieval Poisoning ($0.47 \pm 0.09$), and Planning Manipulation ($0.44 \pm 0.11$), while prompt-centric classes remain below $0.20$ under standard settings. Breaks are dominated by boundary violations (SIV 31%, WPA 27%, UTI+UTA 24%, ATD 18%), and authorization confusion correlates with objective and tool hijacking ($\rho \approx 0.63$ and $\rho \approx 0.58$). AgentFence reframes agent security around what matters operationally: whether an agent stays within its goal and authority envelope over time.
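The abstract reports MSBR as a mean with a spread but does not define the aggregation. One plausible reading, shown here purely as an assumption, is the mean of per-attack-class break rates with the sample standard deviation across classes as the spread. The function names and the toy outcomes below are hypothetical and are not data from the paper.

```python
from statistics import mean, stdev


def break_rate(outcomes):
    """Fraction of episodes with a security break (1 = break, 0 = no break)."""
    return sum(outcomes) / len(outcomes)


def msbr(per_class_outcomes):
    """Mean of per-class break rates, plus the sample std. dev. across classes.

    Assumption: this aggregation is one possible definition, not necessarily
    the one used in the paper.
    """
    rates = [break_rate(o) for o in per_class_outcomes.values()]
    spread = stdev(rates) if len(rates) > 1 else 0.0
    return mean(rates), spread


# Made-up episode outcomes for one agent archetype, for illustration only.
toy = {
    "denial_of_wallet": [1, 1, 0, 1],
    "authorization_confusion": [1, 0, 0, 1],
    "prompt_injection": [0, 0, 0, 1],
}
m, s = msbr(toy)
print(f"MSBR = {m:.2f} +/- {s:.2f}")
```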

LLM-Guided Dynamic-UMAP for Personalized Federated Graph Learning

Nov 12, 2025

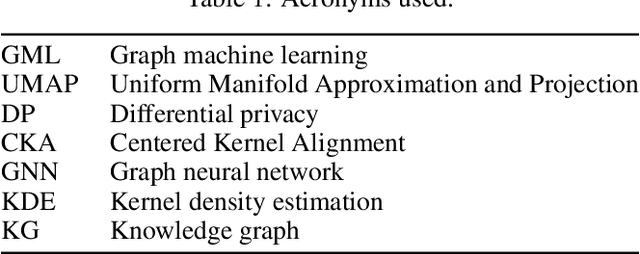

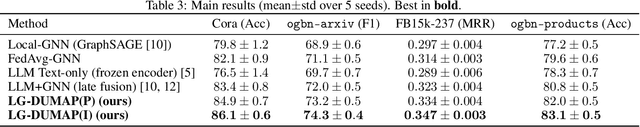

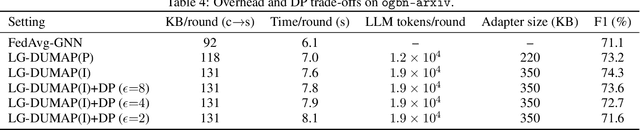

Abstract: We propose a method that uses large language models to assist graph machine learning under personalization and privacy constraints. The approach combines data augmentation for sparse graphs, prompt and instruction tuning to adapt foundation models to graph tasks, and in-context learning to supply few-shot graph reasoning signals. These signals parameterize a Dynamic UMAP manifold of client-specific graph embeddings inside a Bayesian variational objective for personalized federated learning. The method supports node classification and link prediction in low-resource settings and aligns language model latent representations with graph structure via a cross-modal regularizer. We outline a convergence argument for the variational aggregation procedure, describe a differential privacy threat model based on a moments accountant, and present applications to knowledge graph completion, recommendation-style link prediction, and citation and product graphs. We also discuss evaluation considerations for benchmarking LLM-assisted graph machine learning.
