Sunsern Cheamanunkul

Non-Convex Boosting Overcomes Random Label Noise

Sep 09, 2014

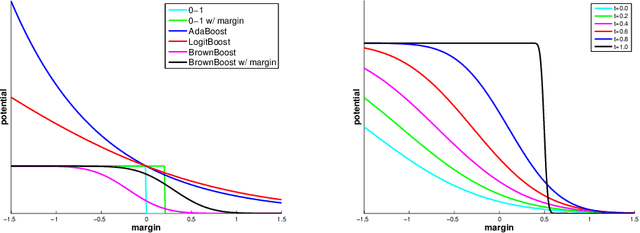

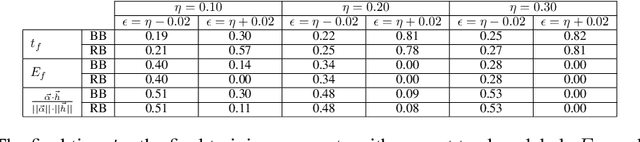

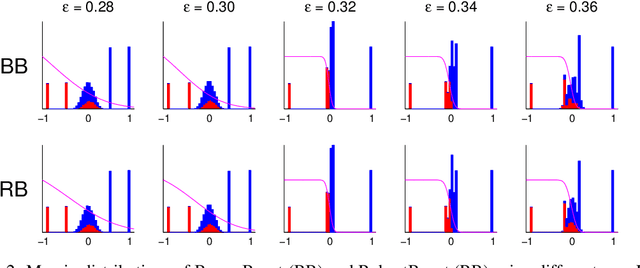

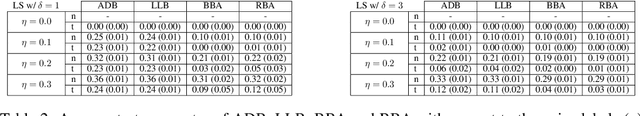

Abstract: The sensitivity of AdaBoost to random label noise is a well-studied problem. LogitBoost, BrownBoost and RobustBoost are boosting algorithms claimed to be less sensitive to noise than AdaBoost. We present the results of experiments evaluating these algorithms on both synthetic and real datasets. We compare their performance on each dataset when the labels are corrupted by different levels of independent label noise. In the presence of random label noise, we found that BrownBoost and RobustBoost perform significantly better than AdaBoost and LogitBoost, while the difference within each of these two pairs of algorithms is insignificant. We provide an explanation for the difference based on the margin distributions of the algorithms.
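The two ingredients named in the abstract, independent label noise and margin distributions, can be illustrated with a minimal sketch. The function names, the ±1 label encoding, and the use of the standard normalized voting margin are assumptions made for illustration; the abstract does not describe the paper's actual experimental code.

```python
import random


def flip_labels(labels, noise_rate, seed=0):
    """Flip each +1/-1 label independently with probability noise_rate.

    Hypothetical helper: models "independent label noise" as one
    independent coin flip per example.
    """
    rng = random.Random(seed)
    return [-y if rng.random() < noise_rate else y for y in labels]


def normalized_margins(predictions, labels, alphas):
    """Normalized margin of each example under a weighted vote.

    predictions[t][i] is the +1/-1 output of weak hypothesis t on example i,
    alphas[t] is its vote weight. The margin of example i is
    y_i * sum_t(alpha_t * h_t(x_i)) / sum_t(|alpha_t|), a value in [-1, 1].
    """
    total = sum(abs(a) for a in alphas)
    margins = []
    for i, y in enumerate(labels):
        vote = sum(a * h[i] for a, h in zip(alphas, predictions))
        margins.append(y * vote / total)
    return margins
```

Sorting the returned margins (or plotting their cumulative distribution) gives the margin distribution of an ensemble; negative margins correspond to training examples the weighted vote misclassifies.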

Co-adaptation in a Handwriting Recognition System

Sep 09, 2014

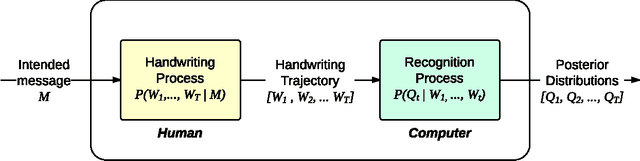

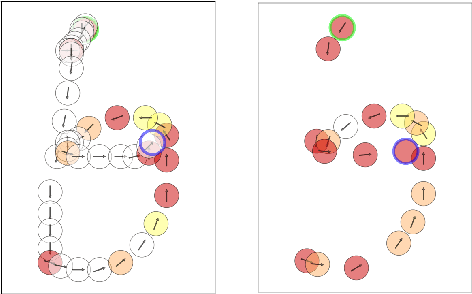

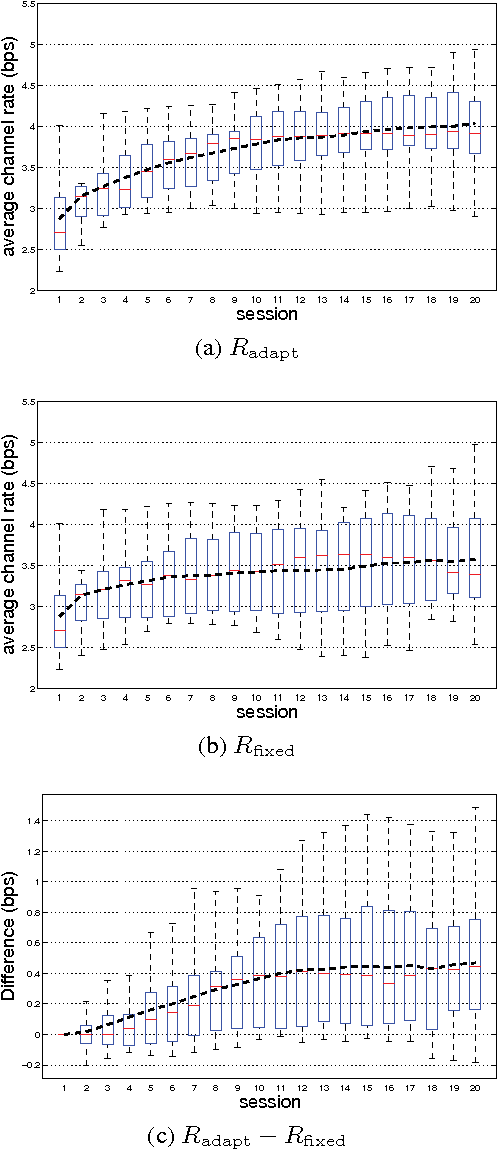

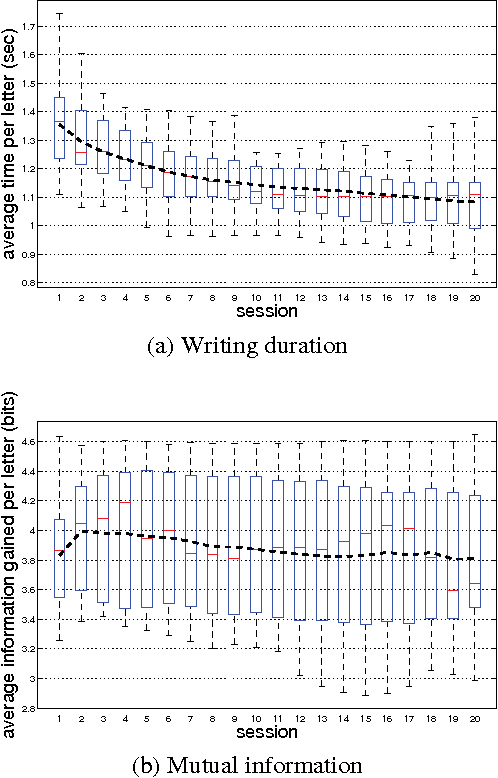

Abstract: Handwriting is a natural and versatile method for human-computer interaction, especially on small mobile devices such as smartphones. However, as handwriting varies significantly from person to person, it is difficult to design handwriting recognizers that perform well for all users. A natural solution is to use machine learning to adapt the recognizer to the user. One complicating factor is that, as the computer adapts to the user, the user also adapts to the computer and probably changes their handwriting. This paper investigates the dynamics of co-adaptation, a process in which both the computer and the user adapt their behaviors in order to improve the speed and accuracy of communication through handwriting. We devised an information-theoretic framework for quantifying the efficiency of a handwriting system, where the system includes both the user and the computer. Using this framework, we analyzed data collected from an adaptive handwriting recognition system and characterized the impact of machine adaptation and of human adaptation. We found that both machine adaptation and human adaptation have a significant impact on the input rate and must be considered together in order to improve the efficiency of the system as a whole.
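The abstract does not spell out its information-theoretic measure. As one plausible illustration only, the sketch below treats the user plus recognizer as a noisy channel, estimates the mutual information between intended and recognized symbols from a confusion-count matrix, and divides by the average writing time per symbol to get an input rate in bits per second. All names and the choice of mutual information are assumptions, not the paper's definitions.

```python
import math


def mutual_information_bits(counts):
    """Mutual information (in bits) of a confusion-count matrix.

    counts[i][j] is how often intended symbol i was recognized as symbol j.
    Empirical frequencies are used as probability estimates.
    """
    total = sum(sum(row) for row in counts)
    row_sums = [sum(row) for row in counts]
    col_sums = [sum(col) for col in zip(*counts)]
    mi = 0.0
    for i, row in enumerate(counts):
        for j, c in enumerate(row):
            if c > 0:  # zero cells contribute nothing to the sum
                joint = c / total
                mi += joint * math.log2(c * total / (row_sums[i] * col_sums[j]))
    return mi


def input_rate_bits_per_second(counts, seconds_per_symbol):
    """Hypothetical efficiency measure: bits conveyed per second of writing."""
    return mutual_information_bits(counts) / seconds_per_symbol
```

Under this assumed measure, the rate rises either when recognition becomes more accurate (more bits per symbol) or when the user writes faster (fewer seconds per symbol), which is why the two kinds of adaptation would need to be considered together.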
