Naresh Balaji Ravichandran

Dynamic Heuristic Neuromorphic Solver for the Edge User Allocation Problem with Bayesian Confidence Propagation Neural Network

Feb 01, 2026Abstract:We propose a neuromorphic solver for the NP-hard Edge User Allocation problem using an attractor network with Winner-Takes-All (WTA) mechanism implemented with the Bayesian Confidence Propagation Neural Network (BCPNN) framework. Unlike previous energy-based attractor networks, our solver uses dynamic heuristic biasing to guide allocations in real time and introduces a "no allocation" state to each WTA motif, achieving near-optimal performance with an empirically upper-bounded number of time steps. The approach is compatible with neuromorphic architectures and may offer improvements in energy efficiency.

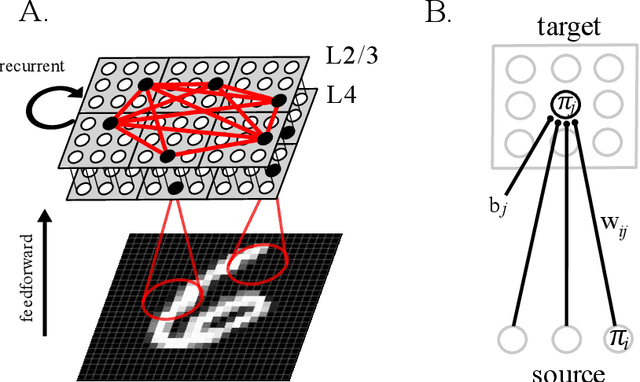

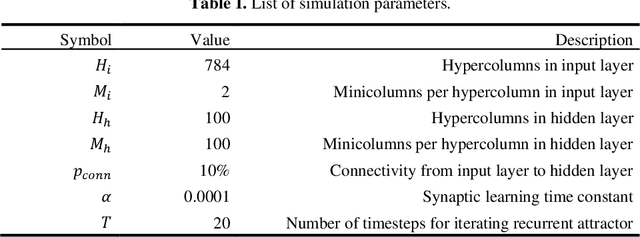

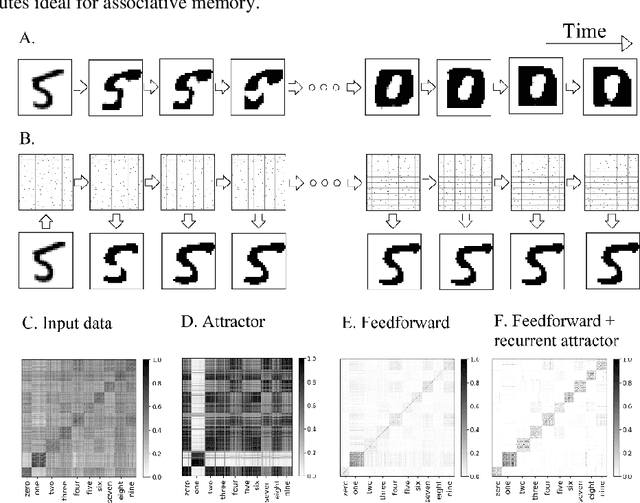

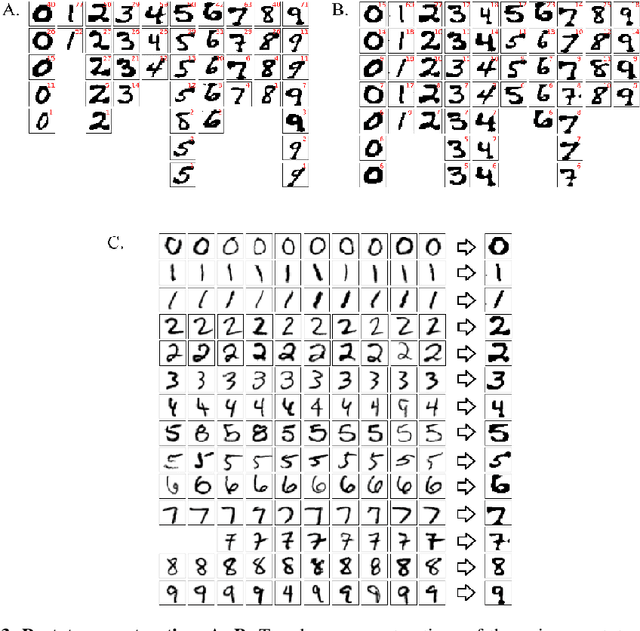

Brain-like combination of feedforward and recurrent network components achieves prototype extraction and robust pattern recognition

Jun 30, 2022

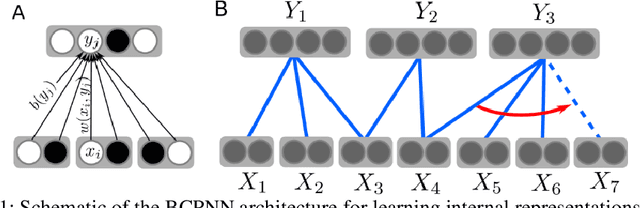

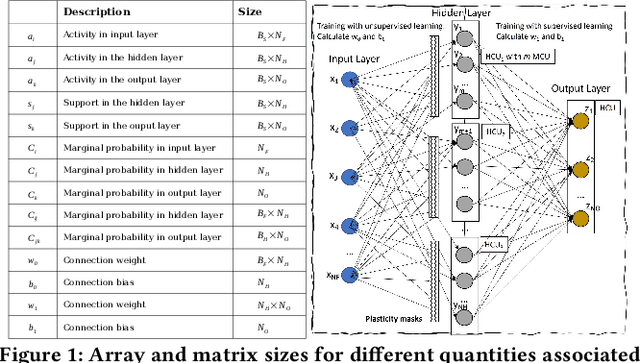

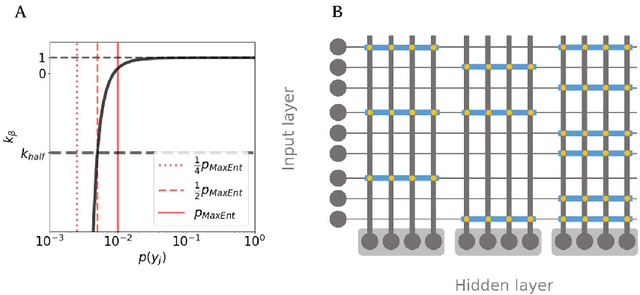

Abstract:Associative memory has been a prominent candidate for the computation performed by the massively recurrent neocortical networks. Attractor networks implementing associative memory have offered mechanistic explanation for many cognitive phenomena. However, attractor memory models are typically trained using orthogonal or random patterns to avoid interference between memories, which makes them unfeasible for naturally occurring complex correlated stimuli like images. We approach this problem by combining a recurrent attractor network with a feedforward network that learns distributed representations using an unsupervised Hebbian-Bayesian learning rule. The resulting network model incorporates many known biological properties: unsupervised learning, Hebbian plasticity, sparse distributed activations, sparse connectivity, columnar and laminar cortical architecture, etc. We evaluate the synergistic effects of the feedforward and recurrent network components in complex pattern recognition tasks on the MNIST handwritten digits dataset. We demonstrate that the recurrent attractor component implements associative memory when trained on the feedforward-driven internal (hidden) representations. The associative memory is also shown to perform prototype extraction from the training data and make the representations robust to severely distorted input. We argue that several aspects of the proposed integration of feedforward and recurrent computations are particularly attractive from a machine learning perspective.

Semi-supervised learning with Bayesian Confidence Propagation Neural Network

Jun 29, 2021

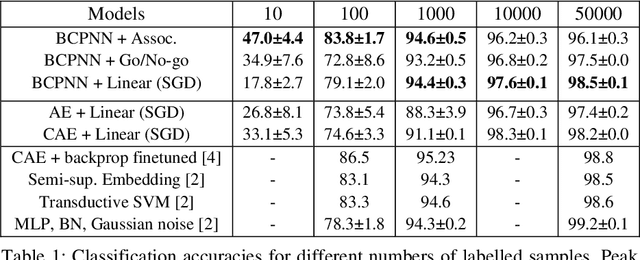

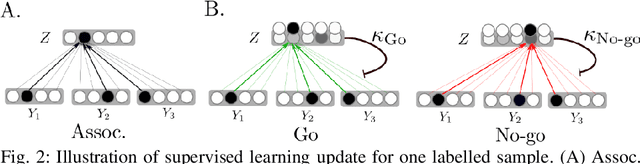

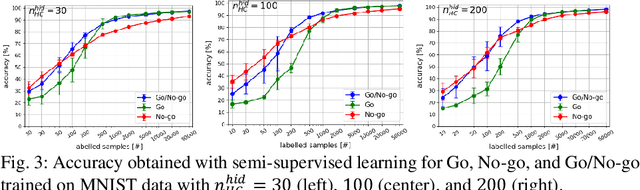

Abstract:Learning internal representations from data using no or few labels is useful for machine learning research, as it allows using massive amounts of unlabeled data. In this work, we use the Bayesian Confidence Propagation Neural Network (BCPNN) model developed as a biologically plausible model of the cortex. Recent work has demonstrated that these networks can learn useful internal representations from data using local Bayesian-Hebbian learning rules. In this work, we show how such representations can be leveraged in a semi-supervised setting by introducing and comparing different classifiers. We also evaluate and compare such networks with other popular semi-supervised classifiers.

StreamBrain: An HPC Framework for Brain-like Neural Networks on CPUs, GPUs and FPGAs

Jun 09, 2021

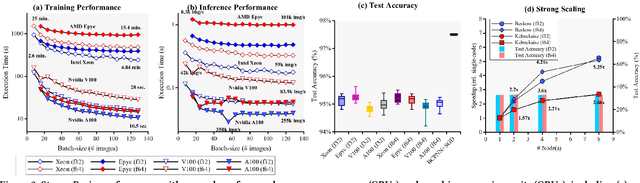

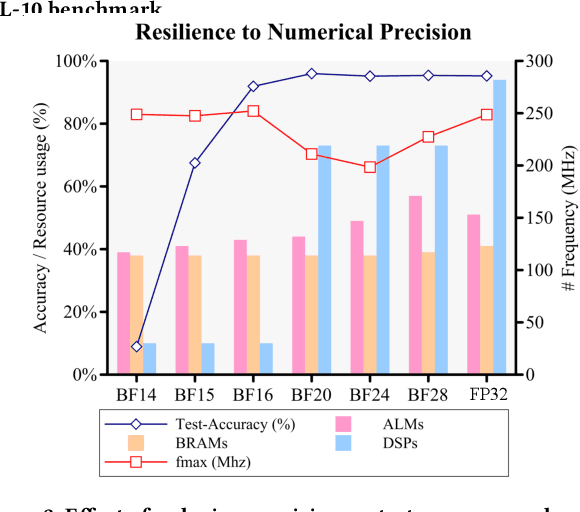

Abstract:The modern deep learning method based on backpropagation has surged in popularity and has been used in multiple domains and application areas. At the same time, there are other -- less-known -- machine learning algorithms with a mature and solid theoretical foundation whose performance remains unexplored. One such example is the brain-like Bayesian Confidence Propagation Neural Network (BCPNN). In this paper, we introduce StreamBrain -- a framework that allows neural networks based on BCPNN to be practically deployed in High-Performance Computing systems. StreamBrain is a domain-specific language (DSL), similar in concept to existing machine learning (ML) frameworks, and supports backends for CPUs, GPUs, and even FPGAs. We empirically demonstrate that StreamBrain can train the well-known ML benchmark dataset MNIST within seconds, and we are the first to demonstrate BCPNN on STL-10 size networks. We also show how StreamBrain can be used to train with custom floating-point formats and illustrate the impact of using different bfloat variations on BCPNN using FPGAs.

Brain-like approaches to unsupervised learning of hidden representations -- a comparative study

May 06, 2020

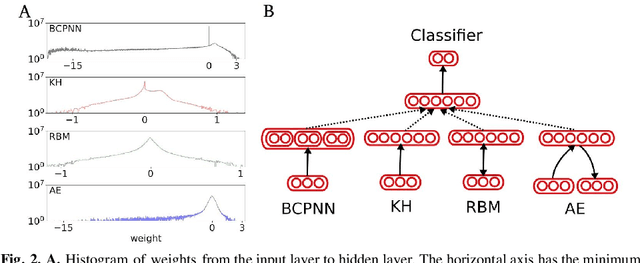

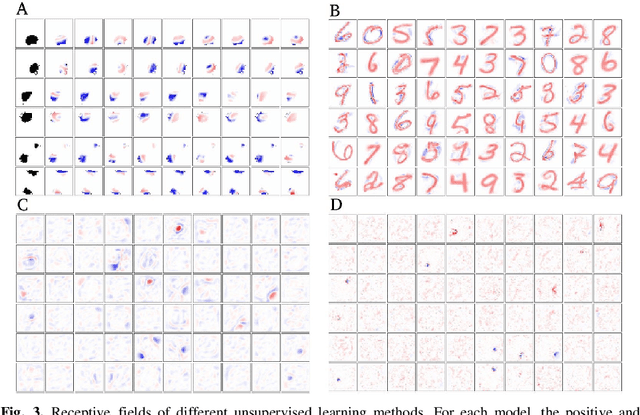

Abstract:Unsupervised learning of hidden representations has been one of the most vibrant research directions in machine learning in recent years. In this work we study the brain-like Bayesian Confidence Propagating Neural Network (BCPNN) model, recently extended to extract sparse distributed high-dimensional representations. The saliency and separability of the hidden representations when trained on MNIST dataset is studied using an external classifier, and compared with other unsupervised learning methods that include restricted Boltzmann machines and autoencoders.

Learning representations in Bayesian Confidence Propagation neural networks

Mar 27, 2020

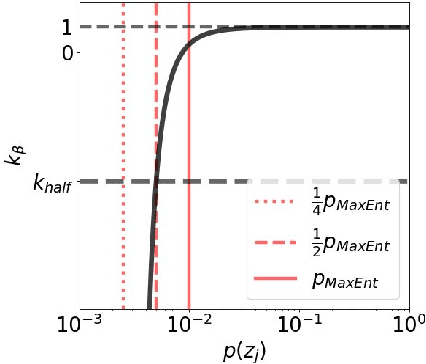

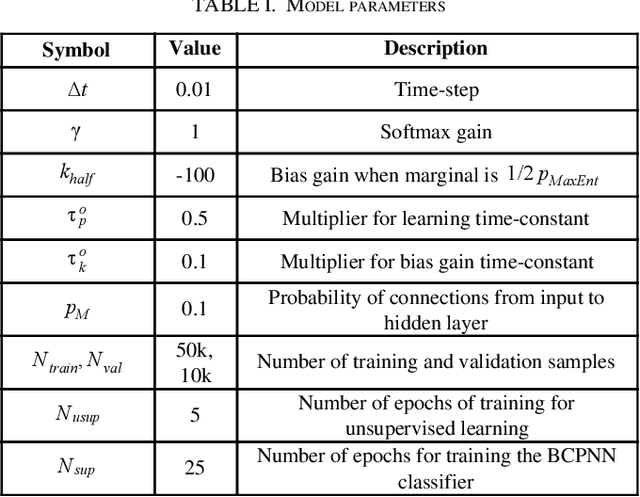

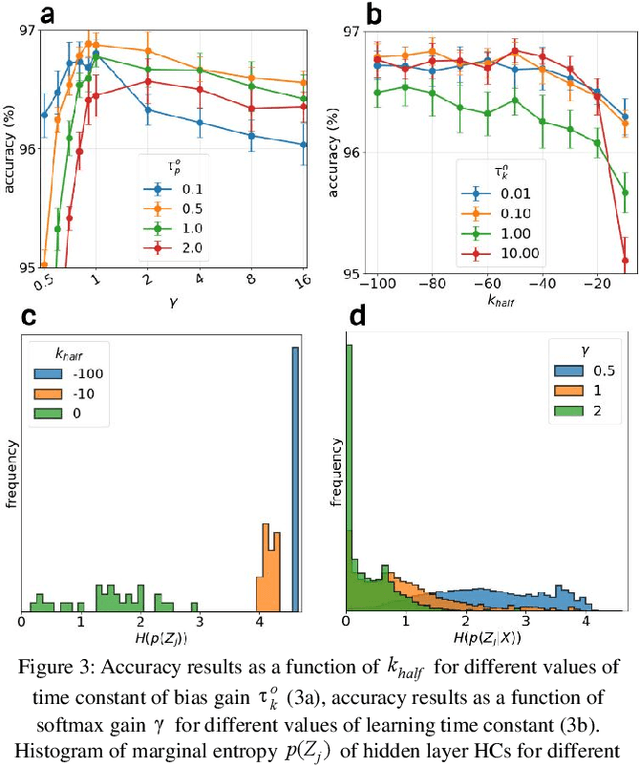

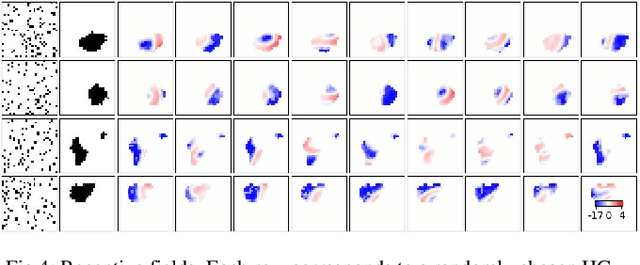

Abstract:Unsupervised learning of hierarchical representations has been one of the most vibrant research directions in deep learning during recent years. In this work we study biologically inspired unsupervised strategies in neural networks based on local Hebbian learning. We propose new mechanisms to extend the Bayesian Confidence Propagating Neural Network (BCPNN) architecture, and demonstrate their capability for unsupervised learning of salient hidden representations when tested on the MNIST dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge