Soumya Ray

Optimizing Drug Design by Merging Generative AI With Active Learning Frameworks

May 04, 2023

Abstract: Traditional drug discovery programs are being transformed by the advent of machine learning methods. Among these, Generative AI methods (GM) have gained attention due to their ability to design new molecules and enhance specific properties of existing ones. However, current GM methods have limitations, such as low affinity towards the target, unknown ADME/PK properties, or the lack of synthetic tractability. To improve the applicability domain of GM methods, we have developed a workflow based on a variational autoencoder coupled with active learning steps. The designed GM workflow iteratively learns from molecular metrics, including drug-likeness, synthesizability, similarity, and docking scores. In addition, we included a hierarchical set of criteria based on advanced molecular modeling simulations during a final selection step. We tested our GM workflow on two model systems, CDK2 and KRAS. In both cases, our model generated chemically viable molecules with a high predicted affinity toward the targets. In particular, the proportion of high-affinity molecules inferred by our GM workflow was significantly greater than that in the training data. Notably, we also uncovered novel scaffolds significantly dissimilar to those known for each target. These results highlight the potential of our GM workflow to explore novel chemical space for specific targets, thereby opening up new possibilities for drug discovery endeavors.

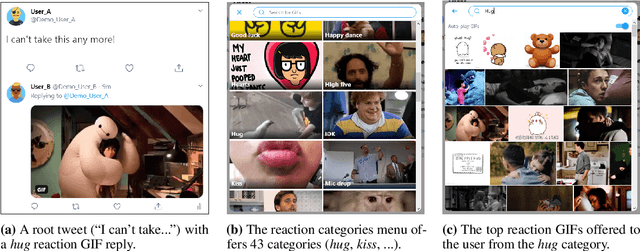

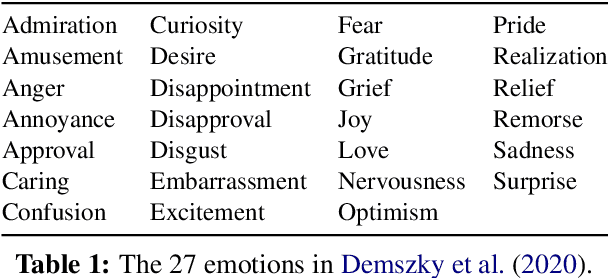

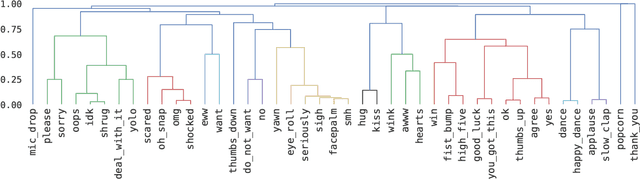

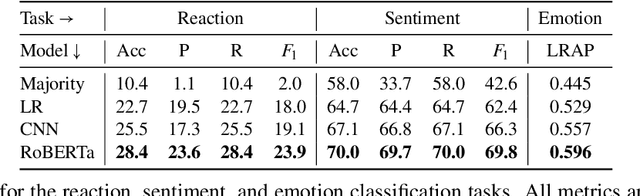

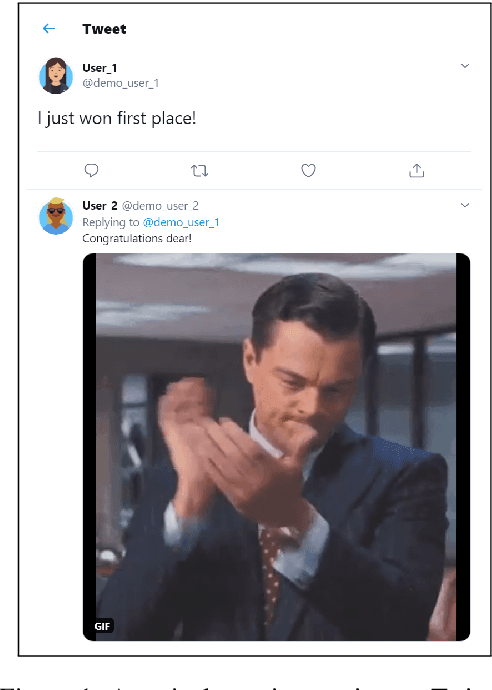

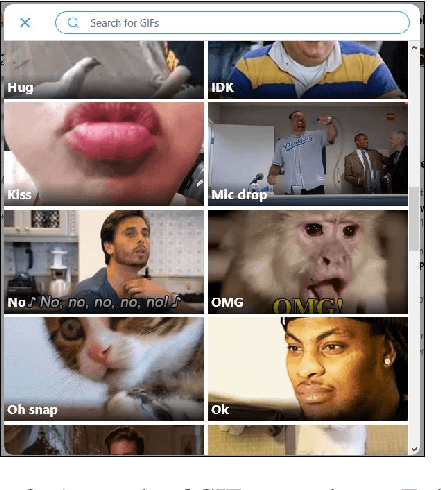

Happy Dance, Slow Clap: Using Reaction GIFs to Predict Induced Affect on Twitter

May 20, 2021

Abstract: Datasets with induced emotion labels are scarce but of utmost importance for many NLP tasks. We present a new, automated method for collecting texts along with their induced reaction labels. The method exploits the online use of reaction GIFs, which capture complex affective states. We show how to augment the data with induced emotion and induced sentiment labels. We use our method to create and publish ReactionGIF, a first-of-its-kind affective dataset of 30K tweets. We provide baselines for three new tasks, including induced sentiment prediction and multilabel classification of induced emotions. Our method and dataset open new research opportunities in emotion detection and affective computing.

Beyond Fair Pay: Ethical Implications of NLP Crowdsourcing

Apr 20, 2021

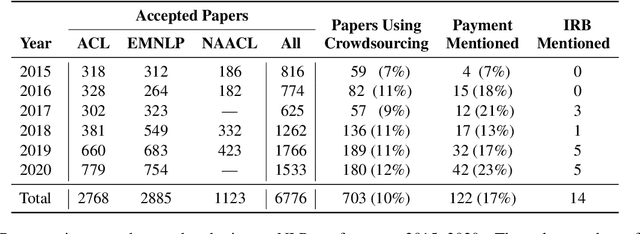

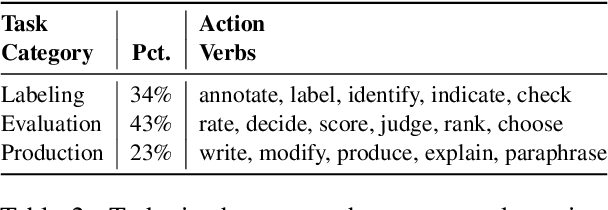

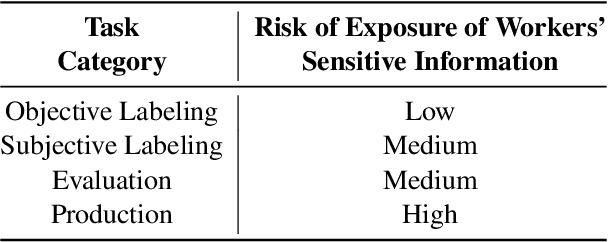

Abstract: The use of crowdworkers in NLP research is growing rapidly, in tandem with the exponential increase in research production in machine learning and AI. Ethical discussion regarding the use of crowdworkers within the NLP research community is typically confined in scope to issues related to labor conditions such as fair pay. We draw attention to the lack of ethical considerations related to the various tasks performed by workers, including labeling, evaluation, and production. We find that the Final Rule, the common ethical framework used by researchers, did not anticipate the use of online crowdsourcing platforms for data collection, resulting in gaps between the spirit and practice of human-subjects ethics in NLP research. We enumerate common scenarios where crowdworkers performing NLP tasks are at risk of harm. We thus recommend that researchers evaluate these risks by considering the three ethical principles set up by the Belmont Report. We also clarify some common misconceptions regarding the Institutional Review Board (IRB) application. We hope this paper will serve to reopen the discussion within our community regarding the ethical use of crowdworkers.

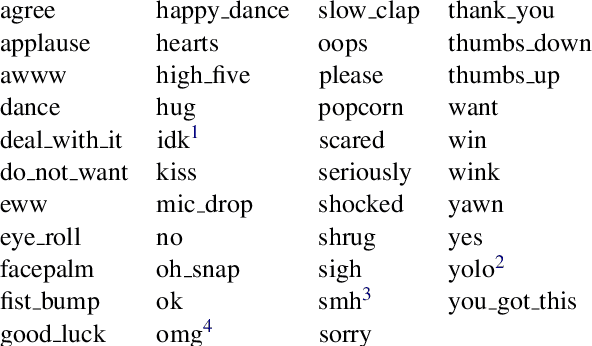

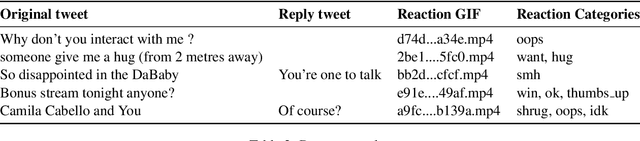

SocialNLP EmotionGIF 2020 Challenge Overview: Predicting Reaction GIF Categories on Social Media

Feb 24, 2021

Abstract: We present an overview of the EmotionGIF 2020 Challenge, held at the 8th International Workshop on Natural Language Processing for Social Media (SocialNLP), in conjunction with ACL 2020. The challenge required predicting affective reactions to online texts, and included the EmotionGIF dataset, with tweets labeled for the reaction categories. The novel dataset included 40K tweets with their reaction GIFs. Due to the special circumstances of the year 2020, two rounds of the competition were conducted. A total of 84 teams registered for the task. Of these, 25 teams successfully submitted entries to the evaluation phase in the first round, while 13 teams participated successfully in the second round. Of the top participants, five teams presented a technical report and shared their code. The top score of the winning team using the Recall@K metric was 62.47%.
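
For readers unfamiliar with the Recall@K metric named in this abstract, here is a minimal Python sketch of one common way to compute it for multi-label predictions. It is an illustration under assumptions, not the challenge's official scorer: the function names, the category labels in the example, and the choice of `k` are all made up for demonstration, and the abstract does not state which value of K was used.

```python
from typing import Sequence, Set


def recall_at_k(true_labels: Set[str], ranked_predictions: Sequence[str], k: int) -> float:
    """Fraction of the true labels that appear among the top-k predictions."""
    if not true_labels:
        raise ValueError("each sample needs at least one true label")
    top_k = set(ranked_predictions[:k])
    return len(true_labels & top_k) / len(true_labels)


def mean_recall_at_k(
    all_true: Sequence[Set[str]],
    all_ranked: Sequence[Sequence[str]],
    k: int,
) -> float:
    """Average the per-sample Recall@K over the whole evaluation set."""
    scores = [recall_at_k(t, r, k) for t, r in zip(all_true, all_ranked)]
    return sum(scores) / len(scores)


# Hypothetical reaction categories, invented for this example.
gold = [{"applause", "agree"}, {"facepalm"}]
ranked = [["agree", "hug", "applause"], ["shrug", "facepalm", "eyeroll"]]

score = mean_recall_at_k(gold, ranked, k=2)
print(f"Mean Recall@2 = {score:.2f}")
```

With `k=2`, the first sample recovers one of its two true labels and the second recovers its only label, so the mean is the average of 0.5 and 1.0.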

Reactive Supervision: A New Method for Collecting Sarcasm Data

Sep 28, 2020

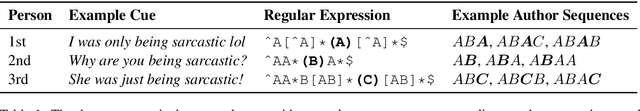

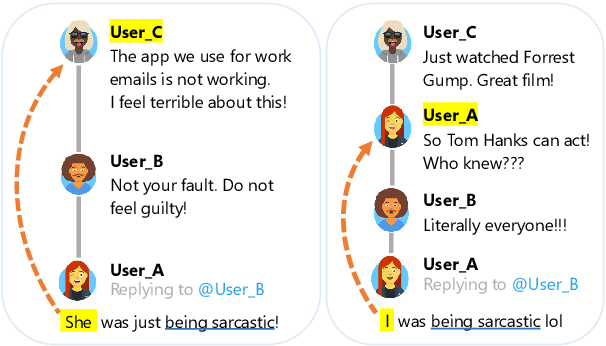

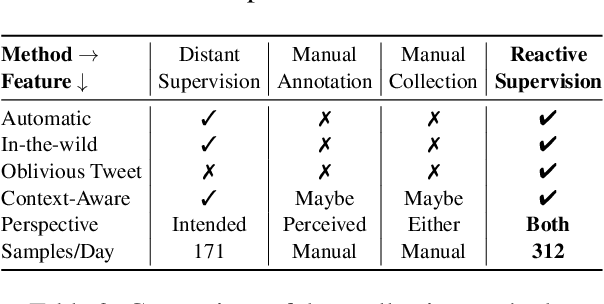

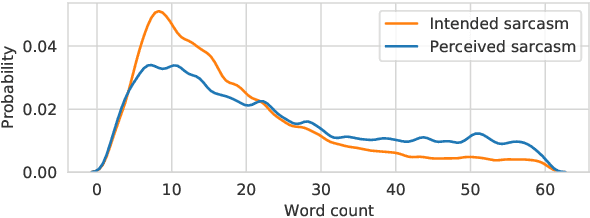

Abstract: Sarcasm detection is an important task in affective computing, requiring large amounts of labeled data. We introduce reactive supervision, a novel data collection method that utilizes the dynamics of online conversations to overcome the limitations of existing data collection techniques. We use the new method to create and release a first-of-its-kind large dataset of tweets with sarcasm perspective labels and new contextual features. The dataset is expected to advance sarcasm detection research. Our method can be adapted to other affective computing domains, thus opening up new research opportunities.

Learning Bayesian Network Structure from Correlation-Immune Data

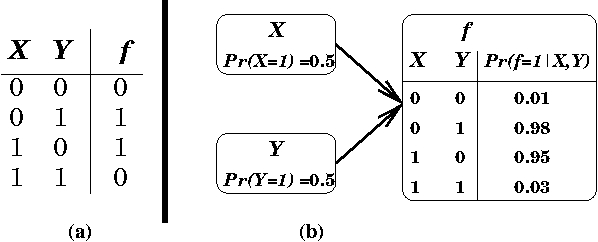

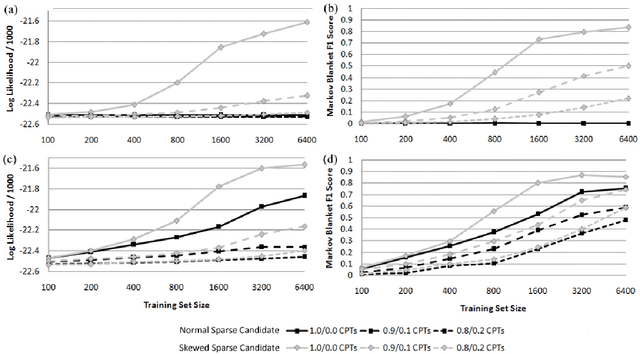

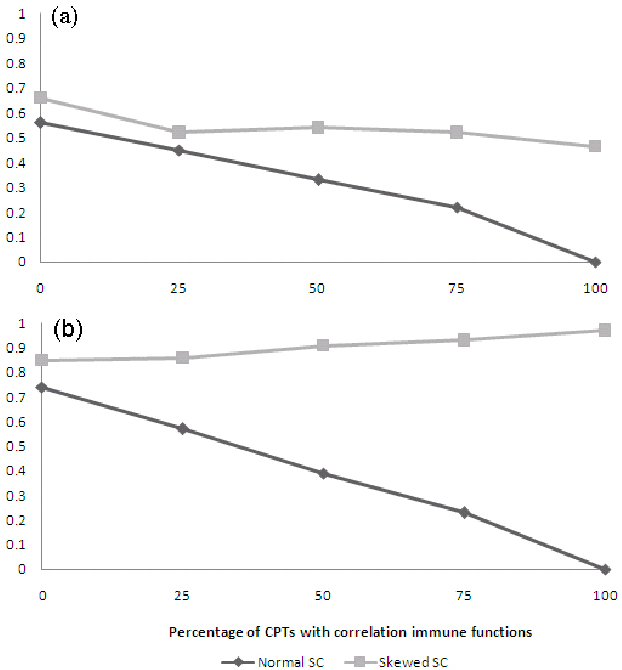

Jun 20, 2012

Abstract: Searching the complete space of possible Bayesian networks is intractable for problems of interesting size, so Bayesian network structure learning algorithms, such as the commonly used Sparse Candidate algorithm, employ heuristics. However, these heuristics also restrict the types of relationships that can be learned exclusively from data. They are unable to learn relationships that exhibit "correlation-immunity", such as parity. To learn Bayesian networks in the presence of correlation-immune relationships, we extend the Sparse Candidate algorithm with a technique called "skewing". This technique uses the observation that relationships that are correlation-immune under a specific input distribution may not be correlation-immune under another, sufficiently different distribution. We show that by extending Sparse Candidate with this technique we are able to discover relationships between random variables that are approximately correlation-immune, with a significantly lower computational cost than the alternative of considering multiple parents of a node at a time.
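
The observation behind skewing can be checked on the smallest parity example. The Python sketch below is only an illustration of that observation, not the paper's algorithm: it assumes two independent binary inputs and a target `Y = X1 XOR X2`, and the function name and probability values are chosen for demonstration. It computes the exact covariance between one input and the target by enumerating the four input combinations.

```python
from itertools import product


def covariance_with_parity(p1: float, p2: float) -> float:
    """Exact Cov(X1, Y) for Y = X1 XOR X2 with independent Bernoulli inputs.

    p1 and p2 are P(X1 = 1) and P(X2 = 1).
    """
    e_x1 = e_y = e_x1y = 0.0
    for x1, x2 in product((0, 1), repeat=2):
        # Probability of this input combination under independence.
        weight = (p1 if x1 else 1 - p1) * (p2 if x2 else 1 - p2)
        y = x1 ^ x2
        e_x1 += weight * x1
        e_y += weight * y
        e_x1y += weight * x1 * y
    return e_x1y - e_x1 * e_y


# Uniform inputs: X1 alone carries no linear signal about the parity target.
uniform = covariance_with_parity(0.5, 0.5)

# Skewing the distribution of X2 makes X1 visibly correlated with Y.
skewed = covariance_with_parity(0.5, 0.75)

print(f"uniform: {uniform:+.3f}, skewed: {skewed:+.3f}")
```

In closed form the covariance is `p1 * (1 - p1) * (1 - 2 * p2)`, which vanishes exactly when `p2 = 0.5`. This is why a heuristic that scores candidate parents one at a time cannot detect the relationship under the uniform distribution, yet can detect it once the distribution is sufficiently different.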
