Son N. Tran

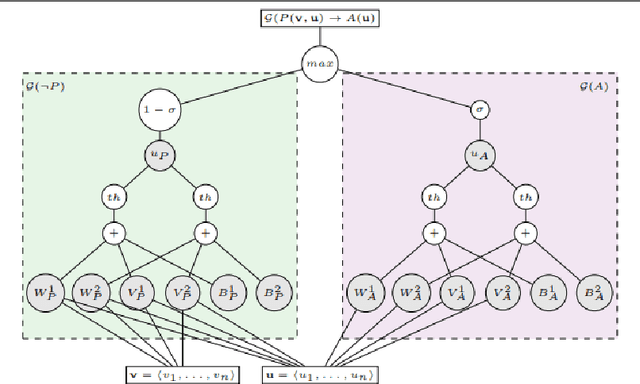

Neural-Symbolic Computing: An Effective Methodology for Principled Integration of Machine Learning and Reasoning

May 15, 2019

Abstract: Current advances in Artificial Intelligence and machine learning in general, and deep learning in particular, have reached unprecedented impact not only across research communities but also over popular media channels. However, concerns about the interpretability and accountability of AI have been raised by influential thinkers. In spite of the recent impact of AI, several works have identified the need for principled knowledge representation and reasoning mechanisms integrated with deep learning-based systems to provide sound and explainable models for such systems. Neural-symbolic computing aims at integrating, as foreseen by Valiant, two of the most fundamental cognitive abilities: the ability to learn from the environment, and the ability to reason from what has been learned. Neural-symbolic computing has been an active topic of research for many years, reconciling the advantages of robust learning in neural networks with the reasoning and interpretability of symbolic representation. In this paper, we survey recent accomplishments of neural-symbolic computing as a principled methodology for integrated machine learning and reasoning. We illustrate the effectiveness of the approach by outlining the main characteristics of the methodology: principled integration of neural learning with symbolic knowledge representation and reasoning, allowing for the construction of explainable AI systems. The insights provided by neural-symbolic computing shed new light on the increasingly prominent need for interpretable and accountable AI systems.

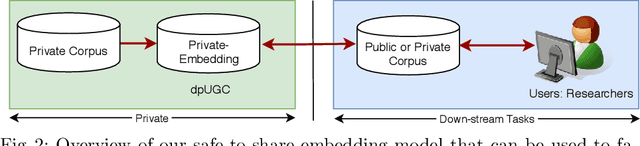

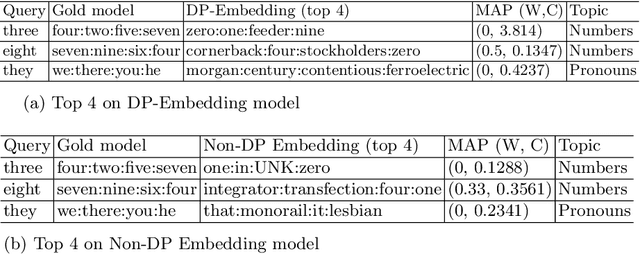

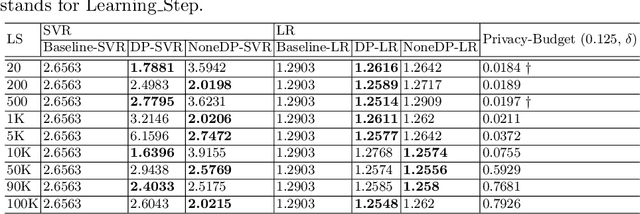

dpUGC: Learn Differentially Private Representation for User Generated Contents

Mar 25, 2019

Abstract: This paper first proposes a simple yet efficient generalized approach to applying differential privacy to text representation (i.e., word embedding). Building on it, we propose a user-level approach to learning a personalized differentially private word embedding model on user generated contents (UGC). To the best of our knowledge, this is the first work on learning a user-level differentially private word embedding model from text for sharing. The proposed approaches protect the privacy of the individual from re-identification and, in particular, provide a better trade-off between privacy and data utility on UGC data for sharing. The experimental results show that the trained embedding models are applicable to classic text analysis tasks (e.g., regression). Moreover, the proposed approaches to learning differentially private embedding models are both framework- and data-independent, which facilitates deployment and sharing. The source code is available at https://github.com/sonvx/dpText.

ETNLP: A Toolkit for Extraction, Evaluation and Visualization of Pre-trained Word Embeddings

Mar 11, 2019

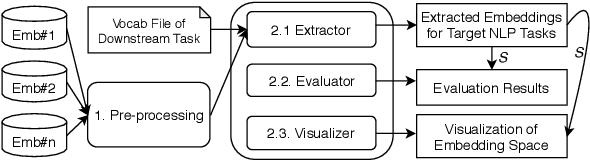

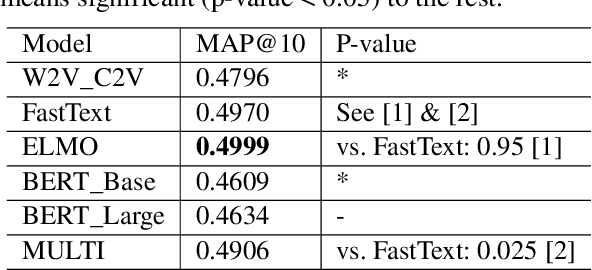

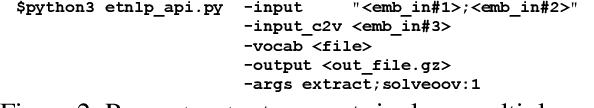

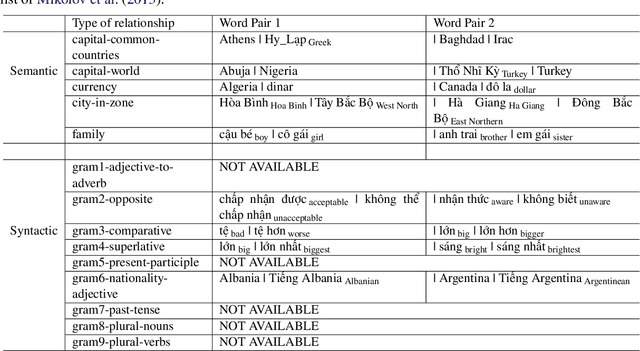

Abstract: In this paper, we introduce a comprehensive toolkit, ETNLP, which can evaluate, extract, and visualize multiple sets of pre-trained word embeddings. First, for evaluation, ETNLP analyses the quality of pre-trained embeddings based on an input word analogy list. Second, for extraction, ETNLP provides a subset of the embeddings to be used in downstream NLP tasks. Finally, ETNLP has a visualization module for exploring the embedded words interactively. We demonstrate the effectiveness of ETNLP on our pre-trained word embeddings in Vietnamese. Specifically, we create a large Vietnamese word analogy list to evaluate the embeddings. We then utilize the pre-trained embeddings for the named entity recognition (NER) task in Vietnamese and achieve new state-of-the-art results on a benchmark dataset for the NER task. A video demonstration of ETNLP is available at https://vimeo.com/317599106. The source code and data are available at https://github.com/vietnlp/etnlp.
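As a rough illustration of evaluating embeddings against a word analogy list, the sketch below scores a toy embedding table with the common vector-offset rule (pick the word closest to `b - a + c`). It is not ETNLP's API or necessarily its scoring method: the function names `solve_analogy` and `analogy_accuracy`, the `TOY_EMBEDDINGS` table, and its vectors are all hypothetical, chosen only to make the example self-contained.

```python
import math


def _unit(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def solve_analogy(emb, a, b, c):
    """Answer 'a is to b as c is to ?' with the vector-offset rule.

    Returns the word whose vector is most cosine-similar to b - a + c,
    excluding the three query words themselves.
    """
    target = _unit([vb - va + vc for va, vb, vc in zip(emb[a], emb[b], emb[c])])
    best_word, best_score = None, -math.inf
    for word, vec in emb.items():
        if word in (a, b, c):
            continue
        score = sum(x * y for x, y in zip(_unit(vec), target))
        if score > best_score:
            best_word, best_score = word, score
    return best_word


def analogy_accuracy(emb, analogies):
    """Fraction of (a, b, c, d) analogies for which the predicted word equals d."""
    hits = sum(solve_analogy(emb, a, b, c) == d for a, b, c, d in analogies)
    return hits / len(analogies)


# Hypothetical 3-dimensional embeddings, hand-built for illustration only.
TOY_EMBEDDINGS = {
    "man": [1.0, 0.0, 0.0],
    "woman": [1.0, 1.0, 0.0],
    "king": [1.0, 0.0, 1.0],
    "queen": [1.0, 1.0, 1.0],
    "apple": [0.0, 0.0, -1.0],
}

if __name__ == "__main__":
    analogies = [("man", "woman", "king", "queen")]
    print(analogy_accuracy(TOY_EMBEDDINGS, analogies))
```

With real embeddings, the same accuracy figure could be computed for each embedding set to compare them on one analogy list.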

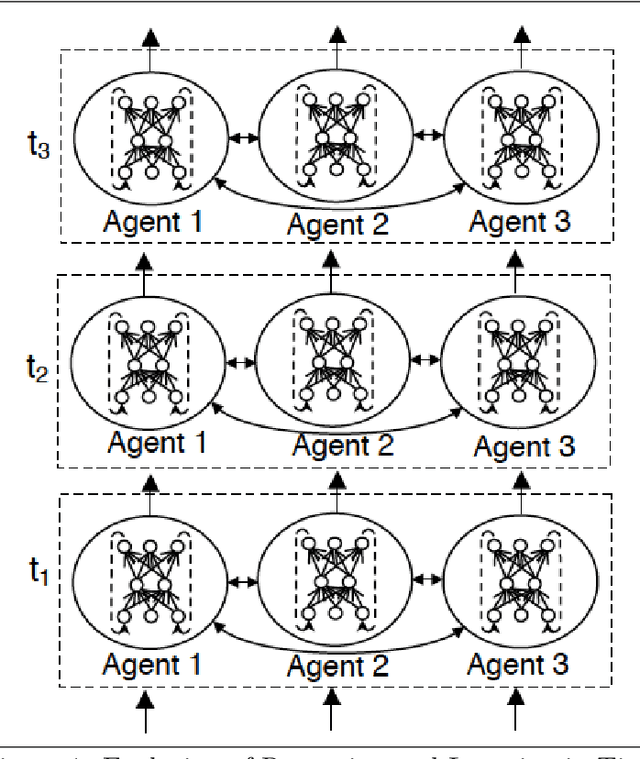

On Multi-resident Activity Recognition in Ambient Smart-Homes

Jun 18, 2018

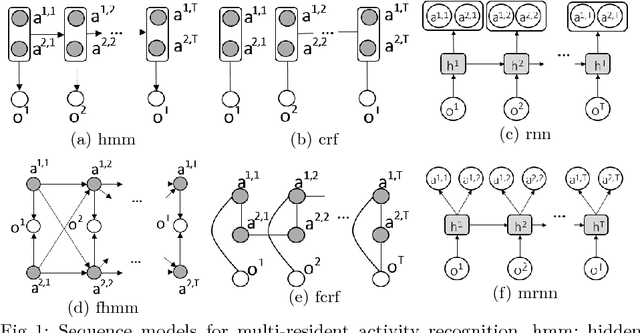

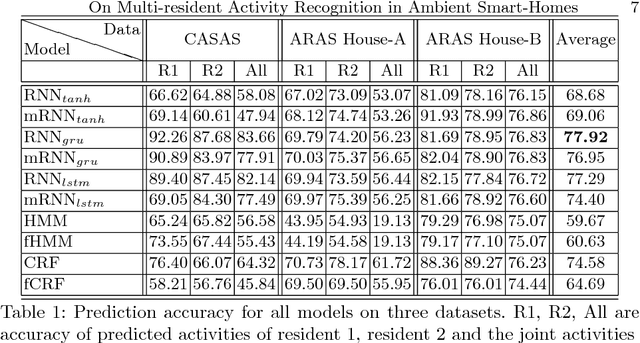

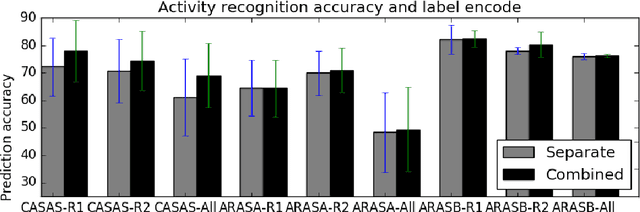

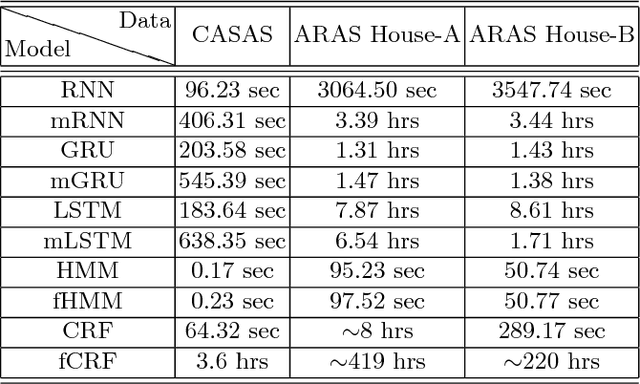

Abstract: Increasing attention to research on activity monitoring in smart homes has motivated the employment of ambient intelligence to reduce the deployment cost and address the privacy issue. Several approaches have been proposed for multi-resident activity recognition; however, a comprehensive benchmark for future research and practical selection of models is still lacking. In this paper we study different methods for multi-resident activity recognition and evaluate them on the same sets of data. The experimental results show that a recurrent neural network with gated recurrent units is better than other models and also considerably efficient, and that using combined activities as single labels is more effective than representing them as separate labels.
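The "combined activities as single labels" idea can be sketched as a label encoding step: each tuple of per-resident activities is mapped to one class index, so a single classifier output covers all residents at once. This is a minimal sketch only; the activity names, the two-resident setting, and the helper names below are assumptions for illustration and are not taken from the paper.

```python
from itertools import product

# Hypothetical activity vocabulary and resident count, for illustration only.
ACTIVITIES = ["sleep", "cook", "watch_tv"]
N_RESIDENTS = 2


def build_joint_label_map(activities, n_residents):
    """Map every tuple of per-resident activities to a single class index."""
    combos = product(activities, repeat=n_residents)
    return {combo: idx for idx, combo in enumerate(combos)}


JOINT_TO_INDEX = build_joint_label_map(ACTIVITIES, N_RESIDENTS)
INDEX_TO_JOINT = {idx: combo for combo, idx in JOINT_TO_INDEX.items()}


def encode(per_resident_activities):
    """Combined-label encoding: one class index for all residents together."""
    return JOINT_TO_INDEX[tuple(per_resident_activities)]


def decode(class_index):
    """Recover the per-resident activities from a combined class index."""
    return INDEX_TO_JOINT[class_index]


if __name__ == "__main__":
    label = encode(["cook", "sleep"])
    print(label, decode(label))
```

The separate-label alternative would instead train one output per resident over `len(ACTIVITIES)` classes; the combined encoding grows to `len(ACTIVITIES) ** N_RESIDENTS` classes but lets the model capture dependencies between residents' activities.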

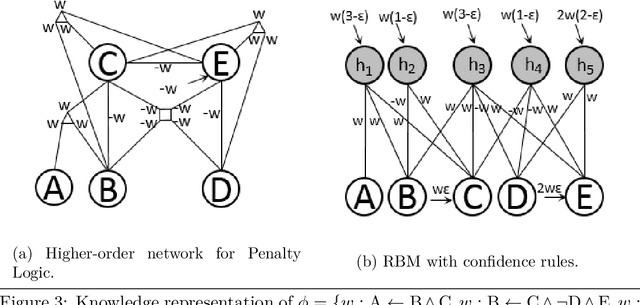

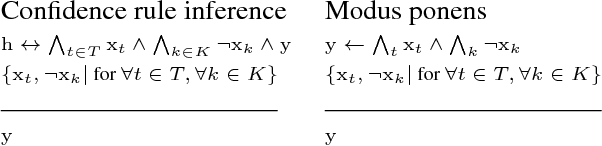

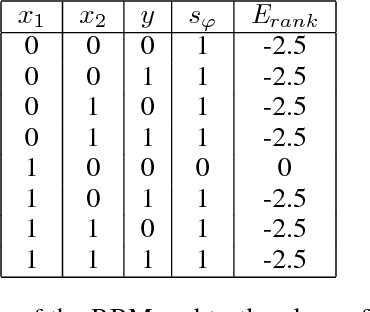

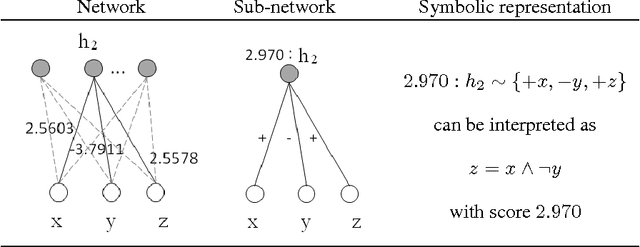

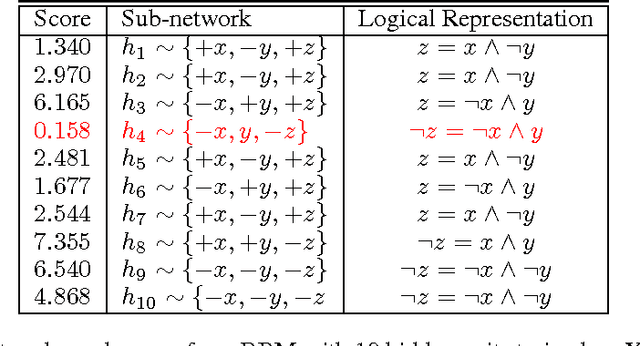

Propositional Knowledge Representation and Reasoning in Restricted Boltzmann Machines

May 29, 2018

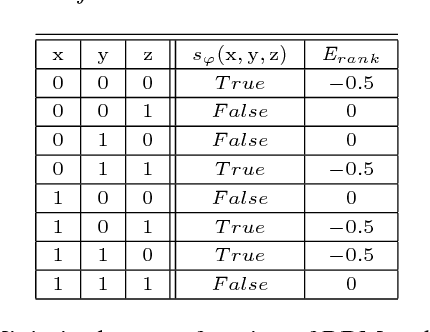

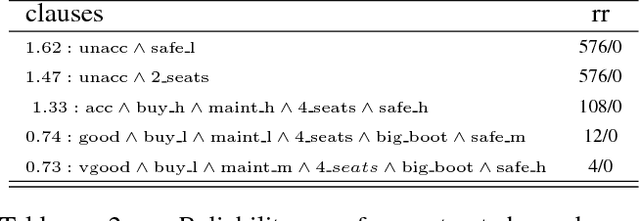

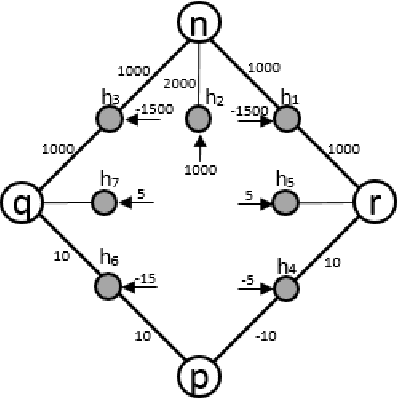

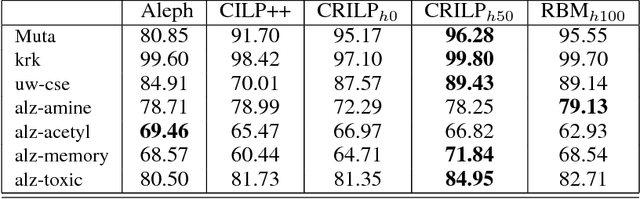

Abstract: While knowledge representation and reasoning are considered the keys to human-level artificial intelligence, connectionist networks have been shown to be successful in a broad range of applications due to their capacity for robust learning and flexible inference under uncertainty. The idea of representing symbolic knowledge in connectionist networks has been well received and has attracted much attention from the research community, as this can establish a foundation for the integration of scalable learning and sound reasoning. In previous work, there exist a number of approaches that map logical inference rules to the feed-forward propagation of artificial neural networks (ANNs). However, the discriminative structure of an ANN requires the separation of input and output variables, which makes it difficult to perform general reasoning where any variable should be inferable. Other approaches address this issue by employing generative models such as symmetric connectionist networks; however, they are difficult and convoluted. In this paper we propose a novel method to represent propositional formulas in restricted Boltzmann machines which is less complex, especially in the cases of logical implications and Horn clauses. An integration system is then developed and evaluated on real datasets, showing promising results.

Linear-Time Sequence Classification using Restricted Boltzmann Machines

Mar 08, 2018

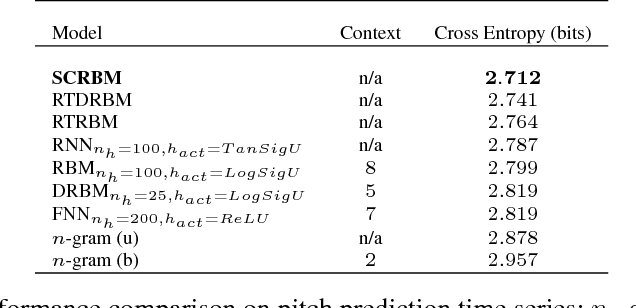

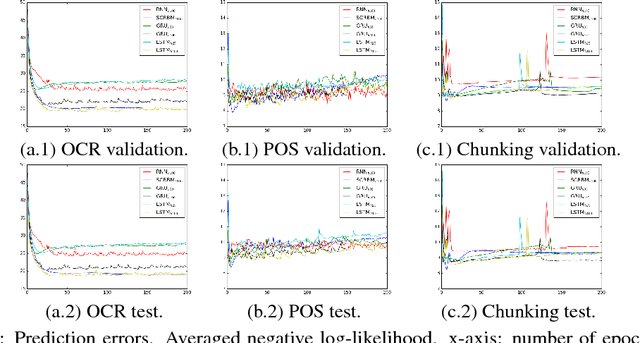

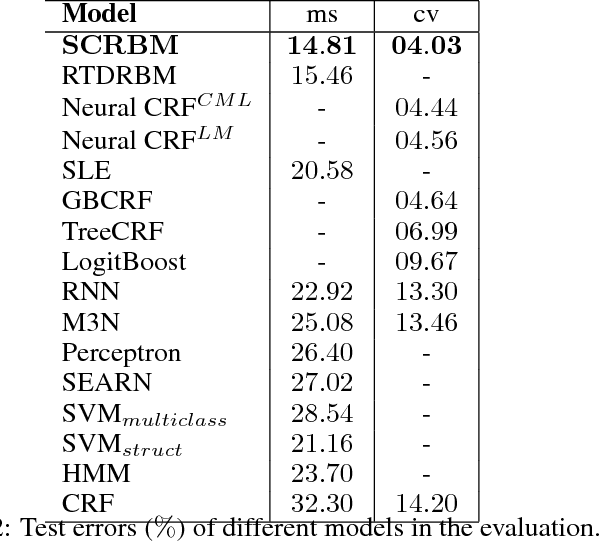

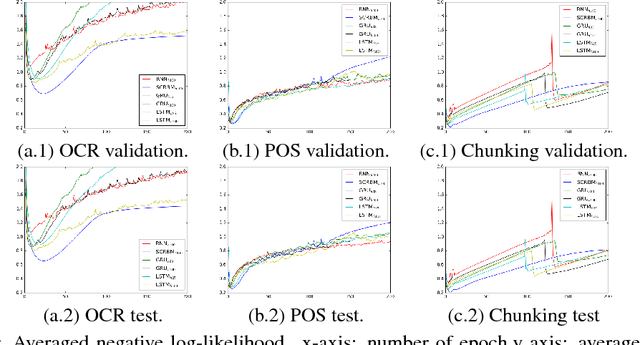

Abstract: Classification of sequence data is a topic of interest for dynamic Bayesian models and Recurrent Neural Networks (RNNs). While the former can explicitly model the temporal dependencies between class variables, the latter are capable of learning representations. Several attempts have been made to improve performance by combining these two approaches or increasing the processing capability of the hidden units in RNNs. This often results in complex models with a large number of learning parameters. In this paper, a compact model is proposed which offers both representation learning and temporal inference of class variables by rolling Restricted Boltzmann Machines (RBMs) and class variables over time. We address the key issue of intractability in this variant of RBMs by optimising a conditional distribution instead of a joint distribution. Experiments reported in the paper on melody modelling and optical character recognition show that the proposed model can outperform the state-of-the-art. Also, the experimental results on optical character recognition, part-of-speech tagging and text chunking demonstrate that our model is comparable to recurrent neural networks with complex memory gates while requiring far fewer parameters.

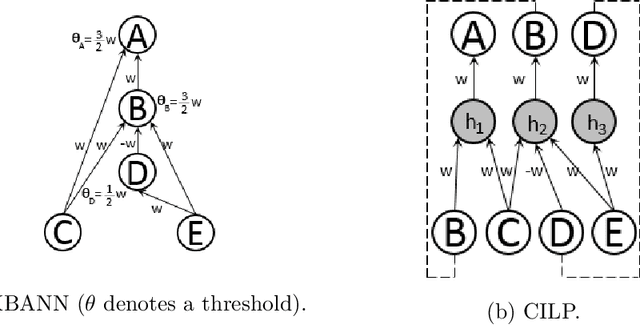

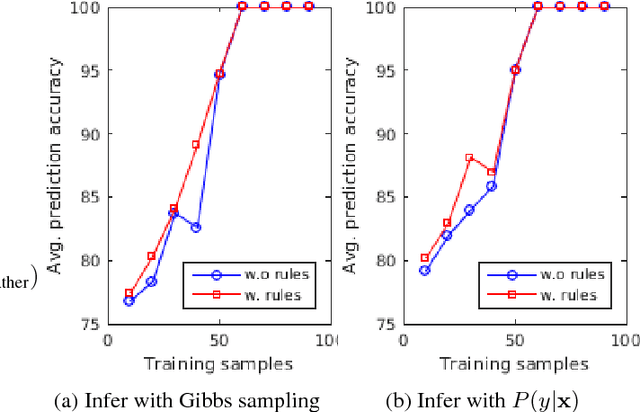

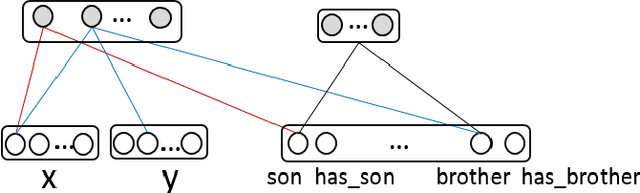

Unsupervised Neural-Symbolic Integration

Jun 22, 2017

Abstract: Symbolic representation has long been considered a language of human intelligence, while neural networks have the advantages of robust computation and the ability to deal with noisy data. Neural-symbolic integration can offer better learning and reasoning while providing a means for interpretability through the representation of symbolic knowledge. Although previous works focus intensively on supervised feedforward neural networks, little has been done for their unsupervised counterparts. In this paper we show how to integrate symbolic knowledge into unsupervised neural networks. We exemplify our approach with knowledge in different forms, including propositional logic for DNA promoter prediction and first-order logic for understanding family relationships.

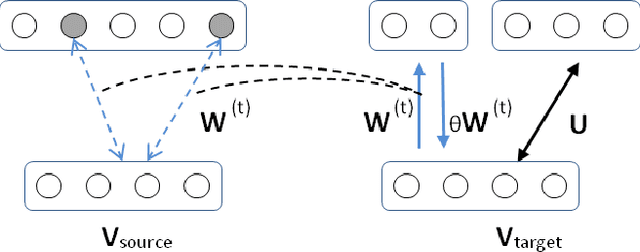

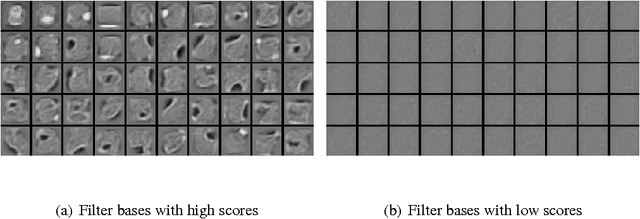

Adaptive Feature Ranking for Unsupervised Transfer Learning

May 28, 2014

Abstract: Transfer Learning is concerned with the application of knowledge gained from solving a problem to a different but related problem domain. In this paper, we propose a method and an efficient algorithm for ranking and selecting representations from a Restricted Boltzmann Machine trained on a source domain to be transferred onto a target domain. Experiments carried out using the MNIST, ICDAR and TiCC image datasets show that the proposed adaptive feature ranking and transfer learning method offers statistically significant improvements on the training of RBMs. Our method is general in that the knowledge chosen by the ranking function does not depend on its relation to any specific target domain, and it works with unsupervised learning and knowledge-based transfer.
