Slawomir Stanczak

D-Band RIS as a Reflect Array: Characterization and Hardware Impairments Study

May 10, 2023

Abstract:Reflecting intelligent surfaces (RISs) have emerged as a promising technology for enhancing wireless communication performance and enabling new applications in 6G networks, with potentially low energy consumption and hardware complexity thanks to their passive nature. Despite the significant growth of the literature on RIS in recent years, which covers various aspects of this technology, the challenges of practically implementing RIS have received less attention. This issue is even more severe at D-band frequencies due to the inherent challenges. This paper aims to connect the requirements and aspirations from the link-level side to the actually achievable RIS hardware, with a focus on the various models for RIS. In order to obtain a realistic hardware scenario while maintaining a manageable parameter set, a static reflect array with reflection behavior similar to that of a reconfigurable one is employed. The results of the study enable an improved RIS design process due to more realistic models. The special focus of this paper is on the hardware impairments, in particular, (i) the effect of specular reflection, and (ii) the beam squint effect.
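
As a rough illustration of the beam squint effect named in the abstract, the sketch below uses the textbook generalized law of reflection and assumes a phase gradient that is fixed at the design frequency and does not change with frequency. This is an idealized model, not the characterization from the paper. The function name, its parameters, and the 140 GHz design frequency are hypothetical. Angles are measured from the surface normal, with specular reflection at an outgoing angle equal to the incidence angle.

```python
import math


def reflection_angle_deg(f_hz: float, f0_hz: float,
                         theta_i_deg: float, theta_r0_deg: float) -> float:
    """Reflection angle at frequency f_hz for a static surface designed at f0_hz.

    Assumption: the surface imposes a frequency-independent phase gradient chosen
    so that, at f0_hz, a wave arriving at theta_i_deg leaves at theta_r0_deg.
    Generalized law of reflection: sin(theta_r) - sin(theta_i) = (lambda / 2 pi) * dPhi/dx.
    """
    sin_i = math.sin(math.radians(theta_i_deg))
    sin_r0 = math.sin(math.radians(theta_r0_deg))
    # The wavelength scales as f0 / f, so the steering term shrinks as f grows.
    sin_r = sin_i + (f0_hz / f_hz) * (sin_r0 - sin_i)
    if abs(sin_r) > 1.0:
        raise ValueError("no propagating reflected beam at this frequency")
    return math.degrees(math.asin(sin_r))


if __name__ == "__main__":
    # Hypothetical design: normal incidence steered to 30 degrees at 140 GHz.
    for f_ghz in (130.0, 140.0, 150.0):
        angle = reflection_angle_deg(f_ghz * 1e9, 140e9, 0.0, 30.0)
        print(f"{f_ghz:.0f} GHz -> {angle:.2f} deg")
```

Under this model the beam points at the design angle only at the design frequency and drifts toward the specular direction as the frequency increases, while a purely specular design (outgoing angle equal to the incidence angle) shows no squint.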

Near-Optimal LOS and Orientation Aware Intelligent Reflecting Surface Placement

May 05, 2023

Abstract:Due to their passive nature and thus low energy consumption, intelligent reflecting surfaces (IRSs) have shown promise as a means of extending coverage as a proxy for connection reliability. The relative locations of the base station (BS), IRS, and user equipment (UE) determine the extent of the coverage that the IRS provides, which demonstrates the importance of the IRS placement problem. More specifically, locations, which determine whether BS-IRS and IRS-UE line of sight (LOS) links exist, and surface orientation, which determines whether the BS and UE are within the field of view (FoV) of the surface, play crucial roles in the quality of the provided coverage. Another challenge is the high computational complexity, since the IRS placement problem is a combinatorial optimization problem and is NP-hard. Identifying the orientation of the surface and the LOS channel as two crucial factors, we propose an efficient IRS placement algorithm that takes these two characteristics into account in order to maximize the network coverage. We prove the submodularity of the objective function, which establishes near-optimal performance bounds for the algorithm. Simulation results demonstrate the performance of the proposed algorithm in a real environment.
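
The link between submodularity and near-optimality can be illustrated with the generic greedy rule for coverage maximization, which is guaranteed to reach at least a (1 - 1/e) fraction of the optimum for monotone submodular objectives under a cardinality constraint. The sketch below is not the algorithm from the paper. The site names, the toy coverage sets, and the function names are hypothetical, and each set is assumed to already contain only the points that pass the LOS and FoV checks for that candidate site.

```python
from typing import Dict, List, Set


def greedy_placement(coverage: Dict[str, Set[int]], budget: int) -> List[str]:
    """Choose up to `budget` sites, always taking the largest marginal coverage gain."""
    chosen: List[str] = []
    covered: Set[int] = set()
    for _ in range(budget):
        best_site, best_gain = None, 0
        for site in sorted(coverage):  # sorted for deterministic tie-breaking
            if site in chosen:
                continue
            gain = len(coverage[site] - covered)  # newly covered points only
            if gain > best_gain:
                best_site, best_gain = site, gain
        if best_site is None:  # no remaining site adds coverage
            break
        chosen.append(best_site)
        covered |= coverage[best_site]
    return chosen


def covered_points(coverage: Dict[str, Set[int]], sites: List[str]) -> Set[int]:
    """Union of the points covered by the selected sites."""
    result: Set[int] = set()
    for site in sites:
        result |= coverage[site]
    return result


# Hypothetical toy instance: candidate site -> indices of covered grid points.
COVERAGE = {
    "site_a": {1, 2, 3, 4},
    "site_b": {3, 4, 5},
    "site_c": {5, 6},
    "site_d": {1, 6},
}

if __name__ == "__main__":
    sites = greedy_placement(COVERAGE, budget=2)
    print(sites, len(covered_points(COVERAGE, sites)))
```

Each greedy step costs one pass over the remaining candidates, so the full run is polynomial in the number of sites, in contrast to an exhaustive search over all subsets.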

Characterization of the weak Pareto boundary of resource allocation problems in wireless networks -- Implications to cell-less systems

Apr 27, 2023

Abstract:We establish necessary and sufficient conditions for a network configuration to provide utilities that are both fair and efficient in a well-defined sense. To cover as many applications as possible with a unified framework, we consider utilities defined in an axiomatic way, and the constraints imposed on the feasible network configurations are expressed with a single inequality involving a monotone norm. In this setting, we prove that a necessary and sufficient condition to obtain network configurations that are efficient in the weak Pareto sense is to select configurations attaining equality in the monotone norm constraint. Furthermore, for a given configuration satisfying this equality, we characterize a criterion for which the configuration can be considered fair for the active links. We illustrate potential implications of the theoretical findings by presenting, for the first time, a simple parametrization based on power vectors of achievable rate regions in modern cell-less systems subject to practical impairments.

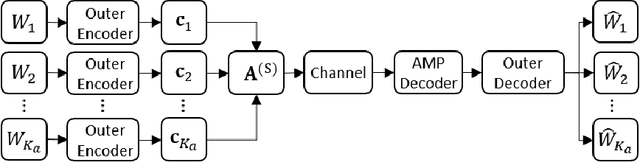

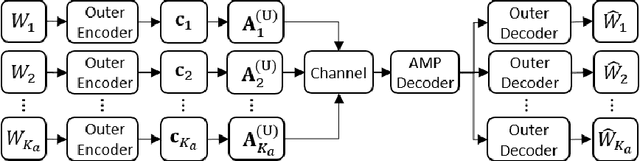

BiSPARCs for Unsourced Random Access in Massive MIMO

Apr 27, 2023

Abstract:This paper considers the massive MIMO unsourced random access problem in a quasi-static Rayleigh fading setting. The proposed coding scheme is based on a concatenation of a "conventional" channel code (e.g., LDPC) serving as an outer code, and a sparse regression code (SPARC) serving as an inner code. The scheme combines channel estimation, single-user decoding, and successive interference cancellation in a novel way. The receiver performs joint channel estimation and SPARC decoding via an instance of a bilinear generalized approximate message passing (BiGAMP) based algorithm, which leverages the intrinsic bilinear structure that arises in the considered communication regime. The detection step is followed by a per-user soft-input-soft-output (SISO) decoding of the outer channel code in combination with a successive interference cancellation (SIC) step. We show via numerical simulation that the resulting scheme achieves state-of-the-art performance in the massive connectivity setting, while attaining comparatively low implementation complexity.

The Story of QoS Prediction in Vehicular Communication: From Radio Environment Statistics to Network-Access Throughput Prediction

Feb 23, 2023

Abstract:As cellular networks evolve towards the 6th Generation (6G), Machine Learning (ML) is seen as a key enabling technology to improve the capabilities of the network. ML provides a methodology for predictive systems, which, in turn, can make networks become proactive. This proactive behavior of the network can be leveraged to sustain, for example, a specific Quality of Service (QoS) requirement. With predictive Quality of Service (pQoS), a wide variety of new use cases, both safety- and entertainment-related, are emerging, especially in the automotive sector. Therefore, in this work, we consider maximum throughput prediction enhancing, for example, streaming or HD mapping applications. We discuss the entire ML workflow highlighting less regarded aspects such as the detailed sampling procedures, the in-depth analysis of the dataset characteristics, the effects of splits in the provided results, and the data availability. Reliable ML models need to face a lot of challenges during their lifecycle. We highlight how confidence can be built on ML technologies by better understanding the underlying characteristics of the collected data. We discuss feature engineering and the effects of different splits for the training processes, showcasing that random splits might overestimate performance by more than twofold. Moreover, we investigate diverse sets of input features, where network information proved to be most effective, cutting the error by half. Part of our contribution is the validation of multiple ML models within diverse scenarios. We also use Explainable AI (XAI) to show that ML can learn underlying principles of wireless networks without being explicitly programmed. Our data is collected from a deployed network that was under full control of the measurement team and covered different vehicular scenarios and radio environments.

MIMO Systems with Reconfigurable Antennas: Joint Channel Estimation and Mode Selection

Nov 24, 2022

Abstract:Reconfigurable antennas (RAs) are a promising technology to enhance the capacity and coverage of wireless communication systems. However, RA systems face two major challenges: (i) the high computational complexity of mode selection, and (ii) the high overhead of channel estimation for all modes. In this paper, we develop a low-complexity iterative mode selection algorithm for data transmission in an RA-MIMO system. Furthermore, we study channel estimation of an RA multi-user MIMO system. Given the limited coherence time, it is challenging to estimate the channels of all modes. We therefore propose a mode selection scheme that selects a subset of modes, trains channels for the selected subset, and predicts channels for the remaining modes. In addition, we propose a prediction scheme based on pattern correlation between modes. Representative simulation results demonstrate the system's channel estimation error and achievable sum-rate for various selected modes and different signal-to-noise ratios (SNRs).

Machine Learning-based Methods for Reconfigurable Antenna Mode Selection in MIMO Systems

Nov 24, 2022

Abstract:MIMO technology has enabled spatial multiple access and has provided a higher system spectral efficiency (SE). However, this technology has some drawbacks, such as the high number of RF chains, which increases the complexity of the system. One solution to this problem is to employ reconfigurable antennas (RAs) that can support different radiation patterns during transmission to provide similar performance with fewer RF chains. In this regard, the system aims to maximize the SE with respect to optimum beamforming design and RA mode selection. Due to the non-convexity of this problem, we propose machine learning-based methods for RA mode selection in both dynamic and static scenarios. In the static scenario, we present how to solve the RA mode selection problem, an integer optimization problem in nature, via deep convolutional neural networks (DCNN). A multi-armed bandit (MAB) consisting of offline and online training is employed for the dynamic RA state selection. For the proposed MAB, the computational complexity of the optimization problem is reduced. Finally, the proposed methods in both dynamic and static scenarios are compared with exhaustive search and random selection methods.

Estimation of Doubly-Dispersive Channels in Linearly Precoded Multicarrier Systems Using Smoothness Regularization

Oct 11, 2022

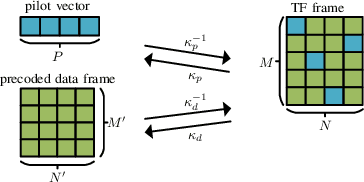

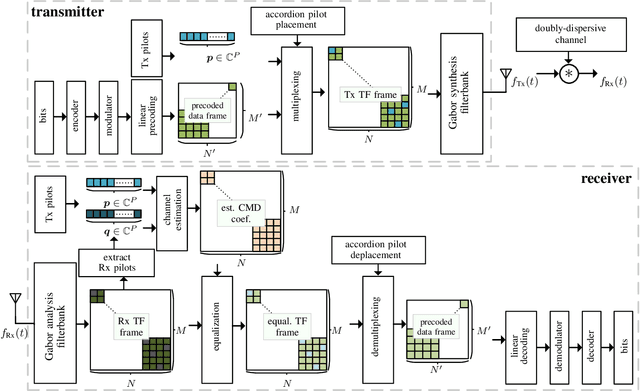

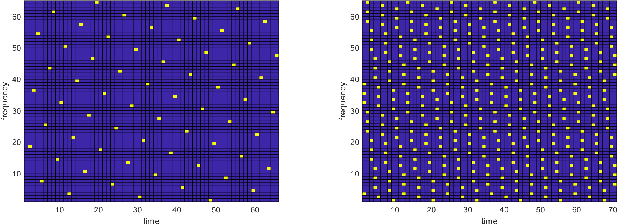

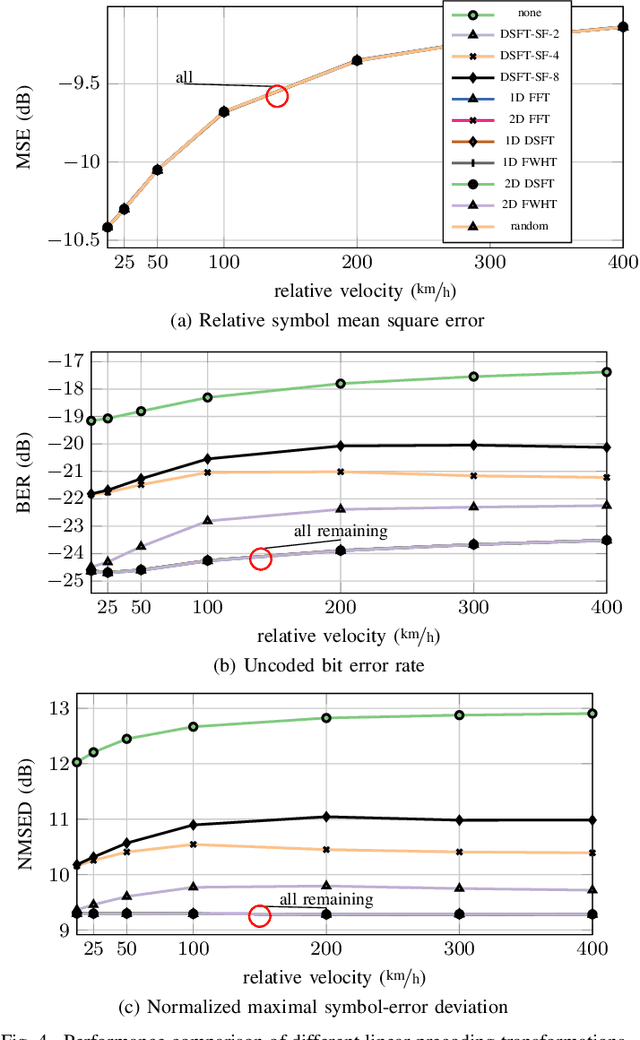

Abstract:In this paper, we propose a novel channel estimation scheme for pulse-shaped multicarrier systems using smoothness regularization for ultra-reliable low-latency communication (URLLC). It can be applied to any multicarrier system with or without linear precoding to estimate challenging doubly-dispersive channels. A recently proposed modulation scheme using orthogonal precoding is orthogonal time-frequency and space modulation (OTFS). In OTFS, pilot and data symbols are placed in the delay-Doppler (DD) domain and are jointly precoded to the time-frequency (TF) domain. On the one hand, such orthogonal precoding increases the achievable channel estimation accuracy and enables high TF diversity at the receiver. On the other hand, it introduces leakage effects, which require extensive leakage suppression when the piloting is jointly precoded with the data. To avoid this, we propose to precode the data symbols only, place pilot symbols without precoding into the TF domain, and estimate the channel coefficients by interpolating smooth functions from the pilot samples. Furthermore, we present a piloting scheme enabling a smooth control of the number and position of the pilot symbols. Our numerical results suggest that the proposed scheme provides accurate channel estimation with reduced signaling overhead compared to standard estimators using Wiener filtering in the discrete DD domain.

Joint optimal beamforming and power control in cell-free massive MIMO

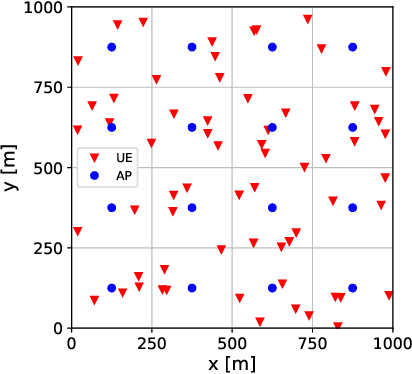

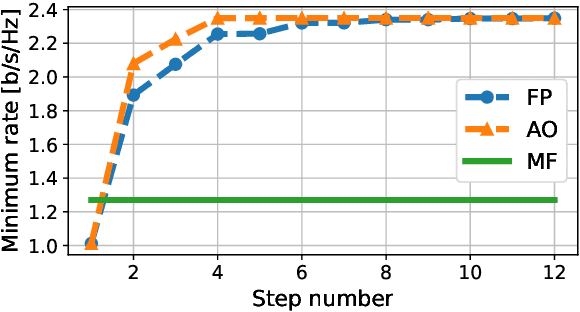

Aug 11, 2022

Abstract:We derive a fast and optimal algorithm for solving practical weighted max-min SINR problems in cell-free massive MIMO networks. For the first time, the optimization problem jointly covers long-term power control and distributed beamforming design under imperfect cooperation. In particular, we consider user-centric clusters of access points cooperating on the basis of possibly limited channel state information sharing. Our optimal algorithm merges powerful power control tools based on interference calculus with the recently developed team theoretic framework for distributed beamforming design. In addition, we propose a variation that shows faster convergence in practice.
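
To give a feel for the interference-calculus side of such algorithms, the sketch below runs the classical normalized fixed-point iteration for a weighted max-min SINR problem under a sum-power constraint. It covers power control only, with beamformers held fixed, so it is not the joint algorithm of the paper. The effective gain matrix, noise power, weights, budget, and function names are all hypothetical.

```python
from typing import List


def weighted_sinrs(p: List[float], gains: List[List[float]],
                   noise: float, weights: List[float]) -> List[float]:
    """Return SINR_k / weight_k for every user k under the power vector p."""
    ratios = []
    for k in range(len(p)):
        interference = sum(gains[k][j] * p[j] for j in range(len(p)) if j != k)
        sinr = gains[k][k] * p[k] / (interference + noise)
        ratios.append(sinr / weights[k])
    return ratios


def max_min_power_control(gains: List[List[float]], noise: float,
                          weights: List[float], budget: float,
                          iterations: int = 200) -> List[float]:
    """Normalized fixed-point iteration p <- budget * T(p) / sum(T(p))."""
    n = len(gains)
    p = [budget / n] * n  # start from an equal split of the budget
    for _ in range(iterations):
        # T_k(p): power user k would need to reach SINR = weight_k given the others.
        t = []
        for k in range(n):
            interference = sum(gains[k][j] * p[j] for j in range(n) if j != k)
            t.append(weights[k] * (interference + noise) / gains[k][k])
        scale = budget / sum(t)  # project back onto the sum-power constraint
        p = [scale * t_k for t_k in t]
    return p


# Hypothetical 3-user instance: gains[k][j] is the effective gain from user j to receiver k.
GAINS = [[1.0, 0.1, 0.2],
         [0.2, 0.8, 0.1],
         [0.1, 0.3, 1.2]]
NOISE = 0.1
WEIGHTS = [1.0, 1.0, 2.0]
BUDGET = 1.0

if __name__ == "__main__":
    powers = max_min_power_control(GAINS, NOISE, WEIGHTS, BUDGET)
    print(powers, weighted_sinrs(powers, GAINS, NOISE, WEIGHTS))
```

At the fixed point every user attains the same weighted SINR and the power budget is fully used, which is the balanced solution that a weighted max-min formulation looks for.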

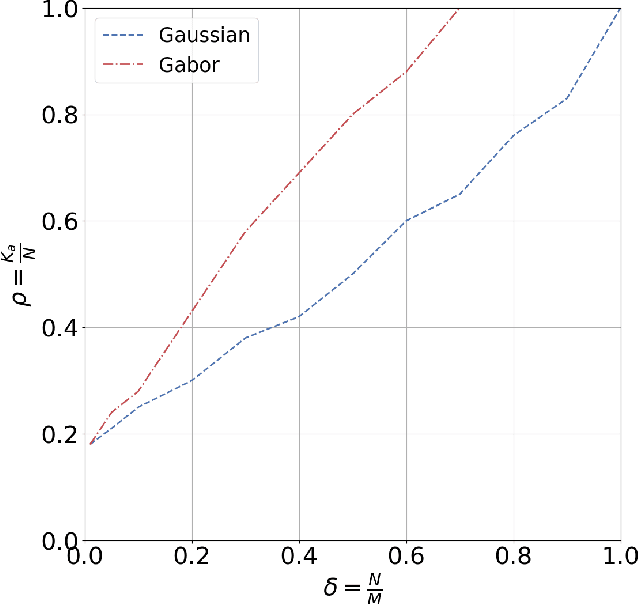

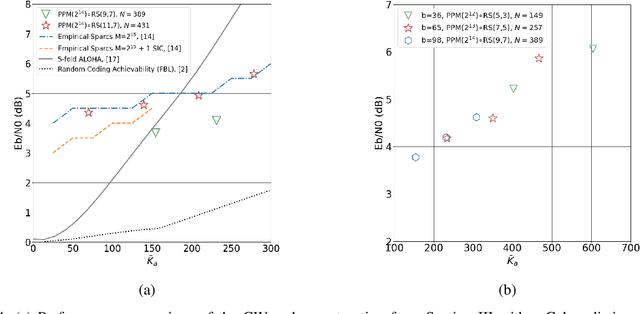

Constant Weight Codes with Gabor Dictionaries and Bayesian Decoding for Massive Random Access

Jul 26, 2022

Abstract:This paper considers a general framework for massive random access based on sparse superposition coding. We provide guidelines for the code design and propose the use of constant-weight codes in combination with a dictionary design based on Gabor frames. The decoder applies an extension of approximate message passing (AMP) by iteratively exchanging soft information between an AMP module that accounts for the dictionary structure, and a second inference module that utilizes the structure of the involved constant-weight code. We apply the encoding structure to (i) the unsourced random access setting, where all users employ a common dictionary, and (ii) the "sourced" random access setting with user-specific dictionaries. When applied to a fading scenario, the communication scheme essentially operates non-coherently, as channel state information is required neither at the transmitter nor at the receiver. We observe that in regimes of practical interest, the proposed scheme compares favorably with state-of-the-art schemes, in terms of the (per-user) energy-per-bit requirement, as well as the number of active users that can be simultaneously accommodated in the system. Importantly, this is achieved with a considerably smaller size of the transmitted codewords, potentially yielding lower latency and bandwidth occupancy, as well as lower implementation complexity.
