Shreyansh Daftry

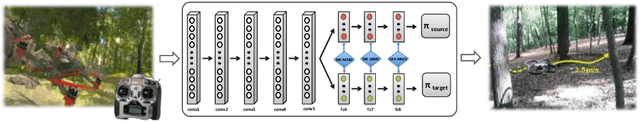

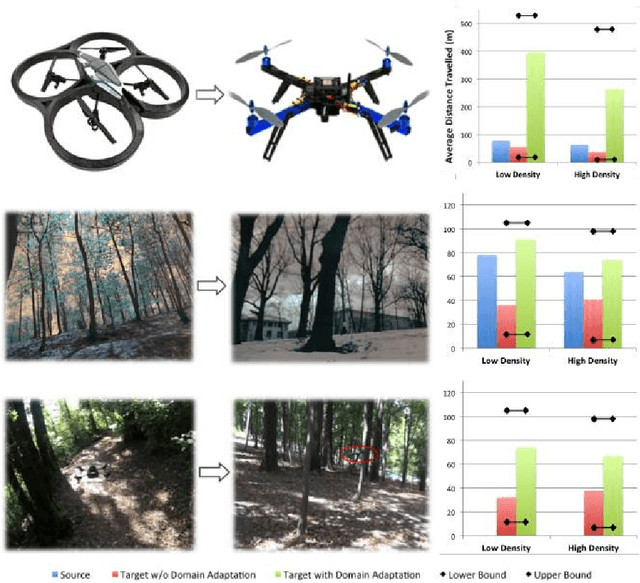

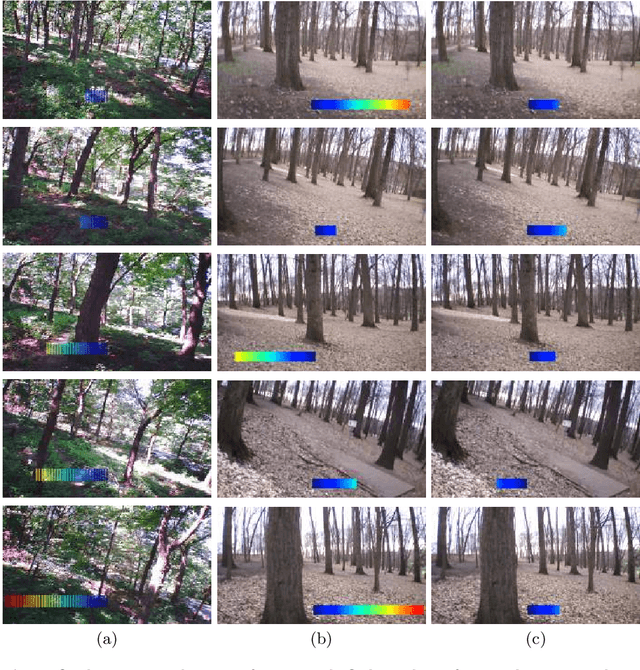

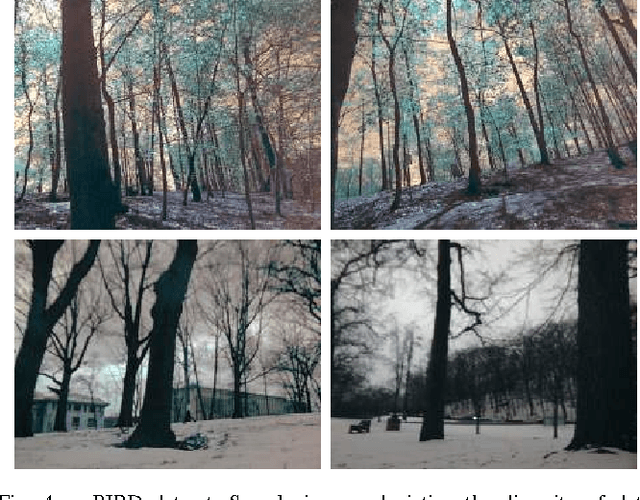

Learning Transferable Policies for Monocular Reactive MAV Control

Aug 01, 2016

Abstract: The ability to transfer knowledge gained in previous tasks into new contexts is one of the most important mechanisms of human learning. Despite this, adapting autonomous behavior to be reused in partially similar settings is still an open problem in current robotics research. In this paper, we take a small step in this direction and propose a generic framework for learning transferable motion policies. Our goal is to solve a learning problem in a target domain by utilizing the training data in a different but related source domain. We present this in the context of autonomous MAV flight using monocular reactive control, and demonstrate the efficacy of our proposed approach through extensive real-world flight experiments in outdoor cluttered environments.

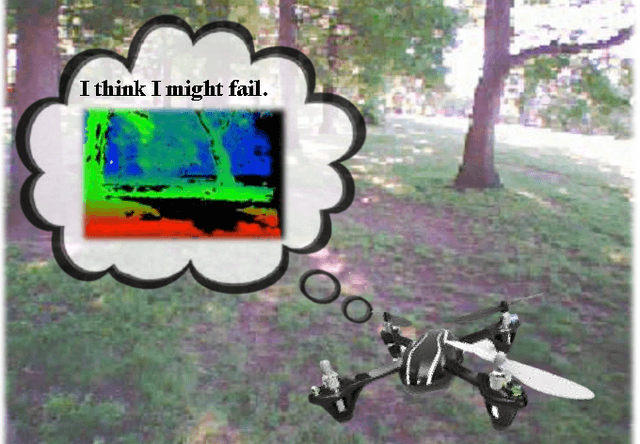

Introspective Perception: Learning to Predict Failures in Vision Systems

Jul 28, 2016

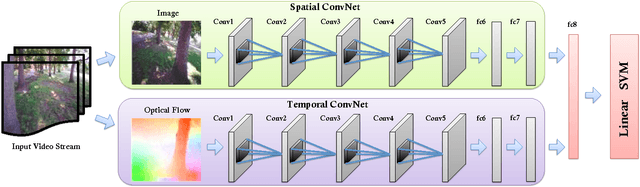

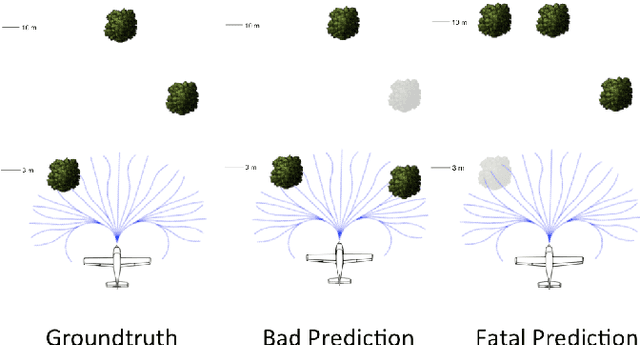

Abstract: As robots aspire to long-term autonomous operation in complex dynamic environments, the ability to reliably make mission-critical decisions in ambiguous situations becomes critical. This motivates the need to build systems that have the situational awareness to assess how qualified they are at that moment to make a decision. We call this self-evaluating capability introspection. In this paper, we take a small step in this direction and propose a generic framework for introspective behavior in perception systems. Our goal is to learn a model to reliably predict failures in a given system, with respect to a task, directly from input sensor data. We present this in the context of vision-based autonomous MAV flight in outdoor natural environments, and show that it effectively handles uncertain situations.

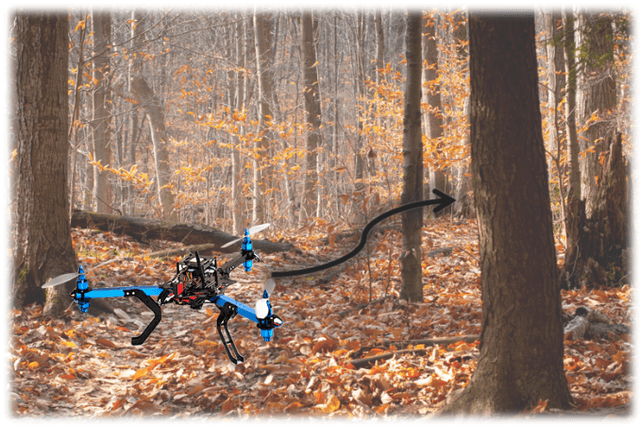

Robust Monocular Flight in Cluttered Outdoor Environments

Apr 16, 2016

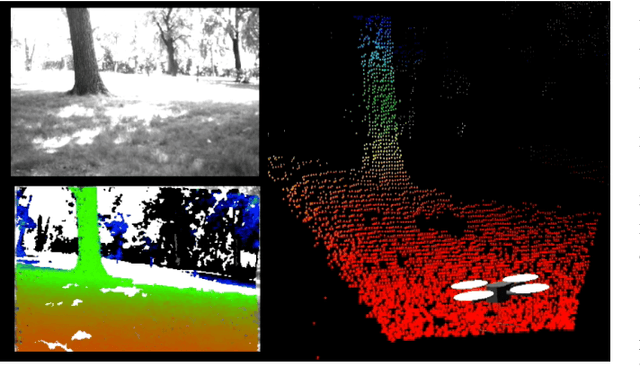

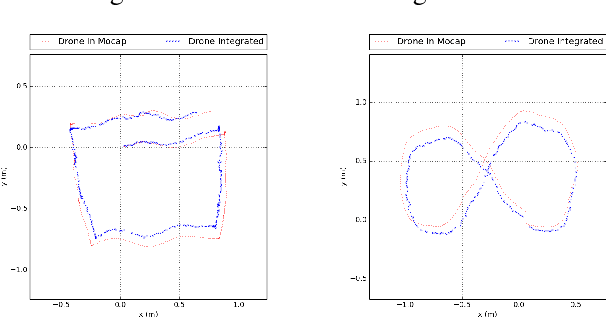

Abstract: Recently, there have been numerous advances in the development of biologically inspired lightweight Micro Aerial Vehicles (MAVs). While autonomous navigation is fairly straightforward for large UAVs, as expensive sensors and monitoring devices can be employed, robust obstacle avoidance remains a challenging task for MAVs, which operate at low altitude in cluttered unstructured environments. Due to payload and power constraints, it is necessary for such systems to have autonomous navigation and flight capabilities using mostly passive sensors such as cameras. In this paper, we describe a robust system that enables autonomous navigation of small agile quad-rotors at low altitude through natural forest environments. We present a direct depth estimation approach that is capable of producing accurate, semi-dense depth-maps in real time. Furthermore, a novel wind-resistant control scheme is presented that enables stable way-point tracking even in the presence of strong winds. We demonstrate the performance of our system through extensive experiments on real images and field tests in a cluttered outdoor environment.

Building with Drones: Accurate 3D Facade Reconstruction using MAVs

Feb 25, 2015

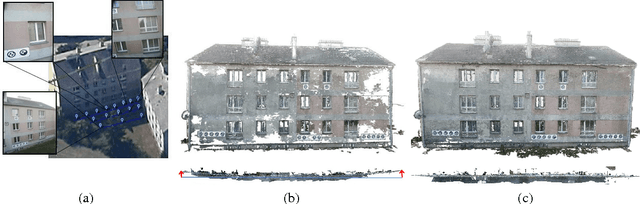

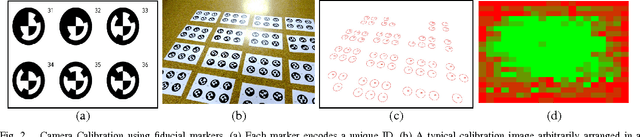

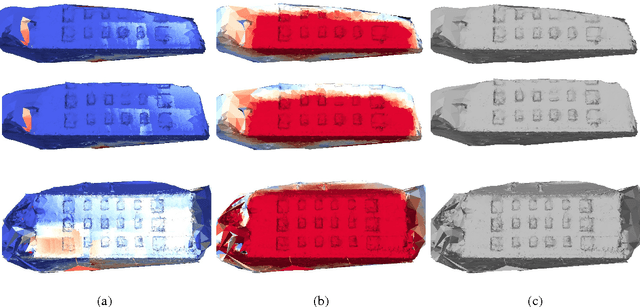

Abstract: Automatic reconstruction of 3D models from images using multi-view Structure-from-Motion (SfM) methods has been one of the most fruitful outcomes of computer vision. These advances, combined with the growing popularity of Micro Aerial Vehicles as an autonomous imaging platform, have made 3D vision tools ubiquitous for a large number of Architecture, Engineering and Construction applications among audiences mostly unskilled in computer vision. However, to obtain high-resolution and accurate reconstructions of a large-scale object using SfM, there are many critical constraints on the quality of image data, which often become sources of inaccuracy because current 3D reconstruction pipelines do not let users determine the fidelity of input data during image acquisition. In this paper, we present and advocate a closed-loop interactive approach that performs incremental reconstruction in real time and gives users online feedback about quality parameters such as Ground Sampling Distance (GSD), image redundancy, etc., on a surface mesh. We also propose a novel multi-scale camera network design to prevent scene drift caused by incremental map building, and release the first multi-scale image sequence dataset as a benchmark. Further, we evaluate our system on real outdoor scenes, and show that our interactive pipeline combined with a multi-scale camera network approach provides compelling accuracy in multi-view reconstruction tasks when compared against state-of-the-art methods.
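For readers unfamiliar with the Ground Sampling Distance mentioned in the abstract above, the following is a minimal sketch of the standard pinhole-camera relation between camera geometry and GSD. It is not taken from the paper: the function name `ground_sampling_distance`, its parameters, and the fronto-parallel assumption (the camera looks straight at a flat surface) are illustrative assumptions.

```python
def ground_sampling_distance(sensor_width_mm, focal_length_mm,
                             distance_m, image_width_px):
    """Approximate GSD in metres per pixel.

    Hypothetical helper (not from the paper). Assumes a pinhole camera
    viewing a flat surface head-on from `distance_m` metres away.
    """
    values = (sensor_width_mm, focal_length_mm, distance_m, image_width_px)
    if any(v <= 0 for v in values):
        raise ValueError("all camera parameters must be positive")
    # Footprint of the full sensor width on the surface, in metres.
    # The mm units of sensor width and focal length cancel out.
    footprint_m = sensor_width_mm * distance_m / focal_length_mm
    # Divide the footprint among the pixels along the image width.
    return footprint_m / image_width_px


if __name__ == "__main__":
    # Illustrative values only: 10 mm sensor, 10 mm lens, 5 m away, 1000 px wide.
    gsd = ground_sampling_distance(10.0, 10.0, 5.0, 1000)
    print(f"GSD: {gsd * 1000:.2f} mm/pixel")
```

Under these assumptions, GSD grows linearly with the distance to the surface, which is one reason feedback during acquisition can help a pilot keep the resolution of a facade within a target range.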

Vision and Learning for Deliberative Monocular Cluttered Flight

Nov 24, 2014

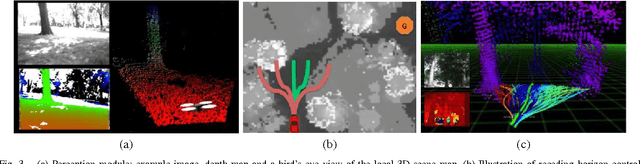

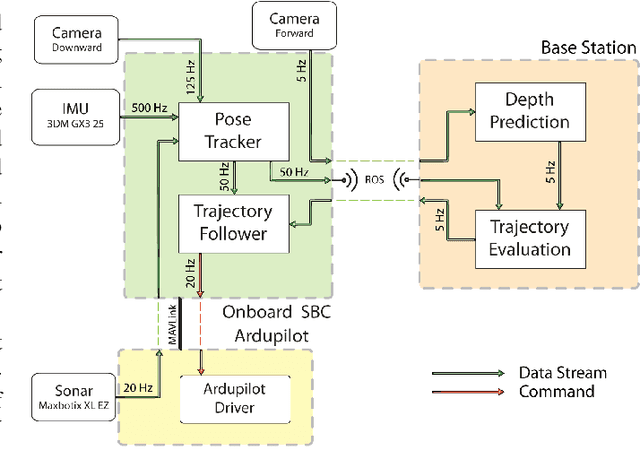

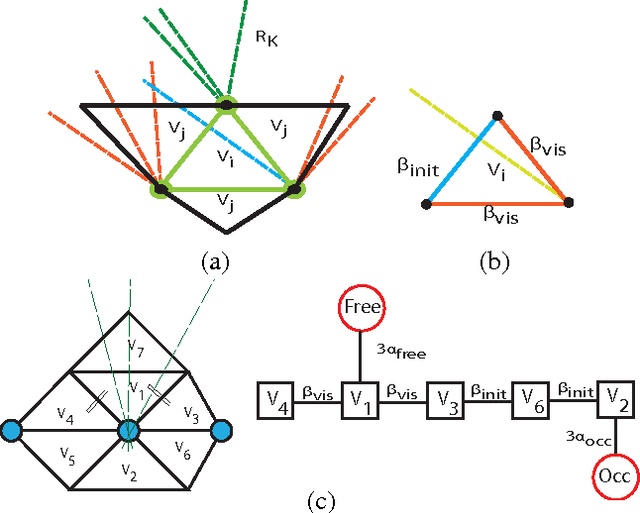

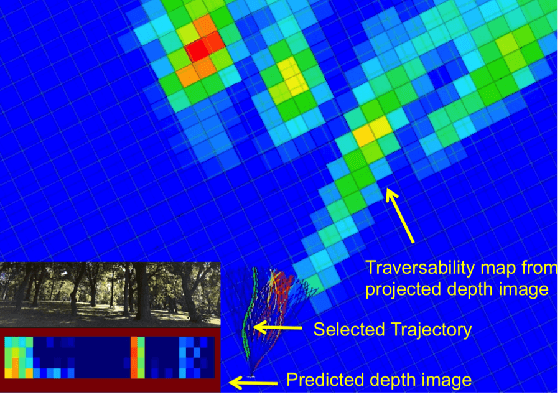

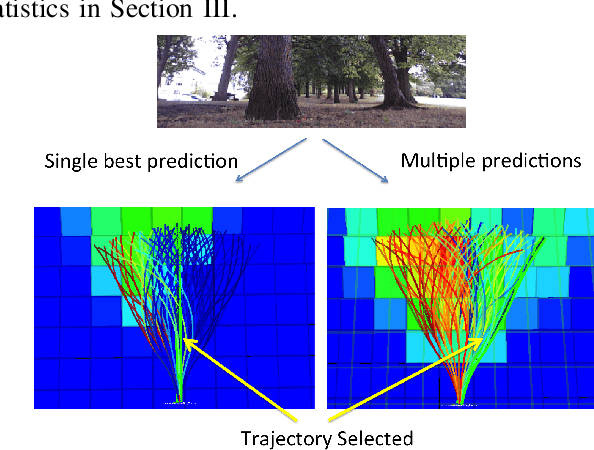

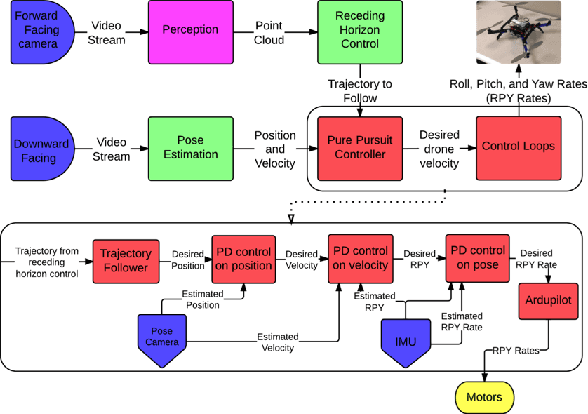

Abstract: Cameras provide a rich source of information while being passive, cheap and lightweight for small and medium Unmanned Aerial Vehicles (UAVs). In this work we present the first implementation of receding horizon control, which is widely used in ground vehicles, with monocular vision as the only sensing mode for autonomous UAV flight in dense clutter. We make it feasible on UAVs via a number of contributions: a novel coupling of perception and control via relevant and diverse multiple interpretations of the scene around the robot, leveraging recent advances in machine learning to showcase anytime budgeted cost-sensitive feature selection, and fast non-linear regression for monocular depth prediction. We empirically demonstrate the efficacy of our novel pipeline via real-world experiments of more than 2 km through dense trees with a quadrotor built from off-the-shelf parts. Moreover, our pipeline is designed to combine information from other modalities, such as stereo and lidar, if available.
