Shibing Mo

High-order Knowledge Based Network Controllability Robustness Prediction: A Hypergraph Neural Network Approach

Feb 28, 2026

Abstract: In order to evaluate the invulnerability of networks against various types of attacks and provide guidance for potential performance enhancement as well as controllability maintenance, network controllability robustness (NCR) has attracted increasing attention in recent years. Traditionally, controllability robustness is determined by attack simulations, which are computationally time-consuming and only applicable to small-scale networks. Although some machine learning-based methods for predicting network controllability robustness have been proposed, they mainly focus on pairwise interactions in complex networks, and the underlying relationships between high-order structural information and controllability robustness have not been explored. In this paper, a dual hypergraph attention neural network model based on high-order knowledge (NCR-HoK) is proposed to accomplish robustness learning and controllability robustness curve prediction. Through a node feature encoder, hypergraph construction with high-order relations, and a dedicated dual hypergraph attention module, the proposed method can effectively learn three types of network information simultaneously: explicit structural information in the original graph, high-order connection information in local neighborhoods, and hidden features in the embedding space. Notably, we explore for the first time the impact of high-order knowledge on network controllability robustness. Compared with state-of-the-art methods for network robustness learning, the proposed method achieves superior performance on both synthetic and real-world networks with low computational overhead.
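The attack simulations that the abstract calls time-consuming can be pictured with a minimal sketch. It assumes structural controllability, where the number of driver nodes of a directed network is N_D = max(N - |M*|, 1) for a maximum matching M*, and it assumes a random node-removal attack with the curve value defined as N_D divided by the number of surviving nodes. All function names are hypothetical, and none of this is taken from the paper's implementation.

```python
import random


def max_matching_size(nodes, edges):
    """Size of a maximum matching in the bipartite out-copy/in-copy graph.

    Uses simple augmenting paths (Kuhn's algorithm); recursion depth grows
    with path length, so this is only meant for small networks.
    """
    succ = {u: [] for u in nodes}
    for u, v in edges:
        if u in succ and v in succ:  # ignore edges touching removed nodes
            succ[u].append(v)
    matched_to = {}  # in-copy v -> out-copy u currently matched to it

    def try_augment(u, seen):
        for v in succ[u]:
            if v in seen:
                continue
            seen.add(v)
            if v not in matched_to or try_augment(matched_to[v], seen):
                matched_to[v] = u
                return True
        return False

    return sum(try_augment(u, set()) for u in nodes)


def driver_node_count(nodes, edges):
    """Minimum number of driver nodes: unmatched nodes, but at least one."""
    return max(len(nodes) - max_matching_size(nodes, edges), 1)


def controllability_curve(n, edges, seed=0):
    """Driver-node density after removing 0, 1, ..., n-1 random nodes."""
    rng = random.Random(seed)
    order = list(range(n))
    rng.shuffle(order)
    remaining = set(range(n))
    curve = []
    for victim in [None] + order[:-1]:
        if victim is not None:
            remaining.discard(victim)
        curve.append(driver_node_count(remaining, edges) / len(remaining))
    return curve


if __name__ == "__main__":
    path = [(0, 1), (1, 2), (2, 3)]  # toy directed path, illustration only
    print(controllability_curve(4, path))
```

Each point on the curve needs a fresh maximum matching, so the cost of one simulated attack grows with both network size and the number of removals. That cost is the motivation the abstract gives for learning to predict the curve instead.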

Textual Self-attention Network: Test-Time Preference Optimization through Textual Gradient-based Attention

Nov 10, 2025

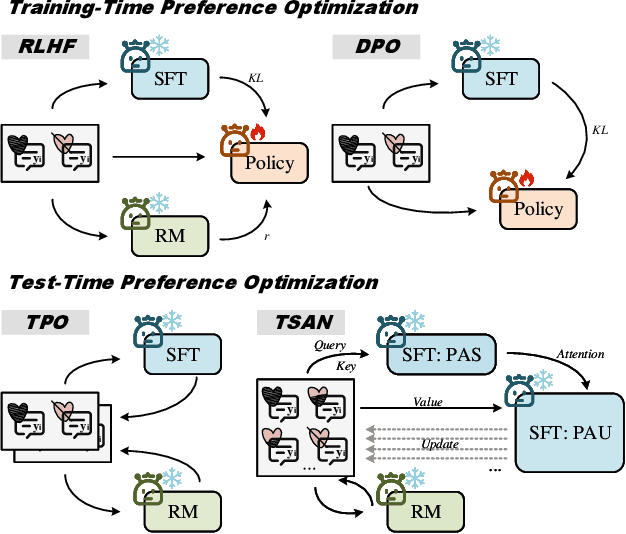

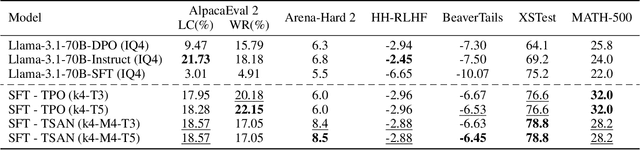

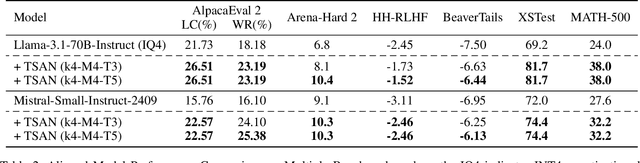

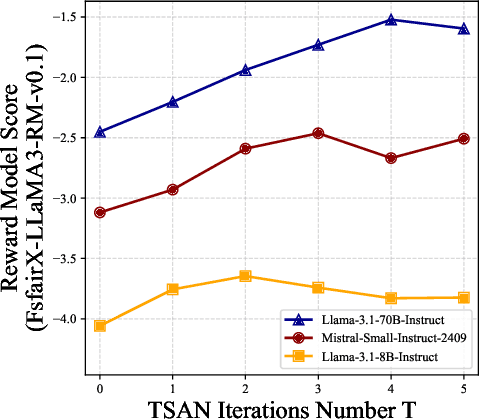

Abstract: Large Language Models (LLMs) have demonstrated remarkable generalization capabilities, but aligning their outputs with human preferences typically requires expensive supervised fine-tuning. Recent test-time methods leverage textual feedback to overcome this, but they often critique and revise a single candidate response, lacking a principled mechanism to systematically analyze, weigh, and synthesize the strengths of multiple promising candidates. Such a mechanism is crucial because different responses may excel in distinct aspects (e.g., clarity, factual accuracy, or tone), and combining their best elements may produce a far superior outcome. This paper proposes the Textual Self-Attention Network (TSAN), a new paradigm for test-time preference optimization that requires no parameter updates. TSAN emulates self-attention entirely in natural language to overcome this gap: it analyzes multiple candidates by formatting them into textual keys and values, weighs their relevance using an LLM-based attention module, and synthesizes their strengths into a new, preference-aligned response under the guidance of the learned textual attention. This entire process operates in a textual gradient space, enabling iterative and interpretable optimization. Empirical evaluations demonstrate that with just three test-time iterations on a base SFT model, TSAN outperforms supervised models like Llama-3.1-70B-Instruct and surpasses the current state-of-the-art test-time alignment method by effectively leveraging multiple candidate solutions.

AutoSGNN: Automatic Propagation Mechanism Discovery for Spectral Graph Neural Networks

Dec 17, 2024

Abstract: In real-world applications, spectral Graph Neural Networks (GNNs) are powerful tools for processing diverse types of graphs. However, a single GNN often struggles to handle different graph types, such as homogeneous and heterogeneous graphs, simultaneously. This challenge has led to the manual design of GNNs tailored to specific graph types, but these approaches are limited by the high cost of labor and the constraints of expert knowledge, which cannot keep up with the rapid growth of graph data. To overcome these challenges, we propose AutoSGNN, an automated framework for discovering propagation mechanisms in spectral GNNs. AutoSGNN unifies the search space for spectral GNNs by integrating large language models with evolutionary strategies to automatically generate architectures that adapt to various graph types. Extensive experiments on nine widely used datasets, encompassing both homophilic and heterophilic graphs, demonstrate that AutoSGNN outperforms state-of-the-art spectral GNNs and graph neural architecture search methods in both performance and efficiency.
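For orientation, a "propagation mechanism" in a spectral GNN is commonly a polynomial of a normalized graph operator applied to node features. The sketch below shows that generic form on a single scalar feature per node, using the symmetric normalized adjacency. It is an illustration of the kind of object such a search might produce, not AutoSGNN's search space or code, and the function names are hypothetical.

```python
def normalized_adjacency(edges, n):
    """Symmetric normalized adjacency D^{-1/2} A D^{-1/2} of an undirected graph."""
    a = [[0.0] * n for _ in range(n)]
    for u, v in edges:
        a[u][v] = 1.0
        a[v][u] = 1.0
    deg = [sum(row) for row in a]
    return [
        [a[i][j] / (deg[i] * deg[j]) ** 0.5 if a[i][j] else 0.0 for j in range(n)]
        for i in range(n)
    ]


def matvec(m, x):
    """Dense matrix-vector product on plain Python lists."""
    return [sum(mij * xj for mij, xj in zip(row, x)) for row in m]


def polynomial_propagation(a_hat, x, coeffs):
    """Return sum_k coeffs[k] * (a_hat ** k) @ x for one feature per node."""
    out = [0.0] * len(x)
    power = list(x)  # holds a_hat^k x, starting at k = 0
    for c in coeffs:
        out = [o + c * p for o, p in zip(out, power)]
        power = matvec(a_hat, power)
    return out


if __name__ == "__main__":
    a_hat = normalized_adjacency([(0, 1), (1, 2)], 3)  # toy 3-node path
    signal = [1.0, 0.0, 0.0]
    print(polynomial_propagation(a_hat, signal, [0.5, 0.3, 0.2]))   # smoothing-style weights
    print(polynomial_propagation(a_hat, signal, [1.0, -0.5, 0.25])) # sign-alternating weights
```

In hand-designed models the coefficients are fixed or learned per dataset. All-positive weights tend to smooth features across neighbors, while sign-alternating weights emphasize differences between neighbors, which is one reason a single fixed choice may not suit both homophilic and heterophilic graphs.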
