Sheldon Fung

Edge-Efficient Two-Stream Multimodal Architecture for Non-Intrusive Bathroom Fall Detection

Mar 17, 2026

Abstract: Falls in wet bathroom environments are a major safety risk for seniors living alone. Recent work has shown that mmWave-only, vibration-only, and existing multimodal schemes, such as vibration-triggered radar activation, early feature concatenation, and decision-level score fusion, can support privacy-preserving, non-intrusive fall detection. However, these designs still treat motion and impact as loosely coupled streams, depending on coarse temporal alignment and amplitude thresholds, and do not explicitly encode the causal link between radar-observed collapse and floor impact or address timing drift, object drop confounders, and latency and energy constraints on low-power edge devices. To this end, we propose a two-stream architecture that encodes radar signals with a Motion-Mamba branch for long-range motion patterns and processes floor vibration with an Impact-Griffin branch that emphasizes impact transients and cross-axis coupling. Cross-conditioned fusion uses low-rank bilinear interaction and a Switch-MoE head to align motion and impact tokens and suppress object-drop confounders. The model keeps inference cost suitable for real-time execution on a Raspberry Pi 4B gateway. We construct a bathroom fall detection benchmark dataset with frame-level annotations, comprising more than 3 h of synchronized mmWave radar and triaxial vibration recordings across eight scenarios under running water, together with subject-independent training, validation, and test splits. On the test split, our model attains 96.1% accuracy, 94.8% precision, 88.0% recall, a 91.1% macro F1 score, and an AUC of 0.968. Compared with the strongest baseline, it improves accuracy by 2.0 percentage points and fall recall by 1.3 percentage points, while reducing latency from 35.9 ms to 15.8 ms and lowering energy per 2.56 s window from 14200 mJ to 10750 mJ on the Raspberry Pi 4B gateway.
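
As a rough illustration of the low-rank bilinear interaction named in the abstract, the sketch below fuses one motion feature vector with one impact feature vector. The factor matrices `U`, `V`, and `P`, the dimensions, and the random values are all hypothetical; the abstract does not give the paper's layer shapes or say how this interaction connects to the Switch-MoE head.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def low_rank_bilinear(motion, impact, U, V, P):
    """Fuse two vectors as P @ ((U @ motion) * (V @ impact)).

    U is rank x len(motion), V is rank x len(impact), P is out_dim x rank.
    The element-wise product in the shared rank-sized space stands in for
    a full bilinear tensor of size len(motion) x len(impact) x out_dim.
    """
    projected_motion = matvec(U, motion)
    projected_impact = matvec(V, impact)
    joint = [a * b for a, b in zip(projected_motion, projected_impact)]
    return matvec(P, joint)


def random_matrix(rows, cols, rng):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


# Hypothetical sizes, chosen only to keep the example small.
rng = random.Random(0)
motion_dim, impact_dim, rank, out_dim = 8, 6, 3, 4

U = random_matrix(rank, motion_dim, rng)
V = random_matrix(rank, impact_dim, rng)
P = random_matrix(out_dim, rank, rng)

motion_token = [rng.uniform(-1.0, 1.0) for _ in range(motion_dim)]
impact_token = [rng.uniform(-1.0, 1.0) for _ in range(impact_dim)]

fused = low_rank_bilinear(motion_token, impact_token, U, V, P)

# Parameter counts: full bilinear tensor versus the three low-rank factors.
full_params = motion_dim * impact_dim * out_dim
low_rank_params = rank * (motion_dim + impact_dim + out_dim)
print(len(fused), full_params, low_rank_params)
```

With these toy sizes the factorized form needs 54 weights where a full bilinear tensor would need 192, which is the kind of saving that matters on a low-power gateway.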

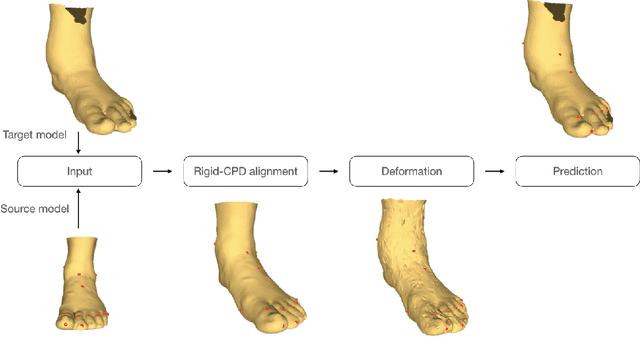

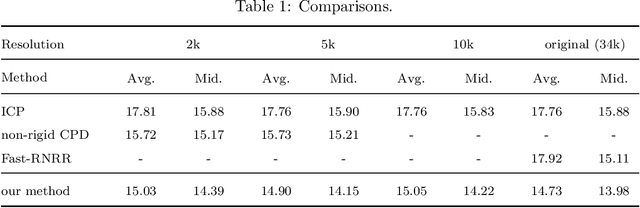

Anatomical Landmarks Localization for 3D Foot Point Clouds

Oct 03, 2021

Abstract: 3D anatomical landmarks play an important role in health research, which makes their automated prediction (localization) a vital task. In this paper, we introduce a deformation method for 3D anatomical landmark prediction. It takes a source model whose anatomical landmarks have been annotated by clinicians and deforms this model non-rigidly to match the target model. Two constraints are introduced in the optimization, one responsible for alignment and one for smoothness. Experiments on our dataset demonstrate the robustness of our method and show that it yields better performance than state-of-the-art techniques in most cases.
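
To make the idea of deforming under an alignment term and a smoothness term concrete, here is a minimal sketch, not the paper's formulation. It assumes fixed one-to-one correspondences between source and target points, a squared-distance alignment term, a squared difference of neighbouring displacements as the smoothness term, and plain gradient descent. The function name `deform`, the weight `smooth_weight`, and the toy points are hypothetical.

```python
def deform(source, target, edges, smooth_weight=1.0, step=0.1, iterations=500):
    """Minimise sum_i |s_i + d_i - t_i|^2 + w * sum_(i,j) |d_i - d_j|^2.

    source, target: lists of [x, y, z] points in assumed correspondence.
    edges: pairs of neighbouring point indices on the source model.
    Returns the deformed source points s_i + d_i.
    """
    n = len(source)
    disp = [[0.0, 0.0, 0.0] for _ in range(n)]
    for _ in range(iterations):
        # Gradient of the alignment term.
        grad = [
            [2.0 * (source[i][k] + disp[i][k] - target[i][k]) for k in range(3)]
            for i in range(n)
        ]
        # Gradient of the smoothness term over neighbouring points.
        for i, j in edges:
            for k in range(3):
                diff = 2.0 * smooth_weight * (disp[i][k] - disp[j][k])
                grad[i][k] += diff
                grad[j][k] -= diff
        for i in range(n):
            for k in range(3):
                disp[i][k] -= step * grad[i][k]
    return [[source[i][k] + disp[i][k] for k in range(3)] for i in range(n)]


# Toy data: four points on a line; the target is the source shifted along x.
source_points = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [2.0, 0.0, 0.0], [3.0, 0.0, 0.0]]
target_points = [[x + 0.5, y, z] for x, y, z in source_points]
chain_edges = [(0, 1), (1, 2), (2, 3)]

deformed = deform(source_points, target_points, chain_edges)

# A landmark annotated on source point 2 is carried along by the deformation.
landmark_index = 2
predicted_landmark = deformed[landmark_index]
print(predicted_landmark)
```

Once the source model has been deformed, its annotated landmarks can be read off at their new positions on the target, which is how a deformation-based approach turns registration into landmark prediction.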

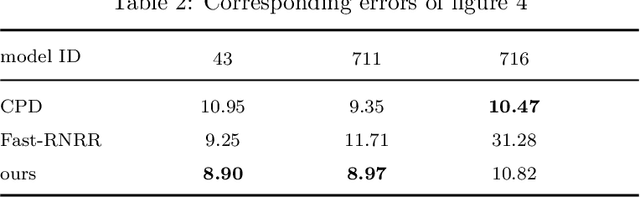

DeepfakeUCL: Deepfake Detection via Unsupervised Contrastive Learning

Apr 23, 2021

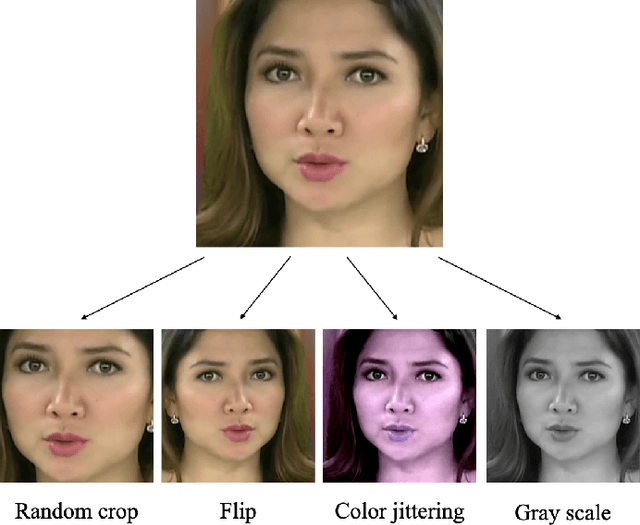

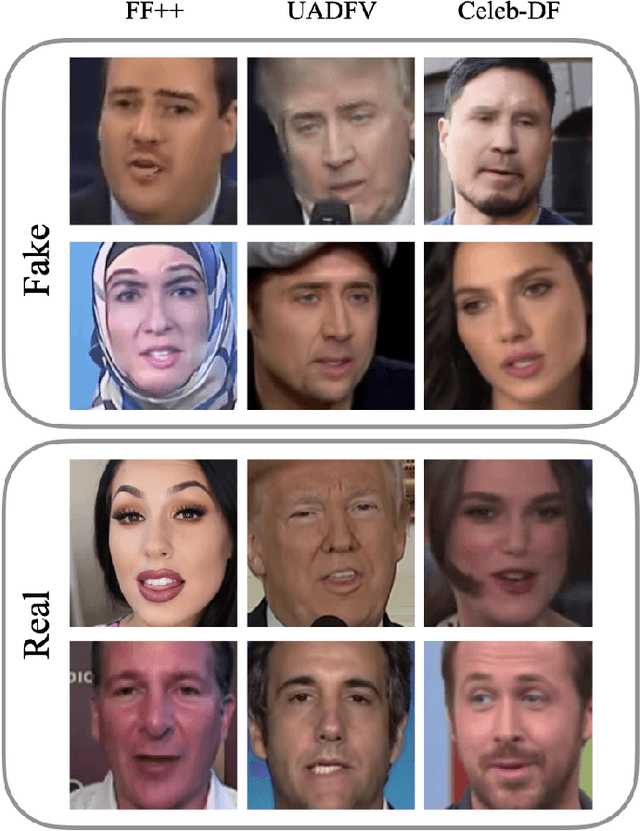

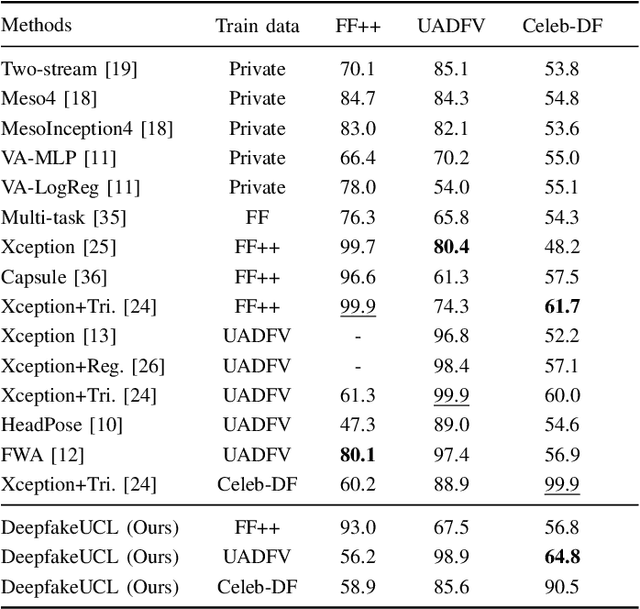

Abstract: Face deepfake detection has seen impressive results recently. Nearly all existing deep learning techniques for face deepfake detection are fully supervised and require labels during training. In this paper, we design a novel deepfake detection method via unsupervised contrastive learning. We first generate two differently transformed versions of an image and feed them into two sequential sub-networks, i.e., an encoder and a projection head. The unsupervised training is achieved by maximizing the agreement between the projection head's outputs for the two versions. To evaluate the detection performance of our unsupervised method, we further use the unsupervised features to train an efficient linear classification network. Extensive experiments show that our unsupervised learning method achieves detection performance comparable to state-of-the-art supervised techniques, in both the intra- and inter-dataset settings. We also conduct ablation studies for our method.
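
One common way to maximize agreement between two views in contrastive learning is the NT-Xent loss used by SimCLR. The sketch below implements that loss on toy projection-head outputs. Whether the paper uses this exact objective is not stated in the abstract, and the function names, temperature, and vectors here are assumptions for illustration only.

```python
import math


def normalize(vector):
    """Scale a vector to unit length so dot products are cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def nt_xent(view_a, view_b, temperature=0.5):
    """NT-Xent loss over a batch; view_a[i] and view_b[i] come from the same image."""
    z = [normalize(v) for v in view_a + view_b]
    n = len(view_a)
    total = 0.0
    for i in range(2 * n):
        positive = (i + n) % (2 * n)  # index of the other view of the same image
        sims = {
            j: sum(a * b for a, b in zip(z[i], z[j])) / temperature
            for j in range(2 * n)
            if j != i
        }
        log_denominator = math.log(sum(math.exp(s) for s in sims.values()))
        total += log_denominator - sims[positive]
    return total / (2 * n)


# Toy projection-head outputs for two images under two transformations.
first_views = [[1.0, 0.0], [0.0, 1.0]]
matching_views = [[0.9, 0.1], [0.1, 0.9]]      # each view agrees with its pair
mismatched_views = [[0.1, 0.9], [0.9, 0.1]]    # each view resembles the other image

loss_matching = nt_xent(first_views, matching_views)
loss_mismatched = nt_xent(first_views, mismatched_views)
print(loss_matching < loss_mismatched)
```

The loss is lower when the two views of the same image land close together and away from other images, so minimizing it trains the encoder without labels.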

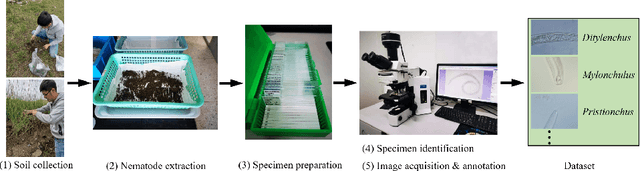

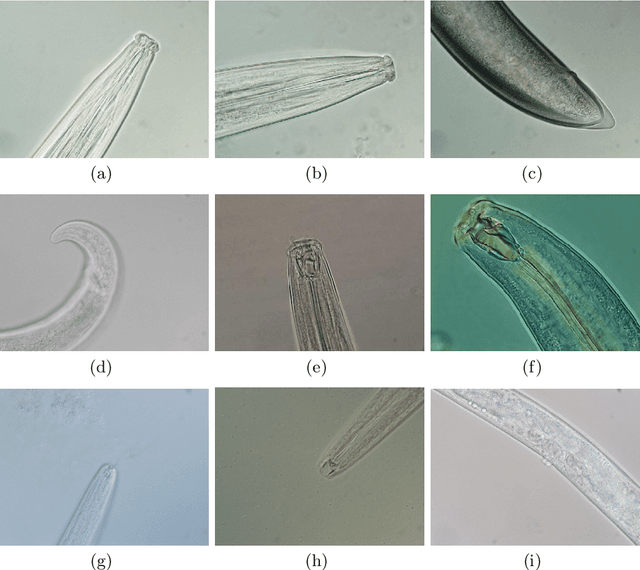

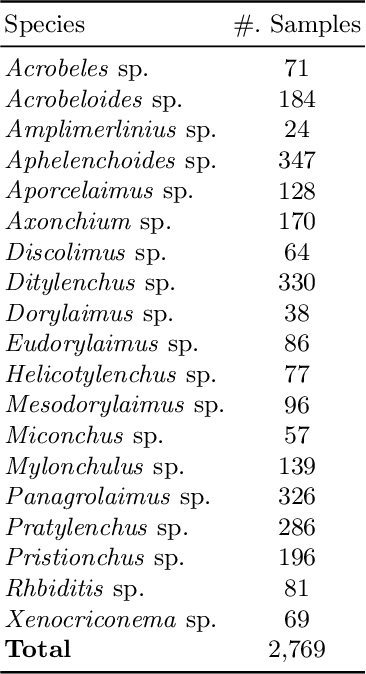

I-Nema: A Biological Image Dataset for Nematode Recognition

Mar 15, 2021

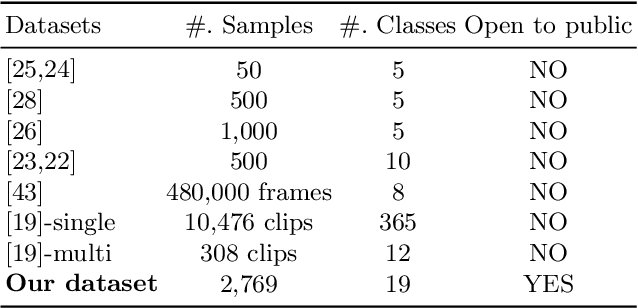

Abstract: Nematode worms are one of the most abundant metazoan groups on earth, occupying diverse ecological niches. Accurate recognition or identification of nematodes is of great importance for pest control, soil ecology, biogeography, habitat conservation, and responding to climate change. Computer vision and image processing have seen a few successes in species recognition of nematodes; however, better recognition is still in great demand. In this paper, we identify two main bottlenecks: (1) the lack of a publicly available imaging dataset for diverse species of nematodes (especially species found only in natural environments), which requires considerable human resources in field work and expertise in taxonomy, and (2) the lack of a standard benchmark of state-of-the-art deep learning techniques on such a dataset, which demands a background in computer science. With these in mind, we propose an image dataset consisting of diverse nematodes (both laboratory cultured and naturally isolated), which, to our knowledge, is the first of its kind in the community. We further set up a species recognition benchmark by employing state-of-the-art deep learning networks on this dataset. We discuss the experimental results, compare the recognition accuracy of different networks, and show the challenges of our dataset. We make our dataset publicly available at: https://github.com/xuequanlu/I-Nema
