Samuel Wilson

Noisy Inliers Make Great Outliers: Out-of-Distribution Object Detection with Noisy Synthetic Outliers

Aug 29, 2022

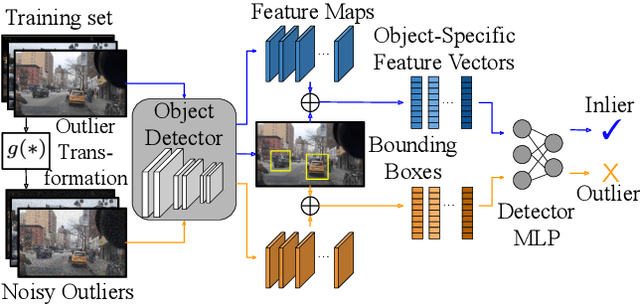

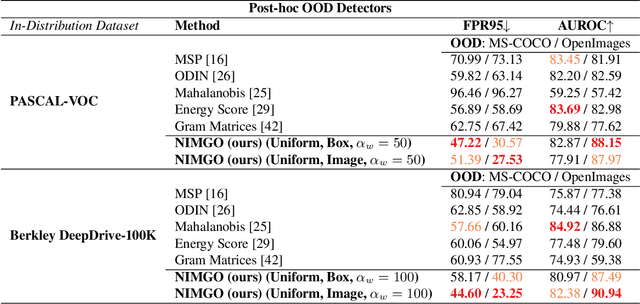

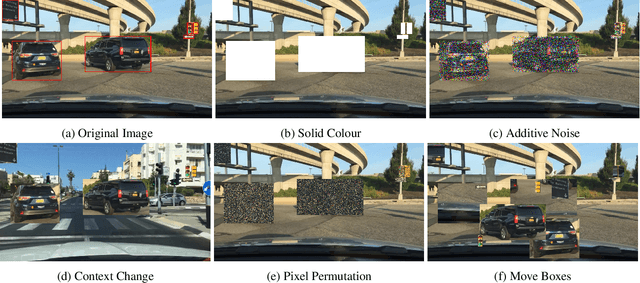

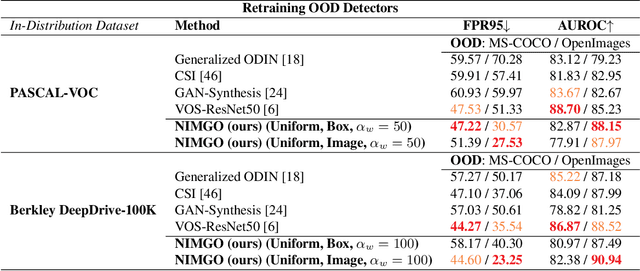

Abstract: Many high-performing works on out-of-distribution (OOD) detection use real or synthetically generated outlier data to regularise model confidence; however, they often require retraining of the base network or specialised model architectures. Our work demonstrates that Noisy Inliers Make Great Outliers (NIMGO) in the challenging field of OOD object detection. We hypothesise that synthetic outliers need only be minimally perturbed variants of the in-distribution (ID) data in order to train a discriminator to identify OOD samples, without expensive retraining of the base network. To test our hypothesis, we generate a synthetic outlier set by applying an additive-noise perturbation to ID samples at the image or bounding-box level. An auxiliary feature monitoring multilayer perceptron (MLP) is then trained to detect OOD feature representations using the perturbed ID samples as a proxy. During testing, we demonstrate that the auxiliary MLP distinguishes ID samples from OOD samples at a state-of-the-art level, reducing the false positive rate by more than 20% (absolute) over the previous state-of-the-art on the OpenImages dataset. Extensive additional ablations provide empirical evidence in support of our hypothesis.
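The pipeline described in this abstract (perturb ID samples with additive noise, pass both sets through a frozen base network, train a small MLP to separate them) can be illustrated with a toy sketch. This is not the authors' implementation. The frozen "base network" here is a hypothetical random projection with a tanh nonlinearity, the inputs are random vectors rather than images or bounding boxes, and the noise scale, layer sizes, and learning rate are arbitrary assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

def extract_features(x, w):
    """Hypothetical stand-in for a frozen base network: fixed projection + tanh."""
    return np.tanh(x @ w)

def make_noisy_outliers(x, sigma, rng):
    """Additive Gaussian noise on ID inputs gives the synthetic outlier set."""
    return x + sigma * rng.standard_normal(x.shape)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward(params, feats):
    """One-hidden-layer MLP; returns hidden activations and P(outlier)."""
    w1, b1, w2, b2 = params
    h = np.maximum(feats @ w1 + b1, 0.0)
    return h, sigmoid(h @ w2 + b2)

def train_monitor(feats, labels, rng, hidden=16, lr=0.1, steps=500):
    """Full-batch gradient descent on binary cross-entropy (0 = ID, 1 = noisy)."""
    n, d = feats.shape
    params = [0.1 * rng.standard_normal((d, hidden)), np.zeros(hidden),
              0.1 * rng.standard_normal(hidden), 0.0]
    losses = []
    for _ in range(steps):
        h, p = forward(params, feats)
        losses.append(float(-np.mean(labels * np.log(p + 1e-9)
                                     + (1 - labels) * np.log(1 - p + 1e-9))))
        g = (p - labels) / n                       # gradient w.r.t. the logits
        gh = np.outer(g, params[2]) * (h > 0)      # backprop through ReLU
        grads = [feats.T @ gh, gh.sum(axis=0), h.T @ g, g.sum()]
        params = [w - lr * gw for w, gw in zip(params, grads)]
    return params, losses

# Toy ID data and a frozen feature extractor (both assumptions for this sketch).
x_id = rng.standard_normal((256, 8))
w_frozen = rng.standard_normal((8, 16)) / np.sqrt(8)
x_noisy = make_noisy_outliers(x_id, sigma=1.0, rng=rng)

# Only the auxiliary MLP is trained; w_frozen is never updated.
feats = np.vstack([extract_features(x_id, w_frozen),
                   extract_features(x_noisy, w_frozen)])
labels = np.concatenate([np.zeros(256), np.ones(256)])
params, losses = train_monitor(feats, labels, rng)

# At test time the MLP output serves as an OOD score for new features.
_, scores_id = forward(params, extract_features(x_id, w_frozen))
```

The point of the sketch is structural: the base network's weights stay fixed, and the only trained component is the monitor that reads its features.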

Hyperdimensional Feature Fusion for Out-Of-Distribution Detection

Dec 10, 2021

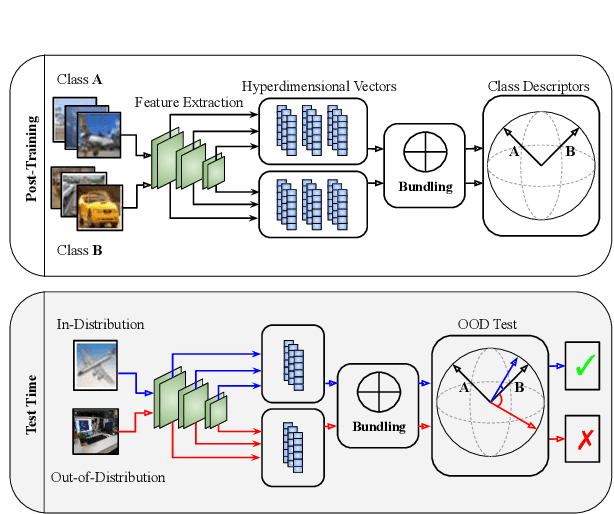

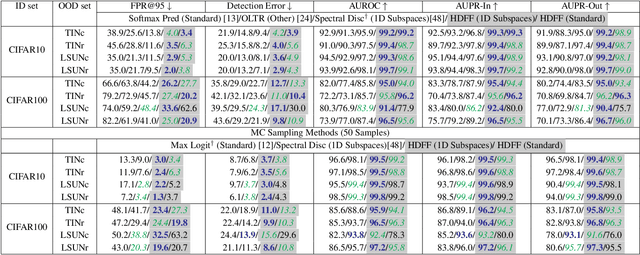

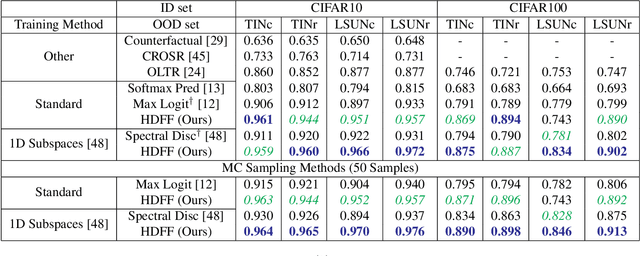

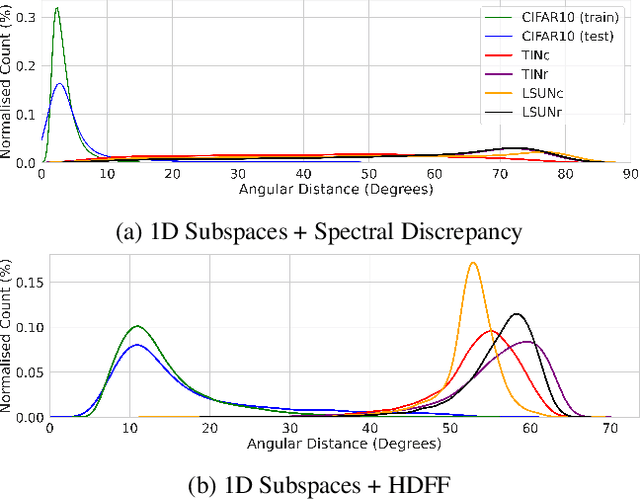

Abstract: We introduce powerful ideas from Hyperdimensional Computing into the challenging field of Out-of-Distribution (OOD) detection. In contrast to most existing work that performs OOD detection based on only a single layer of a neural network, we use similarity-preserving semi-orthogonal projection matrices to project the feature maps from multiple layers into a common vector space. By repeatedly applying the bundling operation $\oplus$, we create expressive class-specific descriptor vectors for all in-distribution classes. At test time, a simple and efficient cosine similarity calculation between descriptor vectors consistently identifies OOD samples with better performance than the current state-of-the-art. We show that the hyperdimensional fusion of multiple network layers is critical to achieve the best general performance.
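A minimal sketch of the three ingredients named in this abstract (semi-orthogonal projection, bundling, cosine similarity) is given below. It is an illustration under stated assumptions, not the authors' code: each layer is represented by an already-pooled feature vector, bundling $\oplus$ is taken to be element-wise addition, the semi-orthogonal matrices come from a QR decomposition of a random Gaussian matrix, and the dimensions, toy class means, and the `ood_score` definition (one minus the maximum cosine similarity) are hypothetical choices.

```python
import numpy as np

rng = np.random.default_rng(0)
D = 512                 # size of the shared hyperdimensional space (assumed)
layer_dims = [32, 64]   # feature sizes of two hypothetical network layers

def semi_orthogonal(d_in, d_out, rng):
    """Return a (d_out, d_in) matrix with orthonormal columns (needs d_out >= d_in)."""
    q, _ = np.linalg.qr(rng.standard_normal((d_out, d_in)))
    return q

def fuse(layer_feats, projections):
    """Project each layer's vector into the shared space and bundle by addition."""
    return sum(p @ f for p, f in zip(projections, layer_feats))

def cosine(a, b):
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b) + 1e-12))

projections = [semi_orthogonal(d, D, rng) for d in layer_dims]

# Toy in-distribution data: each class has one mean vector per layer.
n_classes, n_train = 3, 50
class_means = [[3.0 * rng.standard_normal(d) for d in layer_dims]
               for _ in range(n_classes)]

def sample(c):
    """Draw per-layer features for one sample of class c."""
    return [m + rng.standard_normal(m.shape) for m in class_means[c]]

# Class descriptors: bundle the fused vectors of all training samples per class.
descriptors = []
for c in range(n_classes):
    bundle = np.zeros(D)
    for _ in range(n_train):
        bundle += fuse(sample(c), projections)
    descriptors.append(bundle)

def ood_score(layer_feats):
    """Higher means less similar to every class descriptor."""
    h = fuse(layer_feats, projections)
    return 1.0 - max(cosine(h, d) for d in descriptors)
```

Because each projection has orthonormal columns, inner products within a layer are preserved after projection, which is the "similarity-preserving" property the abstract relies on. Projections for different layers are only approximately orthogonal to each other in this sketch, which is the usual high-dimensional approximation.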
