Sally Ghanem

Information Fusion: Scaling Subspace-Driven Approaches

Apr 26, 2022

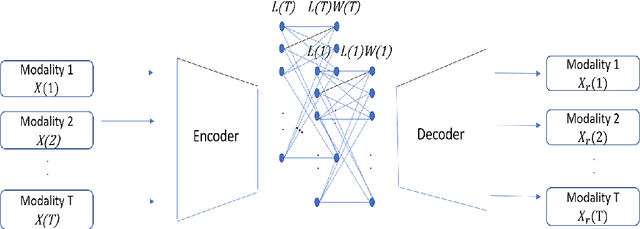

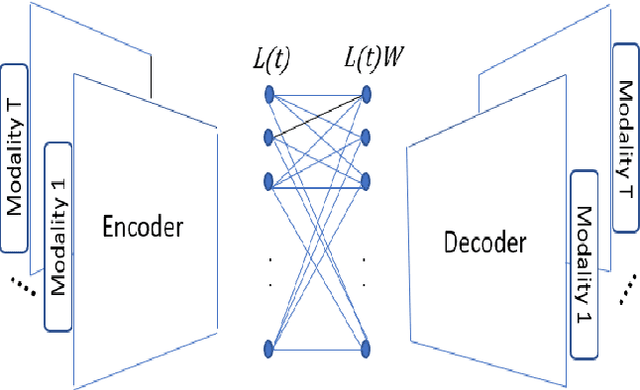

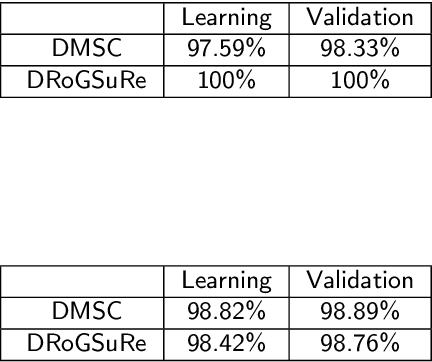

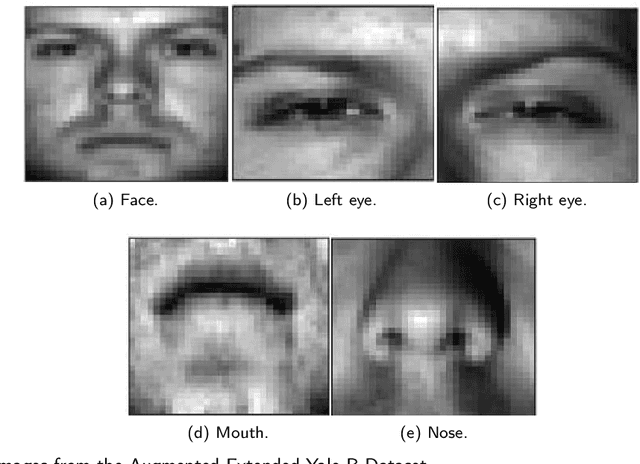

Abstract:In this work, we seek to exploit the deep structure of multi-modal data to robustly exploit the group subspace distribution of the information using the Convolutional Neural Network (CNN) formalism. Upon unfolding the set of subspaces constituting each data modality, and learning their corresponding encoders, an optimized integration of the generated inherent information is carried out to yield a characterization of various classes. Referred to as deep Multimodal Robust Group Subspace Clustering (DRoGSuRe), this approach is compared against the independently developed state-of-the-art approach named Deep Multimodal Subspace Clustering (DMSC). Experiments on different multimodal datasets show that our approach is competitive and more robust in the presence of noise.

Latent Code-Based Fusion: A Volterra Neural Network Approach

Apr 10, 2021

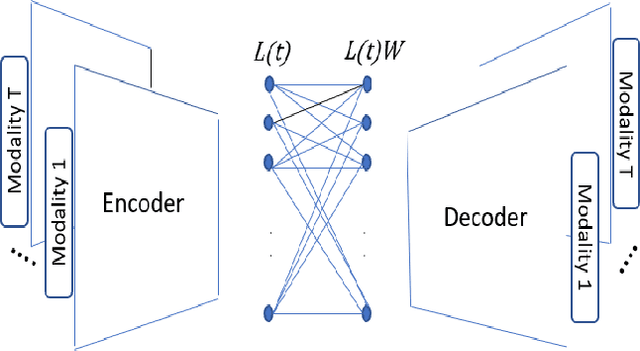

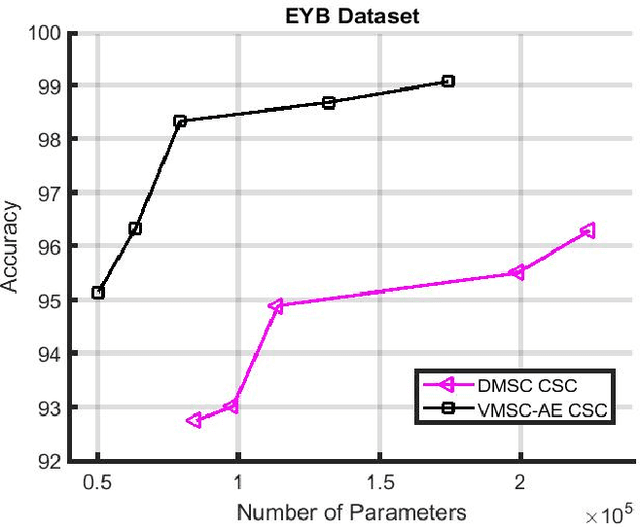

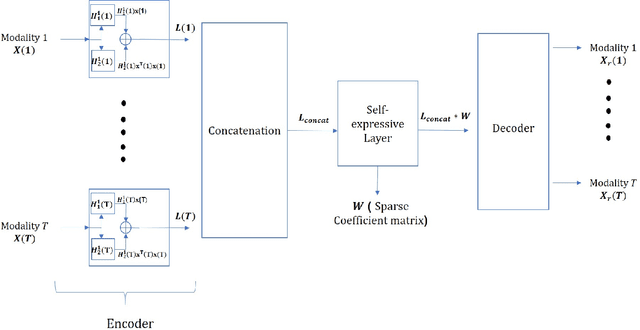

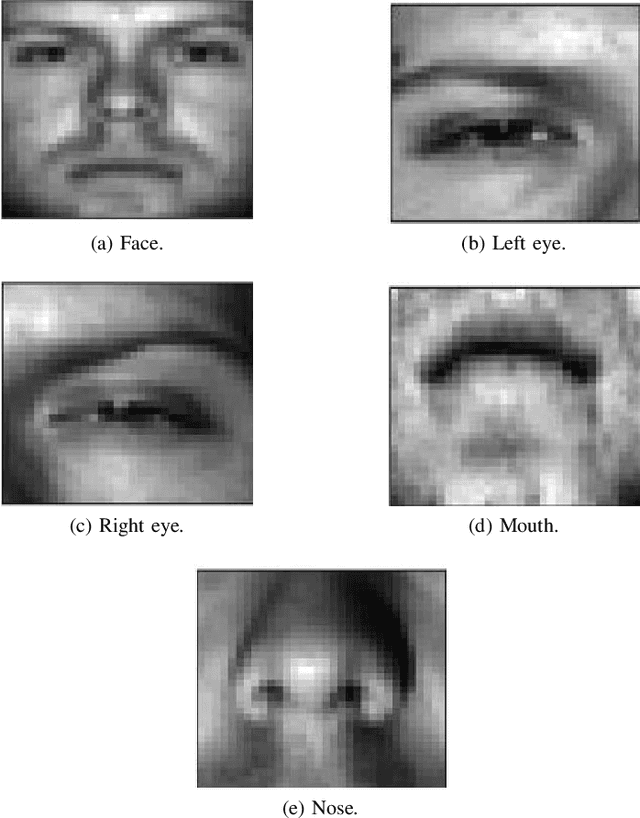

Abstract:We propose a deep structure encoder using the recently introduced Volterra Neural Networks (VNNs) to seek a latent representation of multi-modal data whose features are jointly captured by a union of subspaces. The so-called self-representation embedding of the latent codes leads to a simplified fusion which is driven by a similarly constructed decoding. The Volterra Filter architecture achieved reduction in parameter complexity is primarily due to controlled non-linearities being introduced by the higher-order convolutions in contrast to generalized activation functions. Experimental results on two different datasets have shown a significant improvement in the clustering performance for VNNs auto-encoder over conventional Convolutional Neural Networks (CNNs) auto-encoder. In addition, we also show that the proposed approach demonstrates a much-improved sample complexity over CNN-based auto-encoder with a superb robust classification performance.

Robust Group Subspace Recovery: A New Approach for Multi-Modality Data Fusion

Jun 18, 2020

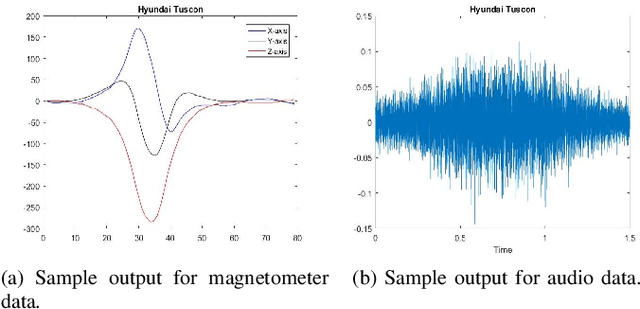

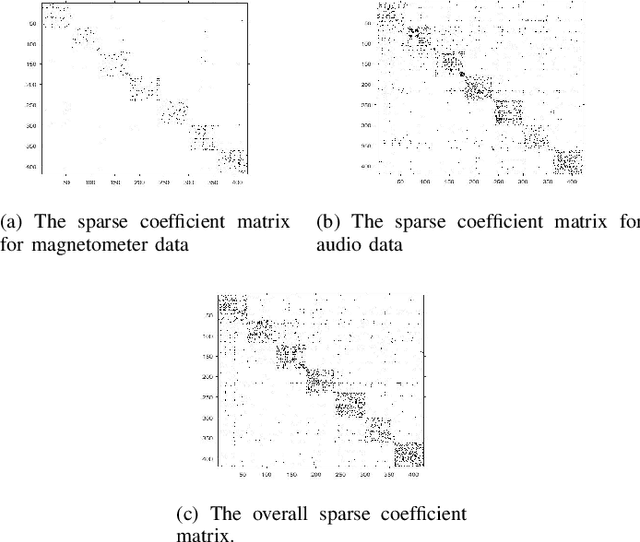

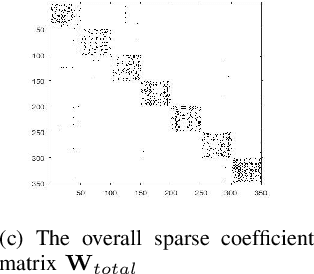

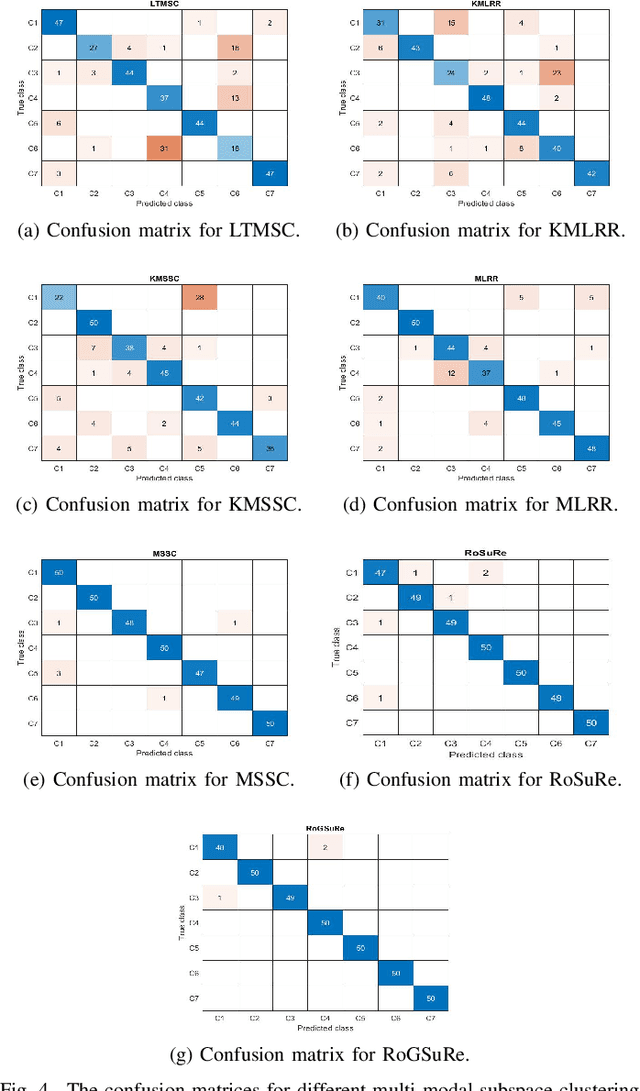

Abstract:Robust Subspace Recovery (RoSuRe) algorithm was recently introduced as a principled and numerically efficient algorithm that unfolds underlying Unions of Subspaces (UoS) structure, present in the data. The union of Subspaces (UoS) is capable of identifying more complex trends in data sets than simple linear models. We build on and extend RoSuRe to prospect the structure of different data modalities individually. We propose a novel multi-modal data fusion approach based on group sparsity which we refer to as Robust Group Subspace Recovery (RoGSuRe). Relying on a bi-sparsity pursuit paradigm and non-smooth optimization techniques, the introduced framework learns a new joint representation of the time series from different data modalities, respecting an underlying UoS model. We subsequently integrate the obtained structures to form a unified subspace structure. The proposed approach exploits the structural dependencies between the different modalities data to cluster the associated target objects. The resulting fusion of the unlabeled sensors' data from experiments on audio and magnetic data has shown that our method is competitive with other state of the art subspace clustering methods. The resulting UoS structure is employed to classify newly observed data points, highlighting the abstraction capacity of the proposed method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge