Sai Krishna

Scrapyard AI

Apr 09, 2026

Abstract: This paper considers AI model churn as an opportunity for frugal investigation of large AI models. It describes how the incessant push for ever more powerful AI systems leaves in its wake a collection of obsolete yet powerful AI models, discarded in a veritable scrapyard of AI production. This scrapyard offers a potent opportunity for resource-constrained experimentation into AI systems. As in the physical scrapyard, nothing ever truly disappears in the AI scrapyard; it is just waiting to be reconfigured into something else. Project Nudge-x is an example of what can emerge from the AI scrapyard. Nudge-x seeks to manipulate legacy AI models to describe how mining sites across the planet are impacting landscapes and lives. By sharing this collection of brutal landscape interventions with people and AI systems alike, Nudge-x creates a venue for the appreciation of a history sadly shared between AI and people.

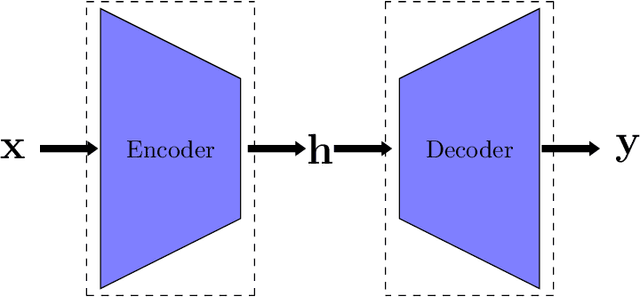

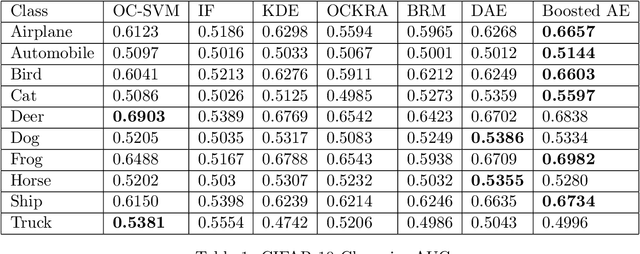

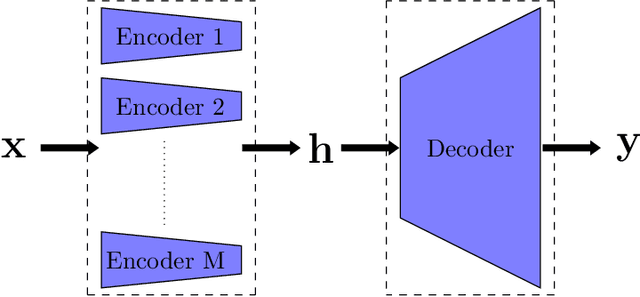

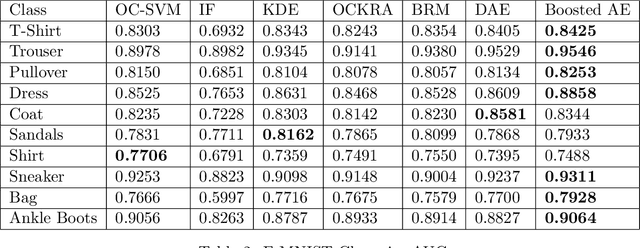

How to boost autoencoders?

Oct 28, 2021

Abstract: Autoencoders are a category of neural networks with applications in numerous domains and hence, improvement of their performance is gaining substantial interest from the machine learning community. Ensemble methods, such as boosting, are often adopted to enhance the performance of regular neural networks. In this work, we discuss the challenges associated with boosting autoencoders and propose a framework to overcome them. The proposed method ensures that the advantages of boosting are realized when either output (encoded or reconstructed) is used. The usefulness of the boosted ensemble is demonstrated in two applications that widely employ autoencoders: anomaly detection and clustering.

Deep Active Localization

Mar 05, 2019

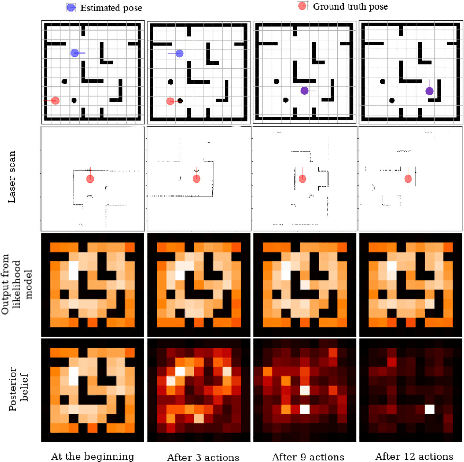

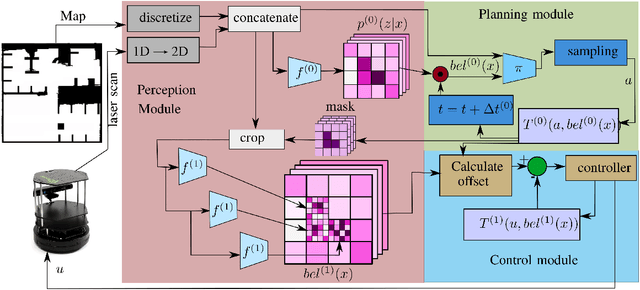

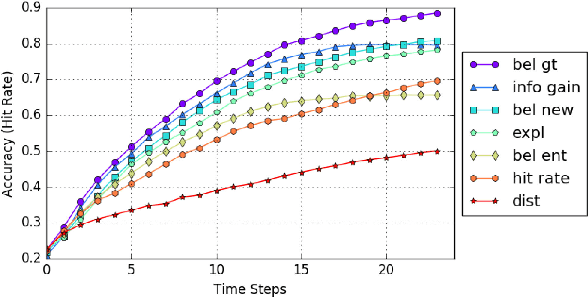

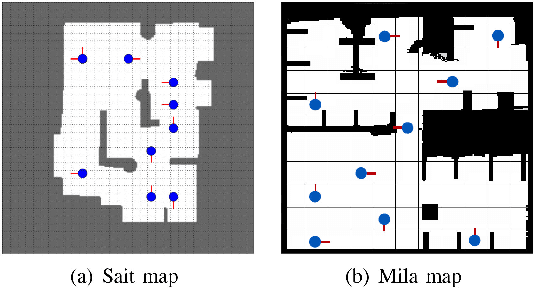

Abstract: Active localization is the problem of generating robot actions that allow it to maximally disambiguate its pose within a reference map. Traditional approaches to this use an information-theoretic criterion for action selection and hand-crafted perceptual models. In this work we propose an end-to-end differentiable method for learning to take informative actions that is trainable entirely in simulation and then transferable to real robot hardware with zero refinement. The system is composed of two modules: a convolutional neural network for perception, and a deep reinforcement learned planning module. We introduce a multi-scale approach to the learned perceptual model since the accuracy needed to perform action selection with reinforcement learning is much less than the accuracy needed for robot control. We demonstrate that the resulting system outperforms using the traditional approach for either perception or planning. We also demonstrate our approach's robustness to different map configurations and other nuisance parameters through the use of domain randomization in training. The code is also compatible with the OpenAI gym framework, as well as the Gazebo simulator.
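For readers unfamiliar with the "information-theoretic criterion" that the abstract attributes to traditional approaches, the following is a minimal sketch of one common form of it: choose the action whose expected posterior entropy over poses is lowest. It is not the paper's learned method. The one-dimensional circular map, the binary landmark sensor, the `p_hit` noise value, and all function names are assumptions made purely for illustration.

```python
import math

def entropy(belief):
    """Shannon entropy (in nats) of a discrete belief over poses."""
    return -sum(p * math.log(p) for p in belief if p > 0.0)

def predict(belief, step):
    """Shift the belief by `step` cells on a circular map (noise-free motion, assumed)."""
    n = len(belief)
    return [belief[(i - step) % n] for i in range(n)]

def likelihood(world, z, p_hit):
    """P(z | pose) for a binary sensor that reads the cell label correctly with prob. p_hit."""
    return [p_hit if cell == z else 1.0 - p_hit for cell in world]

def expected_posterior_entropy(belief, world, step, p_hit=0.9):
    """Average entropy of the Bayes posterior over the two possible observations."""
    predicted = predict(belief, step)
    total = 0.0
    for z in (0, 1):
        joint = [b * l for b, l in zip(predicted, likelihood(world, z, p_hit))]
        p_z = sum(joint)  # probability of observing z after this action
        if p_z > 0.0:
            posterior = [j / p_z for j in joint]
            total += p_z * entropy(posterior)
    return total

def select_action(belief, world, actions, p_hit=0.9):
    """Pick the action expected to leave the least pose uncertainty."""
    return min(actions, key=lambda a: expected_posterior_entropy(belief, world, a, p_hit))
```

In this toy setting, a robot that is equally likely to be in two cells with identical labels gains nothing by staying put, while a move that brings the two hypotheses over differently labeled cells is preferred. The learned planner described in the abstract replaces this hand-specified criterion with a reinforcement-learned policy.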
