S. Soatto

Stacked Residuals of Dynamic Layers for Time Series Anomaly Detection

Feb 25, 2022

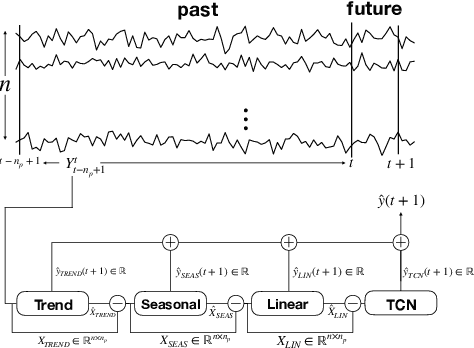

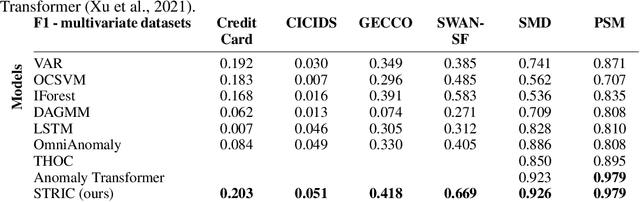

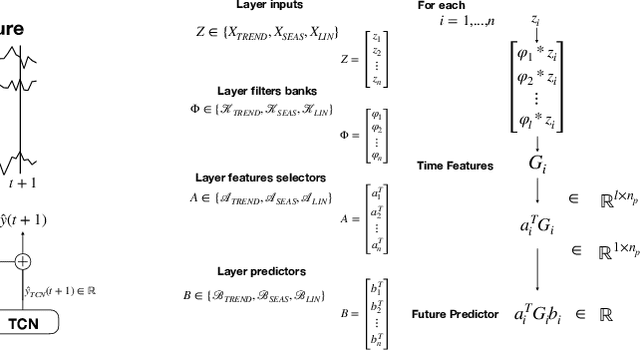

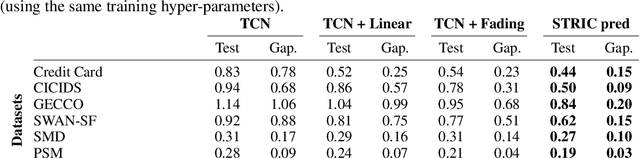

Abstract: We present an end-to-end differentiable neural network architecture that performs anomaly detection in multivariate time series by incorporating a Sequential Probability Ratio Test on the prediction residual. The architecture is a cascade of dynamical systems designed to separate linearly predictable components of the signal, such as trends and seasonality, from the non-linear ones. The former are modeled by local Linear Dynamic Layers, and their residual is fed to a generic Temporal Convolutional Network that also aggregates global statistics from different time series as context for the local predictions of each one. The last layer implements the anomaly detector, which exploits the temporal structure of the prediction residuals to detect both isolated point anomalies and set-point changes. It is based on a novel application of the classic CUSUM algorithm, adapted through the use of a variational approximation of f-divergences. The model automatically adapts to the time scales of the observed signals. It approximates a SARIMA model at initialization and, as more data is observed, auto-tunes to the statistics of the signal and its covariates without the need for supervision. The resulting system, which we call STRIC, outperforms both state-of-the-art robust statistical methods and deep neural network architectures on multiple anomaly detection benchmarks.
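To make the detector stage concrete, the following is a minimal sketch of the textbook two-sided CUSUM test applied to prediction residuals. It is not the STRIC detector, which adapts CUSUM through a variational approximation of f-divergences; the function name `cusum_alarms` and the parameters `drift` and `threshold` are illustrative assumptions, and the residuals are assumed to be already standardized.

```python
import numpy as np

def cusum_alarms(residuals, drift=0.5, threshold=5.0):
    """Classic two-sided CUSUM on (assumed standardized) residuals.

    Returns the indices at which either cumulative statistic exceeds
    `threshold`. Both statistics are reset after each alarm.
    """
    r = np.asarray(residuals, dtype=float)
    pos, neg = 0.0, 0.0          # upward / downward cumulative evidence
    alarms = []
    for t, x in enumerate(r):
        pos = max(0.0, pos + x - drift)
        neg = max(0.0, neg - x - drift)
        if pos > threshold or neg > threshold:
            alarms.append(t)
            pos, neg = 0.0, 0.0  # restart the test after an alarm
    return alarms

# Synthetic residuals (an assumption for illustration): zero error,
# then a set-point change of +2 starting at index 50.
residuals = np.concatenate([np.zeros(50), np.full(50, 2.0)])
alarms = cusum_alarms(residuals)
print(alarms[:3])
```

Because evidence accumulates over time, a sustained shift in the residual mean triggers an alarm after a few samples even when no single sample is large, which is the property that lets a CUSUM-style test pick up set-point changes as well as isolated spikes.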

A geometric interpretation of stochastic gradient descent using diffusion metrics

Oct 27, 2019

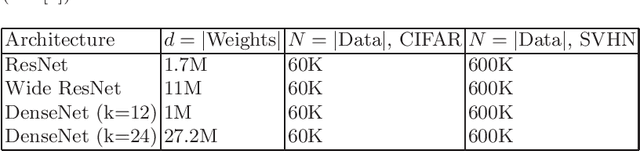

Abstract: Stochastic gradient descent (SGD) is a key ingredient in the training of deep neural networks, and yet its geometrical significance appears elusive. We study a deterministic model in which the trajectories of our dynamical systems are described via geodesics of a family of metrics arising from the diffusion matrix. These metrics encode information about the highly non-isotropic gradient noise in SGD. We establish a parallel with General Relativity models, where the role of the electromagnetic field is played by the gradient of the loss function. We compute an example of a two-layer network.
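As a rough illustration of the object the abstract refers to, the sketch below estimates a diffusion matrix as the covariance of per-sample gradients, which is one common definition and is assumed here rather than taken from the paper. The toy linear regression problem, the function name `diffusion_matrix`, and the feature scaling are all illustrative assumptions.

```python
import numpy as np

def diffusion_matrix(per_sample_grads):
    """Covariance of per-sample gradients (one row per sample)."""
    g = np.asarray(per_sample_grads, dtype=float)
    centered = g - g.mean(axis=0, keepdims=True)
    return centered.T @ centered / g.shape[0]

# Toy least-squares problem with badly scaled features (assumed data).
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 2)) * np.array([3.0, 0.3])
y = X @ np.array([1.0, -2.0]) + rng.normal(scale=0.1, size=500)

w = np.zeros(2)
# Gradient of 0.5 * (x . w - y)^2 with respect to w, for each sample.
grads = (X @ w - y)[:, None] * X

D = diffusion_matrix(grads)
eigvals = np.linalg.eigvalsh(D)          # ascending order
anisotropy = eigvals[-1] / eigvals[0]    # 1.0 would mean isotropic noise
print(D)
print(anisotropy)
```

A ratio of eigenvalues well above one indicates that the gradient noise is far from isotropic, which is the kind of structure a metric built from the diffusion matrix would encode.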

Second-order Shape Optimization for Geometric Inverse Problems in Vision

Apr 13, 2014

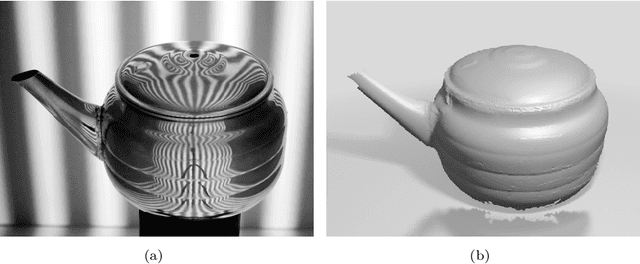

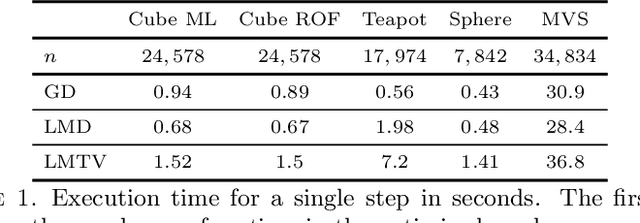

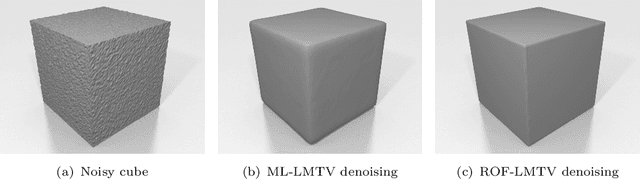

Abstract: We develop a method for optimization in shape spaces, i.e., sets of surfaces modulo re-parametrization. Unlike previously proposed gradient flows, we achieve superlinear convergence rates through a subtle approximation of the shape Hessian, which is generally hard to compute and suffers from a series of degeneracies. Our analysis highlights the role of mean curvature motion in comparison with first-order schemes: instead of surface area, our approach penalizes deformation, either by its Dirichlet energy or total variation. The latter regularizer motivates the development of an alternating direction method of multipliers on triangular meshes. Therein, a conjugate-gradients solver enables us to bypass formation of the Gaussian normal equations appearing in the course of the overall optimization. We combine all of the aforementioned ideas in a versatile geometric variation-regularized Levenberg-Marquardt-type method applicable to a variety of shape functionals, depending on intrinsic properties of the surface such as normal field and curvature as well as its embedding into space. Promising experimental results are reported.
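The idea of solving a damped least-squares step with conjugate gradients, without ever forming the normal-equation matrix, can be sketched in a plain Euclidean setting. This is not the shape-space method of the paper: the curve-fitting problem, the damping schedule, and the names `conjugate_gradient` and `levenberg_marquardt` are assumptions chosen for illustration.

```python
import numpy as np

def conjugate_gradient(matvec, b, iters=50, tol=1e-20):
    """Solve A x = b for symmetric positive definite A given only v -> A v."""
    x = np.zeros_like(b)
    r = b.copy()
    p = r.copy()
    rs = r @ r
    for _ in range(iters):
        if rs < tol:
            break
        Ap = matvec(p)
        alpha = rs / (p @ Ap)
        x += alpha * p
        r -= alpha * Ap
        rs_new = r @ r
        p = r + (rs_new / rs) * p
        rs = rs_new
    return x

def levenberg_marquardt(residual, jacobian, x0, lam=1e-2, steps=50):
    """Damped Gauss-Newton; each step solves (J^T J + lam I) d = -J^T r by CG."""
    x = np.asarray(x0, dtype=float)
    cost = 0.5 * np.sum(residual(x) ** 2)
    for _ in range(steps):
        r, J = residual(x), jacobian(x)
        # Only products with J and J^T are used; J^T J is never assembled.
        matvec = lambda v: J.T @ (J @ v) + lam * v
        delta = conjugate_gradient(matvec, -(J.T @ r))
        new_cost = 0.5 * np.sum(residual(x + delta) ** 2)
        if new_cost < cost:
            x, cost, lam = x + delta, new_cost, lam * 0.5  # accept, trust more
        else:
            lam *= 4.0                                     # reject, damp more
    return x

# Assumed toy problem: fit y = a * exp(b * t) to noise-free samples.
t = np.linspace(0.0, 1.0, 20)
y = 2.0 * np.exp(-1.5 * t)
residual = lambda p: p[0] * np.exp(p[1] * t) - y
jacobian = lambda p: np.stack([np.exp(p[1] * t), p[0] * t * np.exp(p[1] * t)], axis=1)

x_fit = levenberg_marquardt(residual, jacobian, x0=[1.0, 0.0])
print(x_fit)
```

Working through matrix-vector products matters most when the Jacobian is large and sparse, as on a mesh, where the explicit product of the Jacobian transpose with the Jacobian would be costly to build and store.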

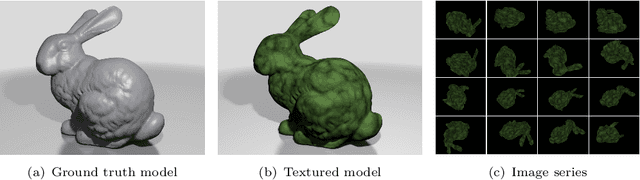

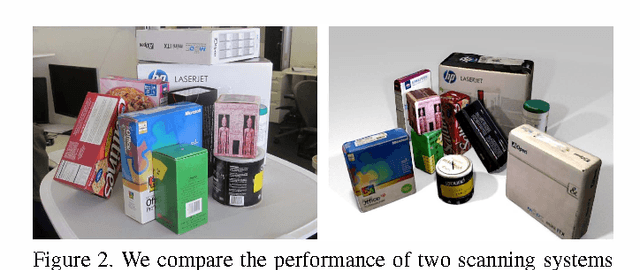

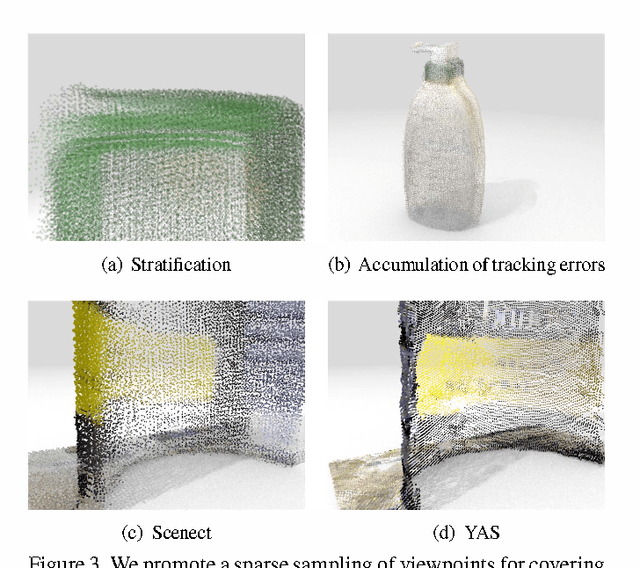

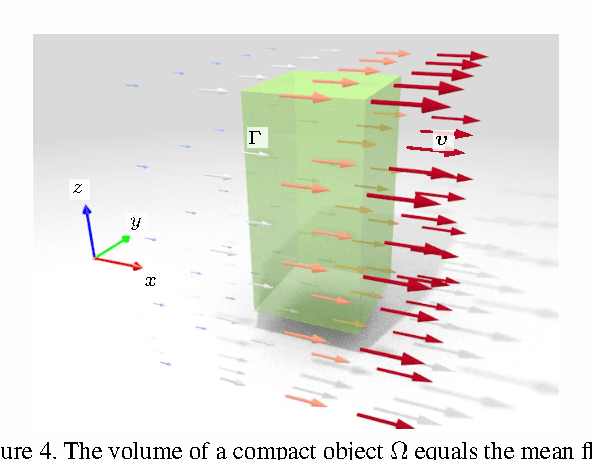

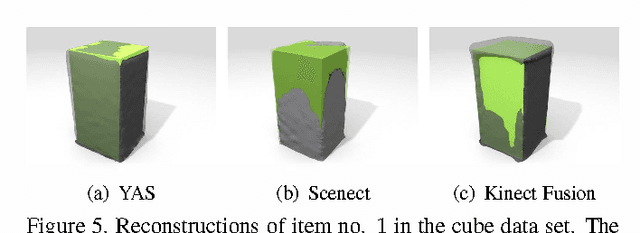

Volumetric Reconstruction Applied to Perceptual Studies of Size and Weight

Nov 11, 2013

Abstract: We explore the application of volumetric reconstruction from structured-light sensors in cognitive neuroscience, specifically in the quantification of the size-weight illusion, whereby humans tend to systematically perceive smaller objects as heavier. We investigate the performance of two commercial structured-light scanning systems in comparison to one we developed specifically for this application. Our method has two main distinguishing features. First, it only samples a sparse series of viewpoints, unlike other systems such as KinectFusion. Second, instead of building a distance field for the purpose of points-to-surface conversion directly, we pursue a first-order approach: the distance function is recovered from its gradient by a screened Poisson reconstruction, which is very resilient to noise and yet preserves high-frequency signal components. Our experiments show that the quality of metric reconstruction from structured-light sensors is subject to systematic biases, and highlight the factors that influence it. Our main performance index rates estimates of volume (a proxy of size), for which we review a well-known formula applicable to incomplete meshes. Our code and data will be made publicly available upon completion of the anonymous review process.
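The abstract does not state which volume formula is reviewed. As a hedged illustration, the sketch below uses the standard signed-tetrahedron sum that follows from the divergence theorem, and it assumes a closed, consistently outward-oriented triangle mesh. The function name `mesh_volume` and the test tetrahedron are illustrative assumptions.

```python
import numpy as np

def mesh_volume(vertices, faces):
    """Volume enclosed by a closed triangle mesh with outward-facing normals.

    Sums the signed volumes of the tetrahedra spanned by the origin and
    each triangle: V = (1/6) * sum_f  a_f . (b_f x c_f).
    """
    v = np.asarray(vertices, dtype=float)
    f = np.asarray(faces, dtype=int)
    a, b, c = v[f[:, 0]], v[f[:, 1]], v[f[:, 2]]
    return np.einsum("ij,ij->", a, np.cross(b, c)) / 6.0

# Unit right tetrahedron; its exact volume is 1/6.
vertices = np.array([[0.0, 0.0, 0.0],
                     [1.0, 0.0, 0.0],
                     [0.0, 1.0, 0.0],
                     [0.0, 0.0, 1.0]])
faces = np.array([[1, 2, 3],   # slanted face
                  [0, 2, 1],   # z = 0 face
                  [0, 1, 3],   # y = 0 face
                  [0, 3, 2]])  # x = 0 face

volume = mesh_volume(vertices, faces)
shifted = mesh_volume(vertices + np.array([2.0, 3.0, 4.0]), faces)
print(volume, shifted)
```

For a closed mesh the result does not depend on where the origin lies, which the shifted copy checks. For a mesh with holes the same sum depends on the choice of origin, so any estimate on incomplete scans needs additional care.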
