P. Chaudhari

A geometric interpretation of stochastic gradient descent using diffusion metrics

Oct 27, 2019

Abstract: Stochastic gradient descent (SGD) is a key ingredient in the training of deep neural networks, and yet its geometrical significance appears elusive. We study a deterministic model in which the trajectories of our dynamical systems are described via geodesics of a family of metrics arising from the diffusion matrix. These metrics encode information about the highly non-isotropic gradient noise in SGD. We establish a parallel with General Relativity models, where the role of the electromagnetic field is played by the gradient of the loss function. We compute an example of a two-layer network.

Discretization of Temporal Data: A Survey

Feb 18, 2014

Abstract: In the real world, huge amounts of temporal data must be processed in many application areas, such as scientific, financial, network monitoring, and sensor data analysis. Data mining techniques are primarily oriented toward handling discrete features. For temporal data, time plays an important role in the characteristics of the data. To account for this effect, data discretization techniques have to take time into account during processing, finding intervals of data that are more concise and precise with respect to time. This survey reviews different data discretization techniques used in temporal data applications according to the inclusion or exclusion of class labels, the temporal order of the data, and the handling of stream data, with the aim of opening research directions for temporal data discretization that improve the performance of data mining techniques.

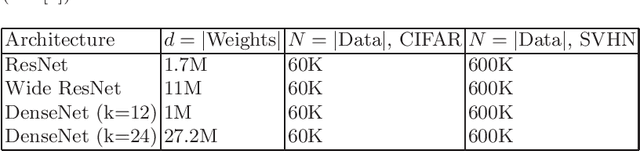

* 4 pages, 1 Table
