Robert Wagner

Physics Driven Image Simulation from Commercial Satellite Imagery

Apr 21, 2025

Abstract: Physics driven image simulation allows for the modeling and creation of realistic imagery beyond what is afforded by typical rendering pipelines. We aim to automatically generate a physically realistic scene for simulation of a given region using satellite imagery to model the scene geometry, drive material estimates, and populate the scene with dynamic elements. We present automated techniques to utilize satellite imagery throughout the simulated scene to expedite scene construction and decrease manual overhead. Our technique does not use lidar, enabling simulations that could not be constructed previously. To develop a 3D scene, we model the various components of the real location, addressing the terrain, modeling man-made structures, and populating the scene with smaller elements such as vegetation and vehicles. To create the scene we begin with a Digital Surface Model, which serves as the basis for scene geometry and allows us to reason about the real location in a common 3D frame of reference. These simulated scenes can provide increased fidelity with less manual intervention for novel locations on Earth, and can facilitate the development of algorithms and processing pipelines for imagery ranging from UV to LWIR ($200\,\mathrm{nm}$ to $20\,\mu\mathrm{m}$).
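As a rough illustration of how a Digital Surface Model can serve as the basis for scene geometry, the sketch below turns a regular grid of heights into a triangle mesh. It is not the authors' pipeline: the function name `dsm_to_mesh`, the `gsd` (ground sample distance) parameter, and the choice of two triangles per grid cell are all assumptions made for this example.

```python
def dsm_to_mesh(dsm, gsd=1.0):
    """Convert a DSM height grid into a triangle mesh (hypothetical helper).

    dsm: list of rows, each a list of heights in meters (assumed regular grid).
    gsd: assumed ground spacing between neighboring samples, in meters.
    Returns (vertices, faces): vertices are (x, y, z) tuples, faces are
    triples of vertex indices.
    """
    rows, cols = len(dsm), len(dsm[0])

    # One vertex per DSM sample: x from the column, y from the row, z from the height.
    vertices = [
        (c * gsd, r * gsd, float(dsm[r][c]))
        for r in range(rows)
        for c in range(cols)
    ]

    # Split every grid cell into two triangles. The index order is chosen so
    # that the face normals of a flat grid point in the +z direction.
    faces = []
    for r in range(rows - 1):
        for c in range(cols - 1):
            tl = r * cols + c      # sample at (row r,     col c)
            tr = tl + 1            # sample at (row r,     col c + 1)
            bl = tl + cols         # sample at (row r + 1, col c)
            br = bl + 1            # sample at (row r + 1, col c + 1)
            faces.append((tl, tr, bl))
            faces.append((tr, br, bl))
    return vertices, faces


# Tiny 3 x 4 example grid with made-up heights.
example_dsm = [
    [10.0, 10.5, 11.0, 11.0],
    [10.0, 12.0, 12.5, 11.0],
    [10.0, 10.5, 11.0, 11.0],
]
vertices, faces = dsm_to_mesh(example_dsm, gsd=0.5)
```

A mesh like this places every later element (structures, vegetation, vehicles) in one shared 3D frame, which is the role the abstract assigns to the DSM.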

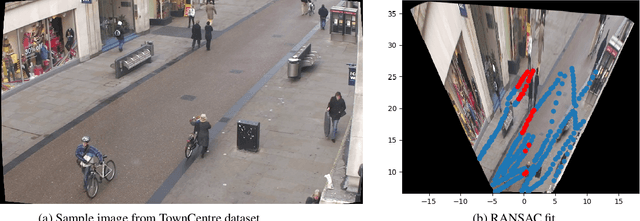

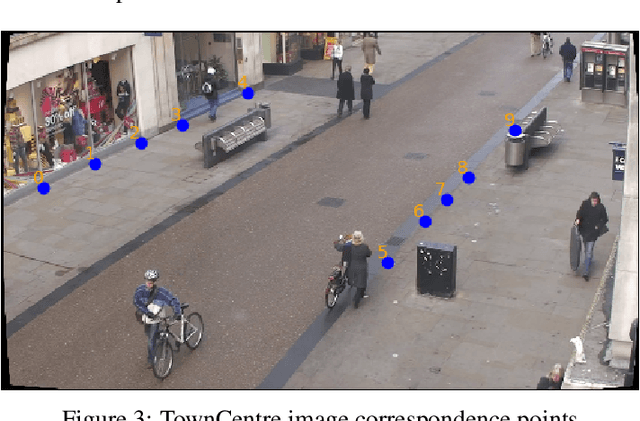

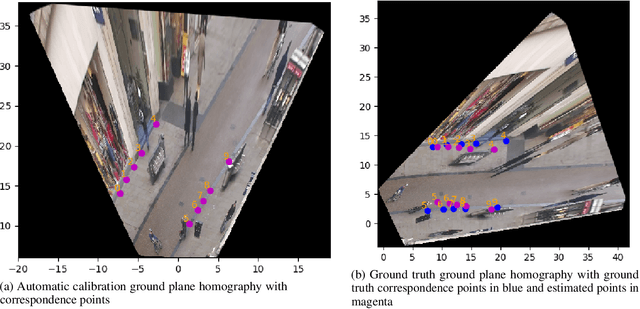

4-D Scene Alignment in Surveillance Video

Jun 06, 2019

Abstract: Designing robust activity detectors for fixed camera surveillance video requires knowledge of the 3-D scene. This paper presents an automatic camera calibration process that provides a mechanism to reason about the spatial proximity between objects at different times. It combines a CNN-based camera pose estimator with a vertical scale provided by pedestrian observations to establish the 4-D scene geometry. Unlike some previous methods, people do not need to be tracked, nor do their heads and feet need to be explicitly detected. The method is robust to individual height variations and camera parameter estimation errors.
