Rob Saunders

When Visual Evidence is Ambiguous: Pareidolia as a Diagnostic Probe for Vision Models

Mar 04, 2026

Abstract:When visual evidence is ambiguous, vision models must decide whether to interpret face-like patterns as meaningful. Face pareidolia, the perception of faces in non-face objects, provides a controlled probe of this behavior. We introduce a representation-level diagnostic framework that analyzes detection, localization, uncertainty, and bias across class, difficulty, and emotion in face pareidolia images. Under a unified protocol, we evaluate six models spanning four representational regimes: vision-language models (VLMs; CLIP-B/32, CLIP-L/14, LLaVA-1.5-7B), pure vision classification (ViT), general object detection (YOLOv8), and face detection (RetinaFace). Our analysis reveals three mechanisms of interpretation under ambiguity. VLMs exhibit semantic overactivation, systematically pulling ambiguous non-human regions toward the Human concept, with LLaVA-1.5-7B producing the strongest and most confident over-calls, especially for negative emotions. ViT instead follows an uncertainty-as-abstention strategy, remaining diffuse yet largely unbiased. Detection-based models achieve low bias through conservative priors that suppress pareidolia responses even when localization is controlled. These results show that behavior under ambiguity is governed more by representational choices than score thresholds, and that uncertainty and bias are decoupled: low uncertainty can signal either safe suppression, as in detectors, or extreme over-interpretation, as in VLMs. Pareidolia therefore provides a compact diagnostic and a source of ambiguity-aware hard negatives for probing and improving the semantic robustness of vision-language systems. Code will be released upon publication.

Pluri-perspectivism in Human-robot Co-creativity with Older Adults

Jul 10, 2025

Abstract:This position paper explores pluri-perspectivism as a core element of human creative experience and its relevance to human-robot co-creativity. We propose a layered five-dimensional model to guide the design of co-creative behaviors and the analysis of interaction dynamics. This model is based on literature and results from an interview study we conducted with 10 visual artists and 8 arts educators, examining how pluri-perspectivism supports creative practice. The findings of this study provide insight into how robots could enhance human creativity through adaptive, context-sensitive behavior, demonstrating the potential of pluri-perspectivism. This paper outlines future directions for integrating pluri-perspectivism with vision-language models (VLMs) to support context sensitivity in co-creative robots.

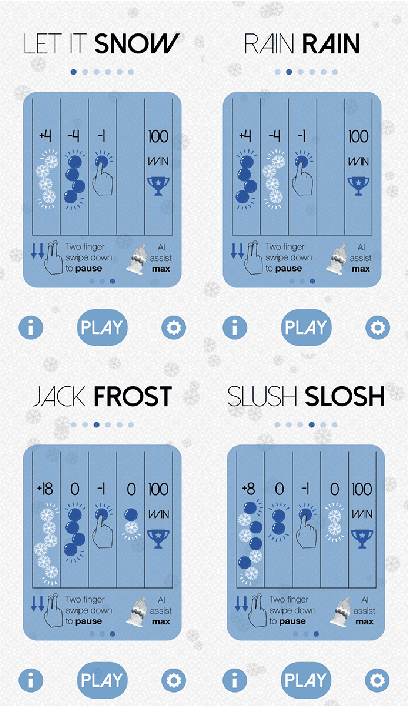

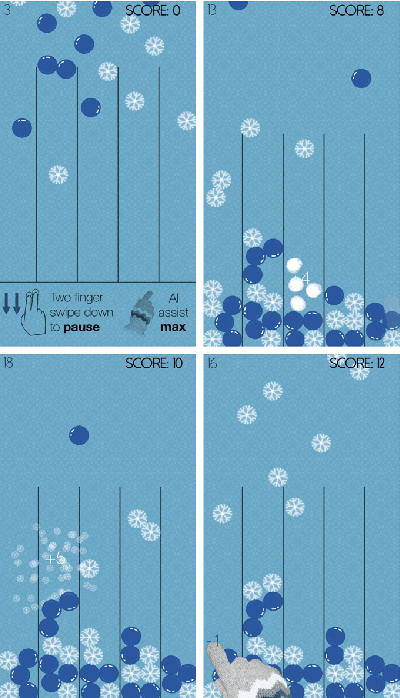

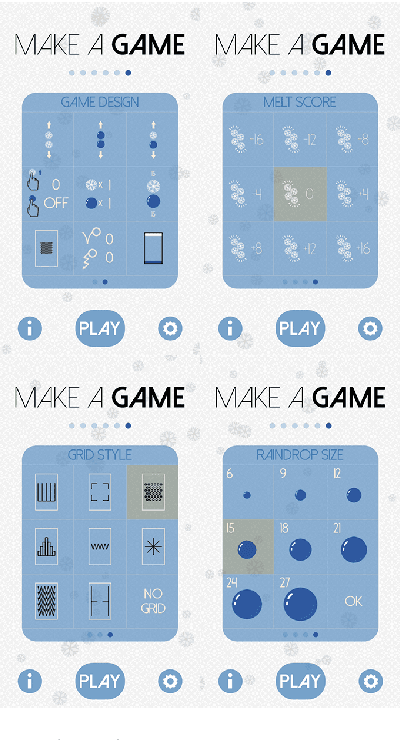

Exploring Novel Game Spaces with Fluidic Games

Mar 04, 2018

Abstract:With the growing integration of smartphones into our daily lives, and their increased ease of use, mobile games have become highly popular across all demographics. People listen to music, play games or read the news while in transit or bridging gap times. While mobile gaming is gaining popularity, mobile expression of creativity is still in its early stages. We present here a new type of mobile app -- fluidic games -- and illustrate our iterative approach to their design. This new type of app seamlessly integrates exploration of the design space into the actual user experience of playing the game, and aims to enrich the user experience. To better illustrate the game domain and our approach, we discuss one specific fluidic game, which is available as a commercial product. We also briefly discuss open challenges such as player support and how generative techniques can aid the exploration of the game space further.
