Richard Camilli

Sonar-MASt3R: Real-Time Opti-Acoustic Fusion in Turbid, Unstructured Environments

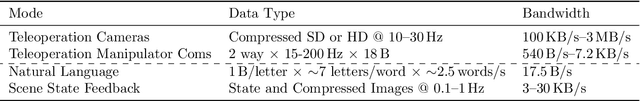

Mar 13, 2026

Abstract: Underwater intervention is an important capability in several marine domains, with numerous industrial, scientific, and defense applications. However, existing perception systems used during intervention operations rely on data from optical cameras, which limits capabilities in poor visibility or lighting conditions. Prior work has examined opti-acoustic fusion methods, which use sonar data to resolve the depth ambiguity of the camera data while using camera data to resolve the elevation angle ambiguity of the sonar data. However, existing methods cannot achieve dense 3D reconstructions in real-time, and few studies have reported results from applying these methods in a turbid environment. In this work, we propose the opti-acoustic fusion method Sonar-MASt3R, which uses MASt3R to extract dense correspondences from optical camera data in real-time and pairs it with geometric cues from an acoustic 3D reconstruction to ensure robustness in turbid conditions. Experimental results using data recorded from an opti-acoustic eye-in-hand configuration across turbidity values ranging from <0.5 to >12 NTU highlight this method's improved robustness to turbidity relative to baseline methods.
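The complementary ambiguities mentioned in the abstract can be illustrated with a small geometric sketch. This is not the Sonar-MASt3R pipeline; it only shows, under simplifying assumptions, how a camera bearing and a sonar range together pin down a 3D point. The function name `fuse_ray_and_range`, the shared camera frame, and the known sonar offset are all hypothetical choices made for illustration.

```python
import math


def fuse_ray_and_range(ray_dir, sonar_origin, sonar_range):
    """Intersect a camera ray with a sonar range sphere (illustrative only).

    ray_dir      -- direction of the camera ray through a pixel (camera frame)
    sonar_origin -- assumed known sonar position in the camera frame
    sonar_range  -- range to the target measured by the sonar

    The camera fixes the bearing but not the depth; the sonar fixes the
    range but not the elevation. The point s * d on the ray must satisfy
    |s * d - t| = r, which is a quadratic in the depth s.
    """
    norm = math.sqrt(sum(c * c for c in ray_dir))
    d = [c / norm for c in ray_dir]                      # unit ray direction
    b = sum(di * ti for di, ti in zip(d, sonar_origin))  # d . t
    c = sum(ti * ti for ti in sonar_origin) - sonar_range ** 2
    disc = b * b - c
    if disc < 0:
        return None  # the ray never reaches the range sphere
    # Assumption: take the farther root, which is the only positive one
    # when the camera lies inside the range sphere (r > |t|).
    s = b + math.sqrt(disc)
    if s <= 0:
        return None  # intersection lies behind the camera
    return [s * di for di in d]


if __name__ == "__main__":
    # Sonar mounted 0.3 m to the side of the camera, target range 0.5 m.
    print(fuse_ray_and_range((0.0, 0.0, 1.0), (0.3, 0.0, 0.0), 0.5))
```

A dense method would apply this kind of constraint across many correspondences and handle noise and calibration error, which this sketch ignores.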

Enhancing scientific exploration of the deep sea through shared autonomy in remote manipulation

Sep 15, 2023

Abstract: Shared autonomy enables novice remote users to conduct deep-ocean science operations with robotic manipulators.

Towards Automated Sample Collection and Return in Extreme Underwater Environments

Dec 30, 2021

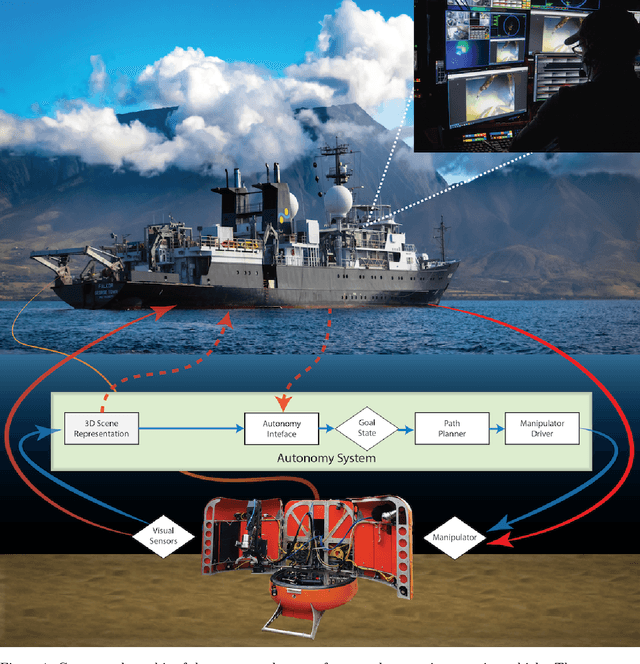

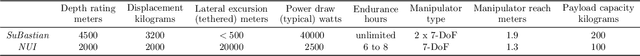

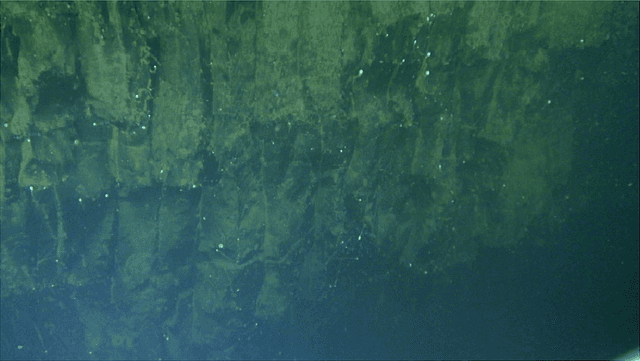

Abstract: In this report, we present the system design, operational strategy, and results of coordinated multi-vehicle field demonstrations of autonomous marine robotic technologies in search-for-life missions within the Pacific shelf margin of Costa Rica and the Santorini-Kolumbo caldera complex, which serve as analogs to environments that may exist in oceans beyond Earth. This report focuses on the automation of ROV manipulator operations for targeted biological sample-collection-and-return from the seafloor. In the context of future extraterrestrial exploration missions to ocean worlds, an ROV is an analog to a planetary lander, which must be capable of high-level autonomy. Our field trials involve two underwater vehicles, the SuBastian ROV and the Nereid Under Ice (NUI) hybrid ROV, for mixed-initiative (i.e., teleoperated or autonomous) missions, both equipped with 7-DoF hydraulic manipulators. We describe an adaptable, hardware-independent computer vision architecture that enables high-level automated manipulation. The vision system provides a 3D understanding of the workspace to inform manipulator motion planning in complex unstructured environments. We demonstrate the effectiveness of the vision system and control framework through field trials in increasingly challenging environments, including the automated collection and return of biological samples from within the active undersea volcano, Kolumbo. Based on our experiences in the field, we discuss the performance of our system and identify promising directions for future research.

Hybrid Visual SLAM for Underwater Vehicle Manipulator Systems

Dec 07, 2021

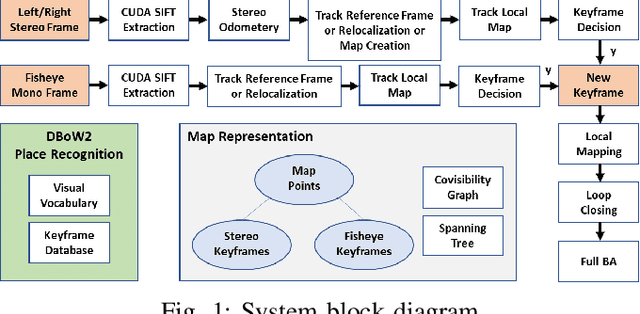

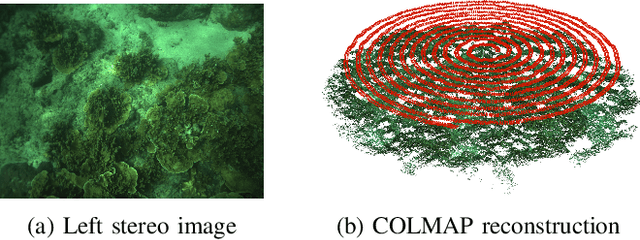

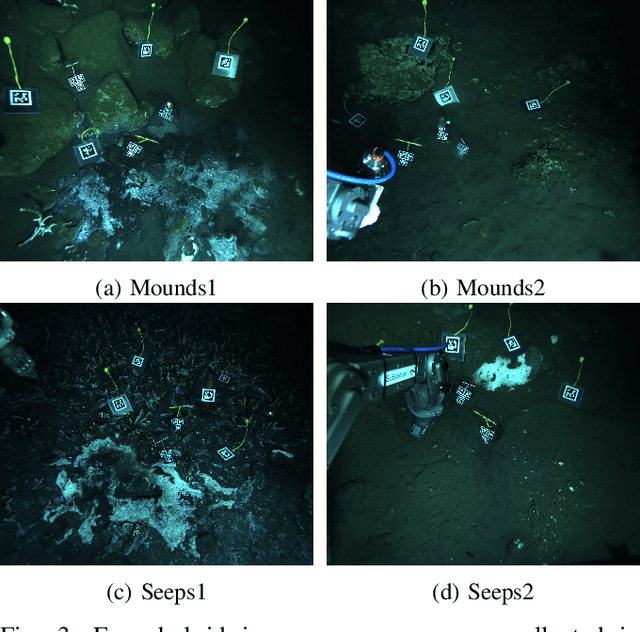

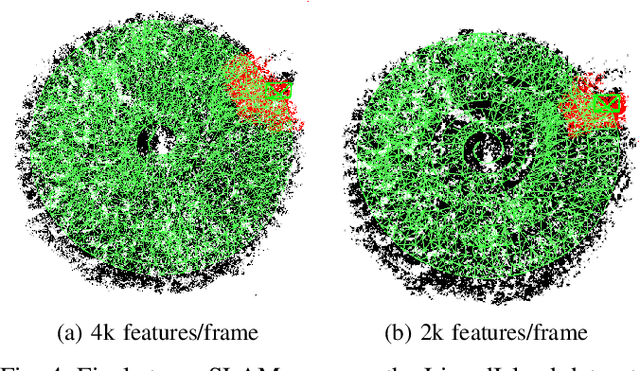

Abstract: This paper presents a novel visual scene mapping method for underwater vehicle manipulator systems (UVMSs), with specific emphasis on robust mapping in natural seafloor environments. Prior methods for underwater scene mapping typically process the data offline, while existing underwater SLAM methods that run in real-time are generally focused on localization and not mapping. Our method uses GPU-accelerated SIFT features in a graph optimization framework to build a feature map. The map scale is constrained by features from a vehicle-mounted stereo camera, and we exploit the dynamic positioning capability of the manipulator system by fusing features from a wrist-mounted fisheye camera into the map to extend it beyond the limited viewpoint of the vehicle-mounted cameras. Our hybrid SLAM method is evaluated on challenging image sequences collected with a UVMS in natural deep seafloor environments of the Costa Rican continental shelf margin, and we also evaluate the stereo-only mode on a shallow reef survey dataset. Results on these datasets demonstrate the high accuracy of our system and its suitability for operating in diverse and natural seafloor environments.
