Qunming Wang

Crowd Detection Using Very-Fine-Resolution Satellite Imagery

Apr 28, 2025

Abstract: Accurate crowd detection (CD) is critical for public safety and historical pattern analysis, yet existing methods relying on ground and aerial imagery suffer from limited spatio-temporal coverage. The development of very-fine-resolution (VFR) satellite sensor imagery (e.g., ~0.3 m spatial resolution) provides unprecedented opportunities for large-scale crowd activity analysis, but it has never been considered for this task. To address this gap, we propose CrowdSat-Net, a novel point-based convolutional neural network, which features two innovative components: a Dual-Context Progressive Attention Network (DCPAN) that improves the feature representation of individuals by aggregating scene context and local individual characteristics, and a High-Frequency Guided Deformable Upsampler (HFGDU) that recovers high-frequency information during upsampling through frequency-domain guided deformable convolutions. To validate the effectiveness of CrowdSat-Net, we developed CrowdSat, the first VFR satellite imagery dataset designed specifically for CD tasks, comprising over 120k manually labeled individuals from multi-source satellite platforms (Beijing-3N, Jilin-1 Gaofen-04A and Google Earth) across China. In the experiments, CrowdSat-Net was compared with five state-of-the-art point-based CD methods (originally designed for ground or aerial imagery) using CrowdSat and achieved the largest F1-score of 66.12% and Precision of 73.23%, surpassing the second-best method by 1.71% and 2.42%, respectively. Extensive ablation experiments validated the importance of the DCPAN and HFGDU modules, and cross-regional evaluation demonstrated the spatial generalizability of CrowdSat-Net. This research advances CD capability by providing both a newly developed network architecture for CD and a pioneering benchmark dataset to facilitate future CD development.
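The abstract reports Precision and F1-score for point-based detection but does not spell out the matching protocol. As a rough illustration only, the sketch below shows one common way such scores can be computed: predicted points are greedily matched one-to-one to labeled points within a distance threshold. The function names, the greedy matching rule, and the `max_dist` threshold (in pixels) are all assumptions made for this example, not details taken from the paper.

```python
import math

def match_points(pred, gt, max_dist):
    """Greedy one-to-one matching (an assumed rule): closest pairs first."""
    candidates = []
    for i, (px, py) in enumerate(pred):
        for j, (gx, gy) in enumerate(gt):
            d = math.hypot(px - gx, py - gy)
            if d <= max_dist:
                candidates.append((d, i, j))
    candidates.sort()  # shortest distances are matched first
    used_pred, used_gt = set(), set()
    true_positives = 0
    for _, i, j in candidates:
        if i not in used_pred and j not in used_gt:
            used_pred.add(i)
            used_gt.add(j)
            true_positives += 1
    return true_positives

def precision_recall_f1(pred, gt, max_dist=3.0):
    """Return (precision, recall, F1) for predicted vs. labeled points."""
    tp = match_points(pred, gt, max_dist)
    precision = tp / len(pred) if pred else 0.0
    recall = tp / len(gt) if gt else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom > 0 else 0.0
    return precision, recall, f1

# Hypothetical coordinates, for illustration only.
predicted = [(0, 0), (10, 10), (50, 50)]
labeled = [(1, 0), (10, 12), (30, 30), (31, 31)]
print(precision_recall_f1(predicted, labeled, max_dist=3.0))
```

Greedy matching is simple but not optimal; an evaluation protocol could equally use an optimal assignment, which may change the scores slightly in dense scenes.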

Multisource and Multitemporal Data Fusion in Remote Sensing

Dec 19, 2018

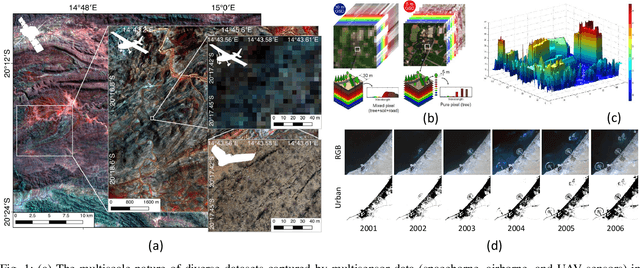

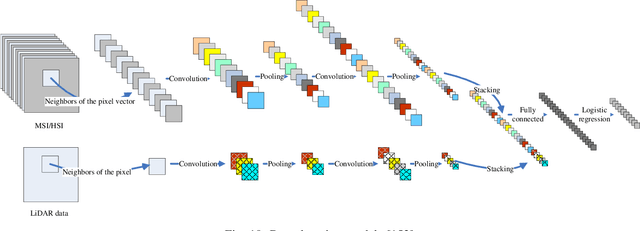

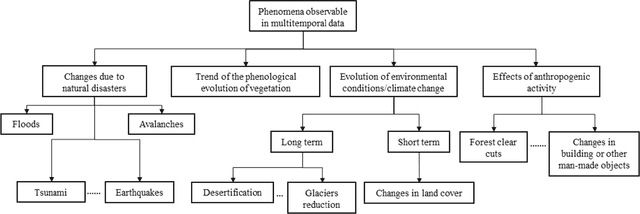

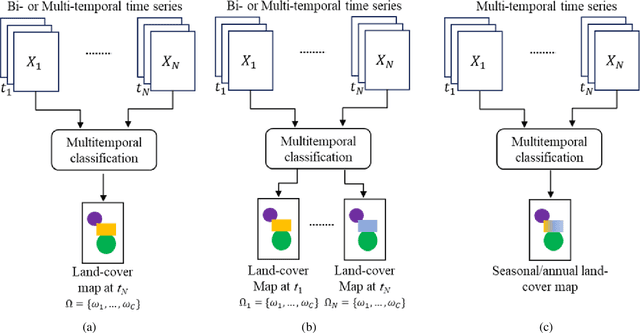

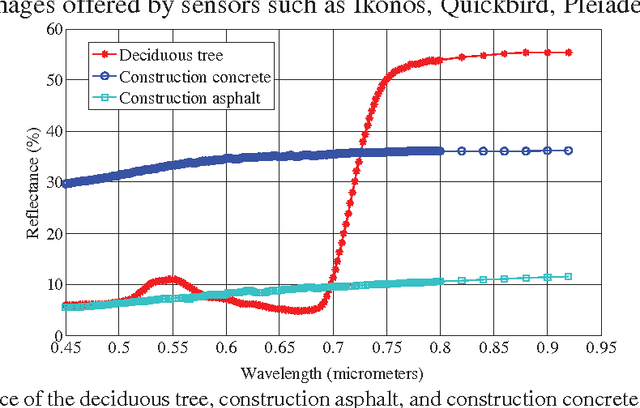

Abstract: The recent sharp increase in the availability of data captured by different sensors, combined with their considerably heterogeneous nature, poses a serious challenge for the effective and efficient processing of remotely sensed data. Such an increase in remote sensing and ancillary datasets, however, opens up the possibility of utilizing multimodal datasets in a joint manner to further improve the performance of the processing approaches with respect to the application at hand. Multisource data fusion has, therefore, received enormous attention from researchers worldwide for a wide variety of applications. Moreover, thanks to the revisit capability of several spaceborne sensors, the integration of the temporal information with the spatial and/or spectral/backscattering information of the remotely sensed data is possible and helps to move from a representation of 2D/3D data to 4D data structures, where the time variable adds new information as well as challenges for the information extraction algorithms. A large body of research is dedicated to multisource and multitemporal data fusion, but the methods for fusing different modalities have developed along different paths within each research community. This paper brings together the advances of multisource and multitemporal data fusion approaches with respect to different research communities and provides a thorough and discipline-specific starting point for researchers at different levels (i.e., students, researchers, and senior researchers) willing to conduct novel investigations on this challenging topic by supplying sufficient detail and references.

Extracting man-made objects from remote sensing images via fast level set evolutions

Sep 26, 2014

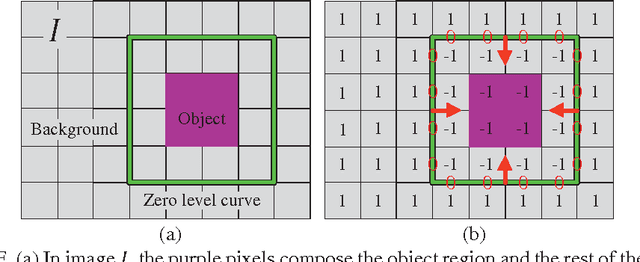

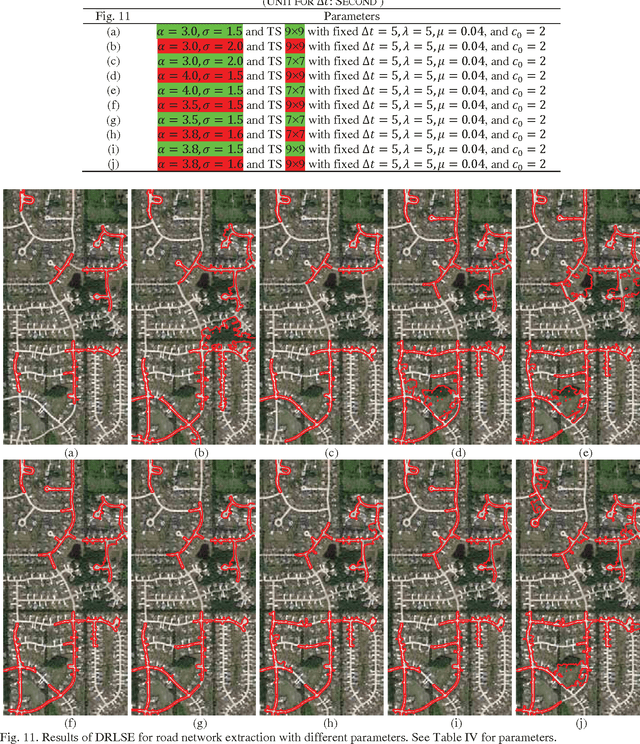

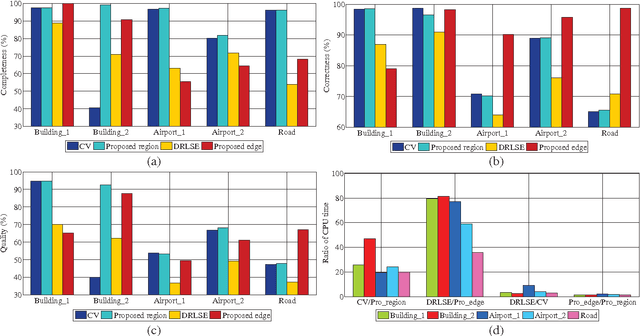

Abstract: Object extraction from remote sensing images has long been an intensive research topic in the field of surveying and mapping. Most existing methods are devoted to handling just one type of object, and little attention has been paid to improving computational efficiency. In recent years, level set evolution (LSE) has been shown to be very promising for object extraction in the image processing and computer vision community because it can handle topological changes automatically while achieving high accuracy. However, the application of state-of-the-art LSEs is compromised by laborious parameter tuning and expensive computation. In this paper, we propose two fast LSEs for man-made object extraction from high spatial resolution remote sensing images. The traditional mean curvature-based regularization term is replaced by a Gaussian kernel, a substitution that is mathematically sound. As a result, a larger time step can be used in the numerical scheme to expedite the proposed LSEs. The proposed LSEs are significantly faster than existing methods. Most importantly, they involve far fewer parameters while achieving better performance. The advantages of the proposed LSEs over other state-of-the-art approaches have been verified by a range of experiments.

* This paper includes 31 pages and 12 figures
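To make the idea of Gaussian-kernel regularization concrete, here is a minimal sketch of a level set loop in which the curvature term is dropped and the level set function is instead smoothed with a Gaussian kernel after every update. This is not the paper's algorithm: the two-region mean-intensity force, the binarization of `phi` before smoothing, and all names and parameter values (`evolve`, `dt`, `sigma`) are assumptions chosen to keep the example short.

```python
import numpy as np

def gaussian_kernel_1d(sigma):
    """Normalized 1D Gaussian kernel truncated at about 3 sigma."""
    radius = max(1, int(3 * sigma))
    x = np.arange(-radius, radius + 1, dtype=float)
    k = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return k / k.sum()

def gaussian_smooth(phi, sigma):
    """Separable Gaussian smoothing with edge padding (output keeps phi's shape)."""
    k = gaussian_kernel_1d(sigma)
    r = len(k) // 2
    padded = np.pad(phi, r, mode="edge")
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)

def evolve(image, phi, steps=10, dt=5.0, sigma=1.0):
    """Evolve phi; the object is the region where phi > 0."""
    for _ in range(steps):
        inside = phi > 0
        if inside.all() or not inside.any():
            break  # contour vanished or filled the image
        c_in = image[inside].mean()
        c_out = image[~inside].mean()
        # Assumed data term: push each pixel toward the region whose mean it resembles.
        force = (image - c_out) ** 2 - (image - c_in) ** 2
        phi = phi + dt * force
        # Assumed regularization: binarize, then smooth with a Gaussian kernel
        # in place of a mean-curvature term.
        phi = np.where(phi > 0, 1.0, -1.0)
        phi = gaussian_smooth(phi, sigma)
    return phi

# Synthetic test image: a bright square on a dark background.
image = np.zeros((32, 32))
image[10:22, 10:22] = 1.0
phi0 = -np.ones((32, 32))
phi0[8:24, 8:24] = 1.0  # initial contour slightly larger than the object
mask = evolve(image, phi0) > 0
```

Because smoothing replaces the curvature computation, each iteration costs only two 1D convolutions, and the sketch tolerates a fairly large `dt`. Note that the smoothing rounds sharp corners slightly, so the final mask need not match a square object pixel for pixel.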
