Pongsate Tangseng

Toward Explainable Fashion Recommendation

Jan 15, 2019

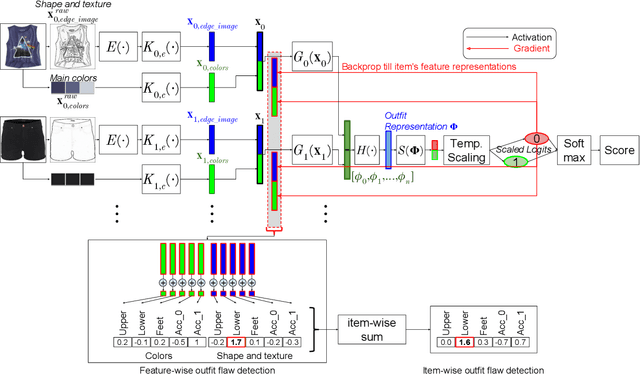

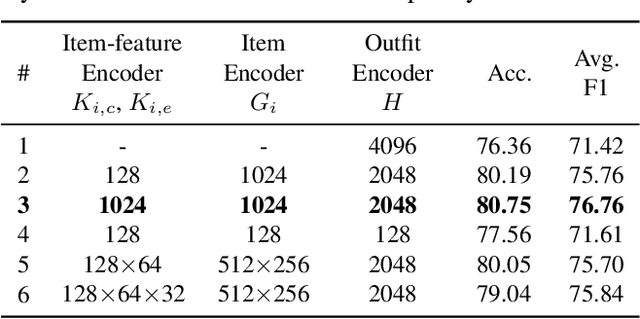

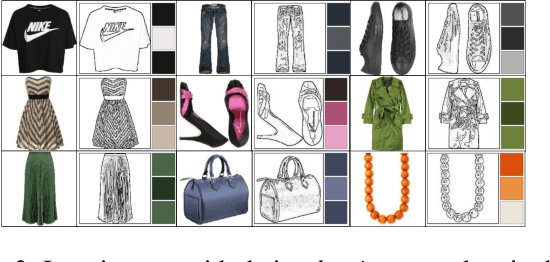

Abstract:Many studies have been conducted so far to build systems for recommending fashion items and outfits. Although they achieve good performances in their respective tasks, most of them cannot explain their judgments to the users, which compromises their usefulness. Toward explainable fashion recommendation, this study proposes a system that is able not only to provide a goodness score for an outfit but also to explain the score by providing reason behind it. For this purpose, we propose a method for quantifying how influential each feature of each item is to the score. Using this influence value, we can identify which item and what feature make the outfit good or bad. We represent the image of each item with a combination of human-interpretable features, and thereby the identification of the most influential item-feature pair gives useful explanation of the output score. To evaluate the performance of this approach, we design an experiment that can be performed without human annotation; we replace a single item-feature pair in an outfit so that the score will decrease, and then we test if the proposed method can detect the replaced item correctly using the above influence values. The experimental results show that the proposed method can accurately detect bad items in outfits lowering their scores.

Recommending Outfits from Personal Closet

Apr 26, 2018

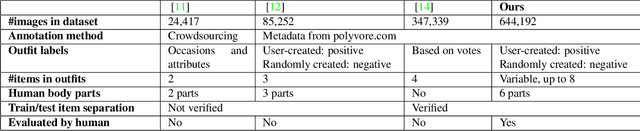

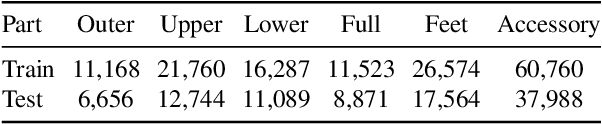

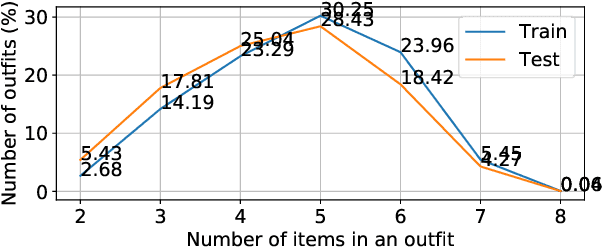

Abstract:We consider grading a fashion outfit for recommendation, where we assume that users have a closet of items and we aim at producing a score for an arbitrary combination of items in the closet. The challenge in outfit grading is that the input to the system is a bag of item pictures that are unordered and vary in size. We build a deep neural network-based system that can take variable-length items and predict a score. We collect a large number of outfits from a popular fashion sharing website, Polyvore, and evaluate the performance of our grading system. We compare our model with a random-choice baseline, both on the traditional classification evaluation and on people's judgment using a crowdsourcing platform. With over 84% in classification accuracy and 91% matching ratio to human annotators, our model can reliably grade the quality of an outfit. We also build an outfit recommender on top of our grader to demonstrate the practical application of our model for a personal closet assistant.

Looking at Outfit to Parse Clothing

Mar 04, 2017

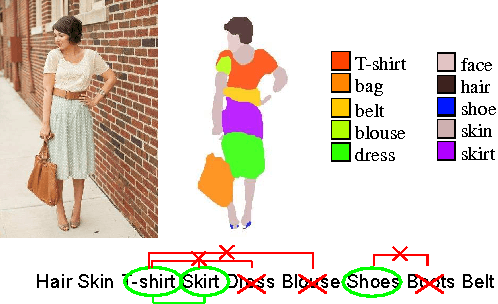

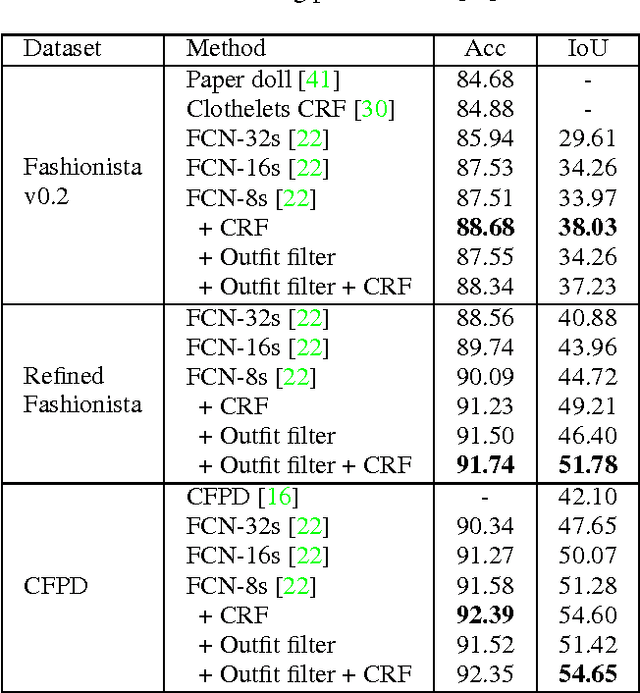

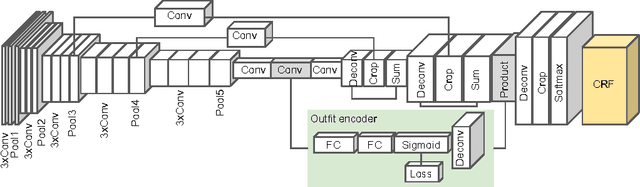

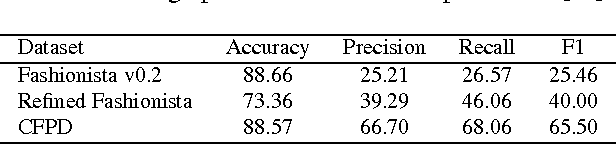

Abstract:This paper extends fully-convolutional neural networks (FCN) for the clothing parsing problem. Clothing parsing requires higher-level knowledge on clothing semantics and contextual cues to disambiguate fine-grained categories. We extend FCN architecture with a side-branch network which we refer outfit encoder to predict a consistent set of clothing labels to encourage combinatorial preference, and with conditional random field (CRF) to explicitly consider coherent label assignment to the given image. The empirical results using Fashionista and CFPD datasets show that our model achieves state-of-the-art performance in clothing parsing, without additional supervision during training. We also study the qualitative influence of annotation on the current clothing parsing benchmarks, with our Web-based tool for multi-scale pixel-wise annotation and manual refinement effort to the Fashionista dataset. Finally, we show that the image representation of the outfit encoder is useful for dress-up image retrieval application.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge