Philippa Ryan

Adelard LLP

Causal Explanations from the Geometric Properties of ReLU Neural Networks

May 11, 2026

Abstract:Neural networks have proved an effective means of learning control policies for autonomous systems, but these learned policies are difficult to understand due to the black-box nature of neural networks. This lack of interpretability makes safety assurance for such autonomous systems challenging. The fields of eXplainable Artificial Intelligence (XAI) and eXplainable Reinforcement Learning (XRL) aim to interpret the decision making processes of neural networks and autonomous agents, respectively. In particular, work on causal explanations aims to provide "why" and "why not" explanations for why a model made a given decision. However, most of the work on explainability to date utilises a distilled version of the original model. While this distilled policy is interpretable, it necessarily degrades in performance significantly when compared to the original model, and is not guaranteed to be an accurate reflection of the decision making processes in the original model and as such cannot be used to guarantee its safety. Recent work on understanding the geometry of ReLU neural networks shows that a ReLU network corresponds to a piecewise linear function divided into regions defined by an n-dimensional convex polytope. Through this lens, a neural network can be understood as dividing the input space into distinct regions which apply a single linear function for each output neuron. We show that this geometric representation can be used to generate causal explanations for the network's behaviour similar to previous work, but which extracts rules directly from the geometry of Neural Networks with the ReLU activation function, and is therefore an accurate reflection of the network's behaviour.
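The geometric view described in this abstract can be illustrated with a minimal sketch. It is not the authors' method: the tiny network, its weights, and the helper names (`forward`, `activation_pattern`, `local_affine_map`, `region_constraints`) are all hypothetical and chosen only for illustration. The sketch shows that the on/off pattern of the hidden ReLU units at an input identifies a linear region, that the region is bounded by one half-space constraint per hidden unit (hence a convex polytope), and that inside the region the network reduces to a single affine function.

```python
# Hypothetical 2-input, 3-hidden-unit, 1-output ReLU network (weights are made up).
W1 = [[1.0, -1.0], [0.5, 1.0], [-1.0, 0.5]]
b1 = [0.0, -0.5, 0.25]
W2 = [[1.0, -2.0, 0.5]]
b2 = [0.1]


def pre_activations(x):
    """Hidden-layer values before the ReLU is applied."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(W1, b1)]


def forward(x):
    """Ordinary forward pass: affine layer, ReLU, affine layer."""
    hidden = [max(0.0, p) for p in pre_activations(x)]
    return [sum(w * h for w, h in zip(row, hidden)) + b for row, b in zip(W2, b2)]


def activation_pattern(x):
    """Which hidden units are active at x; the pattern identifies the linear region."""
    return tuple(p > 0.0 for p in pre_activations(x))


def local_affine_map(pattern):
    """Return (A, c) with network(x) = A x + c for every x sharing this pattern."""
    hidden_units = range(len(W1))
    # Inactive units contribute nothing, so only active units are composed
    # with the output layer.
    A = [[sum(W2[o][h] * W1[h][i] for h in hidden_units if pattern[h])
          for i in range(len(W1[0]))] for o in range(len(W2))]
    c = [sum(W2[o][h] * b1[h] for h in hidden_units if pattern[h]) + b2[o]
         for o in range(len(W2))]
    return A, c


def region_constraints(pattern):
    """One half-space per hidden unit: w.x + b > 0 if active, w.x + b <= 0 otherwise."""
    return [(W1[h], b1[h], pattern[h]) for h in range(len(W1))]


x = [1.0, 0.5]
pattern = activation_pattern(x)
A, c = local_affine_map(pattern)
linear_out = [sum(a * xi for a, xi in zip(row, x)) + ci for row, ci in zip(A, c)]
print(pattern, A, c, forward(x), linear_out)
```

Under these assumptions, the rows of `A` say how each input moves the output anywhere inside the region, which is the kind of information a "why" explanation can draw on, while the constraints returned by `region_constraints` indicate which boundaries an input would have to cross for a different linear rule to apply.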

Safety Case Templates for Autonomous Systems

Jan 29, 2021

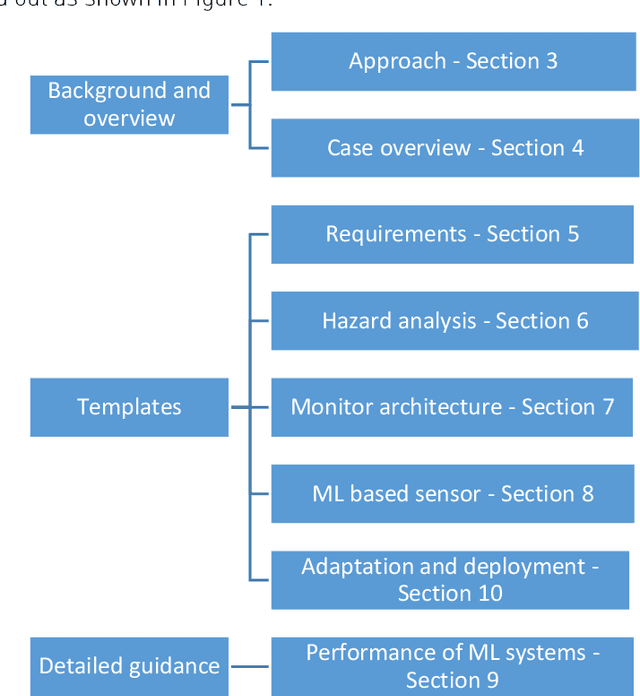

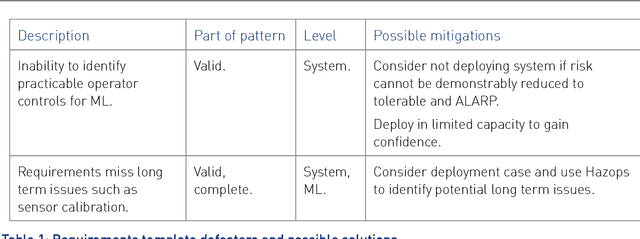

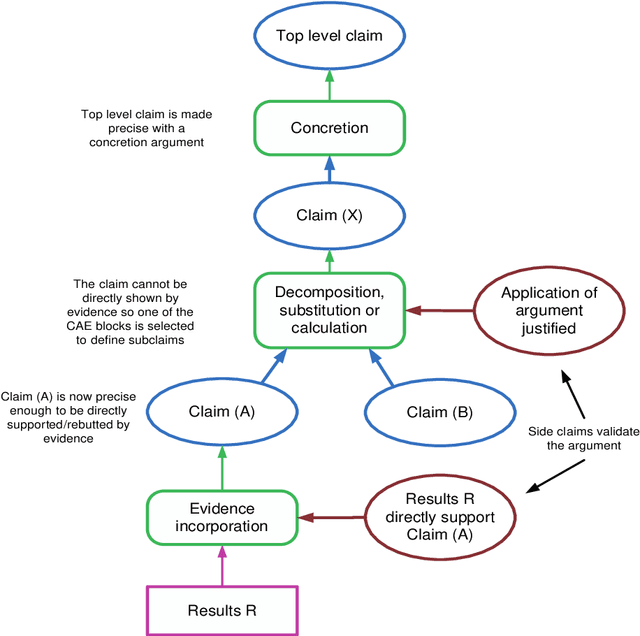

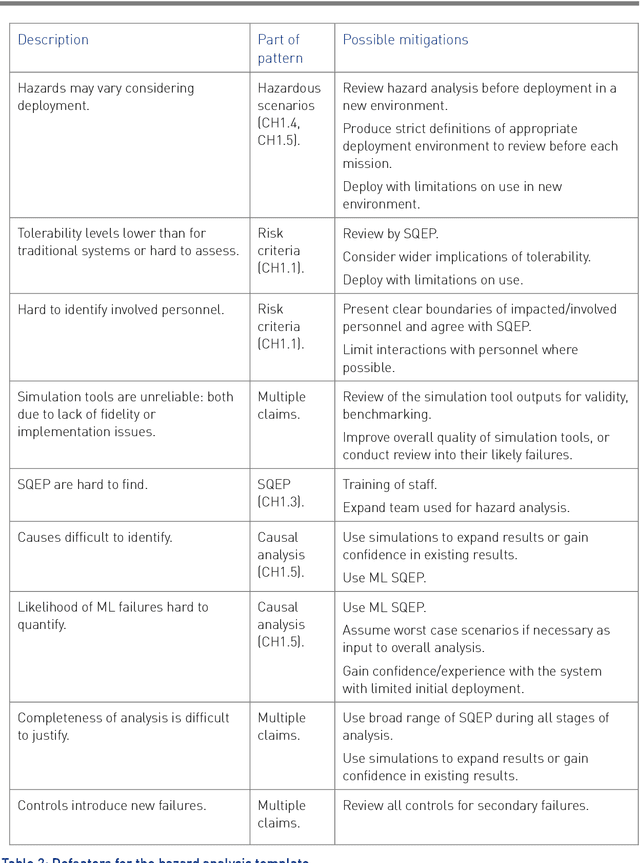

Abstract:This report documents safety assurance argument templates to support the deployment and operation of autonomous systems that include machine learning (ML) components. The document presents example safety argument templates covering: the development of safety requirements, hazard analysis, a safety monitor architecture for an autonomous system including at least one ML element, a component with ML and the adaptation and change of the system over time. The report also presents generic templates for argument defeaters and evidence confidence that can be used to strengthen, review, and adapt the templates as necessary. This Interim Report is made available to get feedback on the approach and on the templates. This work is being sponsored by the UK Dstl under the R-cloud framework.

Towards Identifying and closing Gaps in Assurance of autonomous Road vehicleS -- a collection of Technical Notes Part 2

Feb 28, 2020

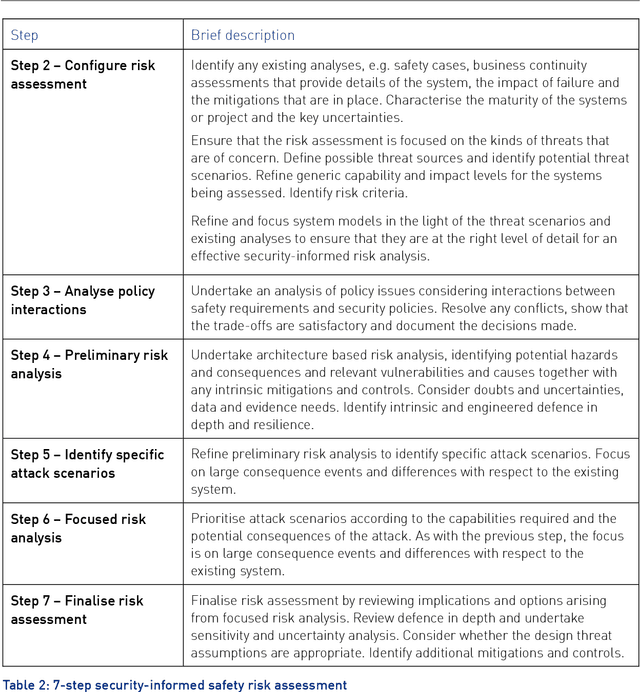

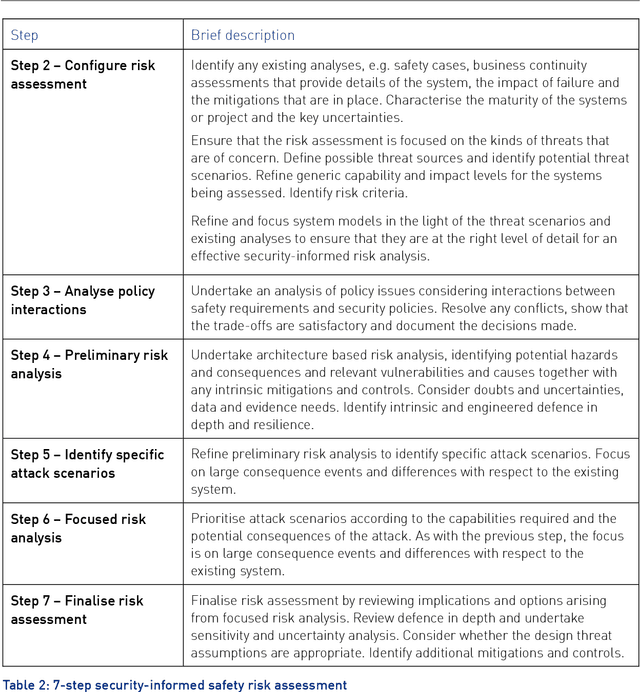

Abstract:This report provides an introduction and overview of the Technical Topic Notes (TTNs) produced in the Towards Identifying and closing Gaps in Assurance of autonomous Road vehicleS (Tigars) project. These notes aim to support the development and evaluation of autonomous vehicles. Part 1 addresses: Assurance-overview and issues, Resilience and Safety Requirements, Open Systems Perspective and Formal Verification and Static Analysis of ML Systems. This report is Part 2 and discusses: Simulation and Dynamic Testing, Defence in Depth and Diversity, Security-Informed Safety Analysis, Standards and Guidelines.

Towards Identifying and closing Gaps in Assurance of autonomous Road vehicleS -- a collection of Technical Notes Part 1

Feb 28, 2020

Abstract:This report provides an introduction and overview of the Technical Topic Notes (TTNs) produced in the Towards Identifying and closing Gaps in Assurance of autonomous Road vehicleS (Tigars) project. These notes aim to support the development and evaluation of autonomous vehicles. Part 1 addresses: Assurance-overview and issues, Resilience and Safety Requirements, Open Systems Perspective and Formal Verification and Static Analysis of ML Systems. Part 2: Simulation and Dynamic Testing, Defence in Depth and Diversity, Security-Informed Safety Analysis, Standards and Guidelines.
