Pedro Batista

A Unified Family-optimal Solution to Covariance Intersection Problems with Semidefinite Programming

Mar 20, 2026

Abstract: Covariance intersection (CI) methods provide a principled approach to fusing estimates with unknown cross-correlations by minimizing a worst-case measure of uncertainty that is consistent with the available information. This paper introduces a generalized CI framework, called overlapping covariance intersection (OCI), which unifies several existing CI formulations within a single optimization-based framework. This unification enables the characterization of family-optimal solutions for multiple CI variants, including standard CI and split covariance intersection (SCI), as solutions to a semidefinite program, for which efficient off-the-shelf solvers are available. When specialized to the corresponding settings, the proposed family-optimal solutions recover the state-of-the-art family-optimal solutions previously reported for CI and SCI. The resulting formulation facilitates the systematic design and real-time implementation of CI-based fusion methods in large-scale distributed estimation problems, such as cooperative localization.
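The classic two-estimate CI rule, which the OCI framework above generalizes, can be sketched as below. This is a minimal illustration of standard CI only, not the paper's SDP-based family-optimal solution. The function name `covariance_intersection`, the trace criterion and the grid search over the weight `omega` are assumptions chosen for clarity.

```python
import numpy as np


def covariance_intersection(a, A, b, B, n_grid=1001):
    """Fuse estimates (a, A) and (b, B) whose cross-correlation is unknown.

    Standard two-estimate covariance intersection: the fused information
    matrix is omega * inv(A) + (1 - omega) * inv(B), and omega in [0, 1] is
    chosen here (an illustrative assumption) by a dense grid search that
    minimizes trace(C).
    """
    A_inv = np.linalg.inv(A)
    B_inv = np.linalg.inv(B)

    best_cost, best_omega, best_C = np.inf, None, None
    for omega in np.linspace(0.0, 1.0, n_grid):
        # Fused covariance for this candidate weight.
        C = np.linalg.inv(omega * A_inv + (1.0 - omega) * B_inv)
        cost = np.trace(C)
        if cost < best_cost:
            best_cost, best_omega, best_C = cost, omega, C

    # Fused mean uses the same convex combination of information vectors.
    c = best_C @ (best_omega * A_inv @ a + (1.0 - best_omega) * B_inv @ b)
    return c, best_C, best_omega


if __name__ == "__main__":
    # Two 2-D estimates: one is precise along x, the other along y.
    a, A = np.array([1.0, 0.0]), np.diag([1.0, 4.0])
    b, B = np.array([0.0, 1.0]), np.diag([4.0, 1.0])
    c, C, omega = covariance_intersection(a, A, b, B)
    # By symmetry omega should be 0.5, giving C = diag(1.6, 1.6), c = [0.8, 0.8].
    print(omega, c, np.diag(C))
```

Both the trace and the log-determinant of the fused covariance are convex in `omega`, so a bisection or golden-section search could replace the grid. The grid is used here only to keep the sketch transparent.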

Overlapping Covariance Intersection: Fusion with Partial Structural Knowledge of Correlation from Multiple Sources

Mar 17, 2026

Abstract: Emerging large-scale engineering systems rely on distributed fusion for situational awareness, where agents combine noisy local sensor measurements with exchanged information to obtain fused estimates. However, at the sheer scale of these systems, tracking cross-correlations becomes infeasible, preventing the use of optimal filters. Covariance intersection (CI) methods address fusion problems with unknown correlations by minimizing worst-case uncertainty based on available information. Existing CI extensions exploit limited correlation knowledge but cannot incorporate structural knowledge of correlation from multiple sources, which naturally arises in distributed fusion problems. This paper introduces Overlapping Covariance Intersection (OCI), a generalized CI framework that accommodates this novel information structure. We formalize the OCI problem and establish necessary and sufficient conditions for feasibility. We show that a family-optimal solution can be computed efficiently via semidefinite programming, enabling real-time implementation. The proposed tools enable improved fusion performance for large-scale systems while retaining robustness to unknown correlations.

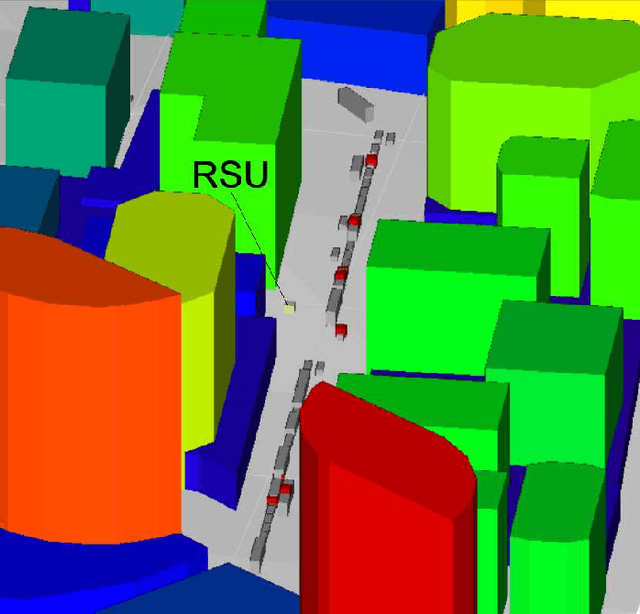

Accelerating Ray Tracing-Based Wireless Channels Generation for Real-Time Network Digital Twins

Apr 13, 2025

Abstract: Ray tracing (RT) simulation is a widely used approach for modeling wireless channels in applications such as network digital twins. However, the computational cost of executing RT scales with factors such as the level of detail of the adopted 3D scenario. This work proposes RT pre-processing algorithms that aim to simplify the 3D scene without distorting the channel. It also proposes a post-processing method that augments a set of RT results to achieve an improved time resolution. These methods enable the use of RT in applications that rely on a detailed and photorealistic 3D scenario, while generating consistent wireless channels over time. Our simulation results with different 3D scenarios demonstrate that it is possible to reduce the simulation time by more than 50% without compromising the accuracy of the RT parameters.

CAVIAR: Co-simulation of 6G Communications, 3D Scenarios and AI for Digital Twins

Jan 06, 2024

Abstract: Digital twins are an important technology for advancing mobile communications, especially in use cases that require simultaneously simulating the wireless channel, 3D scenes and machine learning. Aiming to meet this demand, this work describes a modular co-simulation methodology called CAVIAR. Here, CAVIAR is upgraded to support a message-passing library and to enable the virtual counterpart of a digital twin system using different 6G-related simulators. The main contributions of this work are the detailed description of different CAVIAR architectures, the implementation of this methodology to assess a 6G use case consisting of a UAV-based search and rescue (SAR) mission, and the generation of benchmarking data on computational resource usage. To execute the SAR co-simulation, we adopt five open-source solutions: the physical and link level network simulator Sionna, the autonomous vehicle simulator AirSim, scikit-learn for training a decision tree for MIMO beam selection, Yolov8 for detecting rescue targets, and NATS for message passing. Results for the implemented SAR use case suggest that the methodology can run on a single machine, with CPU processing and GPU memory being the most demanded resources.

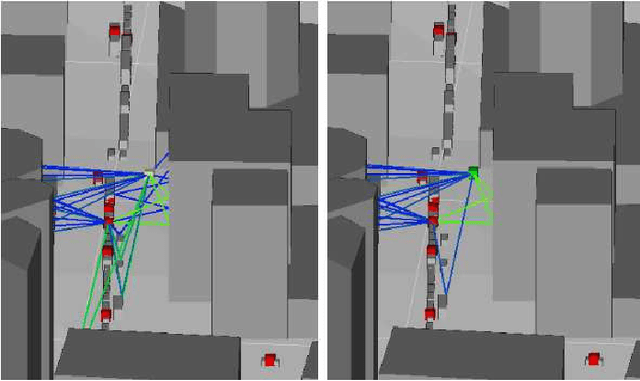

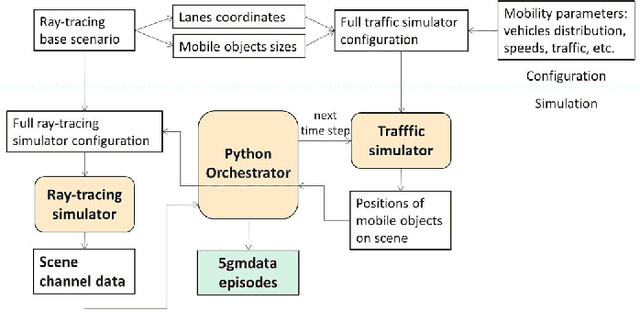

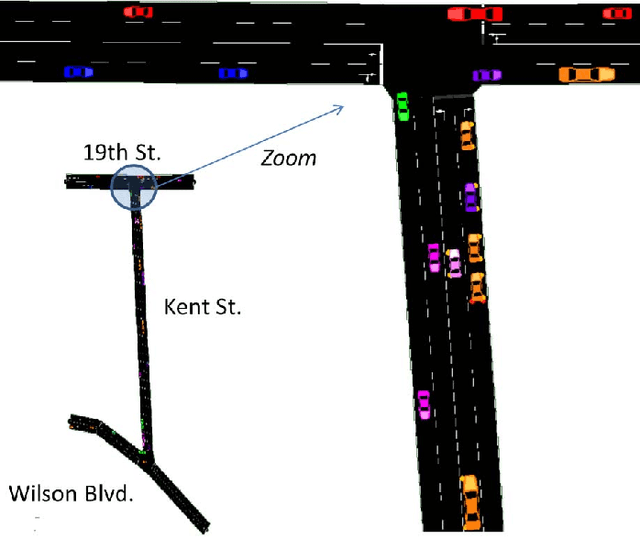

5G MIMO Data for Machine Learning: Application to Beam-Selection using Deep Learning

Jun 09, 2021

Abstract: The increasing complexity of configuring cellular networks suggests that machine learning (ML) can effectively improve 5G technologies. Deep learning has proven successful in ML tasks such as speech processing and computer vision, with a performance that scales with the amount of available data. The lack of large datasets hinders the growth of deep learning applications in wireless communications. This paper presents a methodology that combines a vehicle traffic simulator with a ray-tracing simulator to generate channel realizations representing 5G scenarios with mobility of both transceivers and objects. The paper then describes a specific dataset for investigating beam-selection techniques in vehicle-to-infrastructure communications using millimeter waves. Experiments using deep learning in classification, regression and reinforcement learning problems illustrate the use of datasets generated with the proposed methodology.