Paul Schwerdtner

Hankel Singular Value Regularization for Highly Compressible State Space Models

Oct 27, 2025Abstract:Deep neural networks using state space models as layers are well suited for long-range sequence tasks but can be challenging to compress after training. We use that regularizing the sum of Hankel singular values of state space models leads to a fast decay of these singular values and thus to compressible models. To make the proposed Hankel singular value regularization scalable, we develop an algorithm to efficiently compute the Hankel singular values during training iterations by exploiting the specific block-diagonal structure of the system matrices that is we use in our state space model parametrization. Experiments on Long Range Arena benchmarks demonstrate that the regularized state space layers are up to 10$\times$ more compressible than standard state space layers while maintaining high accuracy.

Operator Inference Aware Quadratic Manifolds with Isotropic Reduced Coordinates for Nonintrusive Model Reduction

Jul 28, 2025Abstract:Quadratic manifolds for nonintrusive reduced modeling are typically trained to minimize the reconstruction error on snapshot data, which means that the error of models fitted to the embedded data in downstream learning steps is ignored. In contrast, we propose a greedy training procedure that takes into account both the reconstruction error on the snapshot data and the prediction error of reduced models fitted to the data. Because our procedure learns quadratic manifolds with the objective of achieving accurate reduced models, it avoids oscillatory and other non-smooth embeddings that can hinder learning accurate reduced models. Numerical experiments on transport and turbulent flow problems show that quadratic manifolds trained with the proposed greedy approach lead to reduced models with up to two orders of magnitude higher accuracy than quadratic manifolds trained with respect to the reconstruction error alone.

Nonlinear embeddings for conserving Hamiltonians and other quantities with Neural Galerkin schemes

Oct 11, 2023

Abstract:This work focuses on the conservation of quantities such as Hamiltonians, mass, and momentum when solution fields of partial differential equations are approximated with nonlinear parametrizations such as deep networks. The proposed approach builds on Neural Galerkin schemes that are based on the Dirac--Frenkel variational principle to train nonlinear parametrizations sequentially in time. We first show that only adding constraints that aim to conserve quantities in continuous time can be insufficient because the nonlinear dependence on the parameters implies that even quantities that are linear in the solution fields become nonlinear in the parameters and thus are challenging to discretize in time. Instead, we propose Neural Galerkin schemes that compute at each time step an explicit embedding onto the manifold of nonlinearly parametrized solution fields to guarantee conservation of quantities. The embeddings can be combined with standard explicit and implicit time integration schemes. Numerical experiments demonstrate that the proposed approach conserves quantities up to machine precision.

Risk Assessment for Machine Learning Models

Nov 09, 2020

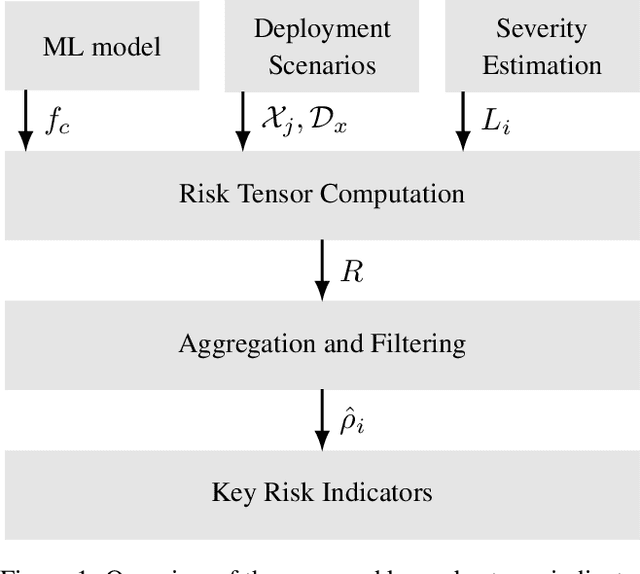

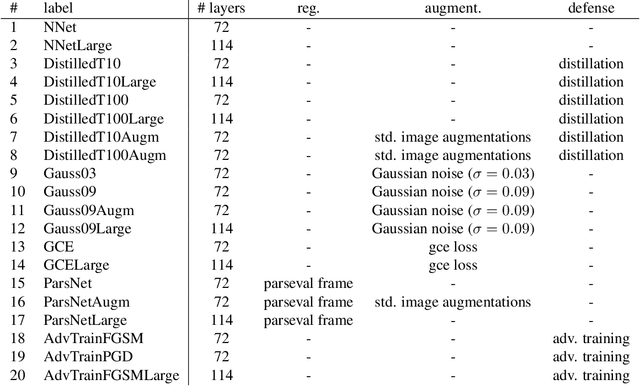

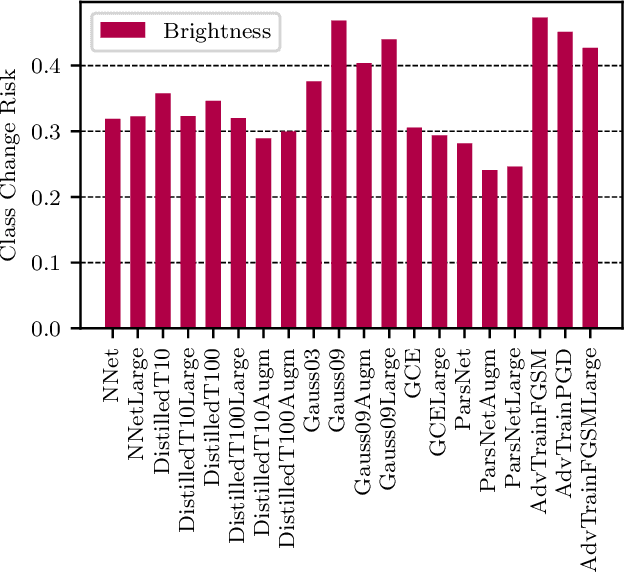

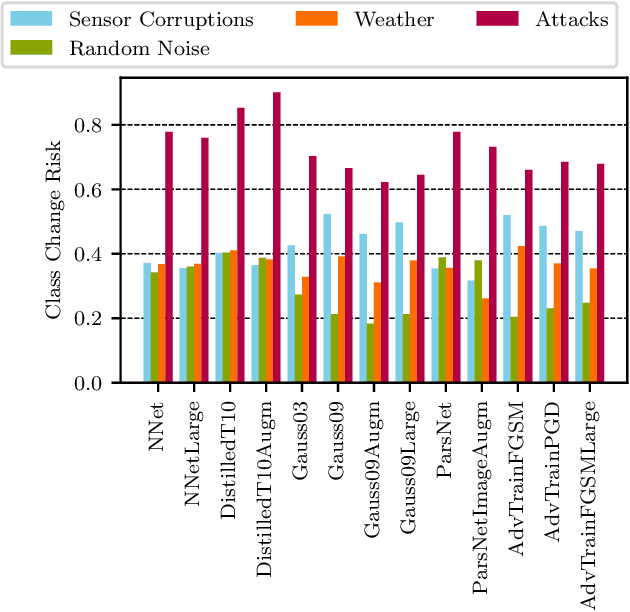

Abstract:In this paper we propose a framework for assessing the risk associated with deploying a machine learning model in a specified environment. For that we carry over the risk definition from decision theory to machine learning. We develop and implement a method that allows to define deployment scenarios, test the machine learning model under the conditions specified in each scenario, and estimate the damage associated with the output of the machine learning model under test. Using the likelihood of each scenario together with the estimated damage we define \emph{key risk indicators} of a machine learning model. The definition of scenarios and weighting by their likelihood allows for standardized risk assessment in machine learning throughout multiple domains of application. In particular, in our framework, the robustness of a machine learning model to random input corruptions, distributional shifts caused by a changing environment, and adversarial perturbations can be assessed.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge