Osama R. Shahin

Gesture based Arabic Sign Language Recognition for Impaired People based on Convolution Neural Network

Mar 10, 2022

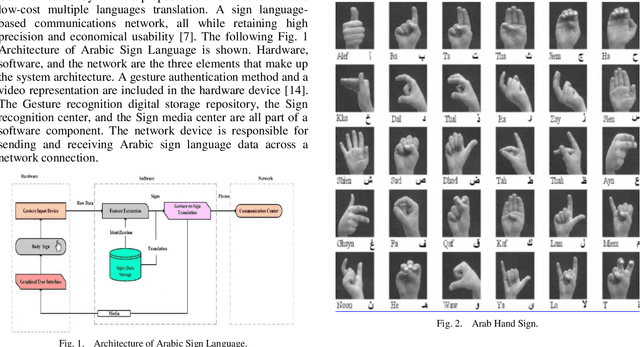

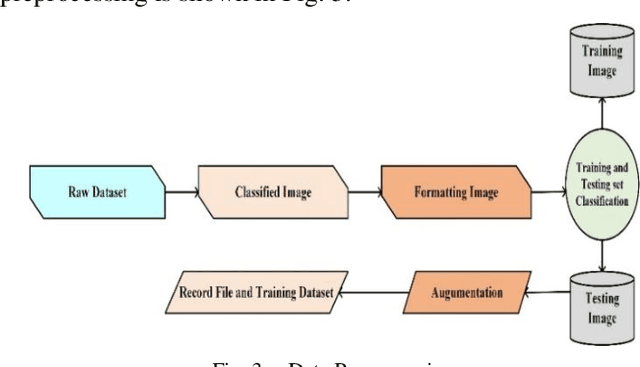

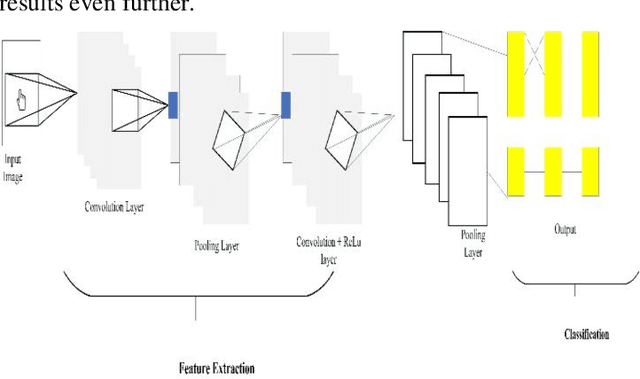

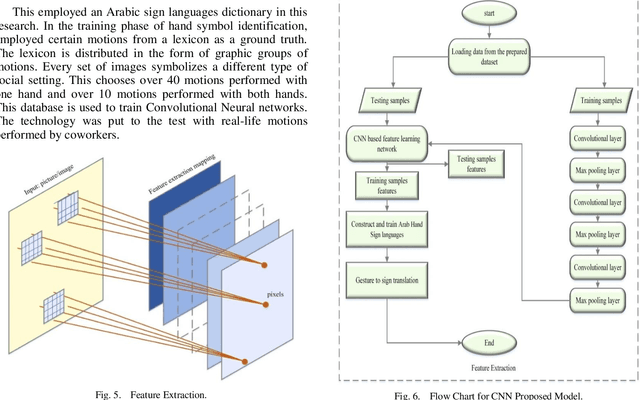

Abstract: Research on Arabic Sign Language (ArSL) has produced notable results in identifying gestures and hand signs using deep learning. Sign language is the form of communication that hearing-impaired people use, and its gestures are difficult for others to understand. ArSL recognition is a challenging research problem because the language varies from one territory to another and even within states. The proposed system is a machine learning approach built around a Convolutional Neural Network (CNN), with a wearable sensor used to capture the signs. The approach is intended to suit all Arabic gestures so that it can be used by impaired people in the local Arabic community, and it achieves reasonable, moderate accuracy. A deep convolutional network is first developed to extract features from the data gathered by the sensing devices. With these sensors, the 30 hand sign letters of Arabic sign language can be reliably recognized. The hand movements in the dataset were captured using DG5-V hand gloves with wearable sensors, and the CNN is used for classification. The system takes Arabic sign language hand gestures as input and produces vocalized speech as output. The results were recognized by 90% of the people.
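As a rough illustration of the pipeline the abstract describes (convolutional feature extraction over glove sensor readings, followed by classification into 30 letters), the following is a minimal forward-pass sketch in NumPy. It is not the paper's architecture: the channel count, window length, filter sizes, and the randomly initialized weights are all assumptions made for illustration, and only the figure of 30 classes comes from the abstract.

```python
import numpy as np

NUM_CLASSES = 30    # from the abstract: 30 hand sign letters
NUM_CHANNELS = 8    # assumption: sensor channels read per time step
WINDOW = 50         # assumption: time steps in one recorded gesture
NUM_FILTERS = 16    # assumption: number of convolution filters
KERNEL = 5          # assumption: filter width in time steps


def conv1d_relu(x, weights, bias):
    """Valid 1D convolution over time followed by ReLU.

    x: (T, C) sensor window, weights: (F, K, C), bias: (F,).
    Returns an array of shape (T - K + 1, F).
    """
    k = weights.shape[1]
    steps = x.shape[0] - k + 1
    out = np.empty((steps, weights.shape[0]))
    for t in range(steps):
        patch = x[t:t + k]  # (K, C) slice of the window
        out[t] = np.tensordot(weights, patch, axes=([1, 2], [0, 1])) + bias
    return np.maximum(out, 0.0)


def init_params(rng):
    """Random, untrained parameters (hypothetical; a real system would train these)."""
    return {
        "conv_w": rng.normal(scale=0.1, size=(NUM_FILTERS, KERNEL, NUM_CHANNELS)),
        "conv_b": np.zeros(NUM_FILTERS),
        "fc_w": rng.normal(scale=0.1, size=(NUM_CLASSES, NUM_FILTERS)),
        "fc_b": np.zeros(NUM_CLASSES),
    }


def classify(x, params):
    """Return a probability distribution over the 30 letter classes."""
    features = conv1d_relu(x, params["conv_w"], params["conv_b"])
    pooled = features.max(axis=0)                      # global max pooling -> (F,)
    logits = params["fc_w"] @ pooled + params["fc_b"]  # (NUM_CLASSES,)
    logits = logits - logits.max()                     # numerical stability
    exp = np.exp(logits)
    return exp / exp.sum()


rng = np.random.default_rng(0)
params = init_params(rng)
window = rng.normal(size=(WINDOW, NUM_CHANNELS))  # stand-in for one glove recording
probs = classify(window, params)
print(probs.shape, int(np.argmax(probs)))
```

With untrained weights the predicted class is meaningless; the sketch only shows how a window of sensor readings flows through a convolution, pooling, and a softmax over the letter classes.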

Human Face Recognition from Part of a Facial Image based on Image Stitching

Mar 10, 2022

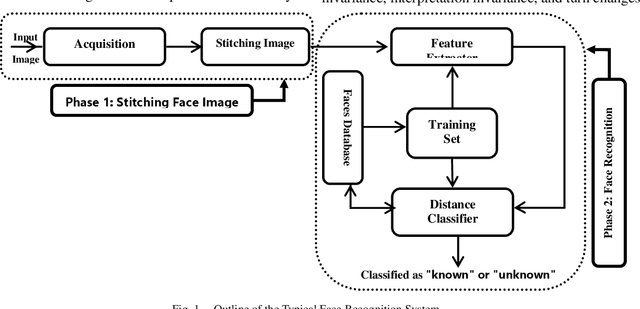

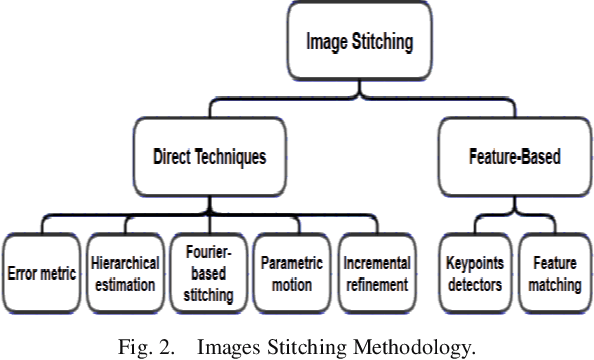

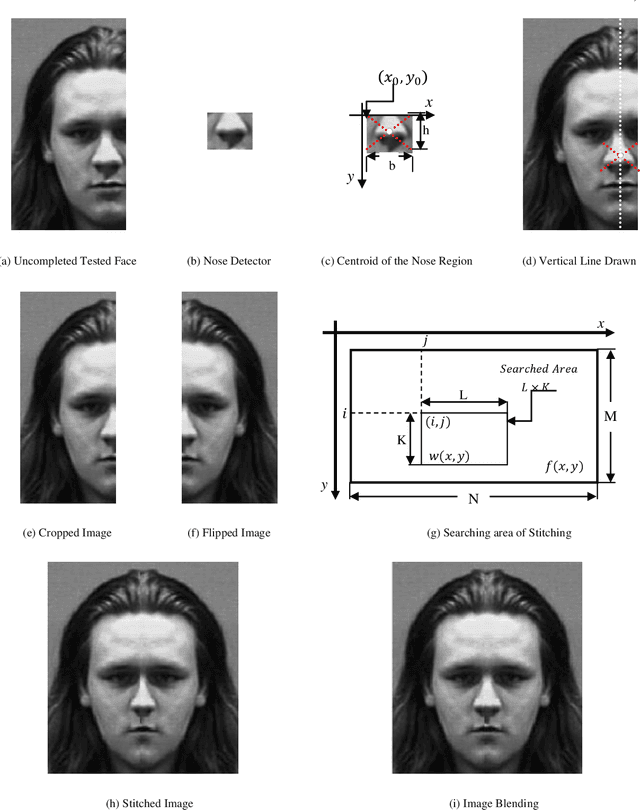

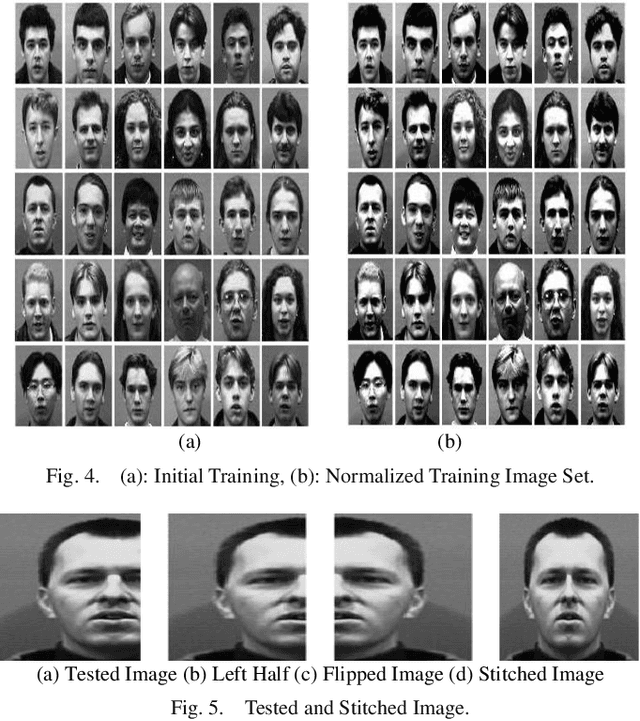

Abstract: Most current face recognition techniques require a full view of the face to be recognized, which is difficult to achieve in practice. The person of interest may appear with only part of the face visible, so the hidden part must be predicted. Most current prediction methods rely on image interpolation, which does not give reliable results, especially when the missing part is large. In this work, we stitch the face by completing the missing part with a flipped copy of the visible part, relying on the fact that the human face is symmetric in most cases. To build a complete model and demonstrate the efficiency of the algorithm, two face recognition methods were applied: Eigenfaces and geometrical methods. Image stitching is the process of combining distinct photographic images into a complete scene or a high-resolution image; several images are integrated to form a wide-angle panoramic image. The quality of the stitching is determined by the similarity between the stitched image and the original images and by the presence of seam lines in the stitched image. The Eigenfaces approach uses PCA to reduce the dimensionality of the feature vector, providing an effective way to find a lower-dimensional space. Besides enabling the proposed algorithm to recognize the face, it offers a fast and effective way of classifying faces. The feature extraction phase is followed by the classifier phase.
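The two ideas in this abstract, completing a partial face by mirroring the visible part and then recognizing it in a PCA (Eigenfaces) subspace, can be sketched as follows. This is a simplified illustration under stated assumptions, not the authors' implementation: it assumes a grayscale face already aligned so that the symmetry axis is the vertical center line, that the visible region is the left side and covers at least half the width, and that matching is done by nearest neighbor in the projected space. All function names are hypothetical.

```python
import numpy as np


def complete_by_mirroring(face, visible_cols):
    """Fill the missing right-hand columns with a mirror of the left side.

    face: (H, W) array whose first `visible_cols` columns are valid.
    Assumes the symmetry axis is the vertical center line of the image.
    """
    _, w = face.shape
    missing = w - visible_cols
    if missing > visible_cols:
        raise ValueError("visible part must cover at least half the width")
    completed = face.copy()
    # Column j on the right mirrors column (w - 1 - j) on the left.
    completed[:, visible_cols:] = face[:, :missing][:, ::-1]
    return completed


def fit_eigenfaces(train, n_components):
    """PCA on flattened faces. train: (N, H*W). Returns (mean, components)."""
    mean = train.mean(axis=0)
    centered = train - mean
    # Rows of vt are the principal directions (the "eigenfaces").
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean, vt[:n_components]


def project(face_vec, mean, components):
    """Project a flattened face onto the eigenface subspace."""
    return components @ (face_vec - mean)


def nearest_identity(face_vec, mean, components, gallery_weights, labels):
    """Return the label of the gallery face closest in the PCA subspace."""
    weights = project(face_vec, mean, components)
    distances = np.linalg.norm(gallery_weights - weights, axis=1)
    return labels[int(np.argmin(distances))]
```

A typical use would be to fit the eigenfaces on a gallery of full faces, precompute each gallery face's projection, then pass a partial probe image through `complete_by_mirroring` before calling `nearest_identity`. Real faces are only approximately symmetric, so the mirrored completion is an estimate rather than a reconstruction.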
