Ole-Christoffer Granmo

Massively Parallel and Asynchronous Tsetlin Machine Architecture Supporting Almost Constant-Time Scaling

Sep 13, 2020

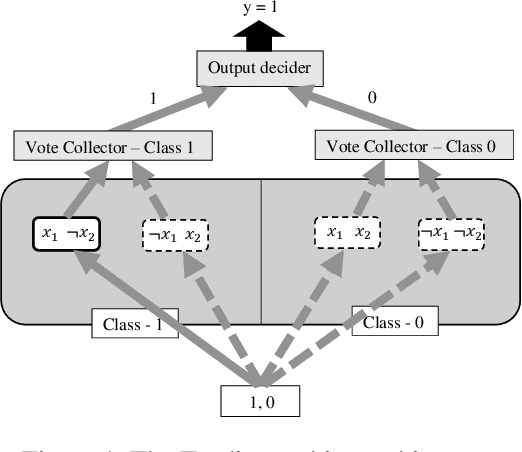

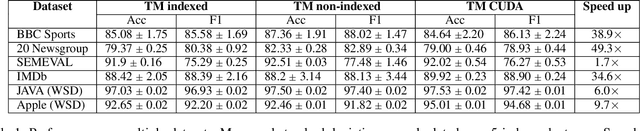

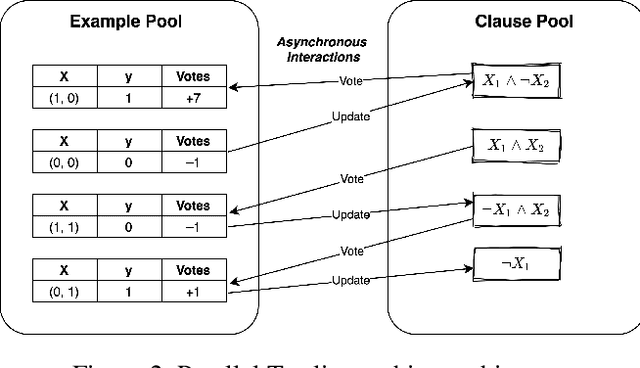

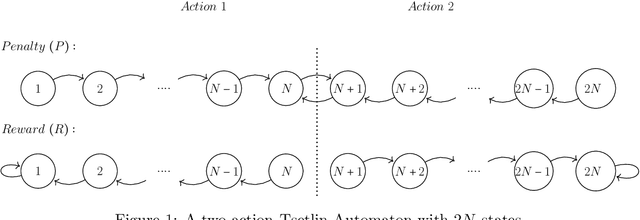

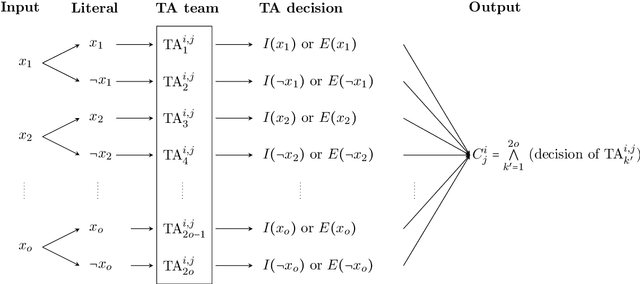

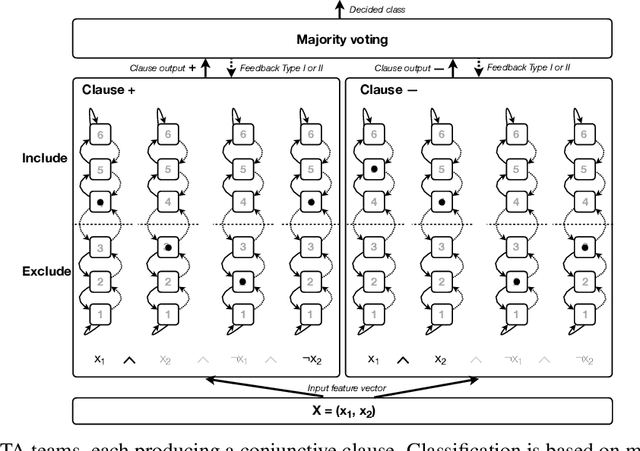

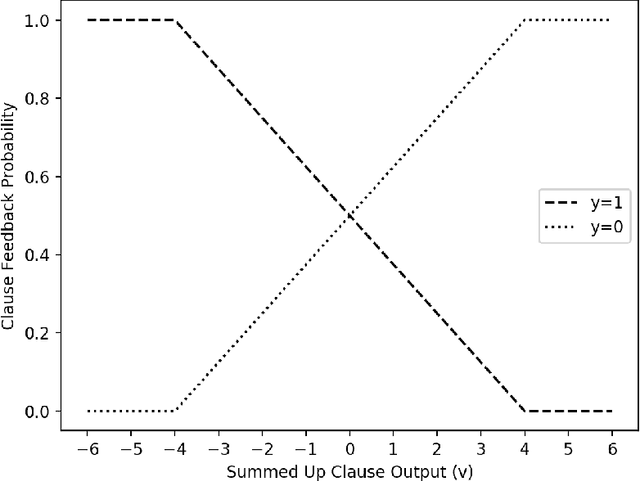

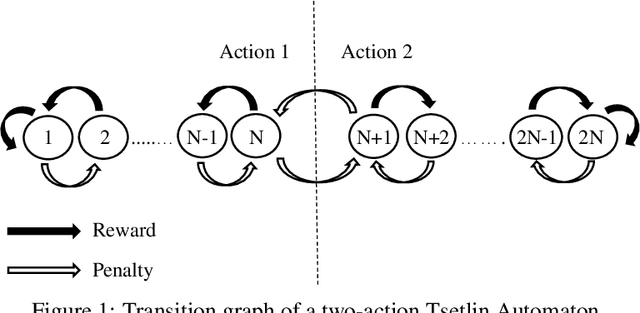

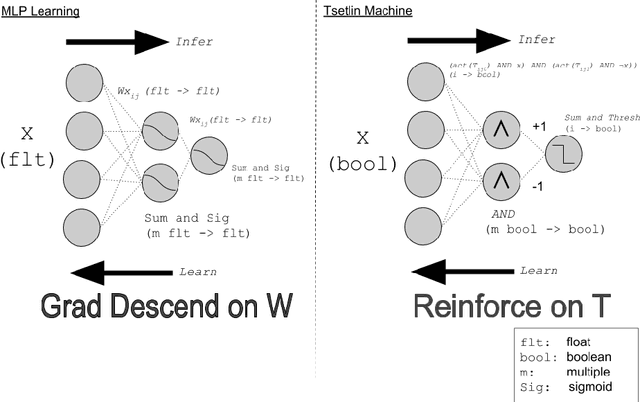

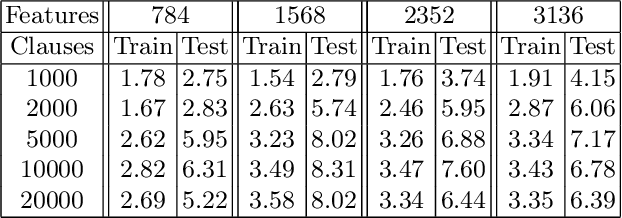

Abstract:Using logical clauses to represent patterns, Tsetlin machines (TMs) have recently obtained competitive performance in terms of accuracy, memory footprint, energy, and learning speed on several benchmarks. A team of Tsetlin automata (TAs) composes each clause, thus driving the entire learning process. These are rewarded/penalized according to three local rules that optimize global behaviour. Each clause votes for or against a particular class, with classification resolved using a majority vote. In the parallel and asynchronous architecture that we propose here, every clause runs in its own thread for massive parallelism. For each training example, we keep track of the class votes obtained from the clauses in local voting tallies. The local voting tallies allow us to detach the processing of each clause from the rest of the clauses, supporting decentralized learning. Thus, rather than processing training examples one-by-one as in the original TM, the clauses access the training examples simultaneously, updating themselves and the local voting tallies in parallel. There is no synchronization among the clause threads, apart from atomic adds to the local voting tallies. Operating asynchronously, each team of TA will most of the time operate on partially calculated or outdated voting tallies. However, across diverse learning tasks, it turns out that our decentralized TM learning algorithm copes well with working on outdated data, resulting in no significant loss in learning accuracy. Further, we show that the approach provides up to 50 times faster learning. Finally, learning time is almost constant for reasonable clause amounts. For sufficiently large clause numbers, computation time increases approximately proportionally. Our parallel and asynchronous architecture thus allows processing of more massive datasets and operating with more clauses for higher accuracy.

On the Convergence of Tsetlin Machines for the IDENTITY- and NOT Operators

Jul 28, 2020

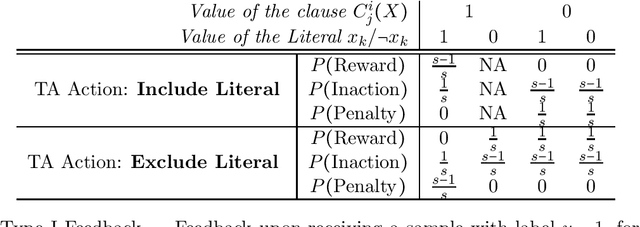

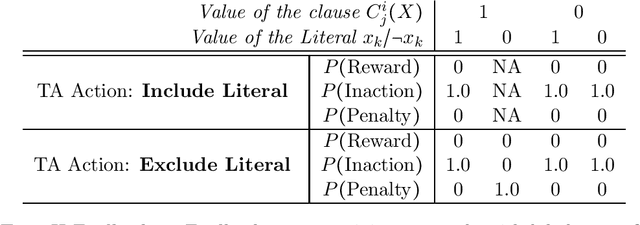

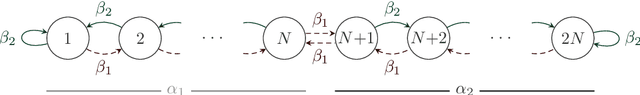

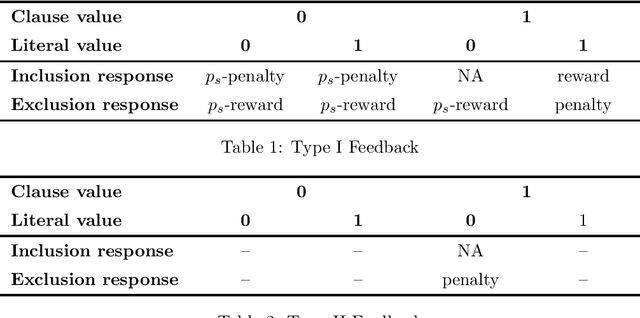

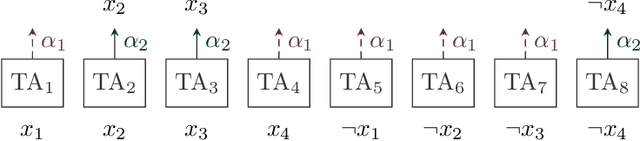

Abstract:The Tsetlin Machine (TM) is a recent machine learning algorithm with several distinct properties, such as interpretability, simplicity, and hardware-friendliness. Although numerous empirical evaluations report on its performance, the mathematical analysis of its convergence is still open. In this article, we analyze the convergence of the TM with only one clause involved for classification. More specifically, we examine two basic logical operators, namely, the IDENTITY- and NOT operators. Our analysis reveals that the TM, with just one clause, can converge correctly to the intended logical operator, learning from training data over an infinite time horizon. Besides, it can capture arbitrarily rare patterns and select the most accurate one when two candidate patterns are incompatible, by configuring a granularity parameter. The analysis of the convergence of the two basic operators lays the foundation for analyzing other logical operators. These analyses altogether, from a mathematical perspective, provide new insights on why TMs have obtained state-of-the-art performance on several pattern recognition problems.

Closed-Form Expressions for Global and Local Interpretation of Tsetlin Machines with Applications to Explaining High-Dimensional Data

Jul 27, 2020

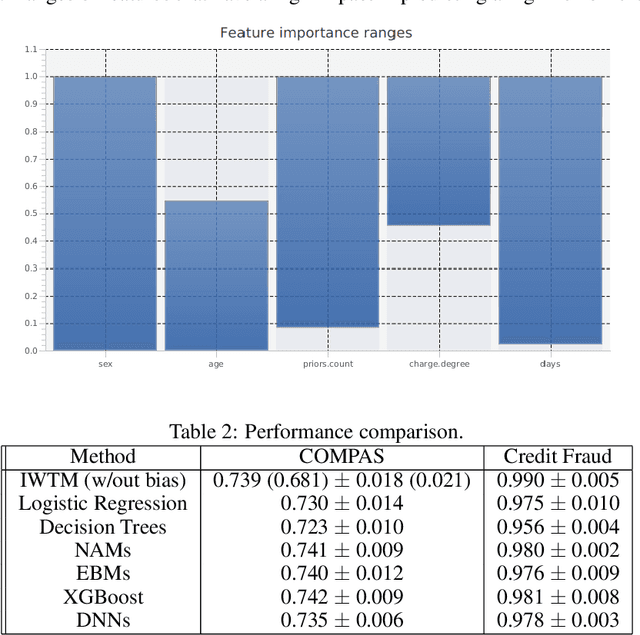

Abstract:Tsetlin Machines (TMs) capture patterns using conjunctive clauses in propositional logic, thus facilitating interpretation. However, recent TM-based approaches mainly rely on inspecting the full range of clauses individually. Such inspection does not necessarily scale to complex prediction problems that require a large number of clauses. In this paper, we propose closed-form expressions for understanding why a TM model makes a specific prediction (local interpretability). Additionally, the expressions capture the most important features of the model overall (global interpretability). We further introduce expressions for measuring the importance of feature value ranges for continuous features. The expressions are formulated directly from the conjunctive clauses of the TM, making it possible to capture the role of features in real-time, also during the learning process as the model evolves. Additionally, from the closed-form expressions, we derive a novel data clustering algorithm for visualizing high-dimensional data in three dimensions. Finally, we compare our proposed approach against SHAP and state-of-the-art interpretable machine learning techniques. For both classification and regression, our evaluation show correspondence with SHAP as well as competitive prediction accuracy in comparison with XGBoost, Explainable Boosting Machines, and Neural Additive Models.

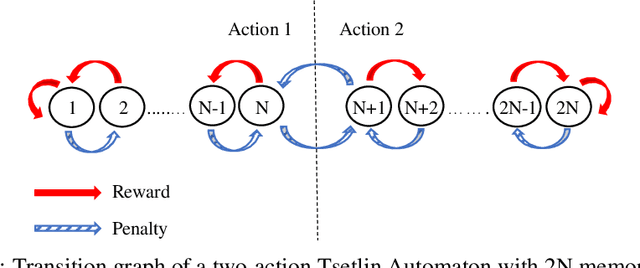

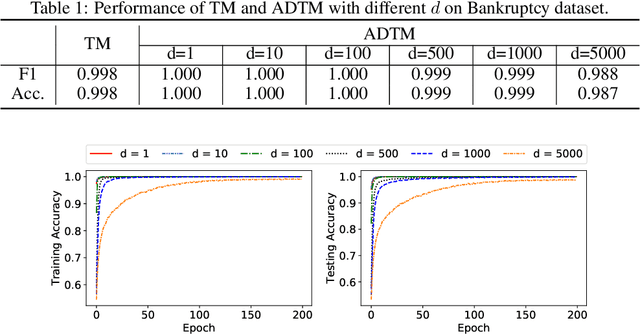

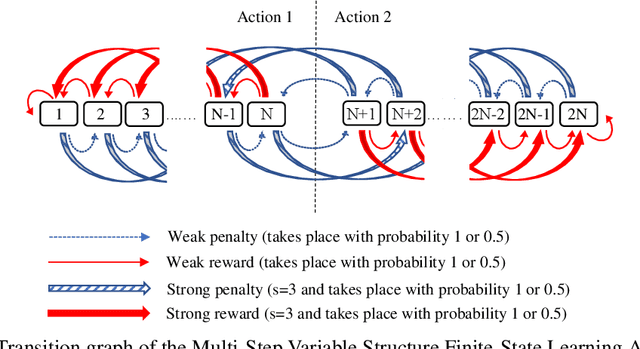

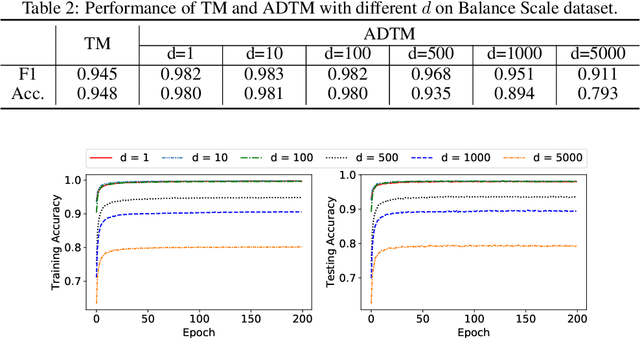

A Novel Multi-Step Finite-State Automaton for Arbitrarily Deterministic Tsetlin Machine Learning

Jul 04, 2020

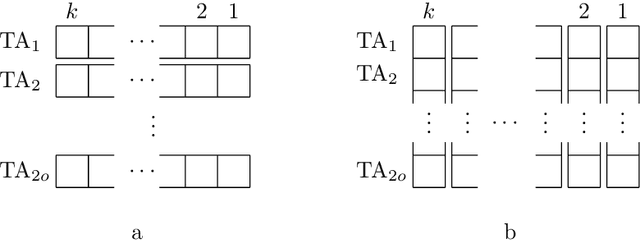

Abstract:Due to the high energy consumption and scalability challenges of deep learning, there is a critical need to shift research focus towards dealing with energy consumption constraints. Tsetlin Machines (TMs) are a recent approach to machine learning that has demonstrated significantly reduced energy usage compared to neural networks alike, while performing competitively accuracy-wise on several benchmarks. However, TMs rely heavily on energy-costly random number generation to stochastically guide a team of Tsetlin Automata to a Nash Equilibrium of the TM game. In this paper, we propose a novel finite-state learning automaton that can replace the Tsetlin Automata in TM learning, for increased determinism. The new automaton uses multi-step deterministic state jumps to reinforce sub-patterns. Simultaneously, flipping a coin to skip every $d$'th state update ensures diversification by randomization. The $d$-parameter thus allows the degree of randomization to be finely controlled. E.g., $d=1$ makes every update random and $d=\infty$ makes the automaton completely deterministic. Our empirical results show that, overall, only substantial degrees of determinism reduces accuracy. Energy-wise, random number generation constitutes switching energy consumption of the TM, saving up to 11 mW power for larger datasets with high $d$ values. We can thus use the new $d$-parameter to trade off accuracy against energy consumption, to facilitate low-energy machine learning.

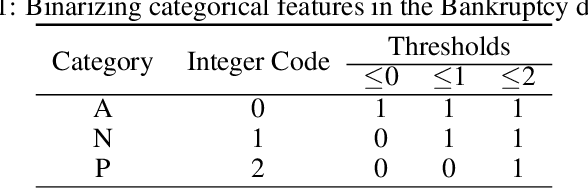

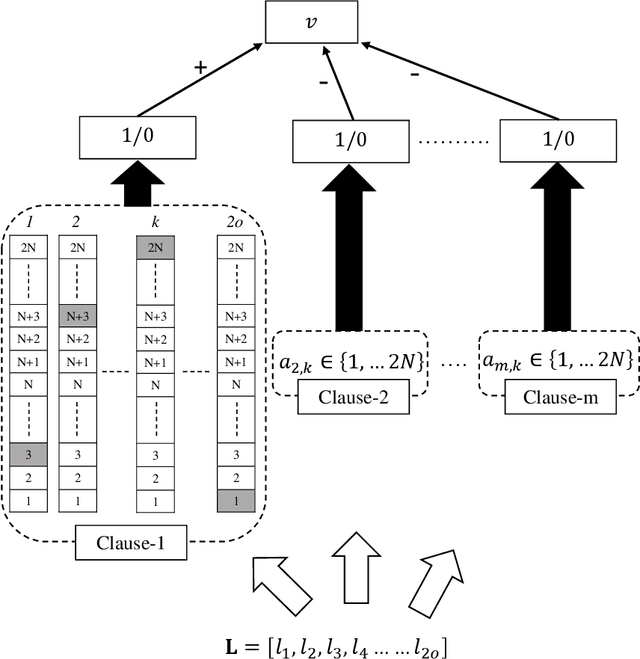

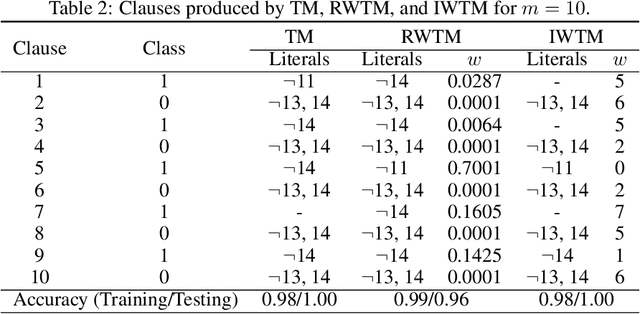

Extending the Tsetlin Machine With Integer-Weighted Clauses for Increased Interpretability

May 11, 2020

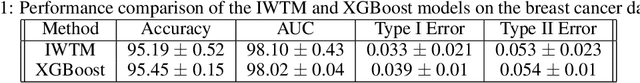

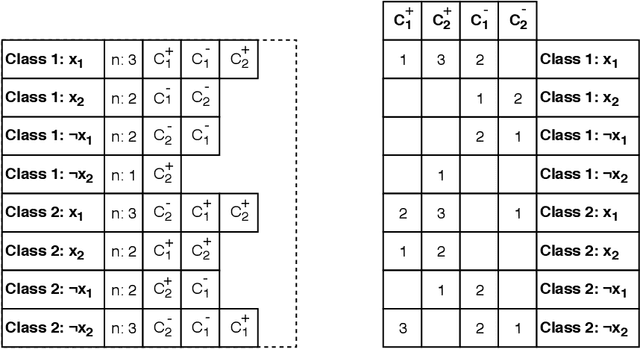

Abstract:Despite significant effort, building models that are both interpretable and accurate is an unresolved challenge for many pattern recognition problems. In general, rule-based and linear models lack accuracy, while deep learning interpretability is based on rough approximations of the underlying inference. Using a linear combination of conjunctive clauses in propositional logic, Tsetlin Machines (TMs) have shown competitive performance on diverse benchmarks. However, to do so, many clauses are needed, which impacts interpretability. Here, we address the accuracy-interpretability challenge in machine learning by equipping the TM clauses with integer weights. The resulting Integer Weighted TM (IWTM) deals with the problem of learning which clauses are inaccurate and thus must team up to obtain high accuracy as a team (low weight clauses), and which clauses are sufficiently accurate to operate more independently (high weight clauses). Since each TM clause is formed adaptively by a team of Tsetlin Automata, identifying effective weights becomes a challenging online learning problem. We address this problem by extending each team of Tsetlin Automata with a stochastic searching on the line (SSL) automaton. In our novel scheme, the SSL automaton learns the weight of its clause in interaction with the corresponding Tsetlin Automata team, which, in turn, adapts the composition of the clause by the adjusting weight. We evaluate IWTM empirically using five datasets, including a study of interpetability. On average, IWTM uses 6.5 times fewer literals than the vanilla TM and 120 times fewer literals than a TM with real-valued weights. Furthermore, in terms of average F1-Score, IWTM outperforms simple Multi-Layered Artificial Neural Networks, Decision Trees, Support Vector Machines, K-Nearest Neighbor, Random Forest, XGBoost, Explainable Boosting Machines, and standard and real-value weighted TMs.

Increasing the Inference and Learning Speed of Tsetlin Machines with Clause Indexing

Apr 07, 2020

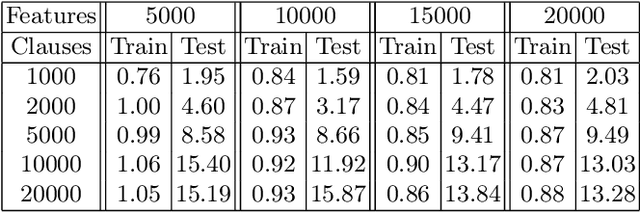

Abstract:The Tsetlin Machine (TM) is a machine learning algorithm founded on the classical Tsetlin Automaton (TA) and game theory. It further leverages frequent pattern mining and resource allocation principles to extract common patterns in the data, rather than relying on minimizing output error, which is prone to overfitting. Unlike the intertwined nature of pattern representation in neural networks, a TM decomposes problems into self-contained patterns, represented as conjunctive clauses. The clause outputs, in turn, are combined into a classification decision through summation and thresholding, akin to a logistic regression function, however, with binary weights and a unit step output function. In this paper, we exploit this hierarchical structure by introducing a novel algorithm that avoids evaluating the clauses exhaustively. Instead we use a simple look-up table that indexes the clauses on the features that falsify them. In this manner, we can quickly evaluate a large number of clauses through falsification, simply by iterating through the features and using the look-up table to eliminate those clauses that are falsified. The look-up table is further structured so that it facilitates constant time updating, thus supporting use also during learning. We report up to 15 times faster classification and three times faster learning on MNIST and Fashion-MNIST image classification, and IMDb sentiment analysis.

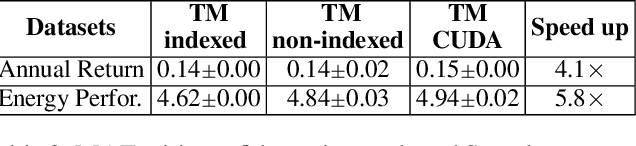

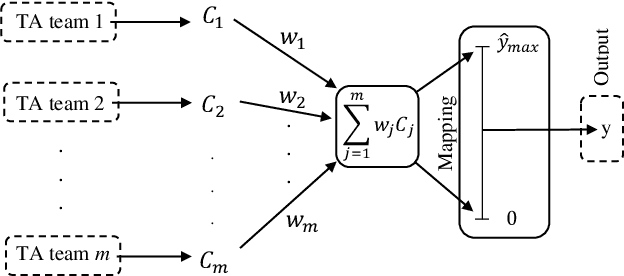

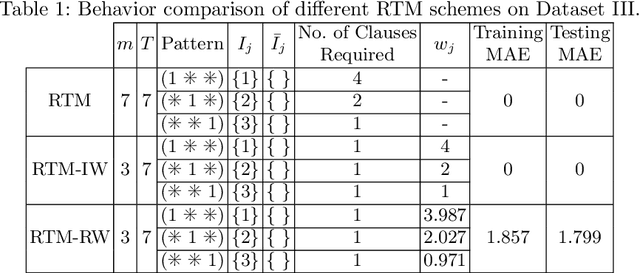

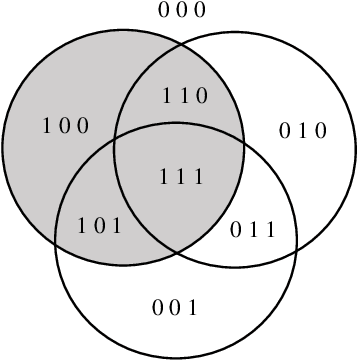

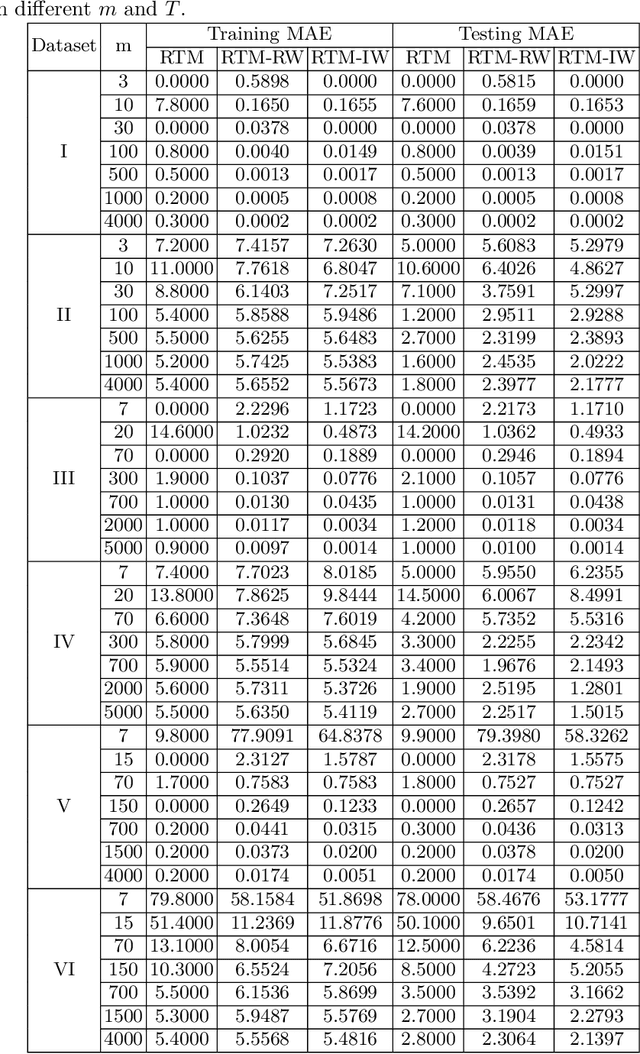

A Regression Tsetlin Machine with Integer Weighted Clauses for Compact Pattern Representation

Feb 04, 2020

Abstract:The Regression Tsetlin Machine (RTM) addresses the lack of interpretability impeding state-of-the-art nonlinear regression models. It does this by using conjunctive clauses in propositional logic to capture the underlying non-linear frequent patterns in the data. These, in turn, are combined into a continuous output through summation, akin to a linear regression function, however, with non-linear components and unity weights. Although the RTM has solved non-linear regression problems with competitive accuracy, the resolution of the output is proportional to the number of clauses employed. This means that computation cost increases with resolution. To reduce this problem, we here introduce integer weighted RTM clauses. Our integer weighted clause is a compact representation of multiple clauses that capture the same sub-pattern-N repeating clauses are turned into one, with an integer weight N. This reduces computation cost N times, and increases interpretability through a sparser representation. We further introduce a novel learning scheme that allows us to simultaneously learn both the clauses and their weights, taking advantage of so-called stochastic searching on the line. We evaluate the potential of the integer weighted RTM empirically using six artificial datasets. The results show that the integer weighted RTM is able to acquire on par or better accuracy using significantly less computational resources compared to regular RTMs. We further show that integer weights yield improved accuracy over real-valued ones.

The Weighted Tsetlin Machine: Compressed Representations with Weighted Clauses

Jan 14, 2020

Abstract:The Tsetlin Machine (TM) is an interpretable mechanism for pattern recognition that constructs conjunctive clauses from data. The clauses capture frequent patterns with high discriminating power, providing increasing expression power with each additional clause. However, the resulting accuracy gain comes at the cost of linear growth in computation time and memory usage. In this paper, we present the Weighted Tsetlin Machine (WTM), which reduces computation time and memory usage by weighting the clauses. Real-valued weighting allows one clause to replace multiple, and supports fine-tuning the impact of each clause. Our novel scheme simultaneously learns both the composition of the clauses and their weights. Furthermore, we increase training efficiency by replacing $k$ Bernoulli trials of success probability $p$ with a uniform sample of average size $p k$, the size drawn from a binomial distribution. In our empirical evaluation, the WTM achieved the same accuracy as the TM on MNIST, IMDb, and Connect-4, requiring only $1/4$, $1/3$, and $1/50$ of the clauses, respectively. With the same number of clauses, the WTM outperformed the TM, obtaining peak test accuracies of respectively $98.63\%$, $90.37\%$, and $87.91\%$. Finally, our novel sampling scheme reduced sample generation time by a factor of $7$.

Environment Sound Classification using Multiple Feature Channels and Deep Convolutional Neural Networks

Sep 25, 2019

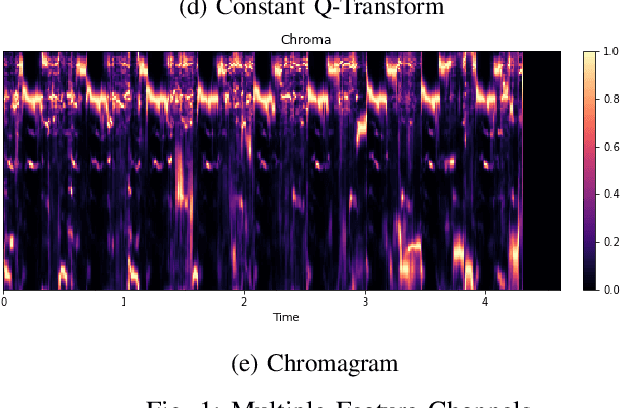

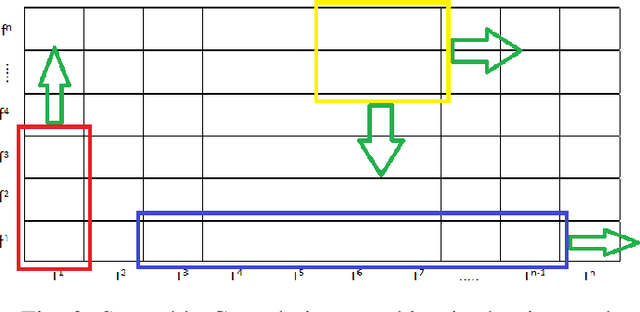

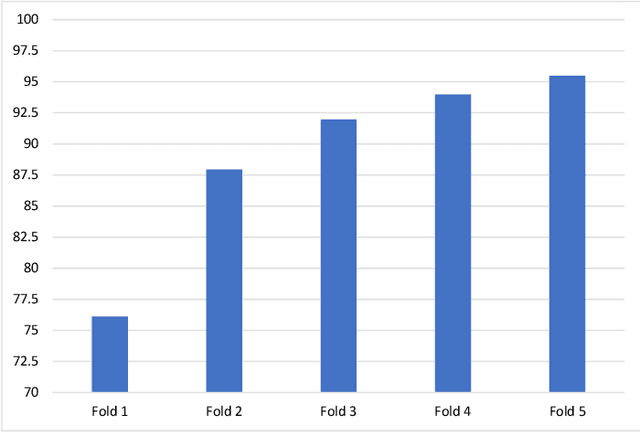

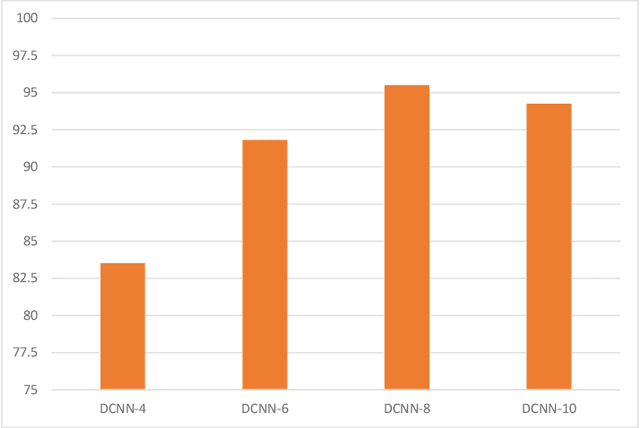

Abstract:In this paper, we propose a model for the Environment Sound Classification Task (ESC) that consists of multiple feature channels given as input to a Deep Convolutional Neural Network (CNN). The novelty of the paper lies in using multiple feature channels consisting of Mel-Frequency Cepstral Coefficients (MFCC), Gammatone Frequency Cepstral Coefficients (GFCC), the Constant Q-transform (CQT) and Chromagram. Such multiple features have never been used before for signal or audio processing. Also, we employ a deeper CNN (DCNN) compared to previous models, consisting of 2D separable convolutions working on time and feature domain separately. The model also consists of max pooling layers that downsample time and feature domain separately. We use some data augmentation techniques to further boost performance. Our model is able to achieve state-of-the-art performance on all three benchmark environment sound classification datasets, i.e. the UrbanSound8K (97.35%), ESC-10 (95.75%) and ESC-50 (90.48%). To the best of our knowledge, this is the first time that a single environment sound classification model is able to achieve state-of-the-art results on all three datasets. For ESC-10 and ESC-50 datasets, the accuracy achieved by the proposed model is beyond human accuracy of 95.7% and 81.3% respectively.

A Tsetlin Machine with Multigranular Clauses

Sep 16, 2019

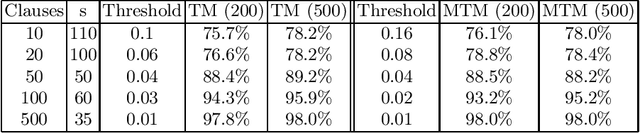

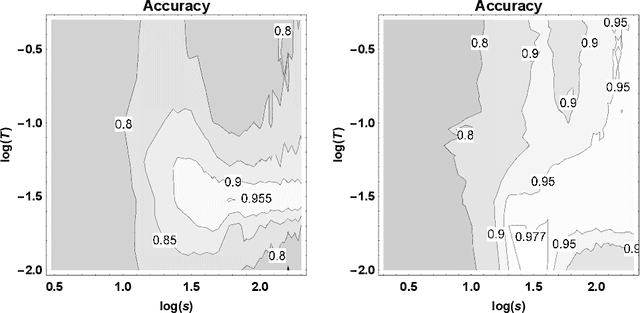

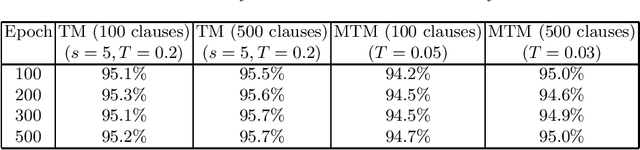

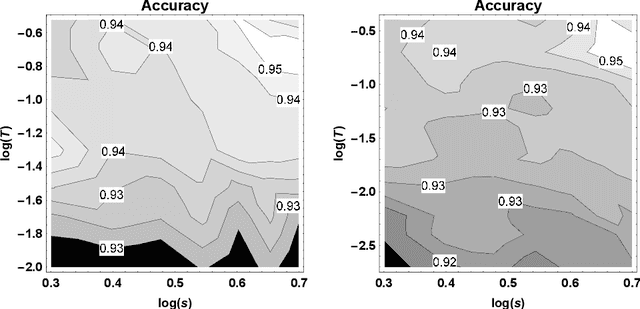

Abstract:The recently introduced Tsetlin Machine (TM) has provided competitive pattern recognition accuracy in several benchmarks, however, requires a 3-dimensional hyperparameter search. In this paper, we introduce the Multigranular Tsetlin Machine (MTM). The MTM eliminates the specificity hyperparameter, used by the TM to control the granularity of the conjunctive clauses that it produces for recognizing patterns. Instead of using a fixed global specificity, we encode varying specificity as part of the clauses, rendering the clauses multigranular. This makes it easier to configure the TM because the dimensionality of the hyperparameter search space is reduced to only two dimensions. Indeed, it turns out that there is significantly less hyperparameter tuning involved in applying the MTM to new problems. Further, we demonstrate empirically that the MTM provides similar performance to what is achieved with a finely specificity-optimized TM, by comparing their performance on both synthetic and real-world datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge