Nozomi Hata

Diversified Adversarial Attacks based on Conjugate Gradient Method

Jun 20, 2022

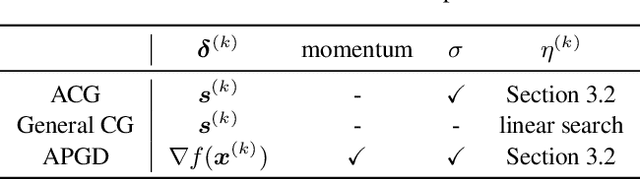

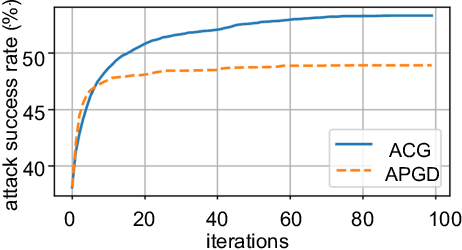

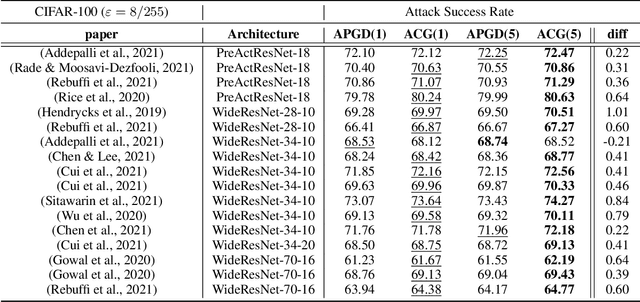

Abstract: Deep learning models are vulnerable to adversarial examples, and adversarial attacks used to generate such examples have attracted considerable research interest. Although existing methods based on steepest descent have achieved high attack success rates, ill-conditioned problems occasionally reduce their performance. To address this limitation, we utilize the conjugate gradient (CG) method, which is effective for this type of problem, and propose a novel attack algorithm inspired by the CG method, named the Auto Conjugate Gradient (ACG) attack. The results of large-scale evaluation experiments conducted on the latest robust models show that, for most models, ACG was able to find more adversarial examples with fewer iterations than the existing SOTA algorithm Auto-PGD (APGD). We investigate the difference in search performance between ACG and APGD in terms of diversification and intensification, and define a measure called the Diversity Index (DI) to quantify the degree of diversity. From the analysis of the diversity using this index, we show that the more diverse search of the proposed method remarkably improves its attack success rate.

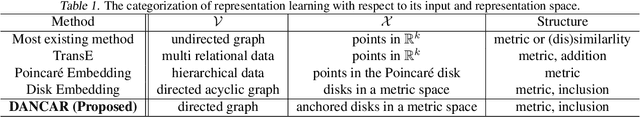

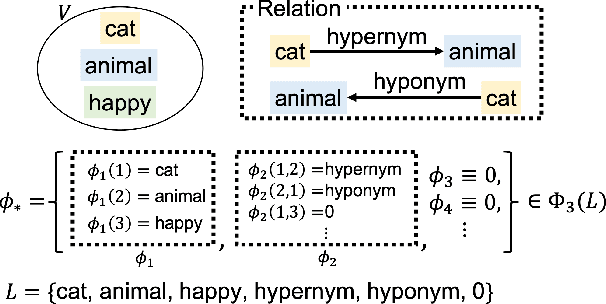

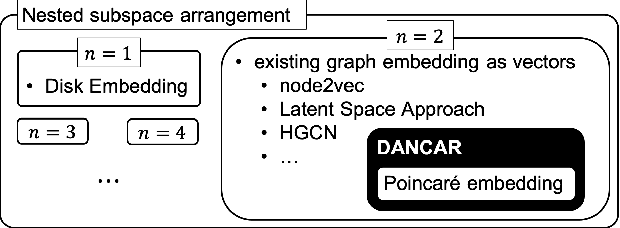

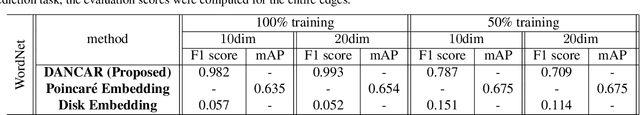

Nested Subspace Arrangement for Representation of Relational Data

Jul 04, 2020

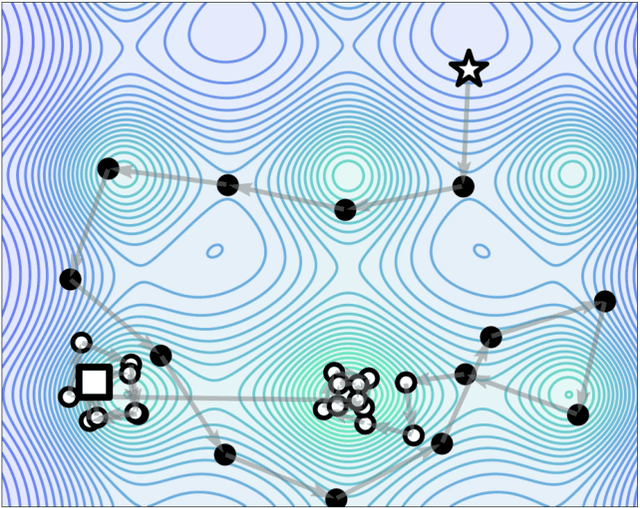

Abstract: Studies on acquiring appropriate continuous representations of discrete objects, such as graphs and knowledge base data, have been conducted by many researchers in the field of machine learning. In this study, we introduce the Nested SubSpace (NSS) arrangement, a comprehensive framework for representation learning. We show that existing embedding techniques can be regarded as special cases of the NSS arrangement. Based on the concept of the NSS arrangement, we implement Disk-ANChor ARrangement (DANCAR), a representation learning method specialized for reproducing general graphs. Numerical experiments have shown that DANCAR successfully embeds WordNet in ${\mathbb R}^{20}$ with an F1 score of 0.993 in the reconstruction task. DANCAR is also suitable for visualization aimed at understanding the characteristics of graphs.
