Myong Chol Jung

Navigating Conflicting Views: Harnessing Trust for Learning

Jun 03, 2024

Abstract: Resolving conflicts is essential for making the decisions of multi-view classification more reliable. Much research has been conducted on learning consistent, informative representations across different views, assuming that all views are equally important and strictly aligned. However, real-world multi-view data may not always conform to these assumptions, as some views may express distinct information. To address this issue, we develop a computational trust-based discounting method to enhance the existing trustworthy framework in scenarios where conflicts between different views may arise. Its belief fusion process considers the trustworthiness of predictions made by individual views via an instance-wise, probability-sensitive trust discounting mechanism. We evaluate our method on six real-world datasets, using Top-1 Accuracy, AUC-ROC for Uncertainty-Aware Prediction, Fleiss' Kappa, and a new metric called Multi-View Agreement with Ground Truth that takes the ground truth labels into consideration. The experimental results show that computational trust can effectively resolve conflicts, paving the way for more reliable multi-view classification models in real-world applications.

Enhancing Near OOD Detection in Prompt Learning: Maximum Gains, Minimal Costs

May 25, 2024

Abstract: Prompt learning has been shown to be an efficient and effective fine-tuning method for vision-language models like CLIP. While numerous studies have focused on the generalisation of these models in few-shot classification, their capability in near out-of-distribution (OOD) detection has been overlooked. A few recent works have highlighted the promising performance of prompt learning in far OOD detection. However, the more challenging task of few-shot near OOD detection has not yet been addressed. In this study, we investigate the near OOD detection capabilities of prompt learning models and observe that commonly used OOD scores have limited performance in near OOD detection. To enhance the performance, we propose a fast and simple post-hoc method that complements existing logit-based scores, improving near OOD detection AUROC by up to 11.67% with minimal computational cost. Our method can be easily applied to any prompt learning model without changing the architecture or re-training the model. Comprehensive empirical evaluations across 13 datasets and 8 models demonstrate the effectiveness and adaptability of our method.

Generalized Contrastive Learning for Multi-Modal Retrieval and Ranking

Apr 12, 2024

Abstract: Contrastive learning has gained widespread adoption for retrieval tasks due to its minimal requirement for manual annotations. However, popular contrastive frameworks typically learn from binary relevance, making them ineffective at incorporating direct fine-grained rankings. In this paper, we curate a large-scale dataset featuring detailed relevance scores for each query-document pair to facilitate future research and evaluation. Subsequently, we propose Generalized Contrastive Learning for Multi-Modal Retrieval and Ranking (GCL), which is designed to learn from fine-grained rankings beyond binary relevance scores. Our results show that GCL achieves a 94.5% increase in NDCG@10 for in-domain and 26.3 to 48.8% increases for cold-start evaluations, all relative to the CLIP baseline and involving ground truth rankings.
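
For readers unfamiliar with the NDCG@10 metric cited above, the following is a minimal sketch of how it can be computed from graded relevance scores. It assumes the linear-gain form of DCG (gain equal to the relevance score, discounted by log2 of the rank); the abstract does not say which gain variant the authors use, and the function names `dcg_at_k` and `ndcg_at_k` are illustrative only.

```python
import math


def dcg_at_k(relevances, k):
    """Discounted cumulative gain over the top-k items (linear gain, assumed)."""
    # rank is 0-based, so the discount for the first item is log2(2) = 1.
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances[:k]))


def ndcg_at_k(relevances, k=10):
    """NDCG@k for relevance scores listed in the order the system ranked them."""
    ideal_dcg = dcg_at_k(sorted(relevances, reverse=True), k)
    if ideal_dcg == 0:
        return 0.0  # no relevant items at all: define the score as 0
    return dcg_at_k(relevances, k) / ideal_dcg
```

A ranking that already lists items in decreasing order of relevance scores 1.0, and any misordering of graded items lowers the score, which is why the metric can reward fine-grained rankings rather than only binary relevance.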

Multimodal Neural Processes for Uncertainty Estimation

Apr 04, 2023

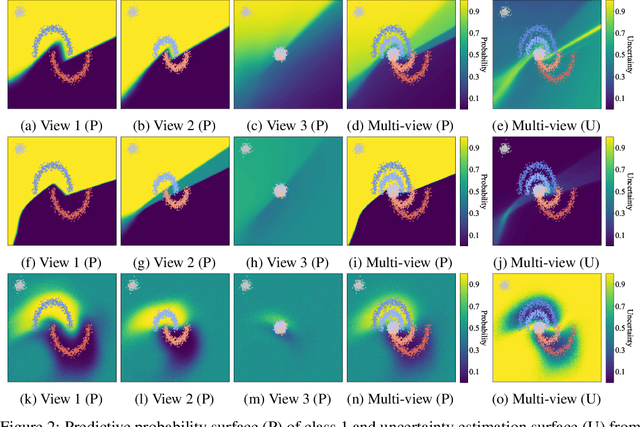

Abstract: Neural processes (NPs) have brought together the representation power of parametric deep neural networks and the reliable uncertainty estimation of non-parametric Gaussian processes. Although recent developments in NPs have shown success in both regression and classification, how to adapt NPs to multimodal data has not been carefully studied. For the first time, we propose a new member of the NP family for multimodal uncertainty estimation, namely Multimodal Neural Processes. In a holistic and principled way, we develop a dynamic context memory updated by the classification error, a multimodal Bayesian aggregation mechanism to aggregate multimodal representations, and a new attention mechanism for calibrated predictions. In extensive empirical evaluation, our method achieves state-of-the-art multimodal uncertainty estimation performance, demonstrating robustness against noisy samples and reliability in out-of-domain detection.

Uncertainty Estimation for Multi-view Data: The Power of Seeing the Whole Picture

Oct 06, 2022

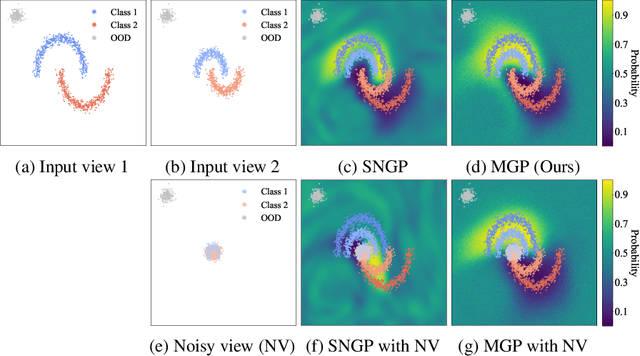

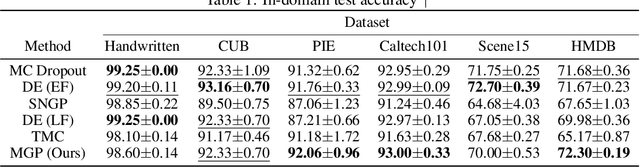

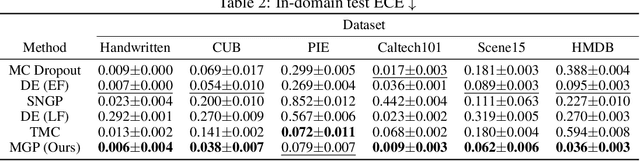

Abstract: Uncertainty estimation is essential for making neural networks trustworthy in real-world applications. Extensive research efforts have been made to quantify and reduce predictive uncertainty. However, most existing works are designed for unimodal data, whereas multi-view uncertainty estimation has not been sufficiently investigated. Therefore, we propose a new multi-view classification framework for better uncertainty estimation and out-of-domain sample detection, where we associate each view with an uncertainty-aware classifier and combine the predictions of all the views in a principled way. The experimental results with real-world datasets demonstrate that our proposed approach is an accurate, reliable, and well-calibrated classifier, which predominantly outperforms the multi-view baselines tested in terms of expected calibration error, robustness to noise, and accuracy on the in-domain sample classification and out-of-domain sample detection tasks.
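
As a rough illustration of the expected calibration error mentioned above, here is a minimal sketch of the common equal-width binning estimator. The number of bins and the function name `expected_calibration_error` are assumptions made for this example; the abstract does not specify the exact estimator the authors use.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Equal-width-bin ECE: weighted mean of |accuracy - confidence| per bin."""
    n = len(confidences)
    if n == 0:
        return 0.0
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # A confidence of exactly 1.0 falls into the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(conf for conf, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / n) * abs(accuracy - avg_conf)
    return ece
```

A value near zero means that predicted confidence tracks empirical accuracy; for instance, two predictions made at 0.9 confidence with only one correct give an error of 0.4.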
