Mohammad Rasouli

Data Sharing Markets

Jul 20, 2021Abstract:With the growing use of distributed machine learning techniques, there is a growing need for data markets that allows agents to share data with each other. Nevertheless data has unique features that separates it from other commodities including replicability, cost of sharing, and ability to distort. We study a setup where each agent can be both buyer and seller of data. For this setup, we consider two cases: bilateral data exchange (trading data with data) and unilateral data exchange (trading data with money). We model bilateral sharing as a network formation game and show the existence of strongly stable outcome under the top agents property by allowing limited complementarity. We propose ordered match algorithm which can find the stable outcome in O(N^2) (N is the number of agents). For the unilateral sharing, under the assumption of additive cost structure, we construct competitive prices that can implement any social welfare maximizing outcome. Finally for this setup when agents have private information, we propose mixed-VCG mechanism which uses zero cost data distortion of data sharing with its isolated impact to achieve budget balance while truthfully implementing socially optimal outcomes to the exact level of budget imbalance of standard VCG mechanisms. Mixed-VCG uses data distortions as data money for this purpose. We further relax zero cost data distortion assumption by proposing distorted-mixed-VCG. We also extend our model and results to data sharing via incremental inquiries and differential privacy costs.

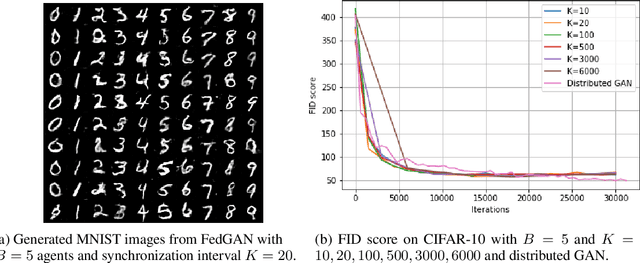

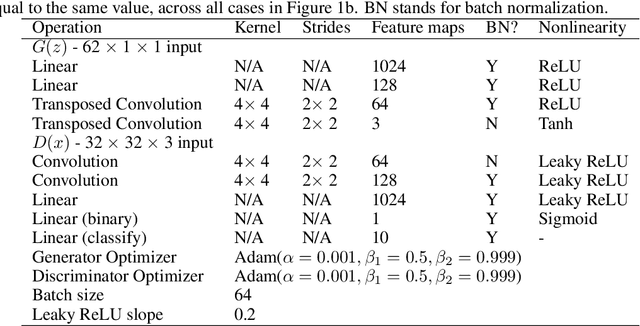

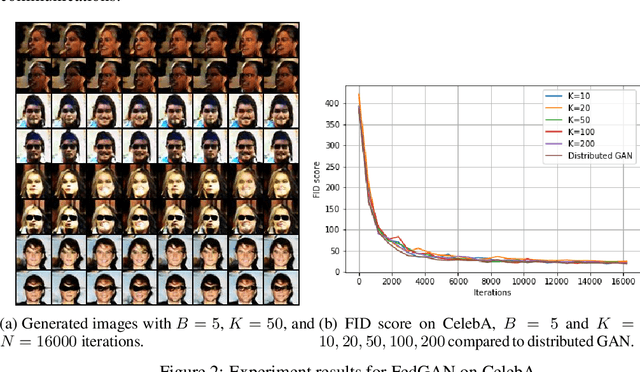

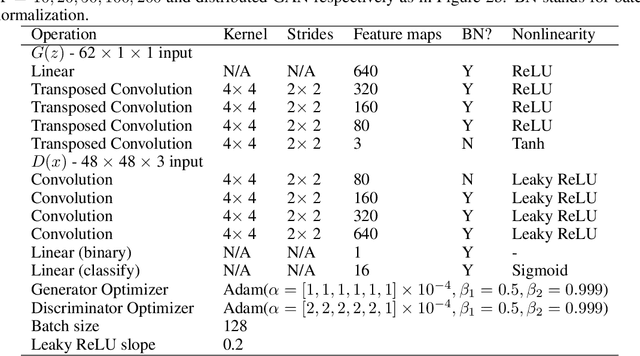

FedGAN: Federated Generative Adversarial Networks for Distributed Data

Jun 15, 2020

Abstract:We propose Federated Generative Adversarial Network (FedGAN) for training a GAN across distributed sources of non-independent-and-identically-distributed data sources subject to communication and privacy constraints. Our algorithm uses local generators and discriminators which are periodically synced via an intermediary that averages and broadcasts the generator and discriminator parameters. We theoretically prove the convergence of FedGAN with both equal and two time-scale updates of generator and discriminator, under standard assumptions, using stochastic approximations and communication efficient stochastic gradient descents. We experiment FedGAN on toy examples (2D system, mixed Gaussian, and Swiss role), image datasets (MNIST, CIFAR-10, and CelebA), and time series datasets (household electricity consumption and electric vehicle charging sessions). We show FedGAN converges and has similar performance to general distributed GAN, while reduces communication complexity. We also show its robustness to reduced communications.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge