Mohamed Selim

TinyIceNet: Low-Power SAR Sea Ice Segmentation for On-Board FPGA Inference

Mar 03, 2026

Abstract: Accurate sea ice mapping is essential for safe maritime navigation in polar regions, where rapidly changing ice conditions require timely and reliable information. While Sentinel-1 Synthetic Aperture Radar (SAR) provides high-resolution, all-weather observations of sea ice, conventional ground-based processing is limited by downlink bandwidth, latency, and the energy costs of transmitting large volumes of raw data. On-board processing, enabled by dedicated inference chips integrated directly within the satellite payload, offers a transformative alternative by generating actionable sea ice products in orbit. In this context, we present TinyIceNet, a compact semantic segmentation network co-designed for on-board Stage of Development (SOD) mapping from dual-polarized Sentinel-1 SAR imagery under strict hardware and power constraints. Trained on the AI4Arctic dataset, TinyIceNet combines SAR-aware architectural simplifications with low-precision quantization to balance accuracy and efficiency. The model is synthesized using High-Level Synthesis and deployed on a Xilinx Zynq UltraScale+ FPGA platform, demonstrating near-real-time inference with significantly reduced energy consumption. Experimental results show that TinyIceNet achieves a 75.216% F1 score on SOD segmentation while reducing energy consumption by a factor of 2 compared to full-precision GPU baselines, underscoring the potential of chip-level hardware-algorithm co-design for future spaceborne and edge AI systems.

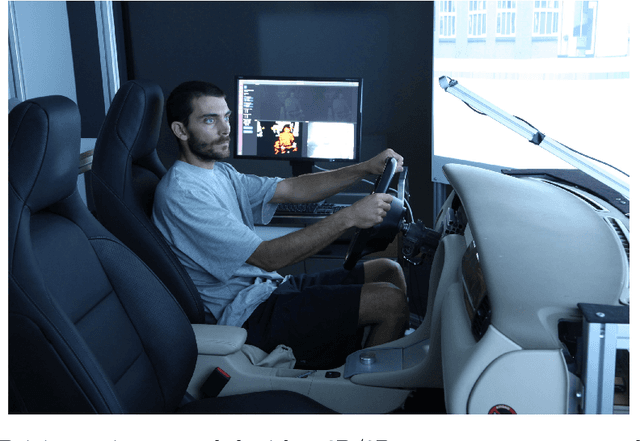

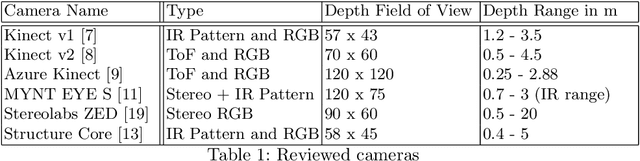

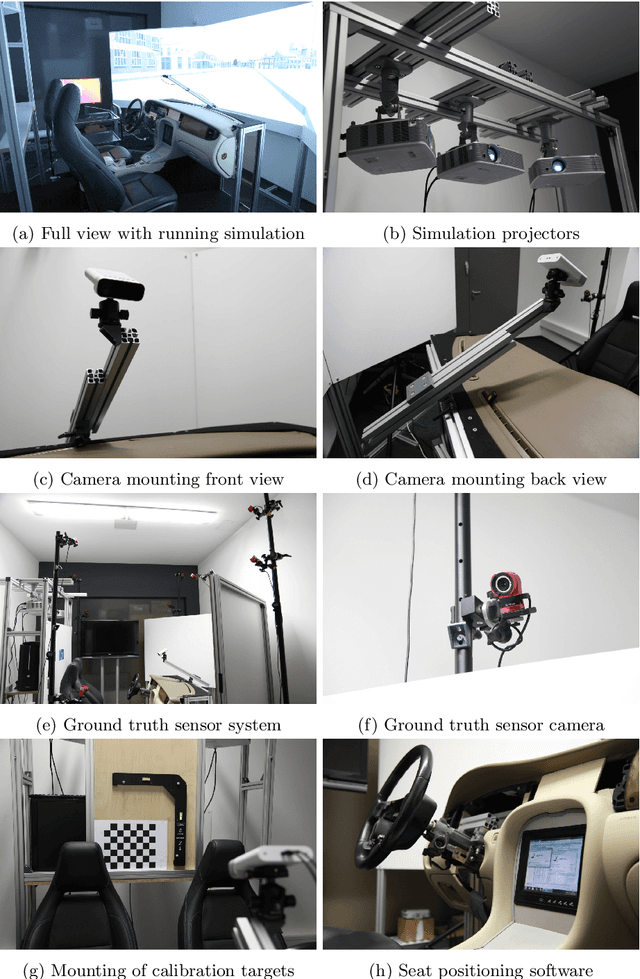

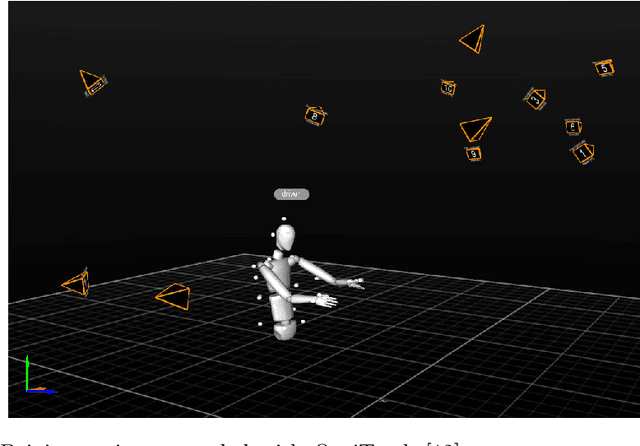

DFKI Cabin Simulator: A Test Platform for Visual In-Cabin Monitoring Functions

Feb 11, 2020

Abstract: We present a test platform for visual in-cabin scene analysis and occupant monitoring functions. The test platform is based on a driving simulator developed at the DFKI, consisting of a realistic in-cabin mock-up and a wide-angle projection system for a realistic driving experience. The platform has been equipped with a wide-angle 2D/3D camera system that monitors the entire interior of the simulator's vehicle mock-up. It is also supplemented with a ground truth reference sensor system that tracks and records the occupant's body movements synchronously with the 2D and 3D video streams of the camera. The resulting test platform will thus serve as a basis to validate numerous in-cabin monitoring functions, which are important for the realization of novel human-vehicle interfaces, advanced driver assistance systems, and automated driving. Among the considered functions are occupant presence detection, size and 3D-pose estimation, and driver intention recognition. In addition, our platform will be the basis for the creation of large-scale in-cabin benchmark datasets.
