Mizuka Komatsu

Algebraically Observable Physics-Informed Neural Network and its Application to Epidemiological Modelling

Aug 06, 2025

Abstract: A physics-informed neural network (PINN) is a deep learning framework that integrates the governing equations underlying the data into the loss function. In this study, we consider the problem of estimating state variables and parameters in epidemiological models governed by ordinary differential equations using PINNs. In practice, not all trajectory data corresponding to the populations described by the models can be measured, and training PINNs to estimate the unmeasured state variables and epidemiological parameters from partial measurements is challenging. Accordingly, we introduce the concept of algebraic observability of the state variables. Specifically, we propose augmenting the unmeasured data based on algebraic observability analysis. The validity of the proposed method is demonstrated through numerical experiments under three scenarios in the context of epidemiological modelling. Given noisy and partial measurements, the proposed method is shown to estimate the unmeasured states and the parameters more accurately than conventional methods. The proposed method is also shown to be effective in practical scenarios, such as when the data corresponding to certain variables cannot be reconstructed from the measurements.
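To make "integrating the governing equations into the loss function" concrete, here is a minimal sketch assuming a classic SIR model (states S, I, R; parameters `beta`, `gamma`; constant population `N`) in which only I is measured. The model, the helper names `sir_rhs` and `pinn_loss`, and the weight `w_phys` are illustrative assumptions, not the paper's formulation. The predicted trajectories and their time derivatives are passed in as plain arrays; in an actual PINN they would come from the network and automatic differentiation.

```python
import numpy as np


def sir_rhs(S, I, R, beta, gamma, N):
    """Right-hand side of the classic SIR model (assumed model, for illustration)."""
    infection = beta * S * I / N
    return -infection, infection - gamma * I, gamma * I


def pinn_loss(pred, dpred_dt, measured_I, beta, gamma, N, w_phys=1.0):
    """Composite PINN-style loss: data misfit on the measured state + ODE residual.

    pred, dpred_dt: arrays of shape (T, 3) with (S, I, R) and their time
    derivatives at T collocation times. In a real PINN these come from the
    network and automatic differentiation; here they are plain inputs.
    measured_I: (possibly noisy) measurements of I only, i.e. partial data.
    """
    S, I, R = pred[:, 0], pred[:, 1], pred[:, 2]
    rhs = np.stack(sir_rhs(S, I, R, beta, gamma, N), axis=1)
    data_term = np.mean((I - measured_I) ** 2)      # only I is observed
    physics_term = np.mean((dpred_dt - rhs) ** 2)   # constrains S, I and R
    return data_term + w_phys * physics_term


# Usage: if the supplied derivatives equal the ODE right-hand side, the
# physics residual is zero by construction, so only the data term remains.
N, beta, gamma = 1000.0, 0.3, 0.1
t = np.linspace(0.0, 50.0, 501)
dt = t[1] - t[0]
traj = np.zeros((t.size, 3))
traj[0] = [990.0, 10.0, 0.0]
for k in range(t.size - 1):
    traj[k + 1] = traj[k] + dt * np.array(sir_rhs(*traj[k], beta, gamma, N))
derivs = np.stack(sir_rhs(traj[:, 0], traj[:, 1], traj[:, 2], beta, gamma, N), axis=1)

print(pinn_loss(traj, derivs, traj[:, 1], beta, gamma, N))        # 0.0
print(pinn_loss(traj, derivs, traj[:, 1] + 1.0, beta, gamma, N))  # ~1.0 (data term only)
```

Note that the data term touches only I; in this sketch S and R are constrained solely through the physics residual, which is one way to see why partial measurements make training harder.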

Estimate Epidemiological Parameters given Partial Observations based on Algebraically Observable PINNs

Jul 17, 2024

Abstract: In this study, we considered the problem of estimating epidemiological parameters using physics-informed neural networks (PINNs). In practice, not all trajectory data corresponding to the populations estimated by epidemic models can be obtained, and some observed trajectories are noisy. Training PINNs to estimate unknown epidemiological parameters from such partial observations is challenging. Accordingly, we introduce the concept of algebraic observability into PINNs. The validity of the proposed PINN, named the algebraically observable PINN, is demonstrated through numerical experiments in terms of parameter estimation and prediction of unobserved variables.
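Informally, a state is algebraically observable if it can be written as an algebraic function of the measured outputs, their time derivatives, and the parameters. As a hedged illustration, assume an SIR model with constant population N in which only I is measured and `beta`, `gamma` are available (for example, as current estimates). Then S = N (dI/dt + gamma I) / (beta I) and R = N - S - I, so S and R could be reconstructed from I and used as pseudo-measurements. The sketch below does this with finite differences via `np.gradient`; the model, the helper names `simulate_sir` and `reconstruct_unmeasured`, and the Euler-simulated demo data are assumptions for illustration, not the authors' procedure.

```python
import numpy as np


def simulate_sir(S0, I0, R0, beta, gamma, N, t):
    """Forward-Euler SIR simulation on a uniform grid t (demo data only)."""
    dt = t[1] - t[0]
    X = np.empty((t.size, 3))
    X[0] = [S0, I0, R0]
    for k in range(t.size - 1):
        S, I, R = X[k]
        infection = beta * S * I / N
        X[k + 1] = X[k] + dt * np.array([-infection, infection - gamma * I, gamma * I])
    return X


def reconstruct_unmeasured(t, I, beta, gamma, N):
    """Rebuild S and R from measured I using algebraic relations of SIR.

    dI/dt = beta*S*I/N - gamma*I   =>  S = N*(dI/dt + gamma*I) / (beta*I)
    S + I + R = N (constant)       =>  R = N - S - I
    dI/dt is approximated by finite differences (np.gradient); requires I > 0.
    """
    dI_dt = np.gradient(I, t)
    S = N * (dI_dt + gamma * I) / (beta * I)
    R = N - S - I
    return S, R


# Usage: observe only I, then recover S and R as pseudo-measurements.
N, beta, gamma = 1000.0, 0.3, 0.1
t = np.linspace(0.0, 60.0, 6001)
X = simulate_sir(990.0, 10.0, 0.0, beta, gamma, N, t)
S_hat, R_hat = reconstruct_unmeasured(t, X[:, 1], beta, gamma, N)
rel_err_S = np.max(np.abs(S_hat - X[:, 0]) / X[:, 0])
print(f"max relative error in S: {rel_err_S:.2e}")
```

With noisy measurements, numerical differentiation amplifies the noise, so in practice the measured I would likely need smoothing before a reconstruction of this kind.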

Real-Time Cattle Interaction Recognition via Triple-stream Network

Sep 06, 2022

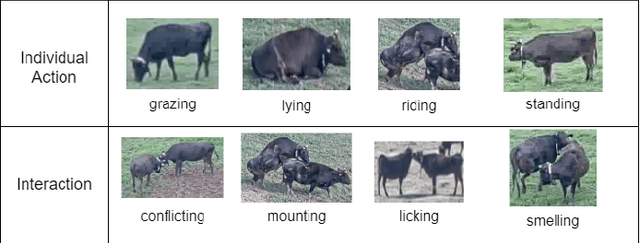

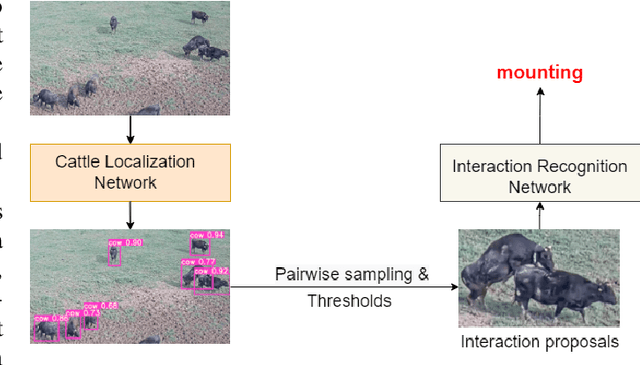

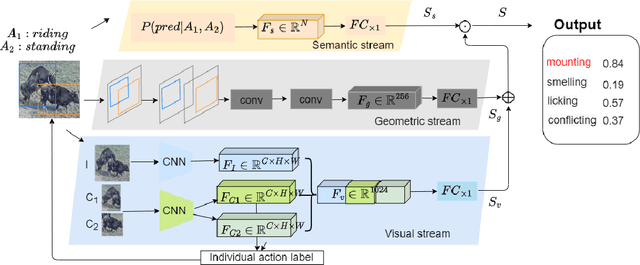

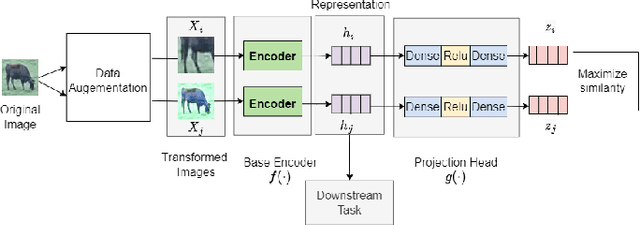

Abstract: In beef cattle stockbreeding, computer vision-based approaches have been widely employed to monitor cattle conditions (e.g., physical, physiological, and health conditions). To this end, accurate and effective recognition of cattle actions is a prerequisite. Most existing models are confined to individual behavior, using video-based methods to extract spatio-temporal features for recognizing the actions of each animal. However, cattle are social animals, and their interactions often reflect important conditions such as estrus; moreover, video-based methods neglect the real-time capability of the model. In this paper, we therefore tackle the challenging task of recognizing interactions between cattle in real time from a single frame. Our pipeline consists of two main modules: a Cattle Localization Network and an Interaction Recognition Network. At every moment, the Cattle Localization Network outputs high-quality interaction proposals from the detected cattle and feeds them into the Interaction Recognition Network, which has a triple-stream architecture. This triple-stream network allows us to fuse three kinds of features relevant to recognizing interactions: a visual feature that extracts the appearance representation of interaction proposals, a geometric feature that reflects the spatial relationship between cattle, and a semantic feature that captures our prior knowledge of the relationship between the individual actions and the interactions of cattle. In addition, to address the shortage of labeled data, we pre-train the model with self-supervised learning. Qualitative and quantitative evaluations show that our framework recognizes cattle interactions effectively in real time.
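As a rough illustration of the proposal and geometric-feature steps, the sketch below forms one interaction proposal per pair of detected cattle (the union of their bounding boxes) and computes a small vector describing their spatial relationship: the center offset normalized by the first box's size, log size ratios, and IoU. The box format `(x1, y1, x2, y2)`, the helper names, and this particular feature vector are assumptions borrowed from common interaction-detection designs; the abstract does not specify the paper's geometric feature.

```python
import math
from itertools import combinations
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), assumed format


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def union_box(a: Box, b: Box) -> Box:
    """Smallest box enclosing both animals: one interaction proposal region."""
    return (min(a[0], b[0]), min(a[1], b[1]), max(a[2], b[2]), max(a[3], b[3]))


def geometric_feature(a: Box, b: Box) -> List[float]:
    """Hypothetical spatial-relationship vector between two detected cattle."""
    wa, ha = a[2] - a[0], a[3] - a[1]
    wb, hb = b[2] - b[0], b[3] - b[1]
    dx = ((b[0] + b[2]) - (a[0] + a[2])) / (2 * wa)  # center offset / width of a
    dy = ((b[1] + b[3]) - (a[1] + a[3])) / (2 * ha)  # center offset / height of a
    return [dx, dy, math.log(wb / wa), math.log(hb / ha), iou(a, b)]


def interaction_proposals(boxes: List[Box]):
    """One proposal per unordered pair of detections: (pair, region, feature)."""
    return [
        ((i, j), union_box(boxes[i], boxes[j]), geometric_feature(boxes[i], boxes[j]))
        for i, j in combinations(range(len(boxes)), 2)
    ]


# Usage with three hypothetical detections in one frame.
detections = [(0.0, 0.0, 10.0, 10.0), (5.0, 0.0, 15.0, 10.0), (30.0, 30.0, 40.0, 40.0)]
for pair, region, feat in interaction_proposals(detections):
    print(pair, region, [round(v, 3) for v in feat])
```

For n detected cattle this enumerates n(n-1)/2 pairs; a real-time system would plausibly prune distant pairs before running the more expensive visual stream.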
