Minh Huy Pham

A Spatial Relationship Aware Dataset for Robotics

Jun 14, 2025

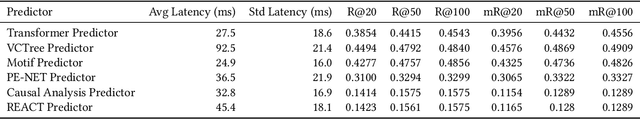

Abstract:Robotic task planning in real-world environments requires not only object recognition but also a nuanced understanding of spatial relationships between objects. We present a spatial-relationship-aware dataset of nearly 1,000 robot-acquired indoor images, annotated with object attributes, positions, and detailed spatial relationships. Captured using a Boston Dynamics Spot robot and labelled with a custom annotation tool, the dataset reflects complex scenarios with similar or identical objects and intricate spatial arrangements. We benchmark six state-of-the-art scene-graph generation models on this dataset, analysing their inference speed and relational accuracy. Our results highlight significant differences in model performance and demonstrate that integrating explicit spatial relationships into foundation models, such as ChatGPT 4o, substantially improves their ability to generate executable, spatially-aware plans for robotics. The dataset and annotation tool are publicly available at https://github.com/PengPaulWang/SpatialAwareRobotDataset, supporting further research in spatial reasoning for robotics.

Robot Shape and Location Retention in Video Generation Using Diffusion Models

Jul 03, 2024

Abstract:Diffusion models have marked a significant milestone in the enhancement of image and video generation technologies. However, generating videos that precisely retain the shape and location of moving objects such as robots remains a challenge. This paper presents diffusion models specifically tailored to generate videos that accurately maintain the shape and location of mobile robots. This development offers substantial benefits to those working on detecting dangerous interactions between humans and robots by facilitating the creation of training data for collision detection models, circumventing the need for collecting data from the real world, which often involves legal and ethical issues. Our models incorporate techniques such as embedding accessible robot pose information and applying semantic mask regulation within the ConvNext backbone network. These techniques are designed to refine intermediate outputs, therefore improving the retention performance of shape and location. Through extensive experimentation, our models have demonstrated notable improvements in maintaining the shape and location of different robots, as well as enhancing overall video generation quality, compared to the benchmark diffusion model. Codes will be opensourced at \href{https://github.com/PengPaulWang/diffusion-robots}{Github}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge