Michal Šustr

Meta-Learning in Self-Play Regret Minimization

Apr 26, 2025Abstract:Regret minimization is a general approach to online optimization which plays a crucial role in many algorithms for approximating Nash equilibria in two-player zero-sum games. The literature mainly focuses on solving individual games in isolation. However, in practice, players often encounter a distribution of similar but distinct games. For example, when trading correlated assets on the stock market, or when refining the strategy in subgames of a much larger game. Recently, offline meta-learning was used to accelerate one-sided equilibrium finding on such distributions. We build upon this, extending the framework to the more challenging self-play setting, which is the basis for most state-of-the-art equilibrium approximation algorithms for domains at scale. When selecting the strategy, our method uniquely integrates information across all decision states, promoting global communication as opposed to the traditional local regret decomposition. Empirical evaluation on normal-form games and river poker subgames shows our meta-learned algorithms considerably outperform other state-of-the-art regret minimization algorithms.

Fast Algorithms for Poker Require Modelling it as a Sequential Bayesian Game

Dec 20, 2021

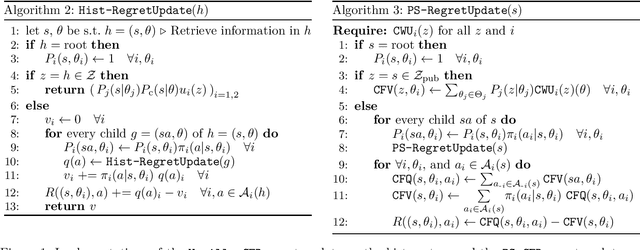

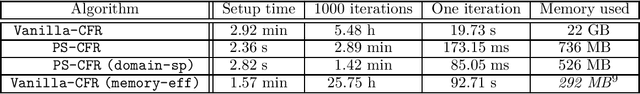

Abstract:Many recent results in imperfect information games were only formulated for, or evaluated on, poker and poker-like games such as liar's dice. We argue that sequential Bayesian games constitute a natural class of games for generalizing these results. In particular, this model allows for an elegant formulation of the counterfactual regret minimization algorithm, called public-state CFR (PS-CFR), which naturally lends itself to an efficient implementation. Empirically, solving a poker subgame with 10^7 states by public-state CFR takes 3 minutes and 700 MB while a comparable version of vanilla CFR takes 5.5 hours and 20 GB. Additionally, the public-state formulation of CFR opens up the possibility for exploiting domain-specific assumptions, leading to a quadratic reduction in asymptotic complexity (and a further empirical speedup) over vanilla CFR in poker and other domains. Overall, this suggests that the ability to represent poker as a sequential Bayesian game played a key role in the success of CFR-based methods. Finally, we extend public-state CFR to general extensive-form games, arguing that this extension enjoys some - but not all - of the benefits of the version for sequential Bayesian games.

Multi-platform Version of StarCraft: Brood War in a Docker Container: Technical Report

Jan 07, 2018

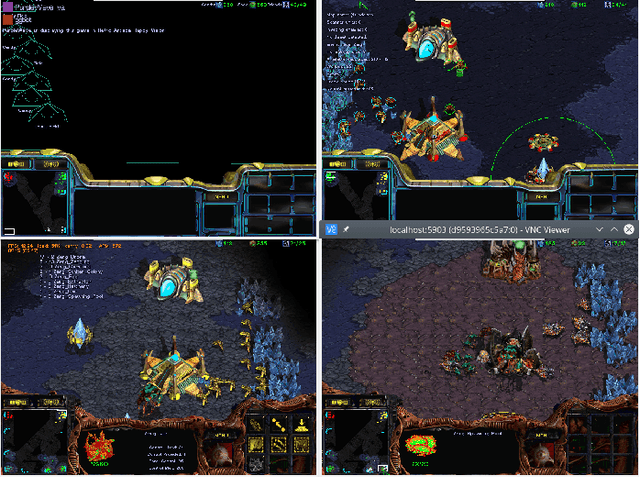

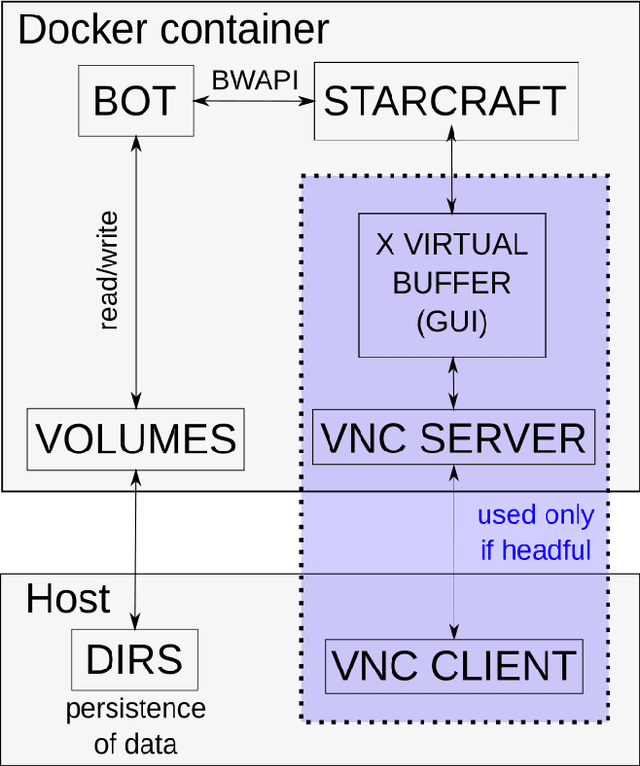

Abstract:We present a dockerized version of a real-time strategy game StarCraft: Brood War, commonly used as a domain for AI research, with a pre-installed collection of AI developement tools supporting all the major types of StarCraft bots. This provides a convenient way to deploy StarCraft AIs on numerous hosts at once and across multiple platforms despite limited OS support of StarCraft. In this technical report, we describe the design of our Docker images and present a few use cases.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge